The MACH Alliance now counts 111 member companies building composable commerce infrastructure. Gartner projects that by 2026, 70% of organizations will be mandated to acquire composable DXP technology. For brands and engineering teams evaluating AI chat in a headless commerce stack, the question isn't whether MACH matters but how each pillar shapes what your AI layer can do. This post breaks down all four letters and shows where an AI chatbot fits in a composable stack. (For the broader picture, see our guide to adding AI chat to a headless ecommerce stack.)

What MACH Means for Ecommerce and Why It Matters Now

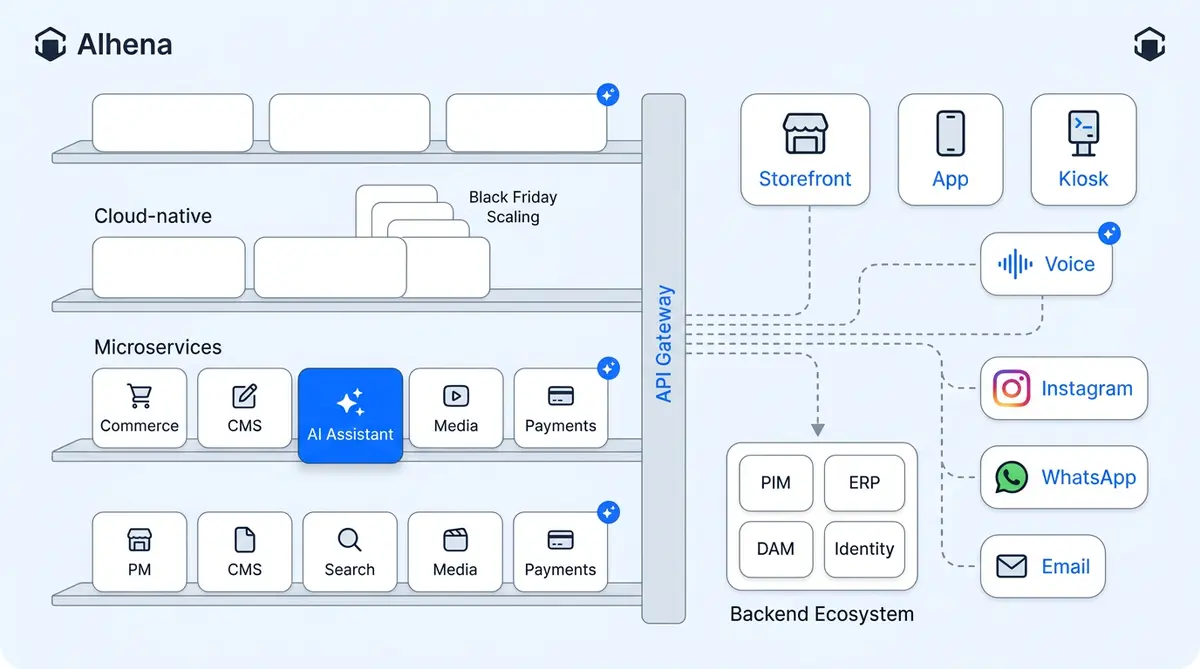

MACH stands for Microservices, API-First, Cloud-Native, and Headless. Each principle removes a specific architectural constraint that monolithic platforms impose. Together, they give you a modular, best-of-breed stack in which every component, from commerce engine to AI chatbot, can be deployed, scaled, and replaced independently. That lets teams in any vertical pick the tools that fit and deliver personalized experiences without waiting on a single platform's release cycle.

The numbers back it up: 83% of brands that adopt MACH report positive ROI, and 79% plan to increase investment over the next 12 months (MACH Alliance global research). The Alliance's 2025 launch of an AI Agent Ecosystem and MCP Registry signals that AI is now central to the composable roadmap, so enterprise teams that adopt MACH early are better positioned to future-proof their stack.

M is for Microservices: AI Chat as an Independent, Swappable Service

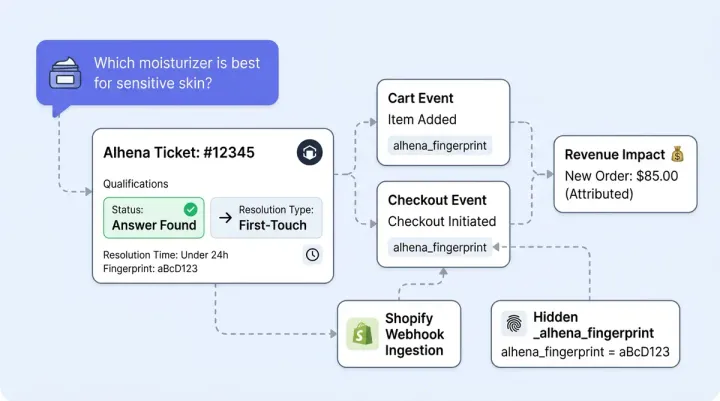

In a microservices architecture, AI chat runs as its own service with its own data store, deployment pipeline, and scaling rules. It doesn't share a database with your cart service or a release cycle with your catalog. If you need to swap LLM providers or replace your chatbot vendor entirely, only that one service changes. Your commerce engine, CMS, payment components, and other systems stay untouched.

Alhena AI operates this way: a standalone SaaS microservice that communicates with your stack through defined APIs. You don't fork your codebase to add it, and you don't rebuild anything to remove it. That's the point of microservices: a flexible setup you can optimize over time. Teams weighing the build vs. buy decision should treat this swappability as a core requirement.
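To make the swappability point concrete, here is a minimal sketch, assuming a hypothetical `LLMProvider` interface and two stand-in providers. None of these names come from a real SDK or from Alhena's product; they only illustrate how a chat service that depends on an interface can change vendors without touching anything else.

```python
from dataclasses import dataclass
from typing import Protocol


class LLMProvider(Protocol):
    """Hypothetical interface: anything that turns a prompt into a reply."""

    def complete(self, prompt: str) -> str: ...


class ProviderA:
    """Stand-in for one LLM vendor (illustrative only)."""

    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class ProviderB:
    """Stand-in for a second LLM vendor (illustrative only)."""

    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


@dataclass
class ChatService:
    """The chat microservice depends on the interface, not on a vendor."""

    provider: LLMProvider

    def reply(self, message: str) -> str:
        return self.provider.complete(message)


service = ChatService(provider=ProviderA())
before = service.reply("Where is my order?")

# Swapping vendors is a change inside this one service only.
service.provider = ProviderB()
after = service.reply("Where is my order?")
```

The cart, catalog, and payment services never import either provider, so they are unaffected by the swap.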

A is for API-First: One Gateway, Every System

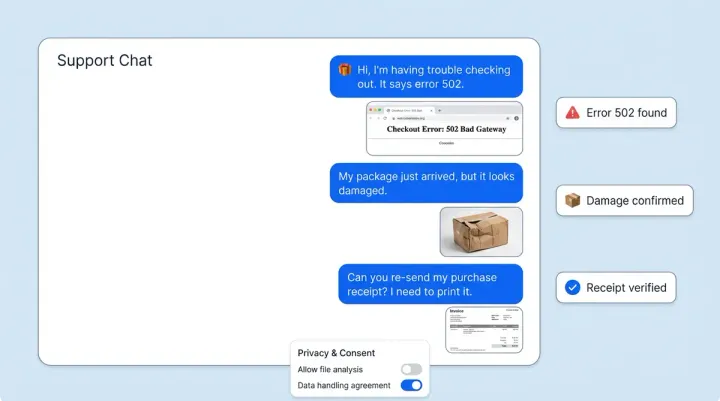

API-first means every capability is exposed through APIs before any UI gets built. For an AI chatbot, this is the connective tissue. Catalog queries, cart operations, order lookups, inventory checks, and CRM profiles all flow through REST or GraphQL endpoints.

Postman's 2025 State of the API Report found that 1 in 4 developers now design APIs specifically for AI agents. GraphQL is emerging as the preferred abstraction layer because it lets an AI agent query multiple backends through a single endpoint, without managing dozens of REST calls. Alhena connects to commerce platforms (Shopify, WooCommerce, Salesforce Commerce Cloud), helpdesks (Zendesk, Gorgias, Freshdesk), and fulfillment tools through this API-first pattern, pulling real-time data into every conversation to improve personalization and the overall shopping experience.
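As a rough illustration of the single-endpoint idea, the sketch below builds one GraphQL request body that asks for product and order data together. The schema (`product`, `order`, and their fields) is an assumption made up for this example and does not match any particular platform; only the `query` plus `variables` JSON shape is the usual GraphQL-over-HTTP convention.

```python
import json

# Hypothetical schema: these field names are illustrative and do not
# correspond to any specific commerce platform's API.
QUERY = """
query ChatContext($sku: ID!, $orderId: ID!) {
  product(sku: $sku) { name price inStock }
  order(id: $orderId) { status estimatedDelivery }
}
"""


def build_graphql_request(sku: str, order_id: str) -> str:
    """Return the JSON body for a single GraphQL POST request."""
    return json.dumps(
        {"query": QUERY, "variables": {"sku": sku, "orderId": order_id}}
    )


body = build_graphql_request("SKU-123", "ORD-456")
payload = json.loads(body)
```

With REST, the same context would typically take one call to the catalog service and another to the order service; here the agent sends one body to one endpoint.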

C is for Cloud-Native: Elastic AI for Black Friday and Beyond

Black Friday 2025 hit $11.8 billion in U.S. online sales, with traffic spiking in minutes-long bursts. An AI chatbot that can't scale elastically becomes a liability at exactly the moment it matters most.

Cloud-native architecture solves this with containerized Kubernetes deployments, auto-scaling policies, and serverless inference that spins up GPU capacity on demand. Auto-scaling can yield 70-90% savings compared to always-on infrastructure, because you pay for peak capacity only while the peak lasts. Alhena runs as a scalable, cloud-native SaaS service, so your team doesn't manage inference infrastructure or plan capacity for peak season. See our BFCM AI playbook for peak-season prep details.
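For a feel of how an auto-scaling policy reacts to a spike, here is a simplified sketch of the core rule documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). The traffic figures and the replica bounds are assumptions for illustration, and the real controller also applies a tolerance band and stabilization windows that are omitted here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 50,
) -> int:
    """Simplified HPA-style rule, clamped to configured bounds."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Assumed numbers: each replica targets 60 requests/second.
# A burst pushes 4 replicas to 180 requests/second each.
spike = desired_replicas(current_replicas=4, current_metric=180, target_metric=60)

# After the burst, 12 replicas see only 30 requests/second each.
cooldown = desired_replicas(current_replicas=12, current_metric=30, target_metric=60)
```

In this example the service grows from 4 to 12 replicas during the burst and shrinks to 6 afterward, which is where the savings over always-on peak capacity come from.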

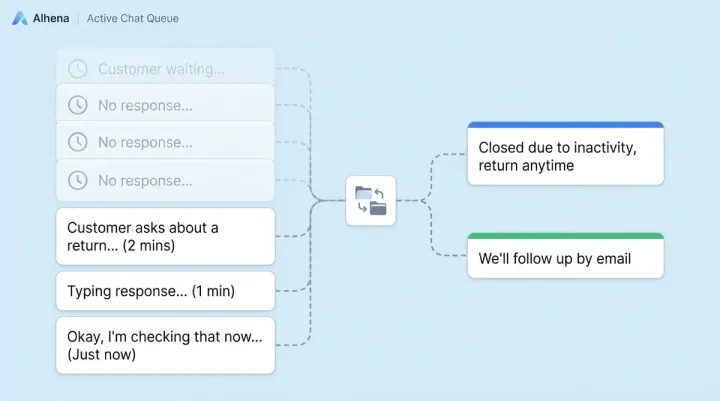

H is for Headless: Conversational AI on Any Frontend

In a headless commerce setup, the frontend is decoupled from the backend, and for AI chat, this means one backend serves every touchpoint. The same AI shopping assistant that runs on your Next.js storefront also powers your native mobile app, in-store kiosk, or voice assistant. This approach ensures product data, pricing, and customer context stay consistent because the backend is centralized, creating seamless customer experiences across every touchpoint.

Alhena supports this natively across social channels (Instagram DMs, WhatsApp), web chat, email, and voice. You render a seamless experience wherever your customers are without building separate AI integrations for each channel. That's the agentic storefront model in practice.
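The "one backend, many frontends" idea can be sketched as a shared answer function plus thin per-channel renderers. Everything here is hypothetical (the `answer` function, the channel names, and the reply fields); it shows the pattern, not how Alhena implements it.

```python
from typing import Callable, Dict


def answer(question: str) -> dict:
    """Hypothetical shared backend: returns channel-neutral structured data."""
    return {"text": "Your order ships tomorrow.", "order_id": "ORD-456"}


def render_web(reply: dict) -> str:
    """Web chat widget gets HTML."""
    return f"<p>{reply['text']}</p>"


def render_whatsapp(reply: dict) -> str:
    """Messaging channels get plain text with the order reference."""
    return f"{reply['text']} (Order {reply['order_id']})"


def render_voice(reply: dict) -> str:
    """Voice gets only the sentence to speak."""
    return reply["text"]


RENDERERS: Dict[str, Callable[[dict], str]] = {
    "web": render_web,
    "whatsapp": render_whatsapp,
    "voice": render_voice,
}


def respond(channel: str, question: str) -> str:
    """Every channel calls the same backend, then formats for itself."""
    return RENDERERS[channel](answer(question))


web_reply = respond("web", "Where is my order?")
whatsapp_reply = respond("whatsapp", "Where is my order?")
```

Adding a new touchpoint means adding one renderer; the product data and customer context behind `answer` stay in a single place.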

Where AI Chat Sits in the Full MACH Stack

A typical enterprise MACH technology stack includes Commercetools or Elastic Path for commerce, Contentstack or Amplience for CMS, Algolia for search, Cloudinary for media, and Stripe or Adyen for payments. AI chat sits alongside these as its own composable block, connected through the same API gateway. Marketing tools, AI-driven analytics, and optimization software connect through that same gateway.

The MACH Alliance's MCP Registry, launched in 2025, formalizes this: it provides a directory of production-ready Model Context Protocol implementations so AI agents can discover and interact with other services in the stack. This is where composable commerce meets agentic commerce, and your AI shopping assistant becomes a first-class citizen in the stack.
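For context on what "interacting through MCP" looks like on the wire: Model Context Protocol messages are JSON-RPC 2.0, and an agent invokes a tool with the `tools/call` method. The sketch below only builds such a request. The tool name `search_products` and its arguments are assumptions for illustration, and the registry's own lookup API is not shown because it isn't described here.

```python
import json
from itertools import count

# Simple incrementing request IDs, as JSON-RPC requires an id per request.
_ids = count(1)


def mcp_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for MCP's tools/call method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool exposed by a search service in the stack.
request = mcp_tool_call("search_products", {"query": "running shoes", "limit": 3})
wire = json.dumps(request)
```

An agent would normally call `tools/list` first to learn which tools a server exposes, then send requests like this one.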

When MACH Matters for AI Chat, and When Monolithic Is Fine

MACH isn't always the right call. Here's a simple decision framework:

MACH makes sense when you sell across multiple regions, need to iterate on your AI layer independently, handle seasonal traffic spikes, or run 50+ developers across multiple teams. It lets you swap and scale each software component for a better user experience and personalization without a full replatform.

Monolithic is fine when you run a single-region store with straightforward needs, have a small team (under 15), and don't expect dramatic traffic variance. Shopify gives you a solid foundation, and you can still add an API-first AI layer like Alhena's Shopify integration without rebuilding.

The inflection point: when the cumulative cost of workarounds on your monolith exceeds the investment to decouple, it's time to go composable. As customer needs evolve and emerging channels grow, MACH gives you the flexibility to keep up.
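The framework above can be sketched as two small helpers: one checks the MACH signals, the other estimates when cumulative workaround cost overtakes the decoupling investment. The function names and the dollar figures are assumptions for illustration; only the criteria (multiple regions, independent AI iteration, seasonal spikes, 50+ developers) come from the framework itself.

```python
from typing import Optional


def mach_makes_sense(
    regions: int,
    developers: int,
    seasonal_spikes: bool,
    independent_ai_iteration: bool,
) -> bool:
    """True if any of the MACH signals from the framework applies."""
    return (
        regions > 1
        or independent_ai_iteration
        or seasonal_spikes
        or developers >= 50
    )


def months_until_inflection(
    monthly_workaround_cost: int, decoupling_investment: int
) -> Optional[int]:
    """First month in which cumulative workaround cost exceeds the investment."""
    if monthly_workaround_cost <= 0:
        return None  # no workaround cost, so the inflection point never arrives
    return decoupling_investment // monthly_workaround_cost + 1


# Assumed figures: $20k/month in workarounds vs. a $250k decoupling project.
small_store = mach_makes_sense(1, 12, False, False)
global_brand = mach_makes_sense(4, 80, True, True)
inflection_month = months_until_inflection(20_000, 250_000)
```

With these assumed numbers, workarounds cost more than the decoupling project by month 13, which is the point at which staying on the monolith stops being the cheaper option.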

Ready to add a MACH-aligned AI layer to your composable stack? Book a demo with Alhena AI or start free with 25 conversations.

Frequently Asked Questions

What is MACH architecture in ecommerce?

MACH stands for Microservices, API-First, Cloud-Native, and Headless. It's a set of design principles for building composable ecommerce stacks where each component (commerce engine, CMS, search, AI chat) can be deployed, scaled, and replaced independently. The MACH Alliance certifies vendors that meet all four MACH technology criteria, vetting performance and compliance. According to the Alliance's research, 83% of adopters report positive ROI, with many citing faster time-to-market for new customer experiences.

How does an AI chatbot work as a microservice in a MACH ecommerce stack?

An AI chatbot runs as a standalone service with its own data store, deployment pipeline, and scaling rules. It communicates with other services like the cart, catalog, and CRM through defined APIs. If you need to change LLM providers or swap chatbot vendors, only the AI microservice changes while every other component stays untouched.

Why does API-first design matter for ecommerce AI chatbots?

API-first design means the AI chatbot can pull real-time data from any system that exposes an API: product catalogs, order management, CRM, fulfillment, CMS, and b2b pricing engines. Postman's 2025 report found that 1 in 4 developers now design APIs specifically for AI agents. GraphQL is becoming the preferred layer because it lets AI query multiple backends through a single endpoint.

Can a MACH-aligned AI chatbot handle Black Friday traffic spikes?

Yes. Cloud-native AI chatbots scale elastically using containerized Kubernetes deployments and serverless inference. Auto-scaling spins up GPU capacity during peak traffic to help avoid downtime, then scales back down quickly, which can yield 70-90% cost savings compared to always-on infrastructure. Black Friday 2025 hit $11.8 billion in U.S. online sales, and AI chatbots on cloud-native platforms handled the spikes without manual capacity planning, keeping experiences fast under load.

What ecommerce platforms work with MACH architecture AI chatbots?

MACH-aligned AI chatbots like Alhena AI integrate with headless commerce engines (Commercetools, Shopify, Salesforce Commerce Cloud, WooCommerce), CMS platforms (Contentstack, Amplience), search tools (Algolia), and helpdesks (Zendesk, Gorgias, Freshdesk). Because the approach is API-first, any system with a REST or GraphQL API can connect to the AI layer.

When should an ecommerce brand choose MACH architecture over a monolithic platform?

MACH makes sense when you sell across multiple regions, need to iterate on components independently, handle seasonal traffic spikes, or have 50+ developers across multiple teams who need to ship without blocking each other. Monolithic platforms work well for single-region stores with small teams and straightforward catalog needs. The inflection point is when workaround costs on a monolith exceed the investment to decouple. Learn more in our approach to composable AI.

How does headless architecture let AI chat run on multiple frontends?

Headless decouples the frontend from the backend, so one AI chat backend can serve a Next.js storefront, native mobile app, in-store kiosk, voice assistant, or social channels like Instagram DMs and WhatsApp. Product data, pricing, and customer context stay consistent because the backend is centralized. Alhena AI supports this natively across web, email, social, and voice.