What Headless and Composable Commerce Actually Mean for AI Chat

The headless commerce market hit $1.74 billion in 2025 and is on track to reach $7.16 billion by 2032, growing at 22.4% annually, according to Coherent Market Insights. That growth isn't just a backend trend. It's reshaping how every customer-facing touchpoint connects to your store, including AI chat.

If you're running a composable commerce stack (or planning to migrate), you've likely discovered that plugging in a chatbot isn't as simple as pasting a script tag into your theme. There is no single theme. Your frontend could be Next.js, Nuxt.js, a React Native app, or all three. The commerce engine, CMS, search, and payments all talk through APIs. Your AI chat layer needs to do the same.

This guide covers the architecture behind adding an AI chatbot to a headless ecommerce stack: why traditional chatbots fail in decoupled environments, the integration patterns that work, and how Alhena AI fits into composable commerce without adding complexity.

Why Traditional Chatbots Break in Headless Commerce Stacks

Most ecommerce chatbots were built for monolithic platforms. Gorgias, for example, is deeply tied to Shopify's Liquid theme layer. Tidio relies on JavaScript widget injection that assumes a single, server-rendered storefront. These tools work well when your frontend and backend live in one codebase. The moment you decouple them, three things break.

No theme to inject into. In a headless commerce setup, the storefront is a custom front-end application. A widget that expects to hook into a Shopify theme or WordPress template has nowhere to land. You can still load it via a script tag, but it sits outside your component tree, can't share state, and feels bolted on.

No native access to commerce data. Traditional chatbots pull order status, product info, and cart data through pre-built connectors tied to specific platforms. In a composable environment, product data might come from a PIM, orders from an OMS, and inventory from a separate microservice. A widget-based chatbot can't reach any of that without custom middleware or third-party glue code.

Session management falls apart. Monolithic platforms handle sessions in one place. In a microservices architecture, the AI chat service, commerce backend, and frontend each manage state independently. Without proper token propagation through an API gateway, the chatbot loses context between page views or across channels.
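To make the session problem concrete, here is a minimal sketch of conversation context keyed by a session token. It assumes your API gateway forwards the same token to the chat service on every request; `ConversationStore`, `sessionToken`, and the channel names are hypothetical, and a real deployment would use a shared store rather than in-process memory.

```typescript
// Hypothetical sketch: conversation context keyed by a gateway-issued session token.
// All names here are illustrative assumptions, not part of any vendor API.
type Channel = "web" | "mobile" | "whatsapp";

interface Turn {
  channel: Channel;
  role: "shopper" | "assistant";
  text: string;
}

class ConversationStore {
  private readonly turnsByToken = new Map<string, Turn[]>();

  // Append a turn to whichever conversation the token identifies.
  append(sessionToken: string, turn: Turn): void {
    const turns = this.turnsByToken.get(sessionToken) ?? [];
    turns.push(turn);
    this.turnsByToken.set(sessionToken, turns);
  }

  // Return a copy of the history so callers cannot mutate stored state.
  history(sessionToken: string): Turn[] {
    return [...(this.turnsByToken.get(sessionToken) ?? [])];
  }
}

const store = new ConversationStore();
store.append("tok_123", { channel: "web", role: "shopper", text: "Is the blue one in stock?" });
store.append("tok_123", { channel: "whatsapp", role: "shopper", text: "Any update?" });
```

Because both turns carry the same token, the second message lands in the same conversation even though it arrived on a different channel.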

The result? Brands invest in a headless migration for speed and flexibility, then bolt on a chatbot that undermines both. For a deeper look at common deployment pitfalls, see our guide on 9 mistakes ecommerce brands make when implementing AI agents.

Three Integration Patterns for a Headless Commerce Chatbot

There are three ways to add an AI chatbot to a decoupled e-commerce stack. Each fits a different level of architectural maturity.

Pattern 1: Widget Injection (Quick, But Limited)

This is the traditional approach. A JavaScript snippet loads the chat UI as an overlay on top of your frontend. It works on any site, headless or not, because it doesn't interact with your application code.

The upside is speed: you can deploy in minutes. The downside: the widget can't access your frontend's state (cart contents, logged-in user, current product), can't share your design system, and often loads its own CSS framework, hurting page performance. For brands that went headless to improve Lighthouse scores (one French audio brand saw scores jump from 70 to 95 after migration), a heavy chat widget can undo those gains.

Pattern 2: SDK or Component-Based (Headless-Native)

The AI provider ships a React component, Vue component, or npm package that drops into your front-end framework. It uses hooks for state management, streams responses natively, and inherits your design tokens.

This is the pattern that fits composable commerce AI best. The chat component lives inside your component tree, shares context with the rest of the app, and communicates with the AI backend through the same API layer that powers your storefront. You get full control over styling, placement, and behavior.

Pattern 3: API-Only (Maximum Flexibility)

The AI provider exposes REST or GraphQL endpoints. Your team builds the chat UI from scratch. The AI service becomes another microservice in your MACH stack: independently deployable, API-first, cloud-native, and decoupled from the presentation layer.

This pattern appeals to engineering teams and companies with strong frontend capabilities. It's the purest expression of composable commerce architecture, but it requires the most development effort. For most brands, Pattern 2 hits the sweet spot between flexibility and speed.
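As a rough illustration of the API-only pattern, the sketch below builds the HTTP request a custom chat UI might send. The `/messages` path, the payload shape, the `{ reply: string }` response body, and every name in it are assumptions for illustration; your provider's actual API will differ.

```typescript
// Hypothetical sketch: endpoint path, payload shape, and names are illustrative
// assumptions, not any provider's documented API.
interface ChatMessage {
  conversationId: string;
  text: string;
}

interface HttpRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Injecting the transport keeps the client testable and independent of any HTTP library.
type Transport = (request: HttpRequest) => Promise<{ status: number; body: string }>;

// Pure function: turns a chat message into the HTTP request the UI would send.
function buildChatRequest(baseUrl: string, apiKey: string, message: ChatMessage): HttpRequest {
  return {
    url: `${baseUrl.replace(/\/+$/, "")}/messages`, // strip trailing slashes before joining
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${apiKey}`,
    },
    body: JSON.stringify(message),
  };
}

// Sends the message and returns the reply text from an assumed `{ reply: string }` body.
async function sendChatMessage(
  transport: Transport,
  baseUrl: string,
  apiKey: string,
  message: ChatMessage,
): Promise<string> {
  const response = await transport(buildChatRequest(baseUrl, apiKey, message));
  if (response.status !== 200) {
    throw new Error(`Chat API returned HTTP ${response.status}`);
  }
  const parsed = JSON.parse(response.body) as { reply: string };
  return parsed.reply;
}

const exampleRequest = buildChatRequest("https://chat.example.com/api/", "test-key", {
  conversationId: "c-1",
  text: "Do you ship to Canada?",
});
```

Keeping the request builder separate from the transport means the same logic can back a web UI, a mobile app, or a server-side route.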

How AI Chat Connects to Your Commerce APIs

Regardless of which frontend pattern you choose, the AI chatbot backend needs to talk to your commerce engine. Here's how that data flow works in headless commerce.

Product Catalog and Inventory

The AI pulls product data (titles, descriptions, pricing, availability, variants) from your commerce API. On Shopify Hydrogen, this means GraphQL queries against the Storefront API. On commercetools, it's their product projection API. On WooCommerce with a headless frontend, it's the REST API or WPGraphQL.

GraphQL is the clear winner here for an API-first AI chatbot. It lets the AI request exactly the fields it needs in a single query rather than multiple REST calls. Shopify reports that stores using GraphQL render 2.4x faster and that network payloads drop by roughly 48%. For real-time chat where every millisecond matters, that efficiency is significant.
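Here is a sketch of what "exactly the fields it needs" can look like against Shopify's Storefront API. The field names follow the Storefront schema, but verify them against the API version you target; the helper functions, type names, and sample product are hypothetical.

```typescript
// Field names are taken from Shopify's Storefront API schema; verify them against
// the API version you target. Helper and type names are illustrative.
const PRODUCT_FOR_CHAT_QUERY = `
  query ProductForChat($handle: String!) {
    product(handle: $handle) {
      title
      description
      variants(first: 10) {
        nodes {
          id
          title
          availableForSale
          price { amount currencyCode }
        }
      }
    }
  }
`;

interface VariantNode {
  id: string;
  title: string;
  availableForSale: boolean;
  price: { amount: string; currencyCode: string };
}

interface ProductForChat {
  title: string;
  description: string;
  variants: { nodes: VariantNode[] };
}

// Build the JSON body for a GraphQL POST request.
function productQueryPayload(handle: string): { query: string; variables: { handle: string } } {
  return { query: PRODUCT_FOR_CHAT_QUERY, variables: { handle } };
}

// Reduce the response to facts the assistant may state: in-stock variants with prices.
function inStockSummary(product: ProductForChat): string[] {
  return product.variants.nodes
    .filter((variant) => variant.availableForSale)
    .map((variant) => `${product.title} (${variant.title}): ${variant.price.amount} ${variant.price.currencyCode}`);
}

// Made-up sample data shaped like the query result.
const sampleProduct: ProductForChat = {
  title: "Studio Speaker",
  description: "Compact bookshelf speaker.",
  variants: {
    nodes: [
      { id: "gid://shopify/ProductVariant/1", title: "Black", availableForSale: true, price: { amount: "199.00", currencyCode: "USD" } },
      { id: "gid://shopify/ProductVariant/2", title: "White", availableForSale: false, price: { amount: "199.00", currencyCode: "USD" } },
    ],
  },
};
```

Filtering on `availableForSale` before the data reaches the model is one simple way to keep the assistant from recommending out-of-stock variants.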

Cart and Checkout

This is where an AI shopping assistant earns its keep. In a headless commerce setup, the chatbot can programmatically create carts and add products through API mutations. On Shopify, that's cartCreate and cartLinesAdd via the Storefront API, which handles contextual pricing, real-time tax calculations, and discount application with no rate limits.
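The two mutations named above can be sketched as request payloads. The mutation and field names follow Shopify's Storefront API; the helper functions, the validation rule, and the sample variant ID are hypothetical, and you should confirm the schema against the API version you use.

```typescript
// Mutation names and fields follow Shopify's Storefront API; helper names and the
// validation rule are illustrative assumptions.
interface CartLineInput {
  merchandiseId: string; // a product variant global ID
  quantity: number;
}

const CART_CREATE_MUTATION = `
  mutation CartCreate($input: CartInput!) {
    cartCreate(input: $input) {
      cart { id checkoutUrl }
      userErrors { field message }
    }
  }
`;

const CART_LINES_ADD_MUTATION = `
  mutation CartLinesAdd($cartId: ID!, $lines: [CartLineInput!]!) {
    cartLinesAdd(cartId: $cartId, lines: $lines) {
      cart { id checkoutUrl }
      userErrors { field message }
    }
  }
`;

// Reject obviously bad lines before spending a network round trip.
function assertValidLines(lines: CartLineInput[]): void {
  for (const line of lines) {
    if (!Number.isInteger(line.quantity) || line.quantity < 1) {
      throw new RangeError(`Invalid quantity for ${line.merchandiseId}: ${line.quantity}`);
    }
  }
}

// Payload that creates a new cart containing the given lines.
function cartCreatePayload(lines: CartLineInput[]) {
  assertValidLines(lines);
  return { query: CART_CREATE_MUTATION, variables: { input: { lines } } };
}

// Payload that adds lines to an existing cart.
function cartLinesAddPayload(cartId: string, lines: CartLineInput[]) {
  assertValidLines(lines);
  return { query: CART_LINES_ADD_MUTATION, variables: { cartId, lines } };
}

const createPayload = cartCreatePayload([
  { merchandiseId: "gid://shopify/ProductVariant/1", quantity: 2 },
]);
```

Requesting `checkoutUrl` in the same mutation gives the chat layer a link it can hand straight to the shopper, and checking `userErrors` in the response catches problems such as an invalid variant ID.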

Alhena AI takes this further with agentic checkout: the AI populates the cart, pre-fills checkout fields, and hands the shopper a ready-to-complete checkout URL. Tatcha saw a 3x conversion rate and 38% AOV uplift with this approach, with 11.4% of total site revenue attributed to AI-assisted conversational interactions. Read the full Tatcha case study.

Order and Customer Data

Post-purchase support (order status, returns, exchanges) requires access to order management APIs. In a composable setup, this data might live in Shopify's Admin API, a dedicated OMS like Narvar, or a custom service. The AI chat layer connects to each through authenticated API calls, typically using OAuth 2.0 tokens passed through an API gateway.
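A minimal sketch of an authenticated order lookup through a gateway follows. The gateway URL, the `/orders/{id}` route, the 30-second refresh margin, and all names are assumptions; how you obtain and refresh the OAuth 2.0 token depends on your identity provider.

```typescript
// Hypothetical sketch: the route, refresh margin, and names are illustrative assumptions.
interface AccessToken {
  value: string;
  expiresAtMs: number; // expiry as epoch milliseconds
}

interface GatewayRequest {
  url: string;
  method: "GET";
  headers: Record<string, string>;
}

// Treat a token as stale slightly before it expires to allow for clock skew.
function needsRefresh(token: AccessToken, nowMs: number, skewMs: number = 30000): boolean {
  return nowMs >= token.expiresAtMs - skewMs;
}

// Build an order-status request, refusing to use a stale token.
function orderStatusRequest(
  gatewayUrl: string,
  token: AccessToken,
  orderId: string,
  nowMs: number,
): GatewayRequest {
  if (needsRefresh(token, nowMs)) {
    throw new Error("Access token is expired or about to expire; refresh it first");
  }
  return {
    url: `${gatewayUrl}/orders/${encodeURIComponent(orderId)}`,
    method: "GET",
    headers: {
      Authorization: `Bearer ${token.value}`,
      Accept: "application/json",
    },
  };
}

const sampleToken: AccessToken = { value: "abc123", expiresAtMs: 1000000 };
const orderRequest = orderStatusRequest("https://gateway.example.com", sampleToken, "#1001", 900000);
```

Encoding the order ID matters in practice because order numbers often contain characters such as `#` that would otherwise be read as a URL fragment.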

Alhena's Support Concierge handles this natively, connecting to order management systems and shipping providers like Narvar and ShipStation to resolve inquiries without human intervention. Puffy, a DTC mattress brand, hit 63% automated inquiry resolution while maintaining 90% CSAT.

MACH Architecture and Why Your AI Chatbot Should Be API-First

The MACH Alliance (now 111 members, including commercetools and Contentful) defines the principles that composable commerce stacks follow: Microservices, API-first, Cloud-native, Headless. Gartner forecasts that 60% of organizations, including mid-sized and large retailers, will rely on composable commerce architectures by 2027.

An API-first AI chatbot aligns with every MACH principle:

- Microservices: The AI chat runs as an independent service. It scales separately from your storefront, CMS, or payment gateway. If chat traffic spikes during a flash sale, only the chat service scales up.

- API-first: All communication happens through documented APIs. The chat service consumes commerce APIs for product and order data, and exposes its own APIs for the frontend to render conversations.

- Cloud-native: The AI backend runs in the cloud with auto-scaling, global CDN distribution, and no infrastructure for your team to manage.

- Headless: The chat UI is completely decoupled from the AI logic. You can render it as a web widget, a mobile component, a WhatsApp integration, or a voice interface without changing the backend.
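The headless principle in the list above can be sketched as one channel-neutral reply rendered several ways. The `AssistantReply` shape, the channel names, and the renderers are hypothetical; the point is only that the backend's output stays the same while presentation varies.

```typescript
// Hypothetical sketch: one channel-neutral reply, several presentation adapters.
type ChannelName = "web" | "whatsapp" | "voice";

interface AssistantReply {
  text: string;
  productLinks: { title: string; url: string }[];
}

// Escape text before embedding it in HTML so replies cannot inject markup.
function escapeHtml(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

const renderers: Record<ChannelName, (reply: AssistantReply) => string> = {
  // Web: HTML paragraph followed by anchor tags.
  web: (reply) =>
    `<p>${escapeHtml(reply.text)}</p>` +
    reply.productLinks
      .map((link) => `<a href="${escapeHtml(link.url)}">${escapeHtml(link.title)}</a>`)
      .join(""),
  // WhatsApp: plain text, one link per line.
  whatsapp: (reply) =>
    [reply.text, ...reply.productLinks.map((link) => `${link.title}: ${link.url}`)].join("\n"),
  // Voice: spoken text only, since links cannot be read aloud usefully.
  voice: (reply) => reply.text,
};

const sampleReply: AssistantReply = {
  text: "Try the A&B speaker",
  productLinks: [{ title: "A&B Speaker", url: "https://example.com/p/ab" }],
};
```

Adding a new channel then means adding one renderer, with no change to the service that produced the reply.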

In late 2025, the MACH Alliance launched the MACH AI Exchange to guide enterprise AI adoption within composable stacks. The message is clear: AI isn't an add-on to modern MACH architecture. It's becoming a core building block of agentic commerce, much like search or payments. (For a pillar-by-pillar breakdown, see our deep dive on <a href="https://alhena.ai/blog/mach-architecture-ai-ecommerce/">MACH architecture for AI ecommerce</a>.)

For a technical deep dive into how AI agents are structured for ecommerce, see our AI agent architecture blueprint.

How Alhena AI Works in a Headless Commerce Environment

Alhena AI was built to work across any ecommerce platform, not just monolithic ones. Here's what that looks like in a composable environment.

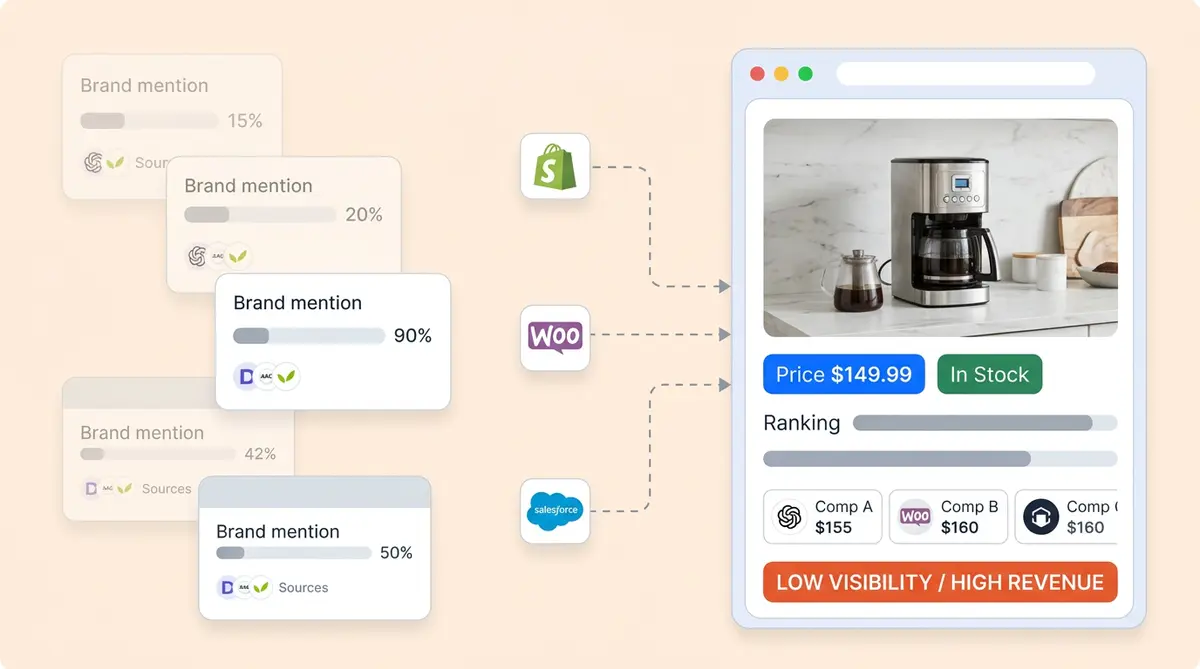

Platform-Agnostic by Design

Alhena connects to Shopify (including Hydrogen), WooCommerce, Magento, Salesforce Commerce Cloud, BigCommerce, and custom headless builds. The integration layer pulls product catalogs, order data, and customer profiles through each platform's APIs. When you switch frontends or add a new channel, the AI backend doesn't change. For brands mid-migration, our <a href="https://alhena.ai/blog/ecommerce-replatforming-ai-chatbot-migration/">ecommerce replatforming guide</a> covers exactly what carries over. See our Alhena AI for BigCommerce guide for a complete walkthrough.

Two Specialized Agents for Composable Commerce

Alhena runs two purpose-built agents rather than one general-purpose bot:

- The Product Expert Agent handles pre-purchase conversations: product discovery, comparisons, recommendations, and cart building. It's grounded in your actual product catalog, so it won't hallucinate specs or recommend items that are out of stock.

- The Order Management Agent handles post-purchase: order tracking, returns, exchanges, and day-to-day operations. It connects to your OMS and helpdesk (whether that's Zendesk, Gorgias, Freshdesk, or Intercom) to pull real-time data.

This dual-agent setup mirrors how composable stacks separate concerns. Each agent is a microservice with a defined scope, shared context, and independent scaling.
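To illustrate the separation of concerns, here is a deliberately naive router that sends each message to one of two agents. It is not how Alhena routes conversations; the keyword list and names are assumptions, and a production system would more likely use an intent classifier.

```typescript
// Hypothetical sketch: keyword routing as a stand-in for real intent classification.
type AgentName = "product-expert" | "order-management";

// Words that suggest a post-purchase question (illustrative, not exhaustive).
const POST_PURCHASE_HINTS = new Set([
  "order",
  "tracking",
  "return",
  "refund",
  "exchange",
  "delivery",
]);

// Route to the order agent if any word matches a post-purchase hint.
function routeMessage(message: string): AgentName {
  const words = message.toLowerCase().split(/[^a-z]+/);
  return words.some((word) => POST_PURCHASE_HINTS.has(word))
    ? "order-management"
    : "product-expert";
}
```

For example, `routeMessage("Where is my order?")` selects the order agent, while a question about which speaker suits a small room falls through to the product agent.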

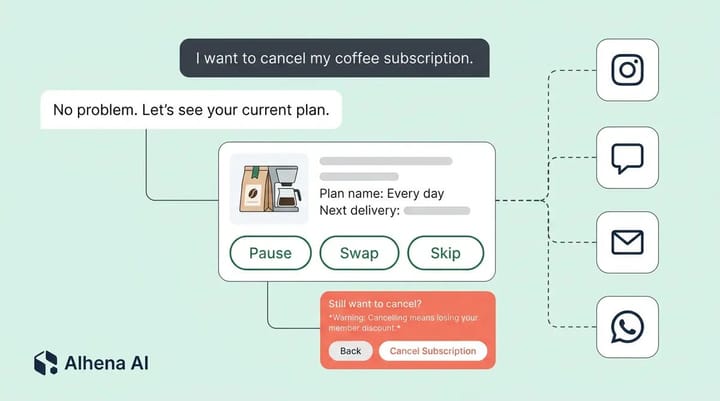

Omnichannel Without Extra Architecture

Because the AI backend is decoupled from the frontend, Alhena supports web chat, email, Instagram DMs, WhatsApp, and voice from a single deployment. In a headless commerce build where you're already serving multiple storefronts and touchpoints, this means the AI maintains context as a shopper moves from your Next.js storefront to Instagram to email. Our voice commerce guide covers how this extends to voice-first shopping.

Deployment in Under 48 Hours

Despite the architectural flexibility, setup doesn't require a months-long integration project. Alhena deploys in under 48 hours with no dev resources for the initial launch. The AI ingests your product catalog, connects to your commerce APIs, and starts handling conversations from day one. For merchants mid-migration to headless, this means you can add composable ecommerce AI before the full stack is in place.

What Alhena Does That Widget-Based Chatbots Cannot

The gap between an API-first AI shopping assistant and a widget-based chatbot isn't just architectural. It shows up in measurable outcomes.

- Revenue generation, not just deflection. Widget chatbots are built to deflect support tickets. Alhena is built to sell. The Product Expert Agent guides shoppers through discovery, answers product questions with verified data, delivers personalization at scale, and drives checkout. Victoria Beckham saw a 20% increase in AOV from AI-assisted conversations. See the full results.

- Hallucination-free responses. Alhena uses retrieval-augmented generation grounded in your actual product catalog and knowledge base. It won't invent product features, quote wrong prices, or recommend discontinued items. Learn more about this approach in our architecture deep dive on knowledge-base-powered AI.

- Built-in revenue attribution. Alhena tracks which conversations lead to purchases, the exact revenue influenced, and the AOV lift from AI-assisted sessions. Widget chatbots rarely offer this level of analytics. Try the ROI calculator to estimate your potential returns.

- Continuous learning. The AI improves over time by analyzing conversation patterns, identifying friction points, and refining its responses. Our post on Alhena's continuous learning architecture explains how this works under the hood.

Getting Started: Adding AI Chat to Your Headless Commerce Stack

Whether you're on Shopify Hydrogen, commercetools, a custom Next.js build, or somewhere in between, here's how to approach the integration.

Step 1: Map Your Commerce APIs

Identify where your product catalog, order data, inventory, and customer profiles live. In a composable stack, these may span multiple services. The AI chat layer needs read access to product and order APIs, plus write access to cart APIs if you want agentic checkout.
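One way to capture this mapping is a small, checkable inventory of services and access levels. The domains, URLs, and the `missingAccess` helper below are hypothetical; the access rules mirror the paragraph above (read for catalog and orders, write for cart only when you want agentic checkout).

```typescript
// Hypothetical sketch: an inventory of commerce services and the access the chat layer has.
type Domain = "catalog" | "orders" | "inventory" | "customers" | "cart";
type Access = "read" | "write";

interface ServiceBinding {
  domain: Domain;
  baseUrl: string;
  access: Access[];
}

// List the access grants still missing, formatted as "domain:access".
function missingAccess(bindings: ServiceBinding[], wantsAgenticCheckout: boolean): string[] {
  const required: { domain: Domain; access: Access }[] = [
    { domain: "catalog", access: "read" },
    { domain: "orders", access: "read" },
  ];
  if (wantsAgenticCheckout) {
    required.push({ domain: "cart", access: "write" });
  }
  return required
    .filter(
      (need) =>
        !bindings.some(
          (binding) =>
            binding.domain === need.domain &&
            binding.access.some((granted) => granted === need.access),
        ),
    )
    .map((need) => `${need.domain}:${need.access}`);
}

// Made-up example stack: a PIM, an OMS, and a cart service with read-only access.
const exampleBindings: ServiceBinding[] = [
  { domain: "catalog", baseUrl: "https://pim.example.com", access: ["read"] },
  { domain: "orders", baseUrl: "https://oms.example.com", access: ["read"] },
  { domain: "cart", baseUrl: "https://cart.example.com", access: ["read"] },
];
```

Running the check before integration work starts surfaces gaps early: with the example stack, agentic checkout is blocked only by the missing cart write grant.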

Step 2: Choose Your Frontend Integration Pattern

For most headless builds, the SDK or component-based approach (Pattern 2) is the fastest path to production. If your team has strong frontend engineering and wants full control, the API-only approach (Pattern 3) gives maximum flexibility. Widget injection (Pattern 1) works as a stopgap during headless commerce migration but won't give you the full benefits of composable ecommerce AI integration.

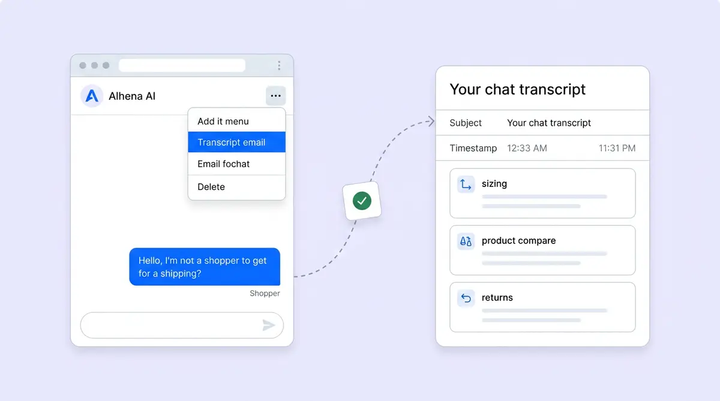

Step 3: Connect Your Support Stack

If you're using a helpdesk (Zendesk, Freshdesk, Gorgias, Intercom), connect it to the AI layer so the Order Management Agent can handle post-purchase inquiries, escalate when needed, and sync conversation history. Alhena's Agent Assist also gives your human agents AI-powered suggestions during live conversations.

Step 4: Launch and Measure

Start with AI handling common pre-purchase questions (sizing, materials, compatibility) and post-purchase inquiries (order status, return policies). Monitor deflection rates, conversion lift, and CSAT. Crocus, a UK gardening retailer, reached 86% deflection and 84% CSAT within weeks of deployment. See how they did it.
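The three metrics can be computed from simple counts. The definitions below are common ones but still assumptions (deflection as conversations resolved without a human, CSAT as the share of positive survey responses, conversion lift as AI-assisted conversion rate divided by baseline), and the sample numbers are made up for illustration.

```typescript
// Hypothetical sketch: metric definitions are common conventions, stated as assumptions.
interface LaunchStats {
  totalConversations: number;
  resolvedWithoutHuman: number;
  csatResponses: number;
  csatPositive: number;
  assistedSessions: number;
  assistedOrders: number;
  baselineSessions: number;
  baselineOrders: number;
}

// Divide safely: a zero or negative denominator means the metric is undefined.
function ratio(numerator: number, denominator: number): number {
  if (denominator <= 0) {
    throw new RangeError("denominator must be positive");
  }
  return numerator / denominator;
}

function summarize(stats: LaunchStats): { deflectionRate: number; csat: number; conversionLift: number } {
  const assistedRate = ratio(stats.assistedOrders, stats.assistedSessions);
  const baselineRate = ratio(stats.baselineOrders, stats.baselineSessions);
  return {
    deflectionRate: ratio(stats.resolvedWithoutHuman, stats.totalConversations),
    csat: ratio(stats.csatPositive, stats.csatResponses),
    conversionLift: ratio(assistedRate, baselineRate), // 1.0 means no lift
  };
}

// Made-up numbers for illustration only.
const launchSummary = summarize({
  totalConversations: 1000,
  resolvedWithoutHuman: 800,
  csatResponses: 50,
  csatPositive: 45,
  assistedSessions: 500,
  assistedOrders: 30,
  baselineSessions: 5000,
  baselineOrders: 100,
});
```

With these sample counts, deflection is 80%, CSAT is 90%, and AI-assisted sessions convert at roughly three times the baseline rate.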

For a week-by-week plan after going live, check out our 30-day AI chatbot tuning playbook.

The Bottom Line

Composable commerce gives you the freedom to pick best-of-breed components for every layer of your stack. Your AI chatbot should meet that same standard: API-first, platform-agnostic, and capable of driving revenue, not just answering questions.

Brands that treat AI chat as a composable building block (rather than a bolted-on widget) see the difference in conversion rates, average order values, and support costs. With 73% of businesses already on some form of headless architecture and AI shopping assistants proven to lift conversions by 3x or more, the question isn't whether to add AI chat. It's whether your current chatbot was built for the architecture you're running.

Ready to bring AI chat into your headless commerce stack? Book a demo with Alhena AI to see how it fits your composable architecture, or start free with 25 conversations to test it on your store today.

Frequently Asked Questions

What is a headless commerce chatbot?

A headless commerce chatbot is an AI-powered chat solution designed to work in decoupled ecommerce architectures, where the frontend and backend communicate only through APIs. Unlike widget-based chatbots built for monolithic platforms like standard Shopify themes, a headless chatbot connects to commerce APIs directly for real-time product data, cart management, and order lookups. Alhena AI works this way, connecting to Shopify Hydrogen, commercetools, WooCommerce, and other headless backends through their native APIs.

How does composable commerce AI differ from traditional ecommerce chatbots?

Traditional ecommerce chatbots rely on pre-built platform connectors and widget injection into a single storefront theme. Composable commerce AI treats the chatbot as an independent, API-first microservice that fits into a MACH architecture alongside your CMS, PIM, OMS, marketing automation, and other third-party commerce technologies. It can pull data from multiple backend services, scale independently, and serve any frontend or channel, including social channels, without code changes.

Can Alhena AI work with Shopify Hydrogen and other headless frontends?

Yes. Alhena AI connects to Shopify (including Hydrogen) through the Storefront API and Admin API, as well as WooCommerce, Magento, Salesforce Commerce Cloud, BigCommerce, and custom headless builds. The integration is backend-to-backend via APIs, so the frontend framework (Next.js, Nuxt.js, Remix, or others) doesn't affect the AI's capabilities. Deployment takes under 48 hours regardless of your stack.

Does an API-first AI chatbot slow down a headless storefront?

No. An API-first chatbot communicates asynchronously with your commerce backend and doesn't block page rendering. By using GraphQL, the AI fetches only the specific data fields it needs, reducing payload sizes by roughly 48% compared to REST calls. The chat component loads independently of your storefront content, so it shouldn't drag down your Lighthouse performance score or time-to-interactive metrics.

What MACH architecture principles should an ecommerce chatbot follow?

An ecommerce chatbot in a MACH stack should be a standalone microservice (scales independently), API-first (communicates only through documented APIs), cloud-native (auto-scaling, no self-hosted infrastructure), and headless (chat UI decoupled from AI logic). Alhena AI follows all four principles, running as an independent service that connects to any commerce backend and can deliver conversations across web, mobile, social, email, and voice channels.

How does AI chat handle cart and checkout in a headless architecture?

In a headless stack, the AI chatbot creates and modifies carts through commerce API mutations. On Shopify Hydrogen, this uses GraphQL mutations like cartCreate and cartLinesAdd via the Storefront API. Alhena AI goes further with agentic checkout, populating the cart with recommended products, applying discounts, and generating a pre-filled checkout URL. Tatcha saw a 3x conversion rate using this approach.

What are the costs of adding AI chat to a composable commerce stack?

Costs vary by integration pattern. Widget injection is cheapest but least effective in headless environments. SDK-based integration requires minimal frontend work if the AI provider offers a component library. API-only integration has the highest initial dev cost but maximum flexibility. Alhena AI offers a free tier with 25 conversations to test, and the platform deploys in under 48 hours without requiring dedicated dev resources. Check the pricing page or ROI calculator for detailed estimates.

How do I choose between widget injection, SDK, and API-only integration?

Widget injection works as a temporary solution during headless migration but misses most composable benefits. SDK or component-based integration is best for most headless builds because it balances speed with native frontend integration. API-only is ideal for teams with strong frontend capabilities who want full UI control. If you are early in your headless commerce migration, start with widget injection and upgrade to SDK-based integration once your composable stack stabilizes.