The CTR Trap: Why Clicks Don't Pay the Bills

Click-through rate became the default success metric for AI in e-commerce because it's easy to measure. Every analytics tool tracks it. Every dashboard surfaces it. And every team celebrates when it goes up.

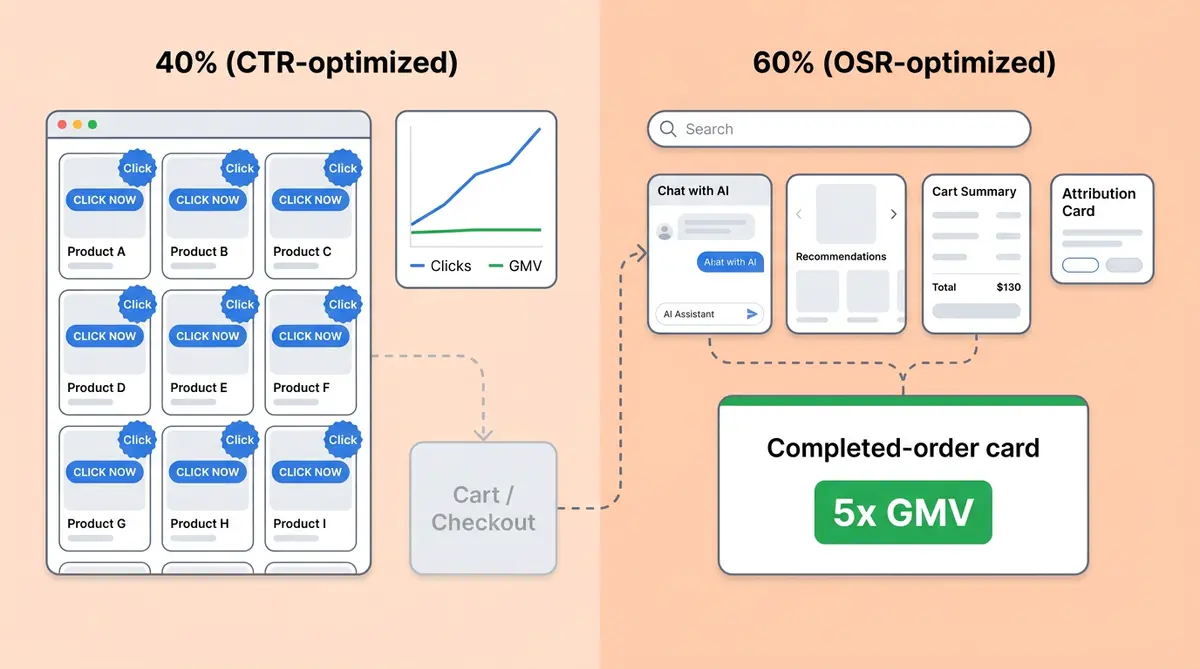

But here's the problem: a recommendation that gets clicked isn't a recommendation that gets bought. A 2025 e-commerce research study found that optimizing a recommender for Order Success Rate (OSR) instead of CTR produced a GMV uplift more than five times larger. Five times. Same store traffic, same catalog, wildly different business outcomes.

It’s a simple principle. What you optimize is what you get. And if your AI shopping experience is tuned for clicks instead of purchases, you'll get clicks, not carts.

Goodhart's Law Hits Your Product Feed

Goodhart's Law states that when a measure becomes a target, it stops being a good measure. For retailers and e-commerce teams using AI, this plays out predictably:

- CTR-optimized recommendations surface cheap, broadly appealing, or already-trending SKUs that attract clicks but don't move margin

- Engagement-optimized chatbots extend conversations and show more products without improving conversion rate or closing sales

- Session-length metrics reward friction disguised as interaction

None of these pay your supplier invoices. The metric that matters is a completed order showing real purchase intent through the checkout process, and the gap between "clicked" and "bought" is where revenue quietly disappears.

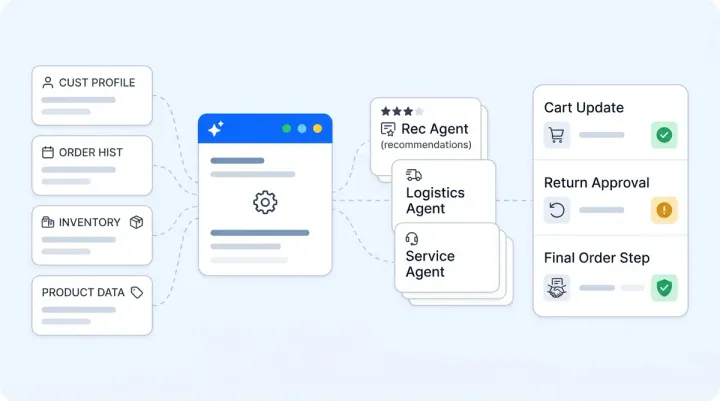

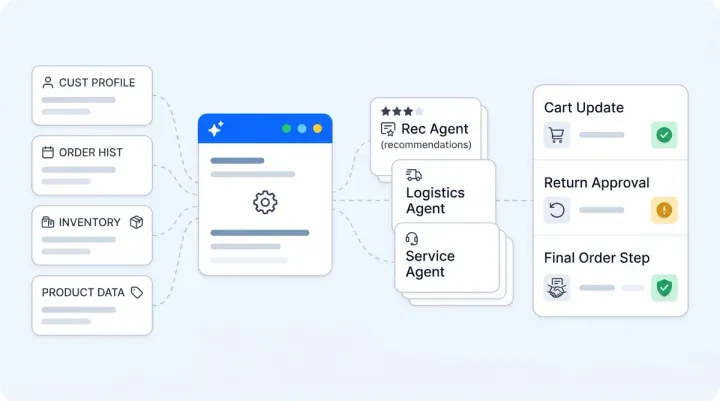

This isn't just a ranking-model problem. The same trap shows up across every AI agent and customer-facing surface that touches buyers: search results, product discovery surfaces, chat recommendations, nudges, and AI shopping assistants. If the success metric isn't tied to a purchase-stage outcome, you're training your AI to be good at the wrong thing.

A Practitioner's Checklist: Making Your AI Conversion-Objective

Translating "optimize for revenue" from a research finding into something actionable takes five shifts:

1. Measure the right thing from day one

Track Total Add-to-Cart GMV, average cart value, and attributed checkout revenue. Not impressions, not sessions, not "products shown". Alhena's Revenue Impact dashboard surfaces these numbers out of the box: GMV, cart value, and revenue attributed directly to AI interactions.

2. A/B test on revenue, not engagement

Split users into a test group that sees the AI and a control group that doesn't, then judge the checkout conversion rate delta alongside actual revenue. If your experiment framework scores on "messages exchanged" or "recommendations clicked", you're measuring the wrong thing. Alhena's experiment framework splits users by fingerprint and reports add-to-cart value, checkout revenue, and statistical significance with won/lost/inconclusive verdicts.
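To make the idea concrete, here is a minimal sketch of a revenue-scored experiment. It is not Alhena's implementation: the function names, the 50/50 hash split, and the z-test with a 1.96 threshold are all assumptions chosen for illustration. Revenue per visitor is heavy-tailed, so a production framework would likely need larger samples or a more robust test.

```python
import hashlib
import math
from statistics import mean, variance


def assign_group(fingerprint: str, experiment: str = "ai-assistant") -> str:
    """Deterministically bucket a visitor so repeat visits land in the same group.

    The experiment name and the 50/50 split are hypothetical.
    """
    digest = hashlib.sha256(f"{experiment}:{fingerprint}".encode()).hexdigest()
    return "test" if int(digest, 16) % 2 == 0 else "control"


def revenue_verdict(test_revenue, control_revenue, z_threshold=1.96):
    """Compare checkout revenue per visitor between the two groups.

    Each list holds one number per visitor (0.0 for visitors who did not buy).
    Returns (verdict, delta), where delta is the difference in mean revenue.
    """
    delta = mean(test_revenue) - mean(control_revenue)
    # Standard error of the difference in means (unequal variances allowed).
    se = math.sqrt(
        variance(test_revenue) / len(test_revenue)
        + variance(control_revenue) / len(control_revenue)
    )
    if se == 0:
        return "inconclusive", delta
    z = delta / se
    if z > z_threshold:
        return "won", delta
    if z < -z_threshold:
        return "lost", delta
    return "inconclusive", delta
```

The point of the sketch is what gets scored: the inputs are revenue per visitor, including the zeros, not clicks or messages. A verdict of "won" means the AI group earned more per visitor, which is the only kind of win that shows up in the bank account.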

3. Don't reward clicks in your tuning loop

When you boost products or adjust AI behaviour, evaluate the change against downstream cart and order events. Boost the SKUs that your cart and product data show are converting, not the ones that get the most views.
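One way to sketch this is to rank SKUs by orders per view instead of raw views. The event format, the `min_views` cutoff, and the function names below are illustrative assumptions, not a description of any particular platform's tuning loop.

```python
from collections import Counter


def conversion_scores(events, min_views=50):
    """Return orders-per-view for each SKU with enough traffic to judge.

    `events` is an iterable of (sku, event_type) pairs, where event_type is
    "view" or "order" (a hypothetical event schema). SKUs with fewer than
    `min_views` views are skipped so a handful of lucky orders can't dominate.
    """
    views, orders = Counter(), Counter()
    for sku, event_type in events:
        if event_type == "view":
            views[sku] += 1
        elif event_type == "order":
            orders[sku] += 1
    return {sku: orders[sku] / n for sku, n in views.items() if n >= min_views}


def boost_candidates(events, top_n=3, min_views=50):
    """Pick the SKUs to boost: highest conversion first, not most viewed."""
    scores = conversion_scores(events, min_views)
    return sorted(scores, key=scores.get, reverse=True)[:top_n]
```

In this sketch, a SKU with 100 views and 2 orders ranks below one with 60 views and 6 orders, even though it gets more attention. That is the inversion the tuning loop needs.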

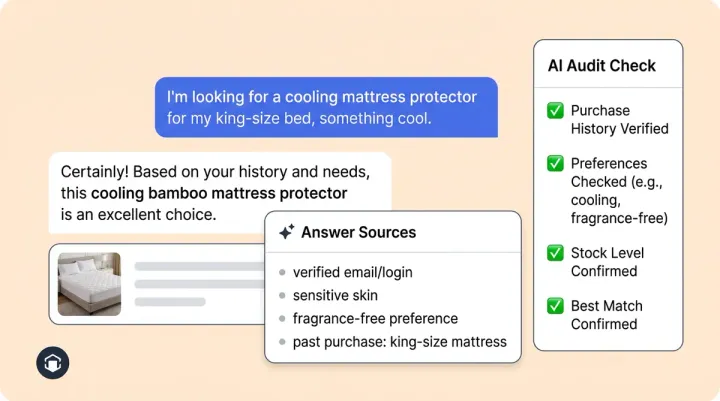

4. Attribute conservatively

A 24-hour first-touch attribution window keeps credit honest. Longer windows inflate numbers and make every AI surface look like a hero. Alhena only counts interactions where the AI provided a genuinely helpful answer (found a real product match, didn't escalate or say "I don't know") before attributing revenue.
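A rough sketch of what conservative attribution can look like in code follows. The record fields (`user_id`, `timestamp`, `helpful`, `revenue`) and the exact rule for anchoring the window to the first helpful interaction are assumptions made for illustration. Alhena's internal logic may differ.

```python
from datetime import datetime, timedelta

ATTRIBUTION_WINDOW = timedelta(hours=24)


def attributed_revenue(interactions, orders):
    """Sum revenue from orders placed within 24 hours of a user's first
    helpful AI interaction.

    interactions: dicts with "user_id", "timestamp" (datetime), "helpful" (bool)
    orders:       dicts with "user_id", "timestamp" (datetime), "revenue" (float)
    All field names are hypothetical.
    """
    first_touch = {}
    for item in sorted(interactions, key=lambda i: i["timestamp"]):
        # Unhelpful interactions (escalations, "I don't know") earn no credit.
        if item["helpful"]:
            first_touch.setdefault(item["user_id"], item["timestamp"])

    total = 0.0
    for order in orders:
        touch = first_touch.get(order["user_id"])
        if touch is None:
            continue
        elapsed = order["timestamp"] - touch
        if timedelta(0) <= elapsed <= ATTRIBUTION_WINDOW:
            total += order["revenue"]
    return total
```

Both filters only ever shrink the number. An order that lands 30 hours after the conversation, or one that follows an unhelpful answer, simply doesn't count.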

5. Watch for proxy drift

If your AI's engagement metrics climb but cart GMV stays flat, you've drifted. Set a monthly check: plot CTR or session length against actual revenue. The moment they diverge, your AI is overfitting to the wrong target.
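The monthly check can be as simple as comparing growth rates. The sketch below flags drift when an engagement metric has grown meaningfully over the period while revenue has not. The thresholds (10% engagement growth, 2% revenue growth) are placeholder assumptions to tune against your own numbers.

```python
def pct_change(series):
    """Relative change from the first to the last value of a series."""
    first, last = series[0], series[-1]
    if first == 0:
        raise ValueError("first value must be non-zero to compute a growth rate")
    return (last - first) / first


def proxy_drift(engagement, revenue,
                engagement_min_growth=0.10, revenue_min_growth=0.02):
    """Return True when engagement climbed but revenue stayed flat or fell.

    `engagement` and `revenue` are same-period series, such as monthly CTR
    and monthly cart GMV. Both thresholds are illustrative defaults.
    """
    return (
        pct_change(engagement) >= engagement_min_growth
        and pct_change(revenue) < revenue_min_growth
    )
```

For example, CTR moving from 4.0% to 5.5% while cart GMV barely moves off 100,000 would trip the flag. The same CTR climb with GMV rising to 138,000 would not.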

Why This Matters More in 2025

As AI shopping agents multiply across channels (chat, voice, social DMs, agentic checkout), the temptation to measure "AI activity" instead of "AI revenue" grows. More surfaces mean more engagement metrics to celebrate, and most of them are vanity numbers.

Brands like Tatcha that measure AI on actual conversion see 3x conversion rates and 38% AOV uplift. That doesn't come from optimizing clicks. It comes from building the entire measurement stack around purchase outcomes.

The 2026 E-commerce AI ROI Playbook makes the same argument from a different angle: ticket deflection is the wrong north star. So is CTR. The only north star that compounds is attributed revenue per AI interaction.

The One Question That Cuts Through

Audit every AI surface in your stack, including search, chat, nudges, and recommendations. Ask one question:

"What metric, if it doubled tomorrow, would make us celebrate? And is that the metric this AI is being measured, tuned, and tested on?"

If the metric you'd celebrate is "revenue" but the metric your AI is actually measured on is "clicks" or "engagement", you have an alignment problem. Fix the metric and the measurement stack first. The AI will follow.

Ready to measure your AI on revenue instead of vanity metrics? Book a demo with Alhena AI or start for free with 25 conversations to see your first attributed revenue numbers.

Frequently Asked Questions

Why is CTR a poor metric for AI shopping assistants?

CTR measures whether someone clicked a product, not whether they bought it. In e-commerce, a high CTR often misleads teams: it can coincide with a poor conversion rate because it favors popular or cheap items that attract browsing intent but don't convert. A 2025 research study found that optimizing for a purchase-stage metric like Order Success Rate produced a GMV uplift more than five times larger than optimizing for clicks.

What is Order Success Rate (OSR) in e-commerce?

OSR measures the percentage of AI interactions that lead to a completed order showing real purchase intent through the checkout process. Unlike CTR (which stops at the click) or Add-to-Cart Rate (which stops at the cart), OSR captures the full funnel from AI-powered discovery to purchase. It's the closest proxy to actual revenue impact.

How does Alhena AI measure revenue attribution?

Alhena uses a 24-hour first-touch attribution window. It only counts interactions where the AI provided a genuinely helpful answer before attributing revenue. The Revenue Impact dashboard shows Total GMV, Average Cart Value, and Add-to-Cart GMV attributed to AI conversations.

Can I A/B test my AI shopping assistant on revenue instead of engagement?

Yes. Alhena's experiment framework splits users by fingerprint into test and control groups, then reports checkout revenue delta with statistical significance. You see Won, Lost, or Inconclusive verdicts based on actual purchase conversion rate outcomes, not click or session metrics.

What is proxy drift in AI optimization?

Proxy drift happens when your AI's engagement metrics (CTR, session length, messages exchanged) climb while actual revenue stays flat or declines. It means the AI has learned to be engaging without being commercially effective. Monthly checks comparing engagement metrics against cart GMV catch this early.

How quickly can I see revenue attribution data after deploying Alhena?

Revenue attribution starts working immediately after deployment. Alhena integrates with Shopify automatically, so Shopify brands see revenue attribution data from day one. For other platforms, integration works via cart and checkout SDK events. Most e-commerce brands see their first attributed revenue data within 48 hours of going live.