Most teams building RAG systems obsess over the embedding model, the retriever architecture, or the chunk size. They're optimizing the wrong variable. The single biggest lever for retrieval relevance and quality is what you retrieve against, not how you retrieve it.

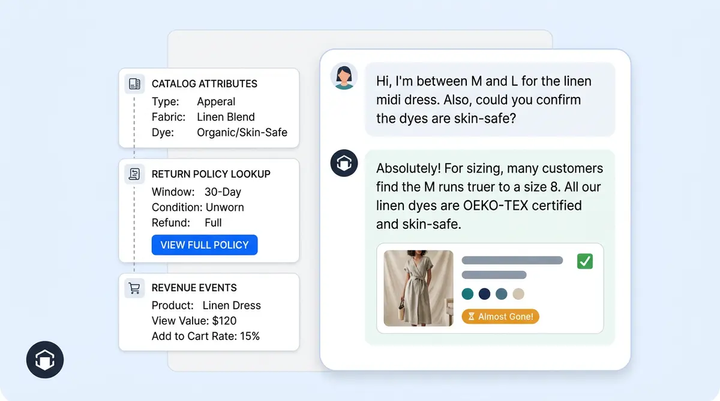

A user message in a real multi-turn exchange is almost never a good search query. Pronouns, ellipsis, implied subjects, hard constraints mixed with semantic intent. If you fire that raw text at a vector database, you get garbage back. Alhena's answer to this problem is a pre-retrieval pipeline called the Contextualizer, and it runs before a single embedding is computed.

This post walks through the four parallel layers of query rewriting that transform raw user input into retrieval-ready queries, explains the architectural choices behind each, and shows why this is the hidden driver behind Alhena's hallucination-free accuracy in AI shopping conversations.

The Core Problem: Conversational Messages Are Not Search Queries

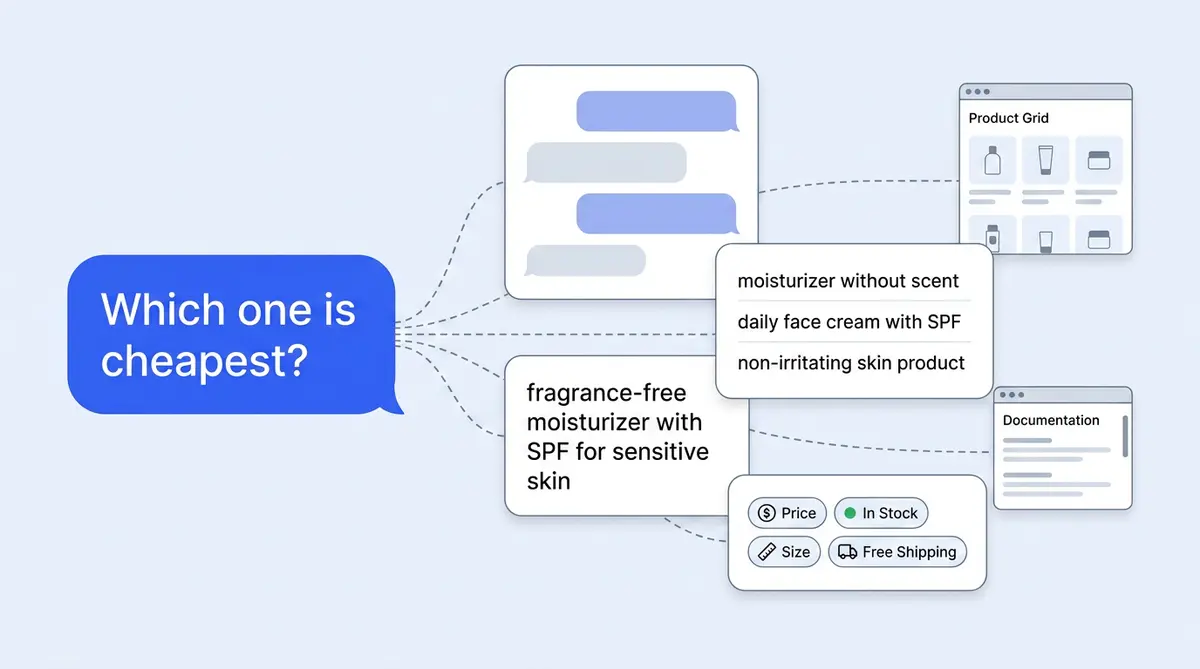

Consider a real shopping session:

- Turn 1: "Do you have office chairs?"

- Turn 2: "What about in leather?"

- Turn 3: "Which one is cheapest?"

If you embed Turn 3 ("Which one is cheapest?") and run a vector search beyond keyword matching, you'll retrieve generic "cheapest product" documents. The question has no topical anchor. All the meaning lives in the conversation history, not in the sentence itself.

This isn't an edge case. In production ecommerce user query patterns, over 60% of follow-up messages contain unresolved coreferences or implicit context that depend entirely on prior turns. Standard retrieval-augmented generation (RAG) pipelines treat each message as independent, which is why they break down after turn one.

Alhena solves this by treating the raw user query as intent to be reconstructed, not a query to be executed. Before any embedding, filter, or vector search happens, the query passes through a Contextualizer pipeline that rewrites it into one or more retrieval-ready queries.

The Pipeline Shape: Four Parallel Contextualizers

The Contextualizer Orchestrator runs multiple specialized rewriters in parallel. Each one transforms the raw user query for a different purpose:

- ChatHistoryContextualizer collapses multi-turn into a standalone query

- ProductSearchContextualizer rewrites using catalog taxonomy and accumulated preferences

- QueryExpansionContextualizer generates paraphrases for recall diversity

- ProductsFilterContextualizer extracts hard constraints into structured filters

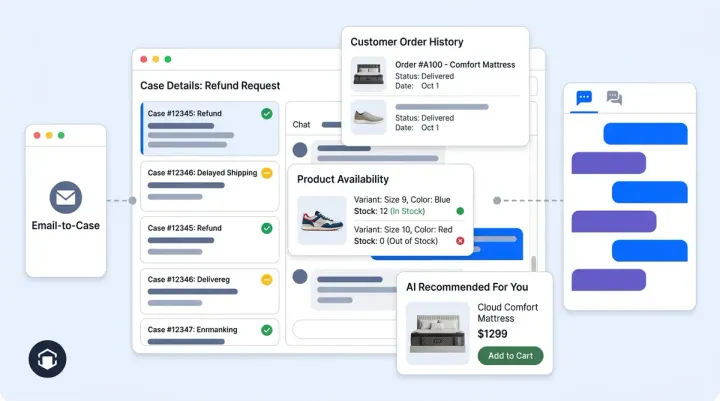

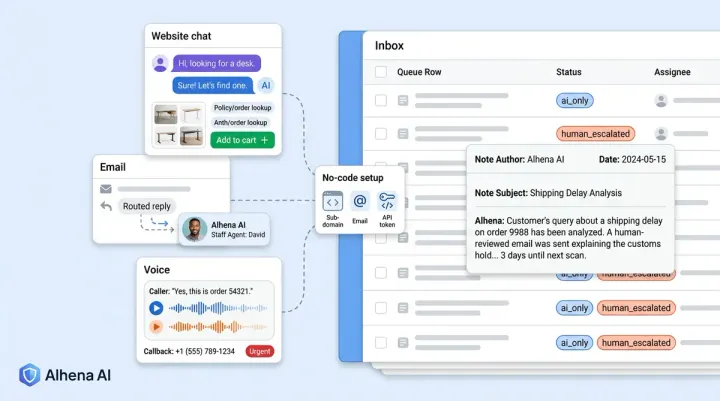

The orchestrator selects which contextualizers to activate based on the bot's configuration and the current context. Chat history exists? Add the ChatHistoryContextualizer. Conversational product search enabled? Add ProductSearchContextualizer. Image attached? Add ImageContextualizer. Each contextualizer issues its own LLM call, runs independently, and emits a transformed query.

Retrieval then executes against the union of these rewritten queries. Not the raw input. This framework is fundamentally different from single-query RAG, where one embedding represents one shot at getting the right documents.

Layer 1: Chat History Contextualizer (Coreference Resolution)

This is the foundational rewriter. Its job: collapse a multi-turn conversation into a single self-contained question.

Input: The latest user message plus the full chat history as a formatted string.

Processing: A fast LLM (GPT-4.1-nano by default) rewrites the latest message into a standalone question that encodes all relevant context from prior turns. The prompt instructs the model to resolve pronouns, carry forward implied subjects, and preserve intent.

Output: A single rewritten string.

Using the office chair example:

- Turn 3 raw: "Which one is cheapest?"

- Turn 3 rewritten: "Which leather office chair is cheapest?"

Now the embedding has something to match against. Pronouns resolved, product category anchored, modifier from Turn 2 preserved.

Why a nano-tier model? Latency. This step sits on the critical path of every response. Alhena tracks it explicitly (question_contextualization_seconds in the request's time-tracking attributes) because even 50ms matters at scale. Coreference resolution doesn't need GPT-4 level reasoning. A small, fast model handles it well, and the latency stays under 100ms p95.

This layer alone, applied to our multi-agent architecture, transforms retrieval accuracy across any multi-turn conversation. Every downstream retriever and agent benefits because the query hitting the index is the reconstructed intent, not raw keystrokes.

Layer 2: Product Search Contextualizer (Domain-Aware Rewriting)

A standalone question works for FAQ or general knowledge retrieval. For product catalogs, you need structure. Products have categories, subcategories, and attributes, and retrieval improves dramatically when the query mirrors the catalog's shape.

This contextualizer takes five inputs:

- The user query

- Full chat history

- Accumulated user needs (preferences/constraints learned from earlier turns, like "under $200", "for a home office", "must have lumbar support")

- The bot's product taxonomy (the full category/subcategory tree)

- Previously displayed product chunks (what the user has already seen in the current view)

It emits a structured query like:

Category: Office Equipment | Subcategory: Office Chairs | Product Description: comfortable leather office chair | Product Attributes: material - leather

Two behaviors make this powerful:

Topic Change Detection

If the user pivots (say, from office chairs to laptops), the contextualizer detects the shift and resets carried context. Preferences like "leather" and "lumbar support" don't leak into the laptop search. This is one of the most common RAG failure modes documented in 2024 research in naive implementations. Stale context from a previous topic pollutes retrieval for the new one. Alhena handles it explicitly at the prompt level with a topic-change detection mechanism.

Context Continuity

When the user stays on topic ("show me something cheaper", "what about in black"), the contextualizer preserves prior attributes and layers the new constraint on top. For "similar product" requests ("show me something like this one"), it uses the previously displayed product chunks as seeds to construct a refined query.

The model here is Gemini 2.5 Flash. Structured output adherence is the bottleneck for this layer, and Gemini's JSON/structured-text generation is strong. The result feeds directly into Alhena's agentic chunking index, where product documents are already structured to match this query shape.

Layer 3: Query Expansion Contextualizer (Paraphrase Diversity)

Vector search is lexically sensitive even with dense embeddings. The specific wording of a query still shifts the neighborhood you land in. Two semantically identical questions can retrieve different document sets simply because one uses "laptop" and the other uses "notebook computer."

To broaden recall without sacrificing precision, this contextualizer generates up to three paraphrases of the query.

Input: The already-contextualized query plus chat history.

Output: A list of semantically equivalent rewordings.

Example:

- Original: "What's the best laptop for programming?"

- Paraphrase 1: "Which laptop is recommended for software development?"

- Paraphrase 2: "What is a good developer laptop?"

- Paraphrase 3: "Top coding laptops?"

Each paraphrase becomes its own retrieval query. The returned chunks are merged, deduplicated, and re-ranked by relevance downstream. Think of it as a cheaper, more controllable cousin of HyDE (Hypothetical Document Embeddings). Instead of hallucinating a full hypothetical answer and embedding that, we generate multiple valid surface forms of the question and retrieve against all of them.

The cost-to-benefit ratio is excellent. Three extra embedding calls per query is trivial compared to the recall improvement from covering lexical variations across the user didn't happen to type.

Layer 4: Filter Extraction (Structured Constraints)

The ProductsFilterContextualizer runs in parallel and does something orthogonal to the other layers: it extracts hard constraints from the conversation and emits a structured qdrant_filter object.

Things like "under $50", "in stock", "size medium", "ships to Canada" become metadata filters that the vector database applies alongside hybrid semantic search. Not instead of it.

Why does this matter? Because you don't want "under $50" to be an embedding signal. Price is a hard constraint. It belongs in the filter layer, not the semantic layer. If you embed "comfortable leather chair under $50" as a single vector, the "$50" part warps the embedding space. You might miss the perfect $45 chair because its product description doesn't contain a price string that's close to "$50" in embedding space.

The contextualizer pipeline separates these two concerns cleanly. Semantic meaning flows through the embedding. Hard constraints flow through structured filters. The vector database runs hybrid search simultaneously, giving you documents that are both semantically relevant and satisfying the user's constraints.

This separation is a key reason Alhena's AI Shopping Assistant can handle complex multi-constraint queries ("vegan leather bag under $200 that fits a 15-inch laptop") without hallucinating products that don't actually meet the criteria.

Orchestration: Parallelism and Graceful Degradation

The Contextualizer Orchestrator ties everything together with three design principles and strategy:

Configuration-Driven Selection

Each bot profile can enable or disable specific contextualizer layers. A simple FAQ bot might only need ChatHistoryContextualizer. A full product search experience gets all four layers plus ImageContextualizer. The pipeline adapts to the use case without code changes or new tools.

Parallel Execution

All active contextualizers fan out simultaneously. Each issues its own LLM call to whatever model is optimal for its task (nano for coreference, Flash for structured output, etc.). Total added latency is bounded by the slowest contextualizer, not the sum of all of them. In practice, this means 100 to 300ms of added pre-retrieval time.

Graceful Failure

If any single contextualizer errors or times out, retrieval falls back to the original query for that branch. The pipeline never hard-fails on a contextualization error. You lose some recall quality on that specific dimension, but the request still completes. This is critical for production system reliability, where you can't let an LLM timeout cascade into a user-facing error.

After all contextualizers emit their results, the merged set of queries passes to the ContextRetrieverOrchestrator. It builds dense and sparse embeddings for hybrid search for each rewritten query and fires parallel retrievers against the appropriate indexes (knowledge base, products, FAQs). The result: a single user message spawns multiple high-quality retrieval passes, each optimized for a different aspect of the user's intent.

Before and After: The Impact Made Visceral

Let's trace a real conversation through the pipeline to see what the retriever actually receives:

Scenario: A customer on a skincare brand's site (three turns in)

- Turn 1: "I have sensitive skin and I'm looking for a moisturizer"

- Turn 2: "Something without fragrance"

- Turn 3: "Do you have one with SPF?"

Without query rewriting (raw Turn 3 to retriever):

"Do you have one with SPF?" retrieves generic SPF products, sunscreens, random "one" matches. No mention of moisturizer, sensitive skin, or fragrance-free. The AI either hallucinates context or returns irrelevant products.

With Alhena's Contextualizer pipeline:

- ChatHistoryContextualizer output: "Do you have a fragrance-free moisturizer with SPF for sensitive skin?"

- ProductSearchContextualizer output:

Category: Skincare | Subcategory: Moisturizers | Product Description: fragrance-free moisturizer with SPF for sensitive skin | Attributes: SPF - yes, fragrance - none, skin type - sensitive - QueryExpansionContextualizer output: ["SPF moisturizer for sensitive skin without fragrance", "gentle daily moisturizer with sun protection", "unscented SPF face cream for reactive skin"]

- ProductsFilterContextualizer output:

{"must": [{"key": "spf", "match": {"value": true}}, {"key": "fragrance_free", "match": {"value": true}}]}

Seven retrieval queries fire in parallel, each capturing a different facet of the user's true intent. The vector database returns products that are semantically relevant (high relevance scores) to moisturizers for sensitive skin AND filtered to only include SPF-containing, fragrance-free products. No hallucination possible because the retrieved documents actually match what the user wants.

Why This Is the Hidden Driver Behind Hallucination-Free AI

The connection between query rewriting and hallucination prevention is direct and causal:

Better queries produce better retrieval. When the retriever sees "fragrance-free moisturizer with SPF for sensitive skin" instead of "Do you have one with SPF?", it returns documents with high relevance to the actual question.

Better retrieval produces fewer hallucinations. LLMs hallucinate when they lack grounding information. If the retrieved context contains the right products with the right attributes, the model doesn't need to guess or fill gaps with fabricated details.

Structured filters enforce hard constraints. Even if the semantic search returns a product that's close but not quite right (great moisturizer, but contains fragrance), the filter layer catches it before the LLM ever sees it. You can't hallucinate a product that violates a filter.

This is why Alhena's accuracy numbers (and the results brands like Tatcha see, including 3x conversion rates) trace back to what happens before retrieval, not during it. The index stays the same. The embedding model stays the same. The documents stay the same. What changes is the quality of the query hitting that index.

For a deeper look at how this fits into the broader architecture, see our posts on the multi-agent planning system (which generates the sub-queries that feed into contextualizers) and unified memory (which supplies the user preferences that the ProductSearchContextualizer uses).

Architectural Takeaways for RAG Builders

Three principles fall out of this design that apply to anyone building production retrieval-augmented generation applications:

1. Contextualization is a pre-retrieval stage, not a retrieval-time trick. It owns the query rewrite responsibility cleanly, is observable (each stage is independently timed), and is configurable per deployment. Mixing query rewriting into the retriever itself makes both harder to debug.

2. Domain knowledge lives in prompts, not in training. Want to support a new vertical? Add a contextualizer with a taxonomy-aware prompt. No embedding retrain, no index rebuild. Alhena supports beauty, fashion, home furnishing, and travel verticals from the same pipeline by swapping taxonomy prompts.

3. Separate semantic intent from hard constraints. Prices, sizes, boolean attributes (in stock, fragrance-free, vegan) should never be embedding signals. Extract them into structured filters and let the vector database enforce them at query time. Your recall goes up and your hallucination rate drops.

The tagline is accurate in a precise technical sense: the prompt the retriever sees is not the prompt the user typed. The context of the conversation is injected into the query itself, before a single vector is compared. That is what makes multi-turn RAG actually work for ecommerce.

Ready to see how query rewriting improves retrieval accuracy for your product catalog? Book a technical demo with Alhena AI or start free with 25 conversations to test it on your own data.

Frequently Asked Questions

What is query rewriting in RAG systems?

Query rewriting transforms a user's raw message into one or more optimized queries before retrieval. In multi-turn conversations, this means resolving pronouns, carrying forward context from prior turns, and separating semantic intent from hard constraints like price or size filters.

Why does multi-turn RAG fail without query rewriting?

Follow-up messages like \"Which one is cheapest?\" contain no topical anchor. Without rewriting, the vector database receives a context-free query and returns irrelevant documents. Over 60% of conversational follow-ups have unresolved coreferences that standard RAG pipelines can't handle.

How does Alhena's Contextualizer pipeline differ from HyDE?

HyDE generates a full hypothetical answer document and embeds it for retrieval. Alhena's QueryExpansionContextualizer takes a lighter approach: it generates 3 paraphrases of the query and retrieves against all of them. This gives similar recall diversity at lower latency and without the risk of hallucinated content in the hypothetical document influencing retrieval.

What models does Alhena use for query rewriting?

Each contextualizer uses the model best suited to its task. ChatHistoryContextualizer runs on GPT-4.1-nano for speed (sub-100ms p95). ProductSearchContextualizer uses Gemini 2.5 Flash for strong structured output adherence. Model choices are independently configurable per bot.

How much latency does query rewriting add?

Because all contextualizers run in parallel, total added latency is bounded by the slowest layer, not the sum. In practice, this means 100 to 300ms of pre-retrieval time. Each stage is independently timed and tracked, making evaluation of regressions easy to catch.

How does the filter contextualizer prevent hallucinations?

The ProductsFilterContextualizer extracts hard constraints (price ranges, sizes, boolean attributes) into structured metadata filters applied at the vector database level. This means even if semantic search ranking returns a close-but-wrong product, the filter layer blocks it before the LLM sees it. You can't hallucinate a product that violates a database filter.

Can the Contextualizer pipeline handle topic changes mid-conversation?

Yes. The ProductSearchContextualizer includes explicit topic-change detection. When a user pivots from one category to another (say, office chairs to laptops), accumulated preferences from the old topic are reset so they don't pollute the new search. This prevents one of the most common failure modes in naive multi-turn RAG.

Does Alhena's query rewriting work with any vector database?

The Contextualizer pipeline is vector-database agnostic. It outputs rewritten queries (plain text) and structured filters (compatible with Qdrant's filter syntax). The rewritten queries can be embedded with any model for hybrid retrieval and searched against any vector store. The filter format is adaptable to other databases like Pinecone, Weaviate, or Milvus.