Most of your customers have already talked to an AI chatbot for customer support. Only about one in fifty want it as their only option. That gap between usage and preference isn't a technology problem. It's a design problem.

Roughly two-thirds of consumers used a chatbot for customer service in the past year, yet only about 2% want AI handling everything with no human backup. At the same time, 91% of support leaders feel pressure to roll out AI faster. The result? Brands ship AI that works fine technically but feels wrong to the people using it.

Confidence doesn't come from model accuracy alone. It comes from how the customer experience is designed. Here are the patterns that separate the AI customer support people merely tolerate from the hybrid model they actually believe in.

Confidence Is a Design Problem, Not a Model Problem

A perfectly accurate AI can still feel untrustworthy if the experience around it sends the wrong signals. Customers don't evaluate your hybrid support model the way engineers do. They don't check retrieval precision or token confidence scores. They notice whether the interaction feels honest, whether they can get out when they want to, and whether someone has their back if things go sideways.

That means customer confidence lives in the UX layer, not the model layer. And it's something organizations can actively design across every digital channel.

Five UX Patterns That Build Customer Confidence in AI

These aren't theoretical. They show up consistently in high-CSAT hybrid support deployments where human support and automation work side by side.

1. Name the AI and state its limits up front. Don't hide behind ambiguous greetings. Tell customers they're talking to an AI assistant, what requests it can handle, and that a person is available if needed. Transparency at the start sets the tone for the entire interaction.

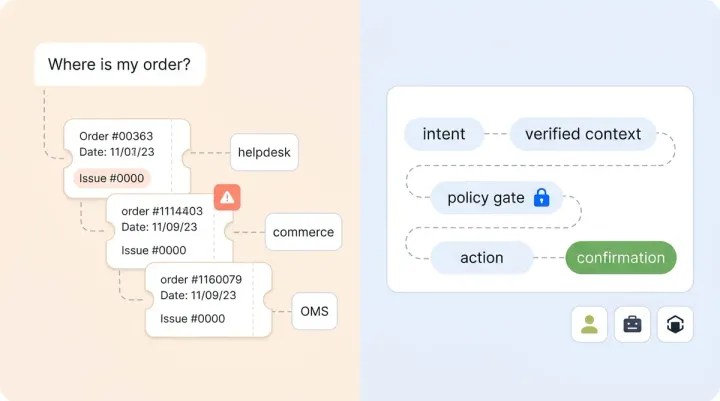

2. Show where answers come from. When your AI pulls from a return policy, a product spec, or an order record, surface that data source. Customers believe answers they can verify. Alhena's Shopping Assistant grounds every response in live store data for exactly this reason: answers you can trace feel personalized, not generic.

3. Let the AI say "I'm not sure." Confidence-aware language matters more than sounding polished. An AI that asks a clarifying question when uncertain earns more credibility than one that bluffs through an answer. This is why grounded retrieval architectures matter: they give the system a real basis for knowing what it knows and what it doesn't.

4. Keep the human exit visible at all times. A "talk to a person" option buried three menus deep sends a clear message: we'd rather you didn't. A persistent, one-click path to human support sends the opposite. Customers use AI more freely when they know they can leave it easily across any channel.

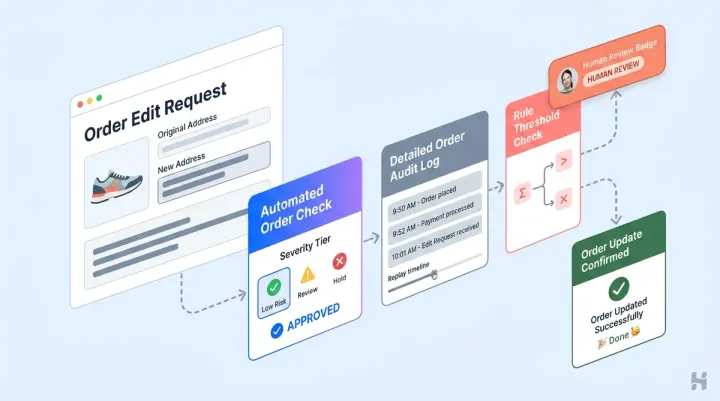

5. Confirm before acting. For anything that changes state (processing a return, updating an address, canceling an order), the AI should pause and confirm. "I'm about to submit your return for order #4471. Want me to go ahead?" That one extra step prevents the kind of mistakes that destroy customer experiences permanently.
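To make pattern 5 concrete, here is a minimal Python sketch of a confirmation gate. It is an illustration under stated assumptions, not how any particular product implements it: the action names, the `PendingAction` fields, and the `ConfirmationGate` class are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical action names; a real deployment would define its own list.
STATE_CHANGING_ACTIONS = {"process_return", "update_address", "cancel_order"}


@dataclass
class PendingAction:
    name: str
    order_id: str
    description: str  # human-readable summary shown to the customer


class ConfirmationGate:
    """Holds state-changing actions until the customer explicitly confirms."""

    def __init__(self, execute: Callable[[PendingAction], str]):
        self._execute = execute
        self._pending: Optional[PendingAction] = None

    def request(self, action: PendingAction) -> str:
        # Read-only actions run immediately; state-changing ones wait.
        if action.name not in STATE_CHANGING_ACTIONS:
            return self._execute(action)
        self._pending = action
        return f"I'm about to {action.description}. Want me to go ahead?"

    def reply(self, customer_text: str) -> str:
        if self._pending is None:
            return "There is nothing waiting for confirmation."
        # Clear the pending action either way, so a stale "yes" later
        # in the conversation cannot trigger it.
        action, self._pending = self._pending, None
        if customer_text.strip().lower() in {"yes", "y", "go ahead", "confirm"}:
            return self._execute(action)
        return "No problem, I haven't changed anything."
```

The design choice worth noting is that anything other than an explicit yes cancels the action. For changes that touch orders or addresses, defaulting to "do nothing" is the safer failure mode.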

The Handoff Is Where Confidence Lives or Dies

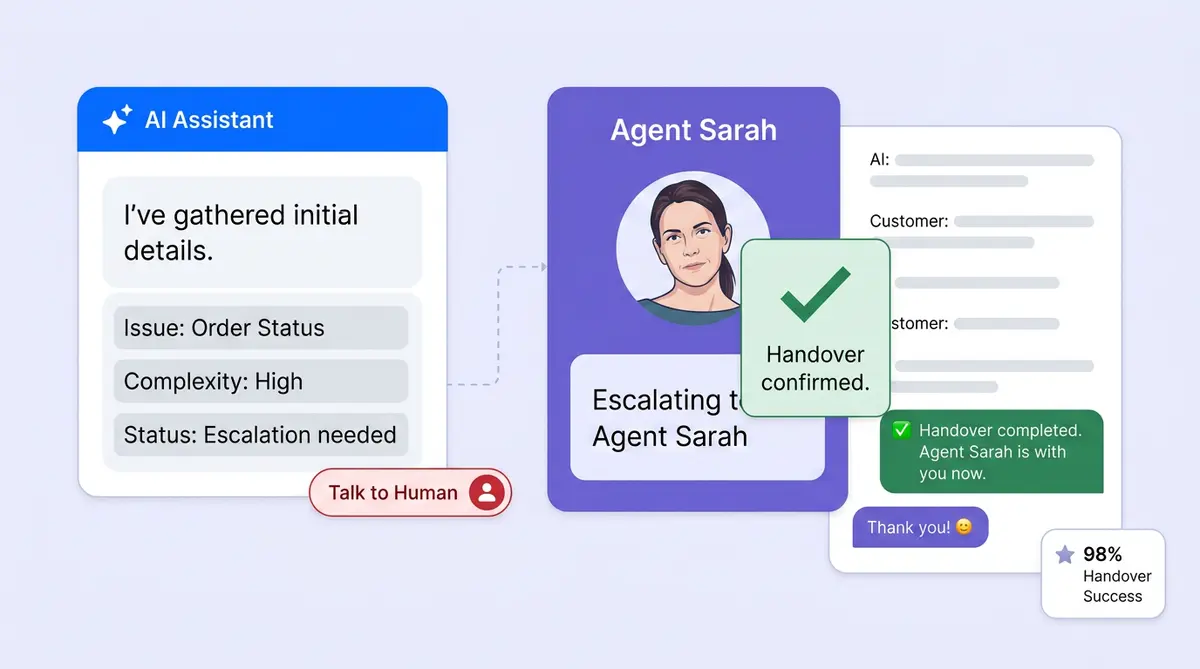

The handoff from AI to human agents is the highest-stakes moment in any hybrid model. Get it wrong and customers feel like they wasted their time with the chatbot. Get it right and the AI conversation becomes a head start, not a dead end.

Three principles matter most on the customer service side:

- No starting over. Human agents should see the full transcript, the AI's summary, and the detected intent before saying hello. We've written a detailed handoff playbook covering the mechanics, but the customer-facing takeaway is simple: don't make people repeat themselves.

- Honest wait times. Tell customers how long the transfer will take. A realistic "about 3 minutes" builds more credibility than silence.

- Explain the why. "I'm connecting you with a specialist who handles shipping exceptions" is better than a generic "please hold." Context makes the transition feel intentional, not broken.

Alhena's Support Concierge passes structured context (conversation summary, sentiment, and suggested next steps) so human agents pick up mid-conversation, not from scratch. That's also why AI-structured tickets outperform human-created ones on handle time and data quality.
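As a rough illustration of what "structured context" can look like, the Python sketch below bundles the pieces named above into one handoff object. The field names and the `HandoffContext` class are assumptions made for this example; they are not Alhena's actual schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class HandoffContext:
    # All field names here are hypothetical, chosen to mirror the principles above.
    transcript: list[str]            # full conversation, so nobody starts over
    summary: str                     # the AI's short recap for the agent
    detected_intent: str
    sentiment: str
    suggested_next_steps: list[str]
    handoff_reason: str              # the "why" shared with the customer
    estimated_wait_minutes: int      # an honest wait time

    def customer_message(self) -> str:
        """Message shown to the customer at the moment of transfer."""
        return (
            f"I'm connecting you with a specialist who handles {self.handoff_reason}. "
            f"The wait is about {self.estimated_wait_minutes} minutes, and they will "
            "already have our conversation, so you won't need to repeat yourself."
        )

    def agent_payload(self) -> str:
        """JSON the human agent's tool could display before they say hello."""
        return json.dumps(asdict(self), indent=2)
```

One object serves both audiences: the customer sees the reason and the wait time, and the agent sees everything else before the conversation resumes.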

Measuring What Matters Beyond Deflection

Most organizations track containment rate: the percentage of requests resolved without a human. It's a useful metric, but optimizing for it alone pushes staff to suppress handoffs, which is the opposite of building customer confidence at scale.

A better measurement panel for your hybrid support model includes:

- AI-only CSAT vs. post-handoff CSAT measured separately, so you know where satisfaction actually lives across interactions

- Handoff rate by reason (customer-requested, AI-uncertain, out-of-scope) trended over time

- Repeat contact within 7 days, because a "resolved" conversation that generates a follow-up wasn't really resolved

- A single confidence question in your post-chat survey: "Did you feel confident in the response you received today?"
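If you log conversations yourself, most of this panel can be computed with a few lines of Python. The sketch below assumes a hypothetical `Conversation` record with a 1-5 CSAT score and a handoff reason; the field names and the exact definition of repeat contact (a follow-up from the same customer within 7 days) are illustrative choices, not a standard.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Conversation:
    customer_id: str
    started_at: datetime
    handoff_reason: Optional[str]  # None means the AI resolved it alone
    csat: Optional[int]            # 1-5 survey score, None if unanswered


def mean_csat(convs: list[Conversation]) -> Optional[float]:
    scores = [c.csat for c in convs if c.csat is not None]
    return sum(scores) / len(scores) if scores else None


def support_panel(convs: list[Conversation]) -> dict:
    ai_only = [c for c in convs if c.handoff_reason is None]
    handed_off = [c for c in convs if c.handoff_reason is not None]
    reasons = Counter(c.handoff_reason for c in handed_off)

    # Count conversations followed by another contact from the
    # same customer within 7 days.
    by_customer: dict[str, list[datetime]] = {}
    for c in sorted(convs, key=lambda c: c.started_at):
        by_customer.setdefault(c.customer_id, []).append(c.started_at)
    repeats = sum(
        1
        for times in by_customer.values()
        for earlier, later in zip(times, times[1:])
        if later - earlier <= timedelta(days=7)
    )

    total = len(convs)
    return {
        "ai_only_csat": mean_csat(ai_only),
        "post_handoff_csat": mean_csat(handed_off),
        "handoff_rate_by_reason": {r: n / total for r, n in reasons.items()},
        "repeat_contact_rate": repeats / total if total else 0.0,
    }
```

Keeping the two CSAT numbers separate is the point: a blended average can hide an AI experience that is dragging satisfaction down, or a handoff that is rescuing it.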

Alhena's analytics dashboard breaks out AI-resolved vs agent-resolved metrics with revenue attribution, so you can see whether your AI is driving personalized customer experiences or just deflecting volume. Brands like Puffy maintain 90% CSAT while automating 63% of inquiries because they measure both sides of their hybrid model. Read the full case study here.

Governance Before You Scale

Before you expand AI coverage across digital channels, put guardrails in place:

- A written list of common topics the AI must always hand off to human agents (legal requests, complaints involving specific people, anything touching sensitive data)

- Approval rules for agentic actions that touch money, identity, or inventory

- A fallback mode you can activate if AI quality drops on a specific topic cluster

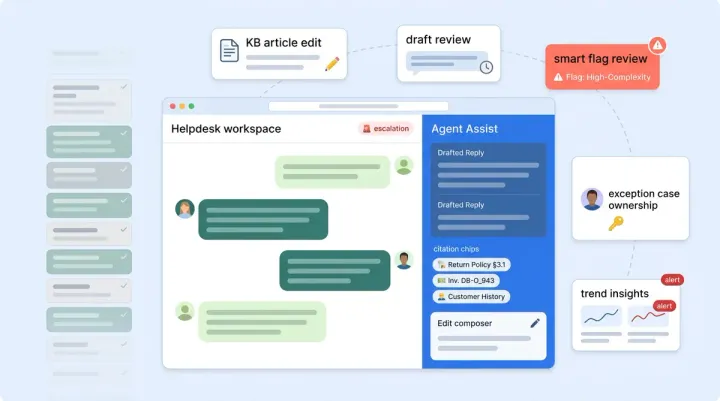

- A weekly human review of a sample of low-CSAT and escalated interactions managed by the system

These aren't bureaucratic hurdles. They're the brand-safety layer that lets you scale with confidence. Alhena's Agent Assist keeps human expertise in the loop on complex cases while automation handles routine customer support volume, so your staff stays in control without becoming a bottleneck.
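The first three guardrails in the list above can be written down as explicit routing rules rather than left as tribal knowledge. The following Python sketch shows one possible shape; the topic labels, the `Guardrails` class, and the `Route` values are hypothetical and would need to match however your own system classifies requests.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Route(Enum):
    AI = "ai"
    AI_WITH_APPROVAL = "ai_with_approval"
    HUMAN = "human"


@dataclass
class Guardrails:
    # Topics the AI must always hand off (hypothetical labels).
    always_handoff_topics: set[str] = field(
        default_factory=lambda: {"legal_request", "complaint_about_person", "sensitive_data"}
    )
    # Agentic actions in these domains need approval before they run.
    approval_required_domains: set[str] = field(
        default_factory=lambda: {"money", "identity", "inventory"}
    )
    # Topic clusters temporarily switched to humans (fallback mode).
    fallback_topics: set[str] = field(default_factory=set)

    def route(self, topic: str, action_domain: Optional[str] = None) -> Route:
        # Handoff rules win over everything else.
        if topic in self.always_handoff_topics or topic in self.fallback_topics:
            return Route.HUMAN
        if action_domain in self.approval_required_domains:
            return Route.AI_WITH_APPROVAL
        return Route.AI
```

Activating fallback mode for a topic is then a one-line change, for example `guardrails.fallback_topics.add("order_status")`, which is easy to do quickly when AI quality drops and easy to undo once it recovers.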

A System You Design, Not a Feature You Ship

The brands winning at hybrid customer service aren't the ones with the most advanced models. They're the ones that designed their AI interactions around honesty, clean exits, and real measurement. Transparency at the start, confirmation before action, smooth handoffs in the middle, and metrics that track customer confidence (not just ticket counts) at the end.

Alhena is built to give organizations that control surface: grounded answers, structured handoffs, and analytics that show what's actually working across every channel.

Ready to build a hybrid support model your customers believe in? Book a demo with Alhena AI or start for free with 25 conversations.

Frequently Asked Questions

What does hybrid AI support mean?

Hybrid AI support combines AI-powered automation for routine queries with human agents for complex or sensitive issues. The AI handles volume and speed while humans provide empathy, judgment, and expertise. The key is designing clean transitions between the two so customers never feel stuck.

Why do so few customers prefer AI-only support?

Surveys consistently show that while most consumers are comfortable starting with AI, only about 2% want AI as their sole support option. Customers value speed but also want the safety net of a real person for edge cases, emotional situations, or high-stakes decisions like returns and billing disputes.

How does Alhena AI handle escalation to human agents?

Alhena transfers the full conversation context to the human agent, including a summary, detected intent, sentiment score, and suggested next steps. The customer doesn't repeat themselves, and the agent picks up mid-conversation with everything they need to resolve the issue quickly.

What metrics should I track for AI support trust?

Go beyond containment rate. Track AI-only CSAT separately from post-escalation CSAT, monitor escalation reasons (customer-requested vs. AI-uncertain vs. out-of-scope), measure 7-day repeat contact rate, and add a trust-specific question to your post-chat survey.

How quickly can I deploy hybrid AI support with Alhena?

Alhena deploys in under 48 hours with no dev resources required. It integrates with major helpdesks, so your human agents stay in their existing tools while AI automation handles frontline customer support volume.

Does AI support hurt customer satisfaction scores?

Not when designed well. Alhena customers like Puffy maintain 90% CSAT while automating 63% of inquiries. The key is grounding AI answers in real data, keeping human escalation easy, and measuring satisfaction for AI and human interactions separately.