The 95/5 Problem Every AI Vendor Hides From You

Every e-commerce AI shopping vendor says the same thing: no-code tools, self-serve dashboard, live in 48 hours. And they're telling the truth. The first 95% of an AI shopping assistant deployment is that simple. You connect your catalog, install a widget, configure a few settings, and the chatbot starts answering questions.

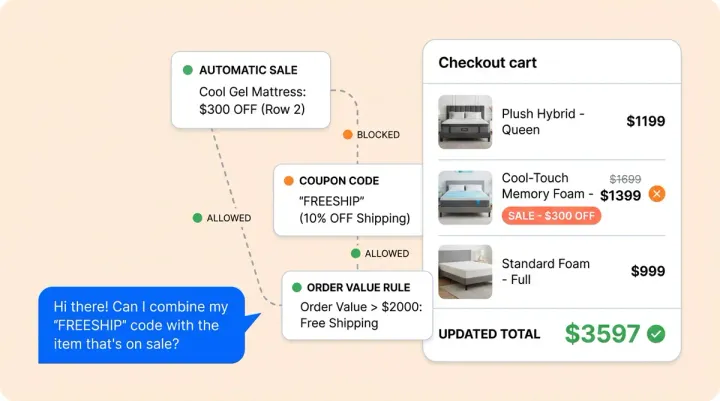

Then a shopper tries to stack a gift card with a promo code on a pre-order item. The shopping AI confidently tells them it works. It doesn't. That's the last 5%. And it matters: according to RAND Corporation research, 80% of AI projects fail to deliver their intended business value.

This post isn't anti-self-serve. Alhena AI genuinely goes live in 48 hours with zero dev resources from your team. The contrarian argument is simpler: you don't need your engineers. But your AI shopping vendor better bring theirs. Here's why that last 5% kills shopping pilots, and what to demand from your vendor before signing.

The Last 5%: Where AI Shopping Pilots Quietly Fail

The self-serve part of deployment handles the predictable stuff. Product information ingestion. Basic FAQ training. Widget styling. Greeting messages. Any competent AI shopping platform can get this right because the inputs are clean and the expected outputs are straightforward.

The last 5% is different. It's the edge cases your knowledge base never anticipated, the integration quirks your platform's tools don't cover, and the brand voice nuances that take a real human (who understands NLP) to get right. These are specific, concrete problems.

Product logic edge cases

A shopper asks: "Can I use my $50 gift card and the SUMMER20 code together on a bundle?" Your AI needs to know your store's current coupon stacking rules, which change by promotion, by product type, and sometimes by customer segment. A Shopify store with custom discount logic built through Shopify Functions handles this differently than one using a third-party app like Bold Discounts. No generic knowledge base covers this.

Or: "I need this monogrammed with the initials J&K, can you do ampersands?" Custom engraving with special characters, character limits, and font restrictions isn't in any standard product feed. Neither is multi-address shipping for a single order, or the logic behind "buy 2, get 1 free" when the shopper only wants to buy 2.

According to AI Journal, models that hit 92% accuracy on clean demo data drop to 71% when they hit real product data with real-world edge cases. That 21-point gap is made up of edge cases like these.
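To make the coupon-stacking question concrete, here is a minimal Python sketch of what an explicit stacking rule table might look like. Everything in it is an assumption for illustration: the `STACKING_RULES` table, the product types, and the `stacking_answer` function are hypothetical and do not reflect Shopify Functions, Bold Discounts, or any real store's policy.

```python
from itertools import combinations

# Hypothetical rule table: which pairs of discount types may be combined,
# keyed by product type. The values are illustrative only.
STACKING_RULES = {
    "standard": {frozenset({"gift_card", "promo_code"})},
    "bundle": set(),      # assumed: bundles accept a single discount
    "pre_order": set(),   # assumed: pre-orders accept a single discount
}


def stacking_answer(product_type: str, discounts: list[str]) -> str:
    """Return 'allowed', 'not_allowed', or 'escalate' for a stacking request."""
    if product_type not in STACKING_RULES:
        # No rule on file: hand off to a human instead of guessing.
        return "escalate"
    # Every pair of requested discounts must be explicitly permitted.
    pairs = {frozenset(pair) for pair in combinations(discounts, 2)}
    if pairs <= STACKING_RULES[product_type]:
        return "allowed"
    return "not_allowed"
```

The design choice worth noting is the third outcome: when no rule exists for a product type, the sketch escalates rather than answering confidently, which is the failure mode described above.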

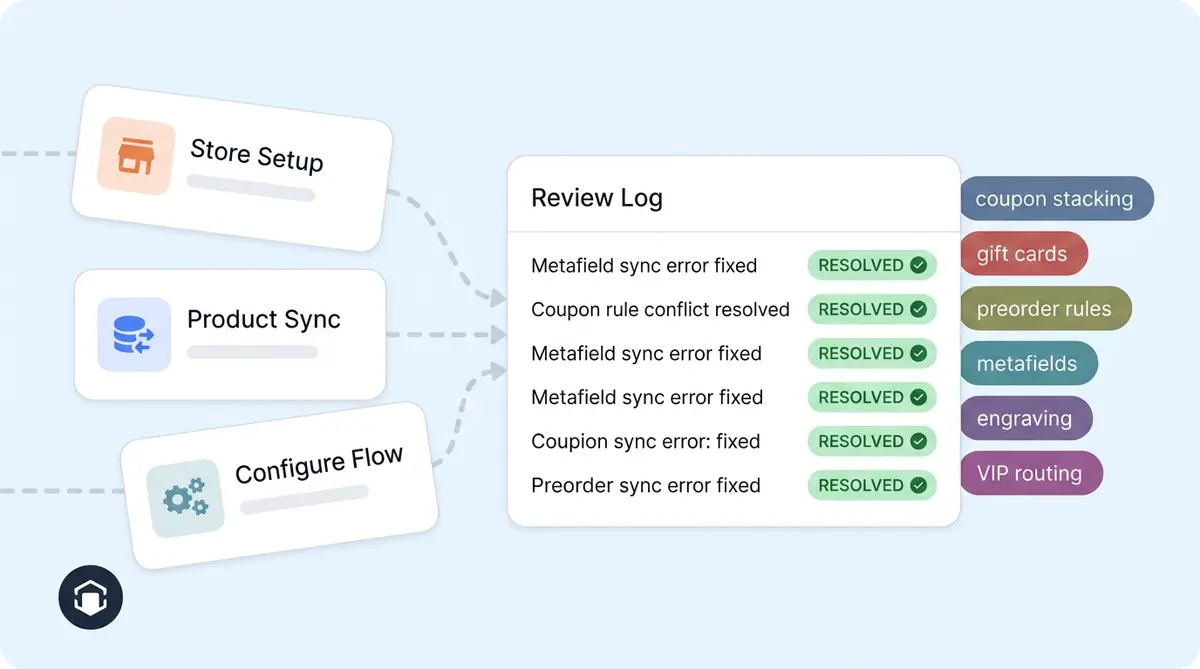

Integration quirks nobody documents

Your Shopify store uses a custom metafield for estimated delivery dates. Your Zendesk instance routes VIP shoppers through a different escalation path. Your returns portal has a holiday extension policy that only applies to orders placed between November 15 and December 31, but only for full-price items.

None of this is in a setup wizard. Someone has to discover these rules by reading your actual conversation logs, talking to your customer support team, and testing the shopping AI against real scenarios. 68% of shoppers abandon a chatbot after one bad experience. You don't get a second chance to handle the weird ones right.
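Once someone has discovered a rule like the holiday return extension, it can be written down as an explicit check. The sketch below encodes the example dates from the text (orders placed November 15 through December 31, full-price items only). The function name and the `paid_full_price` flag are hypothetical, and how an order is classified as full-price is left as an assumption.

```python
from datetime import date


def qualifies_for_holiday_extension(order_date: date, paid_full_price: bool) -> bool:
    """Check the example policy: Nov 15 to Dec 31 orders, full-price items only."""
    window_start = date(order_date.year, 11, 15)
    window_end = date(order_date.year, 12, 31)
    in_window = window_start <= order_date <= window_end
    # Both conditions must hold; a discounted item never gets the extension.
    return in_window and paid_full_price
```

For example, `qualifies_for_holiday_extension(date(2024, 12, 1), paid_full_price=False)` returns `False`, because the item was discounted even though the date falls inside the window.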

Brand voice calibration

Victoria Beckham doesn't talk like Puffy Mattress. A luxury skincare brand's AI should never say "Hey! Great pick!" when a shopper adds a $180 serum to their cart. But getting tone right isn't a slider you adjust in a dashboard. It takes iterating on real conversations, reviewing how the AI handles complaints versus upsells, and having someone with an ear for language catch the moments where the bot sounds robotic.

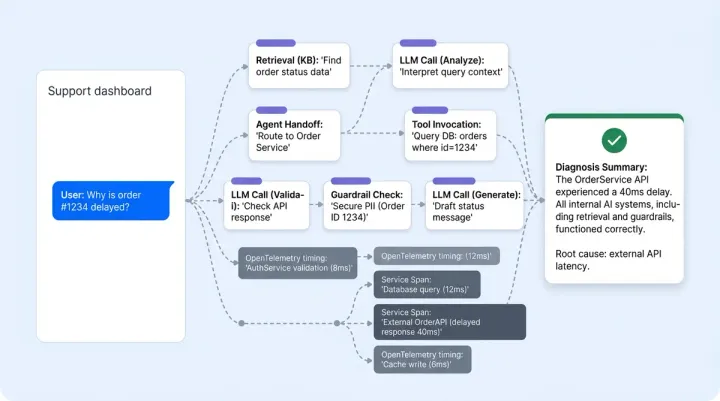

What a Forward-Deployed Engineer Actually Does (It's Not What You Think)

The forward-deployed engineer (FDE) model started at Palantir in the early 2010s. FDE job listings surged 800% in 2025, and OpenAI, Anthropic, Scale AI, and Databricks all now hire them. Andreessen Horowitz calls it "The Palantirization of Everything" and argues every vertical AI company will eventually need this capability.

But here's what most people get wrong about FDEs in ecommerce: they're not building your AI from scratch. The product is already built. The FDE's job is solving the last 5%.

In practice, that looks like:

- Sitting in your Slack channel. Not a shared support queue. A dedicated channel where your CX lead can flag a weird conversation and get a config change pushed within hours, not weeks.

- Reviewing conversation logs daily. Not waiting for your team to report problems. Proactively scanning for patterns: where is the AI giving vague answers? Where are shoppers dropping off? Where is the tone wrong?

- Pushing config changes in real time. A shopper discovered that the AI doesn't know your store charges restocking fees on electronics over $500? That rule gets added today, not in the next sprint.

- Catching quality degradation before it reaches your CX leader. AI performance drifts. New products get added without descriptions. Pricing changes don't sync. An FDE monitors these signals and fixes them before your CSAT score takes a hit.

- Translating business rules into AI logic. Your CX team says "we're flexible on returns for loyal shoppers." An FDE turns that into a structured policy the AI can actually follow: loyalty tier thresholds, exception criteria, escalation triggers.

As Palantir's blog puts it: "While a traditional software engineer focuses on creating a single capability for many customers, FDEs focus on enabling many capabilities for a single customer."
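As an illustration of the last bullet in the list above, here is a minimal Python sketch of how "we're flexible on returns for loyal shoppers" might become a structured policy. Every name and number in it is hypothetical: `ReturnPolicy`, `decide_return`, the 30- and 60-day windows, the five-order loyalty threshold, and the $500 escalation limit are assumptions, not Alhena's configuration format or any real store's rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReturnPolicy:
    # All thresholds below are illustrative assumptions.
    standard_window_days: int = 30
    loyal_window_days: int = 60        # exception criterion for loyal shoppers
    loyal_min_orders: int = 5          # loyalty tier threshold
    escalate_above_value: float = 500.0  # escalation trigger


def decide_return(policy: ReturnPolicy, days_since_delivery: int,
                  lifetime_orders: int, order_value: float) -> str:
    """Return 'approve', 'deny', or 'escalate' for a return request."""
    if order_value > policy.escalate_above_value:
        # High-value orders always go to a human agent.
        return "escalate"
    is_loyal = lifetime_orders >= policy.loyal_min_orders
    window = policy.loyal_window_days if is_loyal else policy.standard_window_days
    return "approve" if days_since_delivery <= window else "deny"
```

With these assumed values, a shopper with six lifetime orders returning a $120 item 45 days after delivery is approved, while a shopper with two orders making the same request is denied.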

The "Set It and Forget It" Death Spiral

Here's the exact sequence that kills most AI shopping pilots. We've seen it dozens of times, and the data from Pertama Partners backs it up: the median time to abandonment for failed AI projects is 11 months.

Week 1-2: Brand launches AI shopping chatbot. The team is excited. Early performance looks promising because the easy inquiries (shipping times, return policies, product availability) get handled cleanly. This is the 95% at work.

Week 3-4: Complex inquiries start appearing. A shopper gets a wrong answer about an order with a bundle discount. Another gets a stale shipping estimate because the warehouse integration lagged. The CX team files tickets with the AI vendor. Response time: 48-72 hours through a shared support queue.

Week 5-8: Edge cases pile up faster than fixes ship. The CX team starts overriding the shopping AI on complex inquiries, which defeats the purpose of automation. CSAT on AI-handled conversations drops below 80%. Nobody on the vendor side is proactively reviewing logs.

Week 9-12: The CX director flags declining satisfaction to leadership. The team's conclusion isn't "we need better vendor support." It's "AI doesn't work for our business." The shopping pilot gets killed at the 60-day review, or limps along until 90 days before someone pulls the plug.

If you've lived through something like this, you're not alone. Our post on why AI didn't move the needle breaks down the five root causes. Spoiler: four of them trace back to vendor support, not technology.

Pertama Partners reports that abandoned AI projects cost an average of $4.2 million in sunk costs. For mid-market e-commerce businesses, the dollar figure is lower, but the cost in lost opportunity and team morale is just as devastating. Your CX team tried something new, it failed, and now they're skeptical of every AI pitch for the next two years.

Six Things to Demand From Your AI Vendor Before You Sign

If the AI agent evaluation checklist covers product capabilities, consider this the vendor support checklist. Add these as line items in your contract or RFP.

- A dedicated Slack (or Teams) channel with engineering access. Not a support portal. Not an email alias. A live channel where your team can tag an engineer and get a response within 4 business hours. If the vendor pushes back on this, they don't plan to support you past go-live.

- A named implementation lead. One person who knows your store, your catalog quirks, your integration stack, and your CX goals. Not a rotating cast of support agents reading from a script. Ask for their name before you sign.

- A weekly conversation review cadence for the first 30 days. The vendor should be reviewing your AI's conversation logs every week, flagging quality issues proactively, and pushing tuning changes. Our 30-day tuning playbook describes what that cadence should look like.

- An SLA on config changes. When you discover the AI doesn't handle a product return edge case correctly, how fast does the fix ship? If the answer is "next release cycle" or "we'll put it in the backlog," that's not a vendor committed to your success.

- Access to raw conversation logs and quality metrics. You can't improve what you can't measure. If your vendor controls the dashboard and limits what data you see, you won't catch quality drift until your customers already have. Setting the right KPIs before launch makes this data actionable from day one.

- A post-launch escalation path that doesn't go through general support. Day 45, your AI starts hallucinating a discontinued product. You need engineering eyes on it in hours, not a ticket that sits in a queue for three days.

How Alhena AI Solves the Last 5%

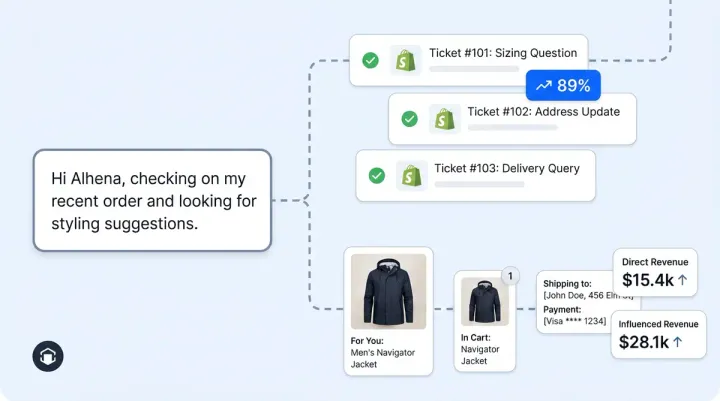

Alhena AI is self-serve for the first 95%. The AI Shopping Assistant ingests your product information from feeds, docs, spreadsheets, and web pages. The system uses smart content segmentation that breaks down data by semantic structure, not arbitrary size limits. Widget installation takes minutes. You're live in under 48 hours with no dev resources from your team.

But the last 5% is where Alhena's model differs from that of every other shopping AI vendor in the market:

- Dedicated Slack channels for every deployment. Your customer support lead can message the Alhena implementation team directly. Config changes ship in hours, not sprints.

- Named implementation engineers who learn your store, your catalog edge cases, and your brand voice. They review conversation logs proactively during the first 30 days and flag issues before your team does.

- Founder-led onboarding for strategic accounts. For high-volume stores, Alhena's founding team is directly involved in deployment, bringing product-level expertise and context that no support tier can match.

- Pre-launch edge case mapping. Before go-live, Alhena's team audits your catalog for the exact problems that kill pilots: complex discount rules, unusual return policies, variant-level data gaps, and integration quirks across your Shopify, WooCommerce, Salesforce Commerce Cloud, or Magento stack.

- Ongoing AI coaching that continues after the initial deployment window. As your catalog grows, promotions change, and seasonal policies shift, the AI stays calibrated.

The results speak to the model, not just the technology or the sales pitch. Tatcha saw a 3x conversion rate and a 38% AOV uplift, with 11.4% of total site sales coming through the AI. Puffy achieved 63% automated inquiry resolution with 90% CSAT. Victoria Beckham saw a 20% AOV increase. Those numbers don't happen with self-serve alone. They happen when an engineering team is actively tuning the AI against real conversations, real edge cases, and real revenue data.

How This Fits Your AI Deployment Roadmap

If you're planning a low-risk AI pilot, the vendor's engineering commitment should be part of your evaluation criteria from day one. Think of it as the tenth question on every evaluation checklist: "What does your vendor do after go-live?"

Here's how the pieces connect to maintain deployment quality:

- Before launch: Scope your pilot with target KPIs and an internal owner. Map your edge cases. Negotiate the six vendor commitments listed above.

- Days 1-2: Self-serve setup handles the 95%. The first 48 hours are about catalog ingestion, widget install, and basic configuration.

- Days 3-30: The vendor's engineering team tackles the last 5%. Follow the 30-day tuning playbook with your vendor actively reviewing logs and pushing improvements.

- Day 31+: Shift from intensive tuning to ongoing maintenance and monitoring. If your vendor disappears at this stage, flag it immediately.

Alhena's Support Concierge and Agent Assist handle the support side of the equation. When customers do escalate, your human agents get full context: what the shopper asked, what the AI tried, and why the handoff happened. Every interaction gets better, whether it's handled by the AI or a person.

Key Takeaways

- Self-serve AI setup is real. The first 95% of deployment works out of the box. Don't let anyone tell you otherwise.

- The last 5% is where shopping pilots die. Gift card stacking, unusual return rules, complex discount logic, multi-address shipping, integration quirks. These edge cases are specific to your store and can't be solved by a dashboard.

- You don't need your engineers. But your vendor better bring theirs. A dedicated implementation lead, a Slack channel with engineering access, and an SLA on config changes aren't nice-to-haves. They're the difference between a pilot that scales and one that gets killed at 60 days.

- The "set it and forget it" death spiral is predictable and preventable. When your vendor goes silent after go-live, edge cases compound into a CSAT crisis. Demanding engineering access from day one stops the pattern.

- Alhena AI combines self-serve speed with hands-on vendor engineering. Live in 48 hours, then backed by dedicated Slack channels, named engineers, and founder-led onboarding for strategic accounts. That's how Tatcha hit 3x conversions and Puffy reached 90% CSAT.

Ready to stop worrying about the last 5%? Book a demo with Alhena AI and see how our implementation team handles the edge cases that kill other solutions. Or start free with 25 conversations and experience the 95% first.

Frequently Asked Questions

Why do most e-commerce AI chatbot pilots fail within 90 days?

Most e-commerce AI chatbot pilots fail because the self-serve setup handles only the predictable 95% of queries. The remaining 5%, which includes edge cases like coupon stacking rules, custom product logic, multi-address shipping, and integration quirks, requires hands-on engineering from the vendor. Without a dedicated implementation engineer reviewing conversation logs and pushing config changes, these edge cases pile up, CSAT drops, and the pilot gets killed at the 60-day review. RAND Corporation found that 80% of AI projects fail to deliver intended business value.

What is a forward-deployed engineer and why do ecommerce AI vendors need them?

A forward-deployed engineer (FDE) is a senior engineer from an AI vendor who embeds directly with a customer's team to solve implementation and deployment challenges the product can't handle out of the box. Pioneered by Palantir and now used by OpenAI, Anthropic, and Scale AI, FDEs sit in your Slack channel, review conversation logs daily, push config changes in hours instead of weeks, and catch quality degradation before it impacts customers. FDE job listings surged 800% in 2025 because the industry recognized that self-serve AI setup alone doesn't produce production-quality results.

What should I ask an AI shopping assistant vendor about post-launch support before signing?

Ask for six specific commitments: (1) a dedicated Slack or Teams channel with engineering access, not a support portal, (2) a named implementation lead who knows your store, (3) weekly conversation review cadence for the first 30 days, (4) an SLA on config changes with a defined turnaround time, (5) access to raw conversation logs and quality metrics, and (6) a post-launch escalation path that bypasses general support. If the vendor can't commit to these in writing, they plan to disappear after go-live.

How does Alhena AI handle complex product edge cases like coupon stacking and custom engraving?

Alhena AI's implementation team maps your store's specific edge cases before go-live, including discount stacking rules, custom product options like monogramming or engraving with special characters, multi-address shipping logic, and bundle pricing. During the first 30 days, named implementation engineers review real conversation logs daily through dedicated Slack channels and push config changes within hours when new edge cases surface. This hands-on approach is why Tatcha achieved 3x conversions and Puffy reached 90% CSAT.

What is the AI pilot death spiral in ecommerce and how do you prevent it?

The AI pilot death spiral is a predictable four-stage pattern: (1) the brand launches AI and early results look good because easy questions get handled, (2) edge cases start appearing and the vendor responds slowly through a shared support queue, (3) the CX team overrides the AI on anything complex, defeating the purpose of automation, (4) leadership blames the technology and kills the pilot at 60-90 days. You prevent it by demanding a dedicated vendor engineering resource who proactively reviews conversation logs weekly and pushes fixes before quality drops reach your CSAT scores.

Can an AI shopping assistant really go live in 48 hours without developer resources?

Yes, for the first 95% of setup. Platforms like Alhena AI handle catalog ingestion, widget installation, and basic configuration in under 48 hours with no dev resources from your team. The remaining 5%, which covers complex discount logic, unusual return policies, platform-specific integration quirks, and brand voice tuning, requires the vendor's engineering team. That's why the vendor's post-launch support model matters more than the setup speed. Self-serve gets you live. Vendor engineering keeps you live and helps you maintain quality.

How long should an AI vendor actively support an ecommerce deployment after launch?

The critical window is the first 30 days. During this period, the vendor's engineering team should review conversation logs weekly, push config changes for discovered edge cases, and hold calibration calls with your CX team. After day 30, support shifts to continuous monitoring and maintenance as your catalog grows, promotions change, and seasonal policies shift. A vendor that disengages at go-live is a vendor whose AI will drift in accuracy within weeks. The median time to abandonment for failed AI projects is 11 months, per Pertama Partners.

What is the difference between AI chatbot deflection rate and resolution rate for ecommerce?

Deflection rate measures how many conversations the AI handles without escalating to a human. Resolution rate measures how many the AI actually solves correctly. A chatbot that says "I've forwarded your request to our team" has deflected a ticket but resolved nothing. For ecommerce AI, resolution rate is the metric that matters. Alhena AI's case studies show real resolution metrics: Puffy achieved 63% automated inquiry resolution with 90% CSAT, and Crocus reached 86% deflection with 84% CSAT, both measured on actual purchase outcomes, not ticket routing.
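The difference between the two metrics is easy to see in a small calculation. The Python sketch below assumes each conversation carries two labels, whether it was escalated to a human and whether it was resolved correctly. The `Conversation` record and the `resolved_correctly` label (for example, from a QA review) are hypothetical, and the sample data is invented purely to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Conversation:
    escalated_to_human: bool
    resolved_correctly: bool  # hypothetical label, e.g. from a QA review


def deflection_rate(conversations: list[Conversation]) -> float:
    """Share of conversations the AI handled without escalating."""
    if not conversations:
        return 0.0
    kept = sum(not c.escalated_to_human for c in conversations)
    return kept / len(conversations)


def resolution_rate(conversations: list[Conversation]) -> float:
    """Share of conversations the AI both kept and solved correctly."""
    if not conversations:
        return 0.0
    solved = sum(
        c.resolved_correctly and not c.escalated_to_human for c in conversations
    )
    return solved / len(conversations)


# Invented sample: 5 solved by the AI, 3 kept but unsolved, 2 escalated.
sample = (
    [Conversation(False, True)] * 5
    + [Conversation(False, False)] * 3
    + [Conversation(True, False)] * 2
)
# deflection_rate(sample) is 0.8, resolution_rate(sample) is 0.5
```

In this invented sample the chatbot deflects 80% of conversations but resolves only 50%, which is the gap a deflection-only dashboard would hide.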