Most ecommerce brands deploy a single AI chatbot powered by a single language model. That one model tries to handle everything: product recommendations, order tracking, return processing, ingredient questions, sizing advice, and general support. All at once. All through the same engine.

That is the equivalent of hiring one employee and asking them to simultaneously be a product expert, a logistics coordinator, a customer service agent, and a stylist. No human could do all four well. No single AI model can either. Yet most retailers still rely on this exact setup, expecting quality output across every domain. Specialized AI agents solve this by distributing the work across domain-specific specialists instead of one generalist.

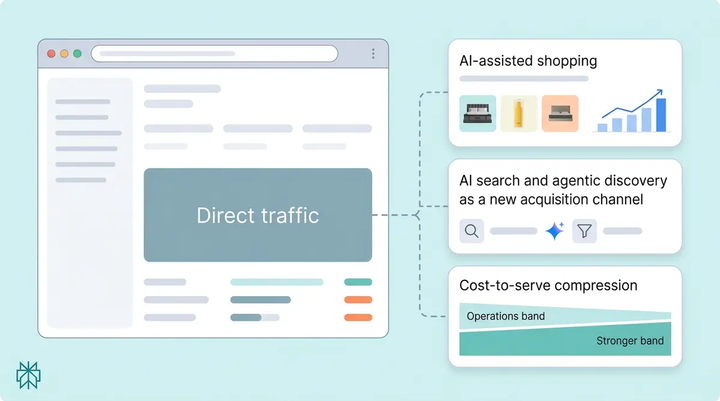

This post covers the two architectural shifts that solve this problem, along with their key use cases: multi-model AI (using different language models for different tasks) and multi-agent orchestration (deploying specialized, autonomous AI agents for different commerce domains). Together, they form the foundation of AI that actually performs in ecommerce, not just responds.

Why One Language Model Is Not Enough

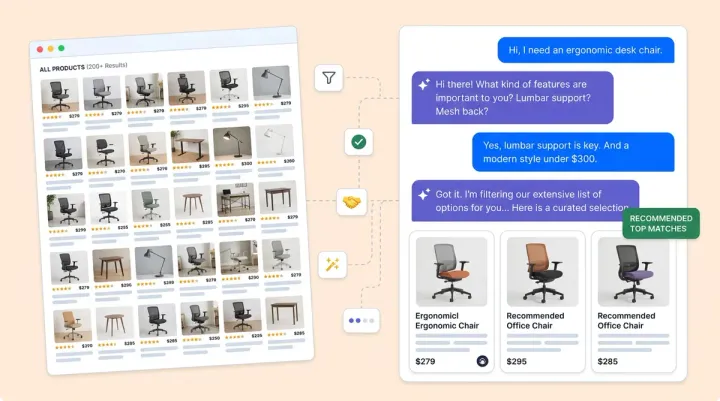

Every language model has tradeoffs. Some excel at nuanced conversational reasoning, making them ideal for complex product consultations where a shopper needs help choosing between six moisturizers based on skin type, active ingredients, and sensitivities. Others are faster and cheaper, which suits routine, automated tasks like order status lookups, where speed matters more than depth. Multimodal models handle diverse inputs better, making them ideal for image-based queries like shade matching, visual search, or identifying a product from a photo.

A single-model approach forces every interaction through the same engine. That creates a lose-lose tradeoff. You either overpay for simple tasks by routing everything through the most powerful (and most expensive) model, or you underperform on complex tasks by using a cheaper LLM that can't handle nuanced product guidance. A multi-model, multi-agent approach avoids that tradeoff.

Consider two real conversations that happen on online stores every day:

- Conversation A: "Where is my order #4829?" This requires a fast lookup against the order management system. It needs speed, not deep reasoning.

- Conversation B: "I have sensitive skin, rosacea, and I'm on retinol. What moisturizer won't cause a reaction?" This requires the model to reason across dozens of product attributes, ingredient interactions, and skin compatibility factors.

Running both through the same model means one of them gets the wrong tool for the job. Multi-model AI ecommerce solves this by evaluating each incoming conversation based on complexity, intent, inputs, and required capabilities, then automatically routing it to the optimal model.

In this architecture, Conversation A routes to a fast, cost-efficient model that returns the answer in under a second. Conversation B routes to the most capable reasoning model, which can weigh ingredient lists, cross-reference contraindications, and deliver a confident recommendation grounded in verified product data. The shopper experiences one seamless conversation. Behind the scenes, the platform selects the right engine for every message.
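The routing decision described above can be sketched in a few lines. This is a minimal illustration, not Alhena AI's implementation: the tier names (`fast-model`, `reasoning-model`, `vision-model`), the `Message` type, and the keyword cues are all assumptions, and a production router would more likely use a trained intent classifier than string matching.

```python
from dataclasses import dataclass

# Hypothetical model tiers; real model names and thresholds would differ.
FAST_MODEL = "fast-model"
REASONING_MODEL = "reasoning-model"
VISION_MODEL = "vision-model"

# Illustrative keyword cues; a production router would use a trained classifier.
LOOKUP_CUES = ("where is my order", "order #", "tracking", "order status")


@dataclass
class Message:
    text: str
    has_image: bool = False


def select_model(message: Message) -> str:
    """Pick a model tier from simple signals in the message."""
    text = message.text.lower()
    if message.has_image:
        return VISION_MODEL
    if any(cue in text for cue in LOOKUP_CUES):
        return FAST_MODEL
    # Anything that is not a recognizable lookup goes to the stronger model.
    return REASONING_MODEL


conversation_a = Message("Where is my order #4829?")
conversation_b = Message(
    "I have sensitive skin, rosacea, and I'm on retinol. "
    "What moisturizer won't cause a reaction?"
)
print(select_model(conversation_a))  # fast-model
print(select_model(conversation_b))  # reasoning-model
```

Defaulting unrecognized messages to the stronger model is a deliberate choice in this sketch: misrouting a complex question to a cheap model costs more in answer quality than the reverse costs in spend.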

Why Model Selection Includes Multimodal Capabilities

Not all commerce interactions are text-based. Shoppers upload photos of their skin concerns, send screenshots of products they saw elsewhere, or ask "do you have anything like this?" while sharing an image. Some models process these multimodal inputs with far greater accuracy than others. Multi-model architecture means the platform can route image-based queries to a model built for visual understanding, while text-based conversations use models optimized for language.

The Vendor Lock-In Risk of Single-Model Architecture

Brands locked into a single LLM provider are exposed to pricing changes, capability shifts, model deprecation, and outages from that one provider. If your entire AI experience runs on one model and that model's pricing doubles (as has happened multiple times in the industry), you absorb the cost or lose the capability.

Multi-model architecture ensures that if one model's performance drops or pricing increases, the platform shifts to the best available alternative without disrupting the customer experience, orchestrating across providers dynamically so your agents keep using the most capable option available. This is infrastructure resilience, not a technical luxury. As multimodal models and other LLMs advanced rapidly through 2025, this flexibility became even more valuable. When Alhena AI routes conversations across models from OpenAI, Anthropic, and Google, no single provider change can degrade the shopper experience.
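A provider failover of this kind can be sketched as an ordered list of providers tried in turn. Everything here is hypothetical: `ProviderError`, `complete_with_fallback`, and the stand-in `primary` and `secondary` functions are illustrative names, and real provider calls would wrap each vendor's own SDK.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a provider call that fails (outage, rate limit, deprecation)."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each provider in priority order; return (provider_name, reply)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in provider functions; real ones would wrap each vendor's SDK.
def primary(prompt: str) -> str:
    raise ProviderError("simulated outage")


def secondary(prompt: str) -> str:
    return f"reply to: {prompt}"


name, reply = complete_with_fallback(
    "Where is my order?", [("primary", primary), ("secondary", secondary)]
)
print(name, reply)
```

The priority order is just a list, so reacting to a price change or a deprecation means reordering configuration rather than rewriting the calling code.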

Why One Bot Is Not Enough

Using multiple models solves the "right brain for the task" problem. But you also need multiple agents, each with a defined role, to solve the "right specialist for the domain" problem. Understanding how agents work in a multi-agent system is key to understanding why this architecture outperforms single-bot solutions.

Instead of one all-purpose bot trying to handle everything, multi-agent AI architecture deploys autonomous specialized agents that each handle a specific domain of commerce, acting independently within that domain. Think of it as the difference between a general practitioner and a team of specialists. The GP can address most concerns at a surface level. The specialists deliver expert-level care in their domain.

The Core Agents Ecommerce Requires

Product Expert Agent. This agent understands your full catalog at the attribute level. It handles ingredient questions, sizing guidance, product comparisons, and personalized recommendations. Every answer is grounded in your verified product data rather than generated from the model's general training data, and it reads real-world signals to anticipate what shoppers need and gauge purchase intent. When a shopper asks which running shoe has the most arch support in a size 11 wide, the Product Expert Agent queries your catalog data and returns a specific, accurate answer.

Order Management Agent. This agent connects directly to your commerce platform and helpdesk to check order status, process returns, initiate exchanges, update shipping details, and handle subscription lifecycle actions. It pulls live system data in real time, not cached snapshots. When a shopper asks to cancel one item from a three-item order, this agent executes the action rather than just describing it.

Support Agent. This agent handles general inquiries, policy questions, and account issues with brand-voice consistency and tone alignment. It knows when to resolve autonomously and when to escalate to a human agent with full conversation context, so the human picks up where the AI left off, not from scratch.

Vertical Specialists for specific use cases. These are category-specific agents that bring domain intelligence no general-purpose bot can match. The Fit Analyzer for apparel sizing uses body measurement data and brand-specific fit profiles to recommend the right size across different brands. The Skin Analyzer for beauty consultations evaluates skin type, concerns, and current routine to recommend compatible products. The Outfit Builder for fashion styling creates complete looks from your catalog based on occasion, style preference, and budget. These agents exist because commerce verticals have domain knowledge that a general bot simply doesn't possess.

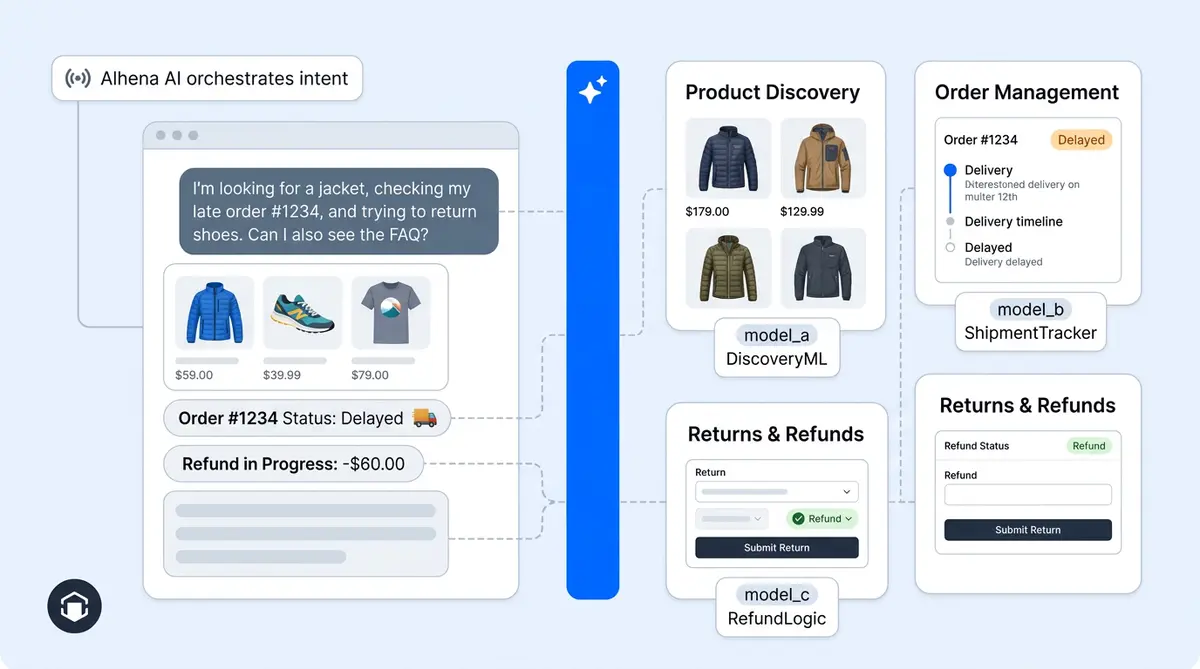

How the Orchestration Layer Works

An intelligent router sits above all agents. It coordinates communication between agents, reads each incoming message for intent, complexity, and domain, then directs it to the right specialist agent in milliseconds.

If a conversation starts with a product question and shifts to an order status inquiry, the orchestrator hands off between intelligent agents mid-conversation, making decisions in real time without the shopper noticing any transition. The unified memory layer ensures full context carries across every handoff.

This is why multi-agent conversations feel seamless while single-bot conversations feel like talking to someone who keeps forgetting what they're good at. The single bot tries to context-switch between domains within one model. The multi-agent system routes to the specialist who already has the right tools loaded.
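The handoff behavior described in this section can be sketched with a shared conversation object that every agent reads from. This is a toy illustration under stated assumptions: `Conversation`, `classify_domain`, `handle`, and the two agent functions are hypothetical names, and the keyword check stands in for a real intent classifier.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Conversation:
    """Shared memory: every agent sees the full history across handoffs."""
    history: list[tuple[str, str]] = field(default_factory=list)


def product_agent(text: str, convo: Conversation) -> str:
    return "product-expert: answering from catalog data"


def order_agent(text: str, convo: Conversation) -> str:
    # The agent can read earlier turns even though another agent handled them.
    prior = len(convo.history)
    return f"order-management: checking status ({prior} prior turns in context)"


AGENTS: dict[str, Callable[[str, Conversation], str]] = {
    "product": product_agent,
    "order": order_agent,
}


def classify_domain(text: str) -> str:
    """Toy intent check; stands in for a lightweight classifier."""
    lowered = text.lower()
    return "order" if "order" in lowered or "return" in lowered else "product"


def handle(text: str, convo: Conversation) -> str:
    """Route one message to its specialist and record the turn."""
    domain = classify_domain(text)
    reply = AGENTS[domain](text, convo)
    convo.history.append((domain, text))
    return reply


convo = Conversation()
print(handle("Which moisturizer suits sensitive skin?", convo))
print(handle("Also, where is my order #4829?", convo))
```

Because the history lives outside any one agent, the second message lands with the order agent while the earlier product turn stays available as context.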

How Multi-Model and Multi-Agent Work Together

This is where the full architecture comes into focus. Multi-model selection and multi-agent orchestration aren't separate features. They're two layers of the same system, and shoppers interacting with your AI should never notice the complexity behind the scenes.

Each specialized agent uses a different model optimized for its task, powering different workflows:

- The Product Expert Agent uses the strongest reasoning model for complex consultations where nuanced attribute matching determines whether a recommendation converts or bounces

- The Order Management Agent uses a fast, efficient model for structured data lookups where speed and accuracy on deterministic queries matter most

- The Skin Analyzer uses a multimodal model for image analysis, processing multimodal data like photos of skin concerns alongside text descriptions to recommend compatible products

- The orchestrator itself uses lightweight classification to route conversations in milliseconds, adding near-zero latency to the experience, even in complex systems with distributed workflows

This combination delivers the best possible answer for every interaction at the lowest possible cost and latency. You're not paying reasoning-model prices for order status lookups. You're not forcing a text-only model to analyze product images. Every agent gets the engine it needs.
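One way to picture the pairing of agents and models is as a small configuration table. The sketch below is illustrative only: the agent keys mirror the agents named above, but the model tier names and the `model_for` helper are assumptions rather than actual identifiers.

```python
# Hypothetical agent-to-model assignments; tier names are placeholders,
# not actual model identifiers.
AGENT_MODELS = {
    "product_expert": {"model": "reasoning-model", "inputs": ["text"]},
    "order_management": {"model": "fast-model", "inputs": ["text"]},
    "skin_analyzer": {"model": "multimodal-model", "inputs": ["text", "image"]},
    "orchestrator": {"model": "lightweight-classifier", "inputs": ["text"]},
}


def model_for(agent: str, has_image: bool = False) -> str:
    """Return the configured model, rejecting inputs the agent cannot take."""
    config = AGENT_MODELS[agent]
    if has_image and "image" not in config["inputs"]:
        raise ValueError(f"{agent} is not configured for image input")
    return config["model"]


print(model_for("order_management"))
print(model_for("skin_analyzer", has_image=True))
```

Keeping the mapping in data rather than code means a model can be swapped for one agent without touching the others.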

The Single-Bot Counterargument

Some will argue that the latest frontier models are capable enough to handle everything. And they're right that frontier models are impressively versatile. But versatility is not the same as optimization.

A single model handling everything produces acceptable results across the board. Specialized agents using the right model for each task produce exceptional results in every domain, and a generalist cannot simulate that specialist-level performance. In ecommerce, where the difference between a good and great product recommendation is a 38% average order value uplift (as Tatcha achieved with specialized product guidance), "acceptable" leaves significant revenue on the table.

Acceptable also carries risk. A single model generating product information from its training data, rather than from your verified catalog, will eventually hallucinate an ingredient, invent a feature, or quote a wrong price. Specialized agents grounded in your actual product data don't guess. They retrieve from your verified sources and verify every response.
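The difference between guessing and grounding can be shown with a minimal lookup that declines when the catalog has no verified value. The catalog records, product IDs, and the `answer_attribute` function below are invented for illustration; a real system would retrieve from the store's product data and pass the result to a model for phrasing.

```python
# Hypothetical catalog records; in practice these come from the store's product data.
CATALOG = {
    "calm-cream": {"name": "Calm Cream", "price": "32.00", "fragrance_free": True},
    "glow-gel": {"name": "Glow Gel", "price": "28.00", "fragrance_free": False},
}


def answer_attribute(product_id: str, attribute: str) -> str:
    """Answer only from catalog fields; decline instead of guessing."""
    product = CATALOG.get(product_id)
    if product is None or attribute not in product:
        return "I don't have verified data for that, so I can't confirm it."
    return f"{product['name']}: {attribute} = {product[attribute]}"


print(answer_attribute("calm-cream", "price"))
print(answer_attribute("calm-cream", "spf"))
```

The second call asks about an attribute the catalog does not hold, so the function declines rather than inventing a value.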

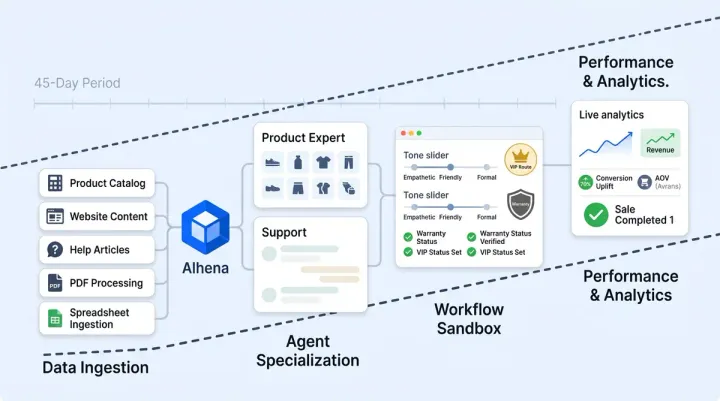

What This Architecture Looks Like in Practice

Alhena AI is built on multi-model, multi-agent architecture from the ground up for ecommerce. The platform provides automatic model selection across GPT-5, GPT-4.1, Claude Opus 4, Claude Sonnet 4, Gemini 2.5 Pro, and Gemini 2.5 Flash, choosing the optimal model for every interaction based on complexity and task type. These models span different providers and capabilities.

Specialized agents, including the Product Expert Agent, Order Management Agent, Fit Analyzer, Skin Analyzer, Outfit Builder, and Support Agent, each operate as purpose-built specialists with deep domain knowledge. They hand off seamlessly through the intelligent orchestration layer that plans and routes every conversation.

The shopper experiences one conversation, never knowing that multi-agent routing connects them to the right agent for each question. Behind it, multiple models and multiple agents coordinate to deliver accurate shopping guidance, real-time order actions, and brand-aligned support. This works across web chat, email, Instagram DMs, WhatsApp, and voice, with the same specialized routing and domain intelligence on every channel.

This is why Alhena delivers hallucination-free responses, 3x conversion rates, 38% AOV uplift, and 86% ticket deflection while single-bot platforms struggle to handle anything beyond basic FAQ deflection. The architecture isn't a technical detail. It's the reason the results are different.

How Multi-Agent AI Systems Scale for Ecommerce

Distributed multi-agent systems scale differently than single-bot architectures. When AI agents act autonomously within their domain, each agent handles its own workload without bottlenecking the others. The Product Expert Agent can process complex catalog queries while the Order Management Agent simultaneously executes real-time order modifications. Agent communication flows through the orchestration layer, which manages coordination between agents without creating a single point of failure.

This is why agentic AI built on multi-agent architecture outperforms a monolithic LLM for ecommerce at scale. Agents that collaborate on complex tasks and read customer intent across real-world scenarios deliver a level of specialization that no single model can replicate. The scalability of this framework comes from its distributed design: add a new agent for a new use case without rebuilding the existing system or disrupting active workflows. Each agent connects to the right LLMs for its task, and the multi-model layer ensures you always use the most cost-efficient, capable option.
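The "add an agent without rebuilding" property can be sketched with a simple registry. This is an assumption about how such a system might be structured, not a description of any specific platform: `REGISTRY`, `register`, `dispatch`, and the agent functions are hypothetical.

```python
from typing import Callable

AgentFn = Callable[[str], str]
REGISTRY: dict[str, AgentFn] = {}


def register(domain: str) -> Callable[[AgentFn], AgentFn]:
    """Decorator that adds an agent under a domain name."""
    def wrap(fn: AgentFn) -> AgentFn:
        REGISTRY[domain] = fn
        return fn
    return wrap


@register("order")
def order_agent(text: str) -> str:
    return "order-management handled: " + text


# Adding a new use case later is one more registration;
# existing agents and the dispatch function are untouched.
@register("fit")
def fit_agent(text: str) -> str:
    return "fit-analyzer handled: " + text


def dispatch(domain: str, text: str) -> str:
    """Look up the agent for a domain and run it."""
    return REGISTRY[domain](text)


print(dispatch("fit", "What size should I get?"))
```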

The Single-Bot Era Is Over

The single-bot approach served its purpose as a starting point. It proved that AI could handle customer conversations at scale. But ecommerce in 2026 demands AI that thinks like a team, not like one overworked generalist, whether it serves enterprises, support teams, or commerce teams.

Multi-model selection ensures every response uses the best available intelligence for its specific task. Multi-agent orchestration ensures every commerce domain gets a specialist with the right tools and data, with each agent making autonomous decisions within its domain. Together, they turn AI from a simple chatbot into an autonomous commerce engine and deliver performance that no single model or single bot can match.

Ready to see what multi-model, multi-agent AI looks like on your store? Book a demo with Alhena AI or start free with 25 conversations. You can also use the ROI calculator to estimate your revenue impact before committing.

Frequently Asked Questions

How does automatic model selection across 7+ LLMs improve ecommerce AI accuracy?

Alhena AI evaluates each incoming conversation for complexity, intent, and required capabilities, then routes it to the optimal model from cloud providers including OpenAI, Anthropic, and Google. Simple order lookups go to fast, cost-efficient models. Complex product consultations go to the strongest reasoning models. This means every response uses the best available intelligence for its specific task, reducing hallucinations and improving recommendation quality.

What specialized agents does Alhena AI use for ecommerce and how do they hand off mid-conversation?

Alhena AI deploys a Product Expert Agent for catalog-grounded recommendations, an Order Management Agent for real-time order actions, vertical specialists like the Fit Analyzer and Skin Analyzer, and a Support Agent for general inquiries. An intelligent orchestration layer reads each message for intent and domain, then routes to the right agent in milliseconds. If a shopper shifts from a product question to an order inquiry, the handoff happens invisibly, with full context preserved by Alhena AI's unified memory layer.

Why is vendor-agnostic multi-model architecture more resilient than single-LLM ecommerce AI?

Brands locked into one LLM provider face pricing changes, capability shifts, model deprecation, and outages from that single source. Alhena AI's multi-model architecture routes across GPT-5, Claude Opus 4, Gemini 2.5 Pro, and other models simultaneously. If one model's performance degrades or costs increase, the platform shifts to the best alternative without any disruption to the shopper experience, making it true infrastructure resilience rather than a technical luxury.

How does multi-agent orchestration differ from a single AI chatbot for ecommerce customer service?

A single chatbot forces one model to context-switch between product expertise, order management, returns processing, and general support within the same conversation. Multi-agent orchestration in Alhena AI assigns each domain to a dedicated specialist agent with its own tools, data connections, and guardrails. The Product Expert Agent queries verified catalog data. The Order Management Agent executes real-time actions against your OMS. Each agent excels in its domain rather than performing acceptably across all of them.

What measurable results does multi-model multi-agent AI deliver compared to single-bot ecommerce platforms?

Alhena AI's multi-model, multi-agent architecture delivers 3x conversion rates, 38% average order value uplift, and 86% ticket deflection for ecommerce brands. These results come from specialized agents using the optimal model for each task: the strongest reasoning model for product consultations, fast models for order lookups, and multimodal models for image-based queries. Single-bot platforms using one model for everything produce acceptable but not exceptional results, leaving significant revenue on the table.