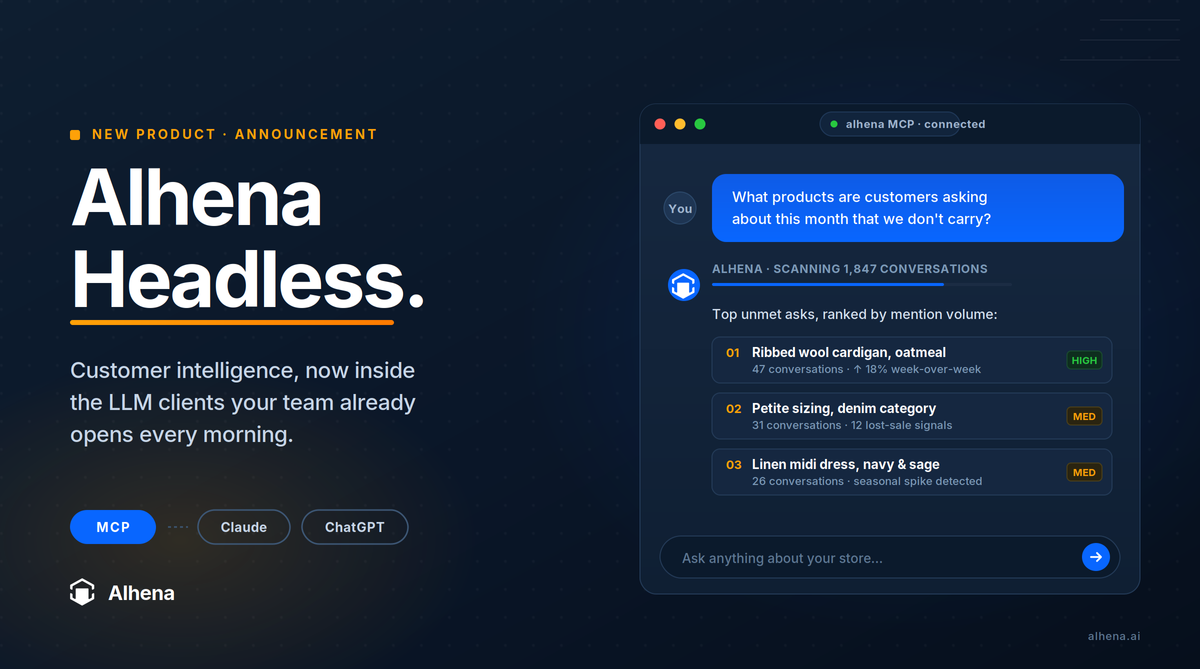

Today we are announcing Alhena Headless.

E-commerce leaders who use Alhena can now connect their own Claude or ChatGPT account directly to their Alhena workspace and ask anything they want about their store, their customers, and their operation, all in plain English.

Opening a second front door

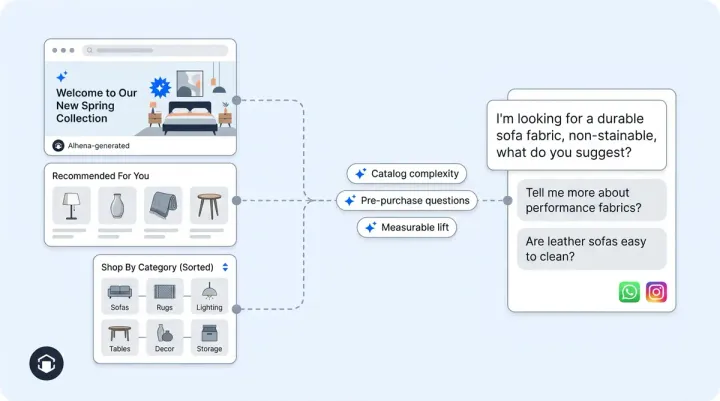

For the last few years, Alhena has powered the AI concierge experience for some of the most discerning e-commerce brands in the world. Every conversation a shopper has with your concierge is a signal. Why they are buying. What they are asking about. What they want and cannot find. Which product they almost bought before something stopped them. Until today, your team accessed all of that intelligence through our dashboard.

Today we are opening a second front door. Connect your Claude or ChatGPT account to Alhena and ask anything about your store through conversation. The same data that lives in our dashboard, available through the LLM client your team already opens every morning.

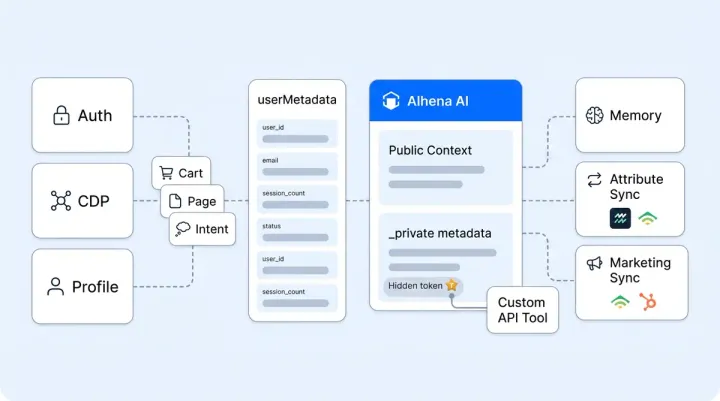

The technical layer making this possible is the Model Context Protocol (MCP), an open standard for connecting language models to external tools and data sources. We exposed Alhena as an MCP server. Compatible LLM clients can now call directly into Alhena's tools, knowledge, and analytics, with the same permissions and audit trail your team is already operating under.
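For readers curious what "calling into tools" looks like on the wire, here is a minimal sketch. MCP messages are JSON-RPC 2.0 objects, and the protocol defines `tools/list` to discover tools and `tools/call` to invoke one. The tool name `search_conversations` and its arguments are hypothetical placeholders for illustration, not Alhena's actual tool catalog, and your LLM client builds these messages for you.

```python
import json
from itertools import count

# Simple incrementing request ids; JSON-RPC needs an id to match replies.
_ids = count(1)


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object, the envelope MCP uses."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def list_tools_request():
    """Ask the server which tools it exposes."""
    return jsonrpc_request("tools/list")


def call_tool_request(name, arguments):
    """Invoke one tool by name with a dictionary of arguments."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    print(json.dumps(list_tools_request(), indent=2))
    # "search_conversations" is a hypothetical tool name, assumed for this sketch.
    print(json.dumps(
        call_tool_request("search_conversations",
                          {"query": "wide-leg trousers", "days": 30}),
        indent=2,
    ))
```

In practice nobody on your team writes this by hand. The point is that a plain-English question ends up as a small, structured, auditable request against the server.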

Five things your team can do today

Here are concrete examples of work that used to take a week of analyst time and now takes a minute of conversation.

1. Surface hidden demand for products you do not yet stock. Ask "what products are customers asking about this month that we do not carry." Your LLM scans the past month of conversations, clusters the unmet asks, and gives you a ranked list with example transcripts. Real demand signal, straight from your shoppers, ready for your next merchandising review.

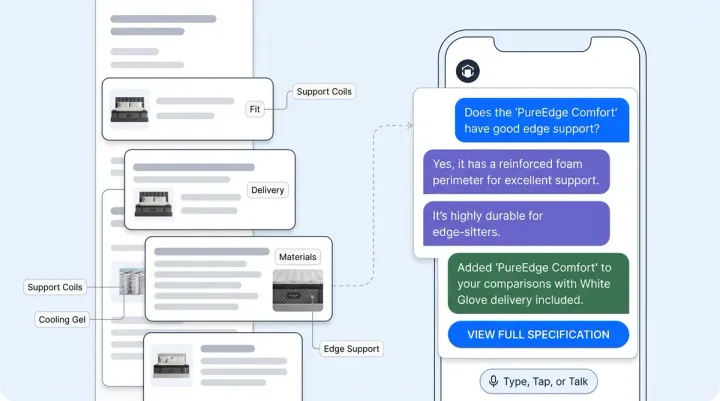

2. Decide what to stock more of and what to drop. Ask "based on conversation volume and conversion patterns, which SKUs should I increase inventory on for next quarter, and which are losing momentum." Pre-purchase question volume is one of the cleanest leading indicators of demand. Your LLM correlates it with what actually converted, and gives you a buy plan you can take to your supplier conversation that afternoon.

3. Understand why a sales pattern moved. Ask "why were our weekend sales higher than usual." Your LLM reads the conversations from those days, identifies the category that spiked, traces the questions back to a TikTok mention or a newsletter feature, and shows you which products rode the wave and which did not. The kind of analysis that used to require pulling four reports and starting a Slack thread with the data team.

4. Diagnose conversion drop-off on a specific product. Ask "what is making people hesitate on the wide-leg trousers." Your LLM pulls every conversation that mentioned the product, surfaces the common objections (fit, fabric weight, shipping windows, return policy), and tells you whether the bottleneck is the product page, the size guide, or the price. Now you know what to fix.

5. Audit your CX health and brand voice. Ask "which guidelines fired most last week, where did they conflict, and is our brand voice holding up across the last 200 tickets." Your LLM reads your configuration and your recent conversations, then reasons through the tension. Operational hygiene without opening a single dashboard tab.
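To make the first example more tangible, here is a rough sketch of what a "ranked list of unmet asks" could look like as a computation. It is an illustration, not Alhena's implementation: the record shape (`id`, `asked_about`) and the sample data are assumptions, and it swaps real clustering for simple exact matching on lowercased product names.

```python
from collections import Counter


def rank_unmet_asks(conversations, catalog, top_n=5):
    """Rank products shoppers asked about that are not in the catalog.

    Returns (product, conversation_count, example_conversation_id) tuples,
    most frequently requested first.
    """
    stocked = {name.lower() for name in catalog}
    counts = Counter()
    examples = {}
    for convo in conversations:
        # Count each product at most once per conversation.
        for product in {p.lower() for p in convo["asked_about"]}:
            if product not in stocked:
                counts[product] += 1
                # Keep the first conversation seen as an example transcript.
                examples.setdefault(product, convo["id"])
    return [(p, n, examples[p]) for p, n in counts.most_common(top_n)]


# Hypothetical sample data, assumed for this sketch.
conversations = [
    {"id": "c1", "asked_about": ["Linen Blazer", "wide-leg trousers"]},
    {"id": "c2", "asked_about": ["linen blazer"]},
    {"id": "c3", "asked_about": ["Silk Scarf", "linen blazer"]},
    {"id": "c4", "asked_about": ["silk scarf"]},
    {"id": "c5", "asked_about": ["rain boots"]},
]
catalog = ["Wide-Leg Trousers"]

ranked = rank_unmet_asks(conversations, catalog)
```

With this sample data, `ranked` lists the linen blazer first (three conversations), then the silk scarf, then rain boots, each paired with an example conversation id. An LLM working over real transcripts would group paraphrases and misspellings too, which exact matching cannot do.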

Why this matters

For your growth. Every shopper conversation is demand intelligence waiting to be read. Until now, that signal lived in dashboards your operators were too busy to mine. With Alhena Headless, the merchandising question, the inventory question, the pricing question, the campaign attribution question all become conversational. Decisions get made on the real voice of your customer.

For your operation. You stop being limited by the questions your dashboard was designed to answer. The follow-up question, the lateral pivot, the "wait, can you also check" all become natural. The afternoon your team used to spend pulling reports becomes a five-minute conversation.

For your platform choice. Exposing a platform through MCP is a credibility test. To work this way, the underlying platform has to be coherent. The data layer has to be clean. The knowledge representation has to be queryable. The agents and guidelines have to be first-class objects an LLM can reason over. Few platforms in the AI customer experience space pass this test today. Alhena does, and we did the work over the last few years specifically so we could.

How to get started

- Log into your Alhena workspace.

- Generate an MCP server URL with the scopes appropriate for your team and role.

- Add the URL to your Claude or ChatGPT workspace following their MCP connector setup.

- Open your LLM client and start asking questions.

Most customers are up and running in under ten minutes. Your customer success contact at Alhena can walk you through it on a call if helpful. Book a walkthrough here.