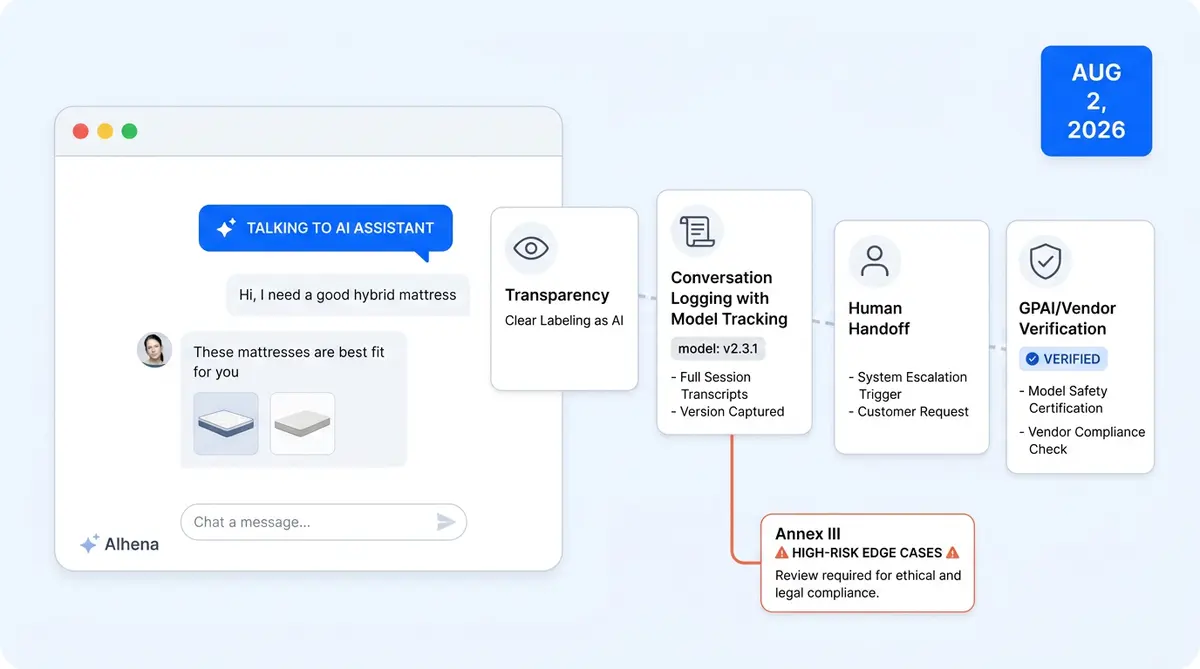

The August 2, 2026 Deadline: What's at Stake

The EU AI Act's broadest enforcement wave hits on August 2, 2026. That's the date when Article 50 transparency obligations for limited-risk AI systems take full effect, along with high-risk system rules and European Commission and AI Office enforcement powers.

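Every ecommerce chatbot, shopping assistant, and AI-powered support tool serving EU customers falls under this regulation. The penalties aren't abstract: up to €35 million or 7% of global annual turnover for prohibited practices, €15 million or 3% for non-compliance with most other obligations (including high-risk requirements and the Article 50 transparency duties), and €7.5 million or 1% for providing misleading information to regulators. In each tier the cap is whichever figure is higher, except for SMEs, where the lower figure applies.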
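To make those tiers concrete, here's a minimal sketch of the exposure arithmetic. It assumes the Article 99 rule as we understand it: the cap is the higher of the fixed amount and the turnover percentage, and the lower of the two for SMEs. The tier labels, the `max_fine_eur` name, and the `is_sme` flag are illustrative, and none of this is legal advice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    fixed_cap_eur: int      # fixed ceiling in euros
    turnover_percent: int   # percentage of global annual turnover


# Figures as quoted in this article; the keys are illustrative labels.
# As we read Article 99(4), the middle tier also covers Article 50 transparency duties.
TIERS = {
    "prohibited_practices": PenaltyTier(35_000_000, 7),
    "high_risk_non_compliance": PenaltyTier(15_000_000, 3),
    "misleading_information": PenaltyTier(7_500_000, 1),
}


def max_fine_eur(tier: str, global_turnover_eur: int, is_sme: bool = False) -> int:
    """Upper bound of the fine for one tier, in whole euros."""
    t = TIERS[tier]
    turnover_based = global_turnover_eur * t.turnover_percent // 100
    # Assumption: higher of the two figures applies, lower of the two for SMEs.
    pick = min if is_sme else max
    return pick(t.fixed_cap_eur, turnover_based)
```

For a retailer with €2 billion in global turnover, the 3% figure (€60 million) exceeds the €15 million floor, so the percentage is what sets the ceiling.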

Here's the uncomfortable part. While 83% of ecommerce companies now use AI chatbots (DemandSage, 2026), only 26.2% of managers have started concrete compliance activities (Deloitte). Over half of organizations lack even a basic inventory of their AI systems in production.

If your ecommerce brand uses AI in any customer-facing capacity, you have roughly 100 days to get compliant. This guide breaks down the compliance requirements and exactly what you need to do.

Where Ecommerce AI Falls in the Risk Classification

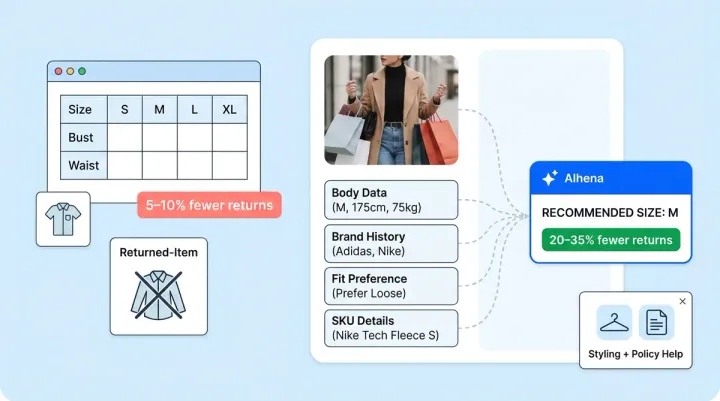

The EU AI Act sorts AI systems into four risk tiers: prohibited, high-risk, limited-risk, and minimal-risk. Most ecommerce AI lands squarely in the limited-risk category under Article 50, which carries transparency requirements but not the full compliance burden of high-risk systems.

Limited Risk (Article 50): Most Ecommerce AI

- Customer service chatbots must disclose their AI nature before the conversation starts

- Shopping assistants and virtual product advisors must inform users at first interaction

- AI-generated product descriptions and images must be labeled as AI-generated content

- Recommendation engines and dynamic pricing generally fall into minimal or limited risk

When Ecommerce AI Crosses Into High Risk

Some edge cases push ecommerce AI into Annex III high-risk territory, which triggers a much heavier compliance burden:

- Emotion recognition: Voice analysis or facial expression tracking to gauge customer sentiment qualifies as high-risk.

- Biometric categorization: Categorizing customers by race, religion, sexual orientation, or political opinions is either high-risk or prohibited.

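- AI creditworthiness scoring: Using AI to score creditworthiness, for example to decide buy-now-pay-later eligibility, in ways that affect customer access to services falls under Annex III. Annex III carves out systems used solely to detect financial fraud, so check which side of that line your fraud tooling sits on.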

An appliedAI study of 106 enterprise AI systems found that 40% had unclear risk classifications. Don't assume your chatbot is "just limited risk" without auditing its full capabilities.
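A first-pass triage of your AI inventory can be sketched in a few lines. The capability tags and the `triage` helper below are hypothetical, and the tier mapping simply mirrors the categories described above. Treat it as a way to decide what needs legal review, not as a classification authority.

```python
from enum import IntEnum
from typing import Iterable, List, Tuple


class RiskTier(IntEnum):
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    PROHIBITED = 3


# Hypothetical capability tags mapped to the tiers discussed in this article.
# Recommendations and pricing are placed in LIMITED to err on the cautious side.
CAPABILITY_TIERS = {
    "recommendations": RiskTier.LIMITED,
    "dynamic_pricing": RiskTier.LIMITED,
    "customer_chat": RiskTier.LIMITED,
    "shopping_assistant": RiskTier.LIMITED,
    "generated_content": RiskTier.LIMITED,
    "emotion_recognition": RiskTier.HIGH,
    "biometric_categorization": RiskTier.HIGH,  # may be prohibited outright
    "credit_scoring": RiskTier.HIGH,
}

# Capabilities that always need a human (legal) review, whatever the tier.
ALWAYS_REVIEW = {"biometric_categorization"}


def triage(capabilities: Iterable[str]) -> Tuple[RiskTier, List[str]]:
    """Return the provisional tier and the capabilities needing manual review."""
    caps = list(capabilities)
    # Unknown capabilities are provisionally treated as HIGH: classify up, not down.
    tiers = [CAPABILITY_TIERS.get(c, RiskTier.HIGH) for c in caps]
    tier = max(tiers, default=RiskTier.MINIMAL)
    review = [c for c in caps if c not in CAPABILITY_TIERS or c in ALWAYS_REVIEW]
    return tier, review
```

A system takes the tier of its riskiest capability, so a support chatbot that also runs voice sentiment analysis comes out as high-risk rather than limited-risk.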

The Four Compliance Requirements That Matter for Ecommerce

Ecommerce enterprises face four practical compliance areas. Here's what each one means.

1. Transparency: Customers Must Know They're Talking to AI

Article 50 requires that customers are informed "in a clear and distinguishable manner at the latest at the time of the first interaction" that they're interacting with an AI system. A small footnote buried in your terms and conditions won't cut it.

For ecommerce, this means a visible disclosure banner or message before any chatbot conversation begins, labels on AI-generated product descriptions and lifestyle images, and accessibility-compliant presentation of all disclosures. The good news: our Transparency Playbook covers the implementation details, including A/B testing different disclosure formats to protect conversion rates.
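Here's a minimal sketch of the "disclosure before conversation" pattern, modeled as session logic rather than UI. The `ChatSession` class and its method names are hypothetical. Requiring an explicit acknowledgment is a design choice that goes beyond simply showing the notice, and a real widget would enforce the same rule on the server side too.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional, Tuple


@dataclass
class ChatSession:
    disclosure_text: str
    acknowledged_at: Optional[datetime] = None
    transcript: List[Tuple[str, str]] = field(default_factory=list)

    def open(self) -> str:
        """Show the AI disclosure as the very first item in the conversation."""
        self.transcript.append(("system", self.disclosure_text))
        return self.disclosure_text

    def acknowledge(self) -> datetime:
        """Record when the shopper acknowledged the disclosure (first time only)."""
        if self.acknowledged_at is None:
            self.acknowledged_at = datetime.now(timezone.utc)
        return self.acknowledged_at

    def send(self, message: str) -> None:
        """Accept a shopper message only after the disclosure was acknowledged."""
        if self.acknowledged_at is None:
            raise PermissionError("AI disclosure has not been acknowledged yet")
        self.transcript.append(("user", message))
```

Because the disclosure is the first transcript entry and the acknowledgment carries a timestamp, the same record doubles as evidence that the notice appeared before the first interaction.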

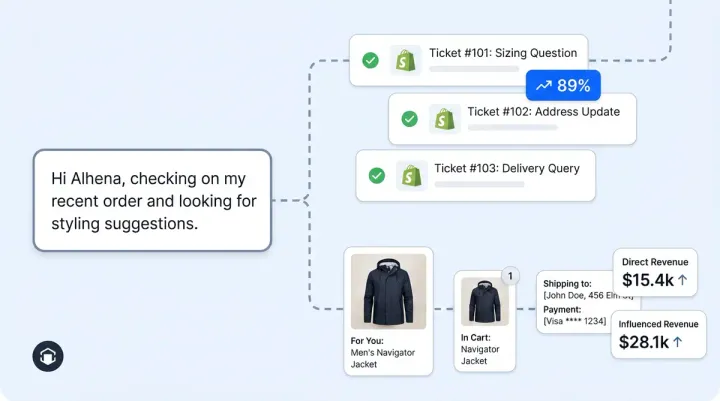

2. Documentation and Logging

The AI Act requires automatic recording of events over a system's lifetime. For ecommerce AI, that translates to conversation logs retained for at least six months, version tracking (which build of GPT-4 or Claude handled each interaction), and tamper-resistant logging that goes beyond standard application logs.

Balancing the AI Act's six-month minimum retention with GDPR's data minimization principle takes careful planning. Our GDPR Architecture post covers the technical approach to managing this tension across EU and US data regions.
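One common way to make logs tamper-evident is hash chaining, where each entry stores the hash of the one before it. The sketch below is an illustration under our own assumptions, not a prescribed format: the field names, the 183-day stand-in for "six months", and the helper functions are all hypothetical.

```python
import hashlib
import json
from datetime import datetime, timedelta, timezone

MIN_RETENTION = timedelta(days=183)  # assumption: "six months" approximated in days
GENESIS_HASH = "0" * 64


def _digest(entry: dict) -> str:
    """SHA-256 over every field except the stored hash itself."""
    payload = {k: v for k, v in entry.items() if k != "hash"}
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, conversation_id, model_version, role, text, now=None):
    """Append a log entry that is chained to the previous entry's hash."""
    now = now or datetime.now(timezone.utc)
    entry = {
        "conversation_id": conversation_id,
        "model_version": model_version,  # which model build handled this turn
        "role": role,
        "text": text,
        "timestamp": now.isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry["hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify_chain(log) -> bool:
    """Return False if any entry was altered, removed, or reordered."""
    prev = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev or entry["hash"] != _digest(entry):
            return False
        prev = entry["hash"]
    return True


def may_delete(entry, now) -> bool:
    """True once the entry is older than the minimum retention window."""
    return now - datetime.fromisoformat(entry["timestamp"]) >= MIN_RETENTION
```

Note the tension with redaction: rewriting a message body later would break the chain. One option is to chain a hash of the text instead of the text itself, so the body can be wiped while the chain still verifies.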

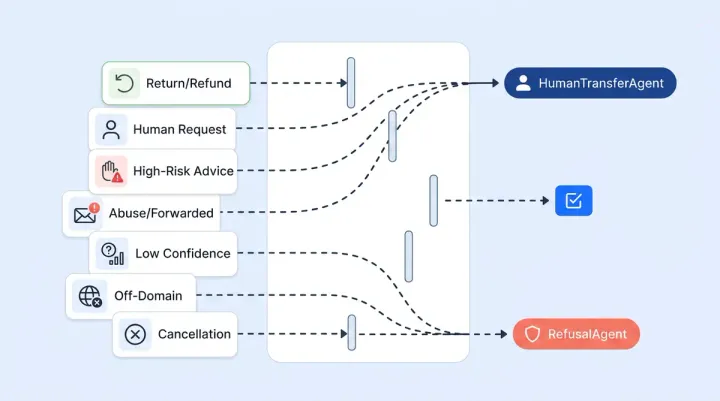

3. Human Oversight and Escalation

For high-risk AI, Article 14 mandates that humans can "effectively oversee" the system. Even for limited-risk ecommerce chatbots, human escalation capability is both a best practice and a customer expectation.

In practice: clear escalation triggers (order disputes, safety concerns, complex complaints), documented override mechanisms, and records of each escalation event including the AI's output and the human's decision. The oversight must be real, not a checkbox exercise.
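As a rough sketch, escalation triggers and the record kept for each event might look like the following. The keyword lists, trigger names, and `EscalationRecord` fields are placeholders we made up for illustration. Production systems usually rely on intent classification rather than substring matching.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

# Hypothetical trigger keywords; tune these to your own support categories.
ESCALATION_TRIGGERS = {
    "order_dispute": ("dispute", "chargeback", "never arrived"),
    "safety_concern": ("injury", "unsafe", "recall"),
    "complex_complaint": ("complaint", "lawyer", "legal action"),
}


def match_trigger(message: str) -> Optional[str]:
    """Return the name of the first trigger whose keyword appears in the message."""
    lowered = message.lower()
    for name, keywords in ESCALATION_TRIGGERS.items():
        if any(keyword in lowered for keyword in keywords):
            return name
    return None


@dataclass
class EscalationRecord:
    conversation_id: str
    trigger: str
    ai_output: str                        # what the AI said or was about to say
    human_decision: Optional[str] = None  # filled in by the agent who takes over
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def escalate_if_needed(conversation_id: str, message: str, ai_output: str,
                       records: List[EscalationRecord]) -> Optional[EscalationRecord]:
    """Create and store an escalation record when a trigger matches."""
    trigger = match_trigger(message)
    if trigger is None:
        return None
    record = EscalationRecord(conversation_id, trigger, ai_output)
    records.append(record)
    return record
```

Each record holds both the AI's output and the human's eventual decision, so the oversight trail shows what the system proposed and what a person actually did about it.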

4. GPAI Model Provider Obligations

If your ecommerce AI runs on general-purpose AI (GPAI) models like GPT-4, Claude, or Gemini, the GPAI model provider has separate requirements under the AI Act: technical documentation, training data summaries, and Copyright Directive compliance. For models with systemic risk (presumed when training compute exceeds 10^25 FLOPs), providers must also conduct adversarial testing and report serious incidents.

As a deployer, you can't outsource your compliance to your AI vendor. As AI regulation consultants have warned, "organizations cannot rely solely on vendor assurances." You need to verify your GPAI provider meets their Article 53 obligations and ensure your own deployment handles transparency and oversight independently.

Your 8-Step EU AI Act Compliance Checklist

Print this, assign owners, set deadlines.

- Audit every AI system in your ecommerce stack. Chatbots, recommendation engines, search, dynamic pricing, content generation, fraud detection, customer analytics. If it uses a model, it goes on the list. Over half of organizations lack this basic inventory (LegalNodes).

- Classify each system's risk level. Map every tool against the four risk tiers. Flag anything using emotion recognition, biometric data, or AI decisions that affect customer access to services. When in doubt, classify up, not down.

- Implement AI disclosure on every customer-facing AI touchpoint. Add clear, branded notifications to chatbots and assistants before conversations begin. Don't bury it. Make it visible, accessible, and timestamped. See our Transparency Playbook for implementation patterns.

- Review conversation logging and model versioning. Ensure logs are retained for at least six months with tamper-resistant storage. Track which model version handled each interaction. Document data sources feeding your AI systems.

- Set up human oversight and escalation paths. Every AI interaction needs a clear route to a human agent. Document escalation triggers, override mechanisms, and keep records of all human interventions. Test your escalation paths regularly.

- Document training data sources. Record what data your AI systems were trained or fine-tuned on, where it came from, and how it was processed. This applies to custom models and fine-tuned deployments.

- Review vendor compliance. Request AI Act compliance documentation from each AI vendor and partner in your stack. Verify that GPAI model providers (OpenAI, Anthropic, Google) meet their Article 53 obligations. Don't assume they've handled everything for you.

- Configure data retention and redaction policies. Align AI log retention with both the AI Act's six-month minimum and GDPR data minimization. Build a reconciliation strategy for potentially conflicting requirements. Implement automatic redaction of sensitive data from conversation logs.

EuroCommerce estimates that basic limited-risk compliance costs €6,000 to €7,000 per AI system for smaller retailers (SoftwareSeni). That's a fraction of the penalty exposure, but it's not zero. Start now to spread the cost across your remaining timeline.

How Alhena AI Is Already Built for EU AI Act Compliance

We built compliance into Alhena's architecture from day one. Here's how it maps to the four obligation areas above.

Transparency: Privacy Consent Gate

Alhena's Shopping Assistant includes a Privacy Consent Gate that physically blocks the chat input until the shopper clicks through your AI disclosure. A single toggle in the dashboard activates it. You write the disclosure text, set the button label, and the widget handles the rest: when a shopper opens chat, the input box is disabled and the disclosure renders above a consent button. Clicking it writes a timestamped consent row to the database, keyed to the shopper's fingerprint and your company. The write is one-way, so consent can't be silently reset. The disclosure text auto-translates into the shopper's language across EU markets. A separate Attachment Consent prompt intercepts file uploads with its own accept/deny flow. If the shopper declines, no file data leaves the browser, supporting GDPR data minimization by default.

The Guideline Studio lets you test Article 50 disclosure wording against real conversation flows before your deadline, then A/B test variants with real shoppers and measure conversion impact through source-level attribution.

Documentation: Conversation Logging and Model Tracking

Every conversation through Alhena is logged with full version tracking and audit trails. GDPR Automatic Redaction runs as a scheduled background task that walks tickets older than your configured retention window (minimum 30 days) and rewrites each message body to a redaction notice. The structural record, including ticket IDs, timestamps, and tags, stays intact for analytics, but PII-carrying text is wiped. The search index updates in sync, so redacted content can't leak through the agent-assist search UI. Processing is batched and idempotent: it handles 1,000 tickets at a time and never re-touches already-redacted data.
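The general shape of a batched, idempotent redaction pass can be sketched as follows. This is not Alhena's implementation. It's an illustration of the pattern described above, with a hypothetical in-memory ticket structure standing in for a real database and search index.

```python
from datetime import datetime, timedelta, timezone

REDACTION_NOTICE = "[redacted under retention policy]"  # placeholder wording
MIN_RETENTION_DAYS = 30  # minimum window mentioned above
BATCH_SIZE = 1000        # tickets handled per pass


def redact_batch(tickets, retention_days, now=None, batch_size=BATCH_SIZE) -> int:
    """Redact message bodies of tickets older than the retention window.

    Returns the number of tickets redacted in this pass. Tickets already
    marked as redacted are skipped, so re-running the job is harmless.
    """
    if retention_days < MIN_RETENTION_DAYS:
        raise ValueError("retention window is below the configured minimum")
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    redacted = 0
    for ticket in tickets:
        if redacted >= batch_size:
            break
        if ticket["redacted"] or ticket["created_at"] >= cutoff:
            continue
        # Wipe the text but keep the structural record (id, timestamps, tags).
        ticket["messages"] = [REDACTION_NOTICE for _ in ticket["messages"]]
        ticket["redacted"] = True
        redacted += 1
    return redacted
```

Running the job twice over the same data changes nothing the second time, which is what makes it safe to schedule and retry.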

Our multi-region GDPR architecture keeps EU customer data in EU data centers, with independent production environments per region and edge servers handling cross-region routing.

Human Oversight: Smart Flagging and Escalation

Alhena's Smart Flagging system and Hallucination Detector provide meaningful oversight, not just a token escalation button. The Hallucination Detector runs a multi-step pipeline on every response: it extracts each factual claim, validates it against your approved knowledge base and any tool-call results, then scores a faithfulness percentage (validated facts divided by total facts). A separate entity-validation pass catches hallucinated URLs, emails, and product names using regex for deterministic entities and LLM-based semantic matching for named ones. You set a per-brand faithfulness threshold via a dashboard slider (default: 50%). Anything below it gets flagged automatically.
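The scoring step reduces to simple arithmetic, sketched here under stated assumptions. Claim extraction and validation are taken as already done and passed in as a list of booleans. The function names, the URL regex, and the handling of responses with no factual claims are our own illustrative choices, not Alhena's code.

```python
import re
from typing import Iterable, List, Set

URL_PATTERN = re.compile(r"https?://[^\s)>\]]+")


def faithfulness(validations: List[bool]) -> float:
    """Validated facts divided by total facts, as a value between 0 and 1."""
    if not validations:
        return 1.0  # assumption: a response with no factual claims is not penalized
    return sum(validations) / len(validations)


def should_flag(validations: List[bool], threshold: float = 0.5) -> bool:
    """Flag the response when its faithfulness falls below the threshold."""
    return faithfulness(validations) < threshold


def unapproved_urls(response: str, approved: Iterable[str]) -> List[str]:
    """Deterministic entity check: URLs in the response that are not approved."""
    allowed: Set[str] = set(approved)
    found = [url.rstrip(".,") for url in URL_PATTERN.findall(response)]
    return [url for url in found if url not in allowed]
```

With a 50% threshold, a response with two of four claims validated scores exactly 0.5 and passes, while one of three scores about 0.33 and is flagged.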

The Agent Assist module gives human agents full context on escalation. Smart Flagging surfaces flagged conversations with an orange icon in the dashboard. QA reviewers filter to flagged responses only, expand each flag to see exactly which statements weren't grounded in the knowledge base, and provide thumbs-up/thumbs-down feedback that trains the system. Every detection result persists to the audit log with full context: user query, response, extracted facts, validations, reasoning, and timestamps. The GuardrailAgent classifies each response before it reaches the shopper, catching jailbreak attempts, off-topic responses, and policy violations. Each brand gets a custom guardrail prompt for rules like 'never quote prices' or 'always escalate refund disputes.'

Security Foundation

Alhena holds SOC 2 Type 2 certification, providing the security baseline regulators expect. Combined with GDPR multi-region data architecture, configurable retention policies, and the full audit trail from Smart Flagging, the platform gives your compliance team forensic-level evidence across all four obligation areas.

Three Myths That Will Get Ecommerce Teams in Trouble

"Our chatbot vendor handles compliance for us." Wrong. The EU AI Act places obligations on both providers and deployers. You, the ecommerce brand deploying the chatbot, bear accountability for transparency disclosures, human oversight, and proper use. Buying from an established vendor is not a compliance strategy.

"The August 2026 deadline will probably be extended." The proposed Digital Omnibus package could extend high-risk obligations to December 2027. But Article 50 transparency obligations for limited-risk systems like chatbots are not subject to that extension. August 2, 2026 stands firm for transparency requirements (OneTrust).

"This only applies to EU companies." The AI Act applies to any company whose AI systems are placed on the EU market or whose AI outputs are used in the EU. If you sell to EU customers, you're in scope, regardless of where your headquarters sit. The extraterritorial reach mirrors GDPR. EU member states are building national enforcement bodies, and some have already designated their market surveillance authorities.

Start Now: The Compliance Window Is Closing

EY's global survey found that non-compliance with AI regulations and new rules is now the most pressing AI risk cited by C-suite leaders. And with 95% of customer service interactions projected to be AI-powered by 2026 (Master of Code), compliance isn't a niche concern. It's an operational requirement at scale.

The smartest ecommerce brands are reframing AI Act compliance as a trust signal for shoppers, not an innovation blocker. Customers respond well to transparency: 74% of consumers prefer brands that proactively disclose AI interactions. Building trust through disclosure in your customer experience isn't just a legal box to check. It's a conversion opportunity.

For more detail, read our 47-Point AI Brand Safety Checklist and the AI Chatbot QA guide for ongoing governance practices.

Ready to make your ecommerce AI EU-compliant before August 2026? Book a demo with Alhena AI to see how the Privacy Consent Gate, GDPR Automatic Redaction, and Smart Flagging work in your store. Or start free with 25 conversations and test compliance features yourself.

Frequently Asked Questions

Does the EU AI Act apply to ecommerce businesses outside Europe?

Yes. The EU AI Act applies to any company that places AI on the EU market or whose AI outputs are used in the EU, regardless of headquarters location. If your ecommerce store serves EU customers through chatbots, recommendation engines, or AI-generated content, you’re in scope. The extraterritorial reach works the same way as GDPR.

What risk level are ecommerce chatbots under the EU AI Act?

Most ecommerce chatbots fall under the “limited risk” category (Article 50), which requires transparency disclosures but not full high-risk compliance. However, chatbots that use emotion recognition, biometric categorization, or make decisions affecting customer access to financial services can cross into high-risk territory under Annex III. Audit your chatbot’s full capabilities to classify correctly.

What are the penalties for non-compliance with the EU AI Act?

Penalties scale by violation type: up to €35 million or 7% of global annual turnover for prohibited AI practices, €15 million or 3% for non-compliance with most other obligations (including high-risk requirements and the Article 50 transparency obligations), and €7.5 million or 1% for providing misleading information to regulators. For most ecommerce brands, the primary exposure is the €15 million tier, which covers failures to meet transparency obligations.

How do I disclose AI use to customers in ecommerce chat?

Article 50 requires a “clear and distinguishable” notification before the first interaction begins. A buried footnote in terms and conditions doesn’t qualify. Best practice is a visible banner or modal that customers acknowledge before the chat starts. Alhena AI’s Privacy Consent Gate blocks the chat interface until the customer acknowledges AI disclosure, generating a timestamped consent record automatically.

Will the August 2026 EU AI Act deadline be extended?

The proposed Digital Omnibus package could extend high-risk system deadlines to December 2027, but Article 50 transparency obligations for limited-risk systems like chatbots are not included in that extension. The August 2, 2026 deadline for transparency requirements stands firm. Don’t plan around a potential delay that may not cover your obligations.

Does my AI vendor’s compliance cover my obligations as a deployer?

No. The EU AI Act places separate obligations on both providers (vendors) and deployers (your brand). Your AI vendor must meet their provider obligations, but you’re independently accountable for transparency disclosures, human oversight, and proper use of the AI system. Request compliance documentation from vendors but build your own compliance program.

How much does EU AI Act compliance cost for ecommerce?

Basic limited-risk compliance costs €6,000 to €7,000 per AI system for smaller retailers, according to industry estimates. High-risk systems cost significantly more: €180,000 to €420,000 per system. Ongoing compliance runs about €29,000 per AI system annually. Choosing a platform like Alhena AI that has compliance features built in reduces these costs significantly.

How does Alhena AI help with EU AI Act compliance?

Alhena AI includes a Privacy Consent Gate (blocks chat until AI disclosure is acknowledged), GDPR Automatic Redaction (configurable rolling-window data wipe), Smart Flagging with a Hallucination Detector (validates 100% of responses against verified data), GuardrailAgent (real-time policy enforcement), and SOC 2 Type 2 certification. The platform also supports EU data residency with independent production environments per region.