Shoppers who chat with AI convert at 12.3% compared to 3.1% for those who don't, according to Rep AI's 2025 Ecommerce Shopper Behavior Report. But that gap only holds when the conversation keeps moving. The moment a shopper has to think about what to type next, momentum dies. A peer-reviewed study published in PMC found that chatbots offering follow-up suggestions increased user queries by 20% and extended engagement by 114 seconds per session.

That's the job of Related Questions: the 3-5 tappable chips that appear below every AI response in Alhena's chat widget. They aren't prebuilt FAQ buttons or static quick replies. Unlike static e-commerce suggestions pulled from preconfigured lists, each one is generated fresh by a dedicated LLM agent that reads the full conversation, the retrieved knowledge, and the AI's latest answer, then predicts what the shopper will want to know next.

This post walks through the five design decisions behind the feature: how chips generate without adding latency, why every button leads somewhere real, when the system goes silent on purpose, how it avoids repeating itself, and why each click compounds into deeper product discovery.

Design Decision 1: Answer First, Suggest Second

The worst thing a follow-up suggestion can do is slow down the answer. If the AI pauses to generate chips before delivering its response, the shopper feels the wait. Alhena solves this with a strict async architecture.

How the pipeline works

When a shopper sends a message, Alhena's orchestrator generates the main answer and streams it to the widget immediately. In parallel, a Celery task fires off a separate request to the SuggestedQuestionAgent, a dedicated agent running on Gemini 2.5 Flash, one of the fastest AI models available (chosen specifically for speed). The agent generates its suggestions and POSTs them back to the app server via a callback URL. The app server stores them against the specific message, and the widget renders the chips beneath the answer.
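The decoupling described above can be sketched in a few lines. This is a minimal illustration, not Alhena's implementation: a standard-library thread pool stands in for the Celery worker, a dictionary stands in for the app server's storage and callback endpoint, and every function name and canned string is hypothetical.

```python
from concurrent.futures import Future, ThreadPoolExecutor

# In-memory stand-in for the app server's per-message suggestion storage.
suggestions_by_message: dict[str, list[str]] = {}


def stream_answer(question: str):
    """Yield answer tokens one at a time (placeholder for the real LLM stream)."""
    yield from ["Our", "gel", "moisturizer", "suits", "oily", "skin."]


def generate_suggestions(question: str, answer: str) -> list[str]:
    """Placeholder for the suggestion agent; a real call would hit an LLM."""
    return ["Good for dry skin?", "SPF included?", "See the travel size"]


def suggestion_task(message_id: str, question: str, answer: str) -> None:
    """Background job: generate chips, then 'call back' by storing them per message."""
    suggestions_by_message[message_id] = generate_suggestions(question, answer)


def handle_message(
    pool: ThreadPoolExecutor, message_id: str, question: str
) -> tuple[str, Future]:
    """Deliver the answer first; queue chip generation without waiting on it."""
    tokens = []
    for token in stream_answer(question):
        tokens.append(token)  # a real server would push each token to the widget here
    answer = " ".join(tokens)
    # Chip generation runs off the response path; nothing here blocks on it.
    pending = pool.submit(suggestion_task, message_id, question, answer)
    return answer, pending
```

The point of the shape is that `handle_message` returns as soon as the answer is complete. The widget can render chips whenever the stored suggestions for that message show up.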

The shopper sees the answer arrive at full streaming speed. A beat later, the chips appear. Zero perceived latency cost.

Why this matters for conversion

Nielsen Norman Group research shows 77% of chatbot conversations involve more than one exchange. Chatbot-led funnels convert 2.4x higher than traditional web forms, but only when the interaction feels instant. Adding 800ms of suggestion-generation time to every turn would compound across a 5-turn session into 4 seconds of dead air. By decoupling answer delivery from chip generation, Alhena keeps the conversational rhythm tight. The chips arrive like a follow-up thought, not a loading spinner.

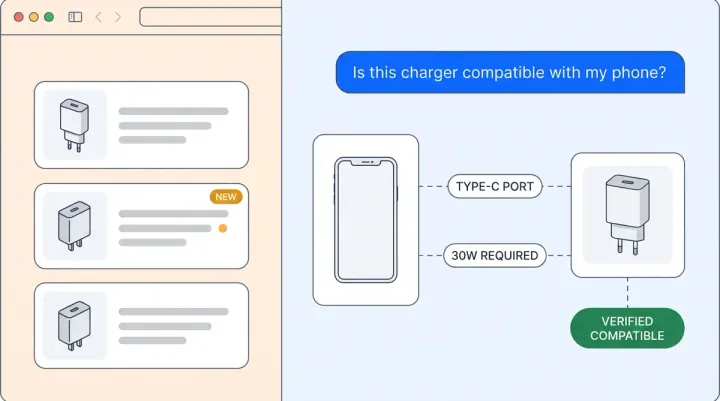

Design Decision 2: Grounded to Knowledge, Never to Guesswork

Every chip must lead to a question the AI can actually answer. This is rule #1 in the agent's system prompt, and it's the single biggest reason these chips convert instead of frustrate.

The dead-end problem

Most chatbot "suggested reply" features pull from static lists or pattern-match against previous conversations. The result: buttons that look helpful but lead to confused responses. A shopper clicks "Is it safe for sensitive skin?" and the AI responds with "I don't have that information." That's worse than showing nothing.

Alhena's SuggestedQuestionAgent receives the same retrieved knowledge chunks (product descriptions, specifications, reviews, and inventory data) that powered the AI's answer. This is what transforms Alhena from a static FAQ bot into an AI-powered suggestion engine. The generation prompt enforces a hard constraint: every suggestion must be answerable using only the provided knowledge context. If the knowledge base doesn't contain the answer, the chip can't exist.
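To make the constraint concrete, here is a rough sketch of how a grounded generation prompt might be assembled. The prompt wording, function name, and chunk numbering are assumptions for illustration only; the actual system prompt is not published in this post.

```python
def build_suggestion_prompt(
    knowledge_chunks: list[str], latest_answer: str, max_chips: int = 5
) -> str:
    """Assemble a prompt that restricts suggestions to the retrieved knowledge.

    The instruction text is illustrative, not the production prompt.
    """
    numbered = "\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(knowledge_chunks, start=1)
    )
    return (
        "You suggest follow-up questions for a shopper.\n"
        f"Return at most {max_chips} suggestions.\n"
        "Rule 1: every suggestion must be answerable using only the "
        "knowledge context below.\n"
        "If nothing qualifies, return an empty list.\n\n"
        f"Knowledge context:\n{numbered}\n\n"
        f"Latest answer:\n{latest_answer}\n"
    )
```

Passing the same chunks that powered the answer means the model is never asked to suggest a question about material it was not shown.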

User visibility asymmetry

There's a subtlety here worth noting. The shopper can only see the chat history and the AI's latest response. They can't see the retrieved knowledge chunks. So the agent is also instructed: "Every suggestion must be self-explanatory to someone who has only read the conversation." This prevents insider-y chips that only make sense if you've read the internal documentation. The result is chips that feel like natural next questions a real shopper would ask.

Brevity as a grounding constraint

Chips are capped at 3-6 words. "Is it safe for sensitive skin?" not "Can you tell me whether this product is appropriate for individuals with sensitive skin conditions?" The brevity isn't just UX polish. Short phrases are harder to hallucinate because they force the model to compress meaning into specific, concrete language. Vague buttons ("Tell me more about benefits") are easy to generate without grounding. Specific ones ("Safe for rosacea?") require actual knowledge.
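A word cap like this is easy to check after generation as well as in the prompt. The helper below is a hypothetical post-filter, assuming whitespace-separated words are a good enough count; the post does not say whether Alhena validates length in code or relies on the prompt alone.

```python
def within_word_cap(chip: str, min_words: int = 3, max_words: int = 6) -> bool:
    """Return True when the chip has between min_words and max_words words."""
    return min_words <= len(chip.split()) <= max_words


def keep_short_chips(chips: list[str]) -> list[str]:
    """Drop any chip that falls outside the 3-6 word range, preserving order."""
    return [chip for chip in chips if within_word_cap(chip)]
```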

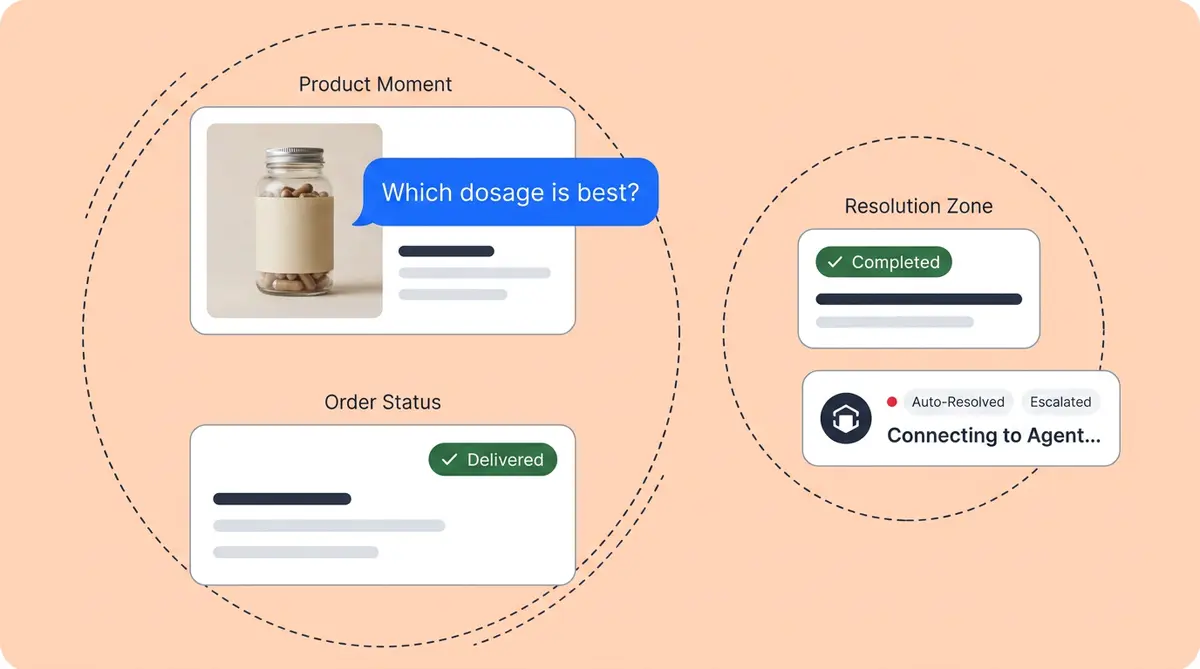

Design Decision 3: Knowing When to Shut Up

The most sophisticated part of Alhena's Related Questions isn't what it generates. It's when it returns an empty array.

Silent exemptions

The agent deliberately produces zero suggestions during:

- Order status lookups - The shopper asked "where's my order?" They want tracking info, not product discovery buttons.

- Human handoff flows - From the moment a shopper requests a human agent through completion. Suggestions would feel dismissive.

- Personal information collection - When the AI is asking for an email address or order number. Chips would interrupt the data entry flow.

- Quiz option displays - When the AI presents quiz-style multiple-choice options (marked with `## optns` internally), those options already occupy the chip slot. Doubling up would crowd the UI.

- Semantically unrelated queries - If there's no meaningful link between the shopper's question and available knowledge, forcing suggestions would feel random.
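The exemptions above amount to a gate in front of chip rendering. In Alhena's system the agent itself reasons about when to return an empty array. The sketch below makes the same rule explicit in code, assuming each turn has already been classified into one of several hypothetical turn types.

```python
from enum import Enum


class TurnType(Enum):
    """Hypothetical turn labels; how a turn gets classified is out of scope here."""
    PRODUCT_QUESTION = "product_question"
    ORDER_STATUS = "order_status"
    HUMAN_HANDOFF = "human_handoff"
    PERSONAL_INFO = "personal_info"
    QUIZ_OPTIONS = "quiz_options"
    UNRELATED = "unrelated"


# Turn types where suggestions are deliberately suppressed.
EXEMPT_TURNS = frozenset({
    TurnType.ORDER_STATUS,
    TurnType.HUMAN_HANDOFF,
    TurnType.PERSONAL_INFO,
    TurnType.QUIZ_OPTIONS,
    TurnType.UNRELATED,
})


def chips_for_turn(
    turn_type: TurnType, candidates: list[str], max_chips: int = 5
) -> list[str]:
    """Return an empty list on exempt turns, otherwise the first max_chips candidates."""
    if turn_type in EXEMPT_TURNS:
        return []
    return candidates[:max_chips]
```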

Why silence builds trust

Suggestions during checkout feel intrusive. Suggestions during a handoff feel like the bot is competing with the human agent. The decision to go quiet is a feature, not a gap. Brands like Tatcha, which achieved 82% chat deflection with Alhena, benefit precisely because the AI knows when its job is done and a different mode (human support, transaction completion) should take over. The main agent keeps checking inventory and answering questions, while the suggestion agent stays silent during purely transactional turns.

This agentic behavior is also what separates Alhena's approach from static quick-reply systems. Those systems show buttons every turn because they're configured, not contextual. Alhena's system reasons about whether suggestions add value on this specific turn.

Design Decision 4: Conversation-Aware Diversity

If a shopper already told the AI they have oily skin, a suggestion chip shouldn't ask "What's your skin type?" That sounds obvious, but it's genuinely hard. Most suggestion systems don't have access to full conversation history, or they treat each turn independently.

What the agent sees

The SuggestedQuestionAgent receives the complete chat history in OpenAI-formatted roles, enriched with purchase history when available, plus the AI's latest answer, plus the retrieved knowledge chunks, plus a background block listing all collaborating agents (order management, product search, handoff). This means suggestions can surface capabilities the shopper doesn't know exist ("Check my order status" or "Talk to a stylist") without ever retreading covered ground.
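One way to picture that input is as a single context object. The field names below are hypothetical. Only the ingredients (role-tagged chat history, latest answer, knowledge chunks, collaborating agents, optional purchase history) come from the description above.

```python
from dataclasses import dataclass, field


@dataclass
class SuggestionContext:
    """Everything the suggestion agent is described as seeing on one turn."""
    # OpenAI-style messages: {"role": "user" | "assistant", "content": "..."}
    chat_history: list[dict[str, str]]
    latest_answer: str
    knowledge_chunks: list[str]
    collaborating_agents: list[str] = field(default_factory=list)
    purchase_history: list[str] = field(default_factory=list)

    def shopper_statements(self) -> list[str]:
        """Return only what the shopper said, useful for avoiding re-asks."""
        return [m["content"] for m in self.chat_history if m["role"] == "user"]
```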

Diversity constraints in the prompt

The generation prompt enforces three rules simultaneously:

- No re-asks: Never suggest something the shopper already stated or asked about.

- Distinct angles: Each chip must approach the topic from a different direction, helping shoppers refine their search (comparison, use-case, next step, related product).

- Structural variety: No more than 2 suggestions can share the same sentence structure. This prevents the "Do you want X? Do you want Y? Do you want Z?" monotony.
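Two of these rules can be approximated with a simple post-filter, shown here as a sketch. It uses exact matching after normalization to catch re-asks and treats the opening word as a crude proxy for sentence structure. Both are simplifying assumptions, and the post describes these rules as living in the prompt rather than in code.

```python
import string

_PUNCT_TABLE = str.maketrans("", "", string.punctuation)


def _normalize(text: str) -> str:
    """Lowercase and strip punctuation so near-identical phrasings compare equal."""
    return text.lower().translate(_PUNCT_TABLE).strip()


def enforce_diversity(
    candidates: list[str], already_asked: list[str], max_same_opening: int = 2
) -> list[str]:
    """Drop re-asks and allow at most max_same_opening chips per opening word."""
    seen = {_normalize(q) for q in already_asked}
    opening_counts: dict[str, int] = {}
    kept: list[str] = []
    for chip in candidates:
        norm = _normalize(chip)
        if not norm or norm in seen:
            continue  # empty, duplicate, or already covered in the conversation
        opening = norm.split()[0]
        if opening_counts.get(opening, 0) >= max_same_opening:
            continue  # too many chips already start the same way
        opening_counts[opening] = opening_counts.get(opening, 0) + 1
        seen.add(norm)
        kept.append(chip)
    return kept
```

The "distinct angles" rule is harder to check mechanically, since it depends on meaning rather than surface form, so it is left out of this sketch.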

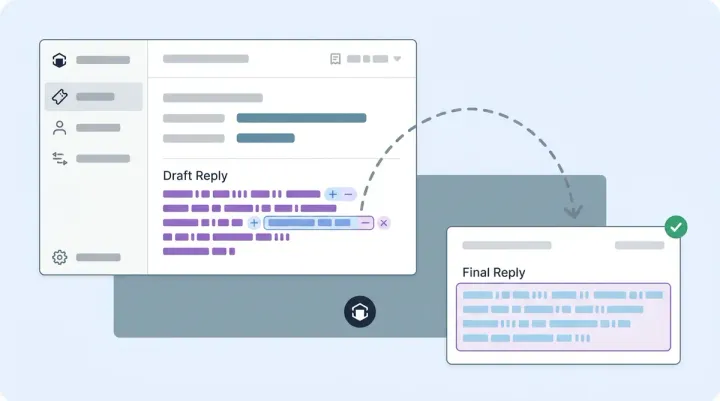

Writing like a real shopper

The prompt specifies conversational tone and enforces punctuation discipline. Question marks appear only when the chip starts with a question word. Action phrases like "Add to cart" or "See similar styles" don't get a question mark. Product names get compressed to 1-2 key words ("Ageless cream" not "Ageless Revitalizing Eye Cream 30ml"). And price questions are banned entirely because price is already visible on product cards.

These micro-decisions add up. The chips feel like things a real person would tap, not like a support taxonomy rendered as buttons.

Design Decision 5: The Self-Reinforcing Loop

Here's what makes Related Questions a conversion engine rather than just a UX convenience. Each clicked chip creates the next user message.

The compounding mechanism

When a shopper taps a chip, that text gets injected as their next message. It flows through the normal chat pipeline: retrieval pulls fresh knowledge chunks, the AI Shopping Assistant generates a new answer, and the SuggestedQuestionAgent fires again to produce new chips from the updated context. Each turn deepens the knowledge window and narrows the product space. The result is AI for personal shopping that actually feels personal, adapting its suggestions based on what you just said.
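The loop can be sketched as a function that treats a tapped chip exactly like a typed message. The `answer_fn` and `suggest_fn` callables are hypothetical stand-ins for the main assistant (including retrieval) and the suggestion agent.

```python
from typing import Callable

Message = dict[str, str]
AnswerFn = Callable[[list[Message]], str]
SuggestFn = Callable[[list[Message]], list[str]]


def run_turn(
    history: list[Message], user_message: str,
    answer_fn: AnswerFn, suggest_fn: SuggestFn,
) -> tuple[list[Message], list[str]]:
    """Run one turn: append the user message, answer it, then generate chips."""
    history = history + [{"role": "user", "content": user_message}]
    answer = answer_fn(history)
    history = history + [{"role": "assistant", "content": answer}]
    return history, suggest_fn(history)


def simulate_taps(
    first_message: str, taps: list[int],
    answer_fn: AnswerFn, suggest_fn: SuggestFn,
) -> list[Message]:
    """Type one message, then follow the chip at each index in taps."""
    history, chips = run_turn([], first_message, answer_fn, suggest_fn)
    for index in taps:
        if index >= len(chips):
            break  # no chip at that position (e.g. an exempt turn returned none)
        # The tapped chip text becomes the next user message verbatim.
        history, chips = run_turn(history, chips[index], answer_fn, suggest_fn)
    return history
```

Because every turn re-runs the suggestion step on the updated history, each tap changes what the next set of chips can be.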

A session that starts with "What moisturizer do you recommend?" generates chips like "Good for dry skin?" and "SPF included?" Each click retrieves more specific product data, surfacing increasingly targeted product recommendations. By turn 4, the chips become highly targeted: "Compare to Tatcha Dewy?" or "See the travel size." The shopper has gone from category browsing to purchase-ready specificity without typing a single character after their first message. It’s like an AI shopping list that builds itself through taps.

Why depth drives revenue

Shoppers who engage in multi-turn AI conversations convert at 4x the rate of those who don't, and the behavioral science behind this is well documented. Each additional turn correlates with higher purchase intent because deeper conversations signal genuine interest and surface better product matches. Related Questions reduce the friction of going deeper. Instead of the shopper needing to formulate their next question (and potentially bouncing), the next step (whether handling objections, comparing alternatives, or adding to cart) is always one tap away.

This is also why Alhena tracks conversational analytics signals like session depth and chip click-through rate. They're leading indicators of conversion, not vanity metrics. Alhena surfaces real-time AI analytics on chip engagement so brands can see exactly which follow-up paths drive revenue.

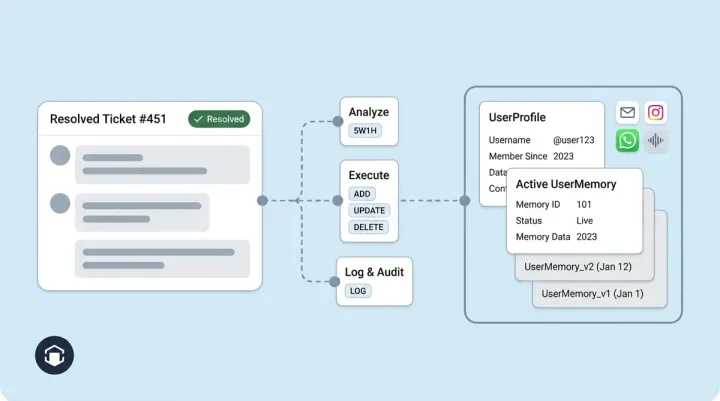

The Architecture Behind the Experience

AI conversational agents live or die by their ability to sustain multi-turn engagement. The best agentic AI systems anticipate what the shopper needs next before they ask. For product managers evaluating this framework, here's the technical flow:

- Shopper sends message - enters the main orchestrator

- Answer streams immediately - shopper sees the response in real time

- Celery task fires in parallel - SuggestedQuestionAgent (Gemini 2.5 Flash) receives: query, chat history, knowledge chunks, AI answer, collaborating agents list, and bot guidelines

- Agent generates 3-5 ordered suggestions (or returns empty if exempt) - output is structured via Pydantic schema, never free text. The AI prompts driving this generation encode years of iteration on what makes a good follow-up question

- Callback POST - suggestions are sent back to the app server and stored in the `RelatedQuestion` table, scoped to the specific message

- Widget renders chips - styled per dashboard configuration (background color, text color, border radius, optional icon)

- Shopper clicks - chip text becomes the next user message, pipeline repeats
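The structured-output step in the flow above is the piece that keeps free text out of the widget. The sketch below shows the idea with the standard library only: a frozen dataclass stands in for the Pydantic schema, and the `questions` field name and JSON shape are assumptions rather than Alhena's actual schema.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class RelatedQuestions:
    """Ordered suggestions scoped to one message (stand-in for the real schema)."""
    message_id: str
    questions: tuple[str, ...]


def parse_agent_output(
    message_id: str, raw_json: str, max_chips: int = 5
) -> RelatedQuestions:
    """Validate the agent's JSON output; reject anything that is not a list of strings."""
    payload = json.loads(raw_json)  # raises a ValueError subclass on free text
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object")
    questions = payload.get("questions")
    if not isinstance(questions, list) or not all(
        isinstance(q, str) for q in questions
    ):
        raise ValueError("expected a 'questions' list of strings")
    # Preserve the agent's ordering, drop blanks, and cap the count.
    cleaned = tuple(q.strip() for q in questions if q.strip())
    return RelatedQuestions(message_id=message_id, questions=cleaned[:max_chips])
```

An empty `questions` list is valid output here, which is how an exempt turn would be represented.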

The count defaults to 5 but is configurable per bot profile. Brands can optimize chip appearance through the dashboard under Integrations, with customizable background color (default #EDF3F8), text color (default #293037), and pill-shaped border radius. The feature ships enabled by default on all new profiles.
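For teams mirroring these settings in their own code, the defaults can be captured in a small config object. The class and field names are hypothetical, and the 32px radius is the pill-shape default quoted in the FAQ at the end of this post. The dashboard itself remains the place where these values are actually set.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChipStyle:
    """Chip appearance with the defaults quoted in this post."""
    background_color: str = "#EDF3F8"
    text_color: str = "#293037"
    border_radius_px: int = 32  # pill shape
    icon: Optional[str] = None  # optional leading icon
    max_chips: int = 5          # default count, configurable per bot profile

    def to_css(self) -> str:
        """Render the visual settings as an inline CSS declaration string."""
        return (
            f"background-color: {self.background_color}; "
            f"color: {self.text_color}; "
            f"border-radius: {self.border_radius_px}px;"
        )
```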

What Related Questions are not

It's worth distinguishing this from adjacent features. AI Nudges are proactive, AI-initiated prompts triggered by behavioral signals (time on page, scroll depth, exit intent). They start conversations. Product Quizzes are structured selling flows where the AI asks the questions and narrows recommendations through guided steps.

Related Questions sit between these. They're reactive (appearing after the AI answers), user-directed (the shopper chooses whether and what to click), and unstructured (no fixed flow, just contextual next steps). Together with Nudges and Quizzes, they form a complete engagement framework, and each plays a distinct role in closing the 5.5x engagement gap between proactive AI and passive chatbots.

What This Means for Your Conversion Funnel

An IBM-NRF study of 18,000 consumers across 23 countries found 45% already use AI during their buying journeys. The business case for Related Questions comes down to friction reduction at the point of highest intent. A shopper actively chatting with your AI is already warmer than 97% of site visitors. The real question is whether they'll keep exploring and overcome their objections, or drop off because the next step requires too much effort. Follow-up chips address objections proactively by surfacing answers the shopper was already wondering about.

Shopify and WooCommerce brands using Alhena's Shopping Assistant with Related Questions enabled see longer sessions, deeper product discovery, and higher add-to-cart rates that boost conversion across every product category, driving measurable customer lifetime value. Victoria Beckham achieved a 20% AOV increase, partly driven by shoppers discovering complementary products through follow-up chips, making cross-sell and upsell feel like natural discovery rather than a sales push. The best sales conversations feel like helpful guidance, and that is exactly what grounded follow-up chips deliver: answers to questions shoppers would never have thought to ask. Tatcha saw 3x conversion rates and 11.4% of total site revenue flowing through AI-assisted conversations, evidence that AI-driven product discovery directly drives revenue growth.

The feature works because it removes the cognitive load of "what should I ask next?" and replaces it with a tap. For mobile shoppers (where Baymard Institute research shows typing is slow and error-prone, contributing to 80% cart abandonment rates), swapping typing for a tap between browsing and checkout can be the difference between a 2-turn session that goes nowhere and a 6-turn session that ends with a cart.

Ready to see Related Questions in action on your store? Book a demo with Alhena AI or start for free with 25 conversations to experience how grounded follow-up chips keep shoppers clicking toward purchase.

Use Cases Across Ecommerce Verticals

Related Questions adapt to any ecommerce use case because the AI agents pull from whatever product descriptions, specifications, and catalog data the brand has loaded. A Shopify skincare store sees chips like "Good for rosacea?" and "See the travel size" because those details live in the product descriptions. A home furnishing retailer gets "Compare fabric options" and "Check if it fits a king bed" because the AI models can access dimensions and material data from the product catalog.

The AI prompts that generate these suggestions are category-aware. For fashion and apparel, chips lean toward styling and fit: "Runs true to size?" or "See similar in blue." For beauty, they focus on ingredients and skin concerns. For electronics, they surface compatibility and specs. The AI agents recognize which product attributes and specifications matter most in each vertical and generate recommendations accordingly.

Cross-sell opportunities emerge naturally. When a shopper asks about a moisturizer and the AI answers, the follow-up chips might include "Pair with a serum?" or "See the full routine." These aren't random cross-sell suggestions pushed by merchandising rules. They're generated from the knowledge base, which means the AI checks inventory and product relationships before surfacing them. If a complementary product is out of stock, that chip won't appear.

For Shopify merchants running checkout through the native flow, chips that lead to add-to-cart actions feed directly into the checkout process. A shopper who taps through three follow-up chips and adds a product never leaves the chat to find a checkout button. The conversion rate lift comes from removing every friction point between discovery and purchase. Brands that optimize their product descriptions and catalog data see the biggest gains because richer knowledge means more specific, more clickable chips that optimize the entire shopping journey.

Frequently Asked Questions

How does Alhena generate follow-up question chips in real time?

Alhena uses a dedicated SuggestedQuestionAgent running on Gemini 2.5 Flash. It fires as a parallel Celery task after the main answer streams to the shopper, so chip generation adds zero latency to the response. The agent receives the full conversation history, retrieved knowledge chunks, and the AI answer, then outputs 3-5 structured suggestions via Pydantic schema.

What makes Alhena suggested questions different from static quick replies?

Static quick replies are preconfigured buttons that show regardless of context. Alhena chips are LLM-generated fresh on every turn, grounded to the specific knowledge retrieved for that answer. They adapt to what the shopper has already said, never repeat covered ground, and each one is guaranteed to lead to a question the AI can actually answer.

Can the follow-up chips increase ecommerce conversion rates?

Yes. Each clicked chip creates a new turn in the conversation, retrieves deeper product knowledge, and generates more targeted suggestions. Shopify and WooCommerce brands using Alhena to drive sales report 3x conversion rates and 20% AOV increases. Multi-turn AI conversations convert at 4x the rate of single interactions because deeper engagement correlates directly with purchase intent.

When do Related Questions not appear in the chat?

The system deliberately returns zero suggestions during order status lookups, human handoff flows, personal information collection (email, order number), quiz option displays, and queries with no meaningful knowledge match. This prevents suggestions from feeling intrusive during transactional or sensitive moments.

How many follow-up chips does Alhena show per response?

The default is 5 chips per response, but the count is configurable per bot profile through the dashboard. Each chip is capped at 3-6 words, uses conversational language written like a real shopper would ask, and follows strict diversity rules so no two chips share the same angle or sentence structure.

Do follow-up chips work on mobile ecommerce stores?

Yes, and they are especially valuable on mobile where typing is costly. Chips render as tappable pill-shaped buttons with customizable styling (background color, text color, border radius, optional icon). Mobile shoppers can navigate a full product discovery journey through taps alone, without typing after their initial question.

How does Alhena ensure follow-up chips do not lead to dead ends?

The generation prompt enforces a hard grounding constraint: every suggestion must be answerable using only the knowledge chunks retrieved for that conversation turn. If the knowledge base does not contain the answer to a potential chip, the agent cannot generate it. This eliminates the frustrating "I do not have that information" response that plagues static suggestion systems.

Can I customize the appearance of Related Questions chips?

Yes. Through the Alhena dashboard under Integrations, Website, Configure Settings, you can customize background color (default #EDF3F8), text color (default #293037), border radius (default 32px for pill shape), and add an optional leading icon per chip. The feature is enabled by default on all new profiles.