Eighty-one percent of companies struggle to keep AI-generated content on-brand, even when detailed guidelines exist. For heritage brands sitting on decades of founder letters, atelier notebooks, and runway archives, the problem runs deeper than tone. The risk isn't that AI sounds wrong. It's that AI sounds like everyone else.

Luxury e-commerce is now a $69 billion market growing at nearly 10% annually, and close to 80% of luxury purchases are digitally influenced, according to McKinsey. Yet 56% of luxury clients say they're unsatisfied with their shopping experience (BCG, 2025). The gap between what heritage brands promise and what their digital channels deliver is widening, not closing.

This post breaks down how to train brand voice AI on your heritage, how to choose between fine-tuning and RAG, and why the most important role for marketers in the process isn't technical at all.

Why Generic AI Fails Luxury Brands

LVMH's Chief Omnichannel and Data Officer Gonzague de Pirey put it simply at NRF: "If we want to develop successful technology, the technology needs to be everywhere, but visible nowhere." That's the luxury AI paradox. You need AI to scale personalization across thousands of concurrent conversations, but the moment a customer senses they're talking to a bot, the brand's aura dissolves.

Generic large language models default to an averaged, committee-approved voice. They're polite, competent, and completely interchangeable. For a mass-audience skincare line, that might be acceptable. For a house with 80 years of atelier history and a vocabulary shaped by specific artisans, it's brand erosion at speed, and a trust problem along with it.

The numbers back this up. Consistent brand presentation across channels drives a 23 to 33% revenue increase, according to Envive AI research. On the flip side, 32% of customers will leave a brand after a single bad experience. When your AI assistant describes a hand-stitched calfskin bag with the same flat language it'd use for a polyester tote, that counts as a bad experience for a luxury buyer.

Soumia Hadjali, Louis Vuitton's Global SVP of Client Development, frames the guiding principle as "augmentation, not automation." The AI should give every sales associate the institutional memory of the brand's most experienced advisor. Scaling that memory starts with choosing the right technical approach.

Fine-Tuning vs. RAG: A Decision Matrix for Brand Voice AI

Two approaches dominate how brands teach AI agents to speak authentically: fine-tuning and retrieval-augmented generation (RAG). They solve different problems, and understanding the distinction is the first real decision in any brand voice AI project.

Fine-tuning: encoding the "how"

Fine-tuning modifies a model's weights using brand-specific training data. It teaches the AI how to say things, internalizing sentence cadence, vocabulary preferences, signature metaphors, and the register that separates one house's voice from another. Think of it as the difference between how Hermès speaks (understated precision, craft-forward) and how Versace speaks (bold, maximalist, unapologetic).

Modern parameter-efficient fine-tuning (PEFT) trains small adapter layers that amount to less than 1% of the base model's size, so you don't need massive infrastructure. A focused training set of 200 to 1,000 curated examples is enough for a production-grade voice, according to hillock.studio research. One professional services firm reported a 60% reduction in first-draft creation time after fine-tuning for brand voice.
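
To see why adapters stay so small, here is a back-of-the-envelope count for LoRA (low-rank adaptation), one widely used PEFT method: it freezes the base weights and trains two thin matrices per adapted layer. The model shape below (7B parameters, 32 layers, hidden size 4,096, rank 8, query and value projections only) is a hypothetical assumption for illustration, not the spec of any particular model.

```python
def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """LoRA adds two low-rank factors per weight: A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)

# Hypothetical model shape (assumption, not a specific published model).
BASE_PARAMS = 7_000_000_000
NUM_LAYERS = 32
HIDDEN = 4096
RANK = 8
ADAPTED_PER_LAYER = 2  # e.g. query and value projections, each HIDDEN x HIDDEN

adapter_params = NUM_LAYERS * ADAPTED_PER_LAYER * lora_params(HIDDEN, HIDDEN, RANK)
ratio = adapter_params / BASE_PARAMS

print(f"adapter parameters: {adapter_params:,}")  # 4,194,304
print(f"share of base model: {ratio:.4%}")        # 0.0599%
```

Raising the rank or adapting more projections grows the adapter linearly, which is why teams can trade a little size for a little more expressiveness without touching the base model.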

For a deeper look at when fine-tuning makes sense and when it doesn't, see our guide to LLM fine-tuning trade-offs.

RAG: grounding in the archive

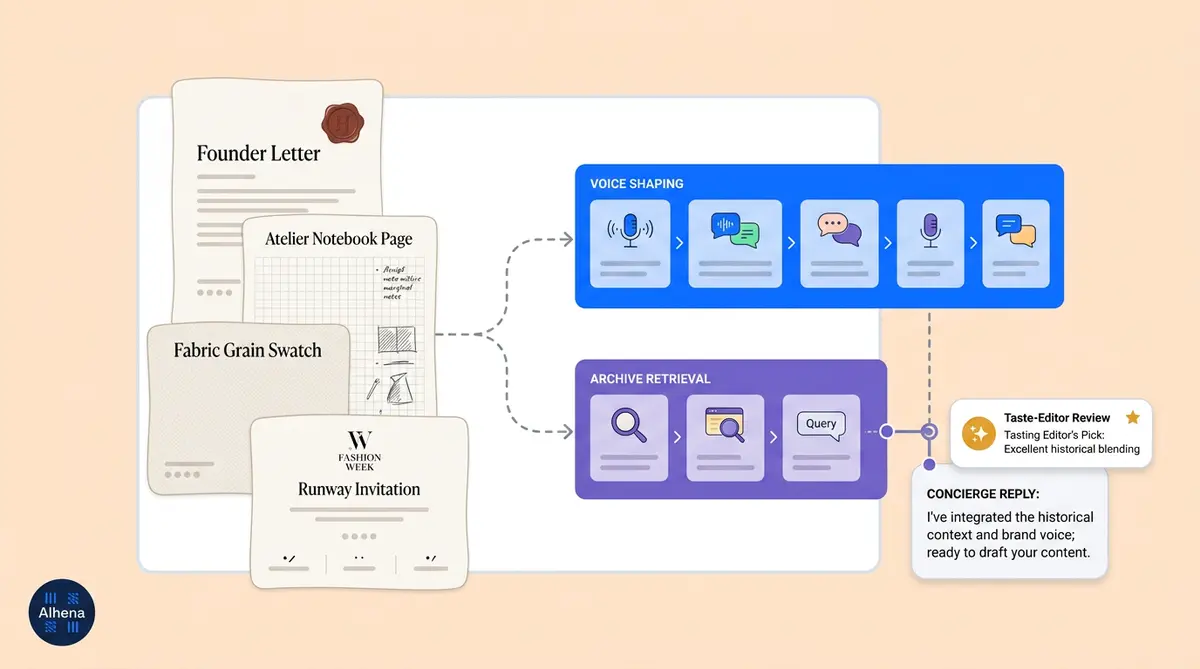

RAG retrieves relevant information from an external knowledge base at the moment a question is asked. It tells the AI what to say: SKU details, material provenance, store hours, care instructions, collection histories. Because the knowledge base can be updated without retraining the model, RAG is ideal for living product catalogs and seasonal storytelling.

For heritage brands, the RAG corpus becomes the digitized archive itself: founder correspondence, runway show notes, craftsmanship documentation, ad copy organized by era, and artisan interview transcripts. When a customer asks about the origin of a particular weave, RAG pulls the answer from the source document rather than hallucinating one.
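
The retrieval step can be sketched in a few lines. This toy version scores archive snippets by bag-of-words cosine similarity; a production system would typically swap in an embedding model and a vector store. The document IDs and texts are invented placeholders, not real archive material.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a stand-in for a real embedding model."""
    return re.findall(r"[a-z]+", text.lower())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Hypothetical archive snippets; a real corpus would hold digitized source documents.
ARCHIVE = {
    "atelier_notes_1962": "The twill weave was chosen for its drape and tension in the hand.",
    "care_guide_2019": "Store calfskin away from direct sunlight to protect the patina.",
    "founder_letter_1948": "We make fewer things, and we make them to last a lifetime.",
}

def retrieve(query: str, k: int = 2) -> list[tuple[str, float]]:
    """Return the k archive documents most similar to the query, best first."""
    q = Counter(tokenize(query))
    scored = [(doc_id, cosine(q, Counter(tokenize(text)))) for doc_id, text in ARCHIVE.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]

print(retrieve("Where does the twill weave come from?"))
```

The answer is then generated from the top-ranked source text, which is what keeps the assistant from inventing a weave's origin.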

Our breakdown of how knowledge base architecture powers revenue covers the technical details of turning product data into a sales engine.

The honest answer: you need both

The emerging consensus in 2025 is that the hybrid approach delivers the strongest results. Fine-tune the model lightly to internalize your brand's linguistic DNA. Use RAG to inject factual knowledge from the living archive at query time. Matillion reports hybrid implementations deliver three to five times better ROI than either approach alone. One enterprise deployment achieved an 86.2% approval rate in brand voice audits using this combined method.

When fine-tuning is overkill: If your brand voice fits comfortably in a 200-word style guide, a well-crafted system prompt with a handful of examples (few-shot prompting) will get you 80% of the way there. Fine-tuning shines when the voice is nuanced enough that a style guide can't fully capture it, when there are specific phrases the brand would never say, or when you need consistency across millions of interactions per month.
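
For the prompt-only route, or as the prompt layer on top of a lightly fine-tuned model, the assembly looks roughly like this. It is a minimal sketch, assuming the role/content message format many chat APIs use; no specific provider is called, and the voice rules and example exchange are hypothetical placeholders a house would replace with its own.

```python
from dataclasses import dataclass

@dataclass
class Example:
    customer: str
    brand_reply: str

# Hypothetical voice rules and few-shot pairs; a real house would draw these from its style guide.
VOICE_RULES = [
    "Speak with understated precision; never use exclamation marks.",
    "Describe materials by how they feel and age, not by price.",
]
FEW_SHOT = [
    Example("Is this bag leather?",
            "It is full-grain calfskin, cut and stitched by hand, and it softens with every season of use."),
]

def build_messages(question: str, retrieved_facts: list[str]) -> list[dict]:
    """Assemble chat messages: voice rules, optional grounded facts, few-shot examples, then the question."""
    system = "You are the house's client advisor.\n" + "\n".join(f"- {r}" for r in VOICE_RULES)
    if retrieved_facts:
        system += "\nAnswer only from these archive facts:\n" + "\n".join(f"* {f}" for f in retrieved_facts)
    messages = [{"role": "system", "content": system}]
    for ex in FEW_SHOT:
        messages.append({"role": "user", "content": ex.customer})
        messages.append({"role": "assistant", "content": ex.brand_reply})
    messages.append({"role": "user", "content": question})
    return messages

for m in build_messages("How should I store it?", ["Store calfskin away from direct sunlight."]):
    print(m["role"], "|", m["content"][:60])
```

The voice rules and examples carry the "how"; the retrieved facts carry the "what". That is the same split the hybrid approach makes, just without touching model weights.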

Translating Physical Craftsmanship into Digital Dialogue

The hardest part of training brand voice AI isn't the technology. It's the source material. Heritage brands store decades of institutional knowledge in formats that don't map neatly into a training pipeline: handwritten atelier notes, archival photography, founder letters and original writing on aging paper, runway show programs, and fabric swatch books with annotations in multiple languages.

Building a source taxonomy

Not all heritage material serves the same purpose. A useful framework sorts sources by function:

- Voice-defining material: Founder correspondence, original brand manifestos, early ad copy, press interviews with creative directors. These establish the linguistic identity.

- Knowledge material: Product catalogs by era, materials sourcing documentation, craftsmanship process descriptions, care guides. These populate the RAG knowledge base.

- Sensory material: Artisan interview transcripts, atelier photography captions, fabric and texture descriptions. These provide the vocabulary of tactile experience.

- Exclusion material: Dated language, deprecated product lines, internal jargon, legally sensitive documents. These must be deliberately kept out.
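
In practice, the taxonomy becomes a routing table. Here is a minimal sketch, assuming each document has already been tagged with one role by a human curator. The destination names are hypothetical, and sending sensory material to both pipelines is our assumption rather than a fixed rule.

```python
from enum import Enum

class SourceRole(Enum):
    VOICE = "voice-defining"   # founder letters, manifestos, early ad copy
    KNOWLEDGE = "knowledge"    # catalogs, sourcing docs, care guides
    SENSORY = "sensory"        # artisan transcripts, texture descriptions
    EXCLUDED = "exclusion"     # dated language, jargon, legally sensitive

# Hypothetical routing: which downstream pipelines consume each role.
DESTINATIONS = {
    SourceRole.VOICE: ("fine_tuning",),
    SourceRole.KNOWLEDGE: ("rag_index",),
    SourceRole.SENSORY: ("fine_tuning", "rag_index"),  # assumption: vocabulary helps both
    SourceRole.EXCLUDED: (),                           # never enters any pipeline
}

def route(documents: list[tuple[str, SourceRole]]) -> dict[str, list[str]]:
    """Sort tagged document IDs into the pipelines their role allows."""
    buckets: dict[str, list[str]] = {"fine_tuning": [], "rag_index": []}
    for doc_id, role in documents:
        for dest in DESTINATIONS[role]:
            buckets[dest].append(doc_id)
    return buckets

print(route([
    ("founder_letter_1948", SourceRole.VOICE),
    ("catalog_1975", SourceRole.KNOWLEDGE),
    ("artisan_interview_03", SourceRole.SENSORY),
    ("supplier_contract_2021", SourceRole.EXCLUDED),
]))
```

Making exclusion an explicit role, with an empty destination, means nothing slips into training simply because nobody classified it.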

Hermès offers a compelling model. The house systematically filmed retiring craftsmen to preserve their memories and techniques, creating the documentary Hearts and Crafts that visits production sites from the historic Paris workshop to a crystal factory in France. They send roughly 80 master trainers to each new workshop opening and maintain a "Club des Anciens" where retired workers serve as living repositories of company history.

The vocabulary of the hand

Luxury buyers expect language that mirrors the physical experience of the product. Weight, grain, patina, drape, tension, hand-feel: these words carry meaning that generic AI descriptions completely miss. Extracting this sensory vocabulary from artisan interviews requires a specific approach. You're not transcribing for accuracy alone. You're capturing how a craftsman talks about their work, the analogies they reach for, the qualities they notice first.

Burberry has explored this territory by using AI-powered animation to bring historical imagery to life, including animating a 1980 photograph by Lord Lichfield. Their storytelling chatbot on Facebook Messenger threads brand history and craftsmanship into natural conversation. The archive isn't static. It's becoming a dialogue.

What to deliberately exclude

Curation is as much about what you leave out. Dated language from 1960s ad copy may reflect attitudes the brand has evolved past. Internal shorthand ("the Q3 capsule refresh") adds noise without value. Deprecated product lines confuse shoppers searching for current inventory. And any legally sensitive material, including licensing disputes, supplier contracts, or pre-release designs, must be scrubbed before it enters any training pipeline.
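
A keyword screen can catch the obvious cases before a curator reads anything. The sketch below is only a first pass under stated assumptions: the patterns and the discontinued product name are made up, and subtler problems such as dated attitudes in old ad copy still need human judgment.

```python
import re

# Hypothetical deny-list; each house would maintain its own, reviewed by legal and brand teams.
EXCLUSION_PATTERNS = {
    "internal_jargon": re.compile(r"\bQ[1-4] capsule\b", re.IGNORECASE),
    "legal": re.compile(r"\b(licensing dispute|supplier contract|pre-release)\b", re.IGNORECASE),
    "deprecated_line": re.compile(r"\bRiviera Weekender\b"),  # made-up discontinued product name
}

def exclusion_reasons(text: str) -> list[str]:
    """Return every exclusion rule the text trips; an empty list means it moves on to human review."""
    return [name for name, pattern in EXCLUSION_PATTERNS.items() if pattern.search(text)]

def screen(corpus: dict[str, str]) -> tuple[dict[str, str], dict[str, list[str]]]:
    """Split a corpus into documents that pass the screen and documents held back, with reasons."""
    kept: dict[str, str] = {}
    held: dict[str, list[str]] = {}
    for doc_id, text in corpus.items():
        reasons = exclusion_reasons(text)
        if reasons:
            held[doc_id] = reasons
        else:
            kept[doc_id] = text
    return kept, held

kept, held = screen({
    "memo_2021": "Notes for the Q3 capsule refresh",
    "atelier_1954": "The atelier moved to larger rooms in 1954.",
})
print(sorted(kept), held)
```

Recording the reason alongside each held document gives the curator an audit trail instead of a silent deletion.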

The model inherits the judgment of whoever curated the corpus. Choose that person carefully.

From Archive to AI Assistant: A Five-Stage Blueprint

Here's how a heritage brand moves from 50,000 archival documents to a living, brand-fluent AI agent. Each step builds on the last.

Step 1: Discovery

Scope the full archive and separate voice-defining material from knowledge material. An 80-year-old house might have tens of thousands of documents, but only a fraction define the brand's linguistic identity. The rest is product knowledge for the RAG pipeline. This step typically takes two to four weeks and requires a brand historian or senior creative working alongside the technical team.

Step 2: Digitization

Convert physical material into machine-readable formats. OCR on handwritten notes remains error-prone: inconsistent stroke widths and fading ink push traditional computer vision to its limits. Deep-learning handwriting recognition models improve accuracy significantly, but historical materials still need human review. Archival imagery needs descriptive captioning. Video and audio need transcription with speaker attribution.
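
One practical way to budget that human review is to let the recognizer's own confidence decide what an archivist sees. This sketch assumes the OCR step reports a 0 to 1 confidence score per line; the record shape and the 0.85 cutoff are hypothetical and should be tuned against a hand-checked sample.

```python
from dataclasses import dataclass

@dataclass
class OcrLine:
    page: int
    text: str
    confidence: float  # 0.0 to 1.0, as reported by the (hypothetical) OCR step

REVIEW_THRESHOLD = 0.85  # assumption; calibrate on a hand-checked sample of the archive

def triage(lines: list[OcrLine]) -> tuple[list[OcrLine], list[OcrLine]]:
    """Split OCR output into lines accepted as-is and lines queued for a human archivist."""
    accepted = [ln for ln in lines if ln.confidence >= REVIEW_THRESHOLD]
    needs_review = [ln for ln in lines if ln.confidence < REVIEW_THRESHOLD]
    return accepted, needs_review

accepted, needs_review = triage([
    OcrLine(1, "Dear Marcel,", 0.97),
    OcrLine(1, "the calfskin arrived from the tannery", 0.62),
])
print(len(accepted), "accepted;", [ln.text for ln in needs_review], "for review")
```

The threshold sets the trade-off: lower it and the archivist reviews less but more transcription errors reach the corpus.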

Valentino set an early standard here. With the Valentino Garavani Virtual Museum, it became the first international fashion designer to create a permanent digital exhibition, drawing on over 180 videos and 5,000 images covering nearly five decades of work.

Step 3: Voice distillation

Build a fine-tuning dataset from the curated archive. From 50,000 documents, you might distill 500 to 1,000 high-quality examples that authentically represent the brand voice across different contexts: product descriptions, customer inquiries, storytelling moments, and graceful declines. Tag each example with metadata covering channel, target audience, intent, and voice category.
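
Here is what one tagged example might look like on disk. It is a minimal sketch: the field names and channel list are hypothetical, and you would adapt the record to whatever format your fine-tuning tool expects. JSON Lines, one object per line, is a common input format for such tools.

```python
import json
from dataclasses import dataclass, asdict

ALLOWED_CHANNELS = {"web_chat", "email", "instagram", "whatsapp", "voice"}

@dataclass
class VoiceExample:
    prompt: str
    response: str
    channel: str         # where the exchange happens
    audience: str        # e.g. "first-time client", "collector"
    intent: str          # e.g. "product_description", "graceful_decline"
    voice_category: str  # e.g. "storytelling", "service"

    def validate(self) -> None:
        """Reject records with an unknown channel or empty text before they reach training."""
        if self.channel not in ALLOWED_CHANNELS:
            raise ValueError(f"unknown channel: {self.channel}")
        if not self.prompt.strip() or not self.response.strip():
            raise ValueError("prompt and response must be non-empty")

def to_jsonl(examples: list[VoiceExample]) -> str:
    """Validate each example and serialize it as one JSON object per line."""
    lines = []
    for ex in examples:
        ex.validate()
        lines.append(json.dumps(asdict(ex), ensure_ascii=False))
    return "\n".join(lines)

print(to_jsonl([VoiceExample(
    "Tell me about the tote.",
    "It is cut from a single hide and finished by one artisan from start to end.",
    "web_chat", "collector", "product_description", "storytelling",
)]))
```

The metadata pays off later: it lets you check that graceful declines or WhatsApp replies aren't underrepresented before training, rather than discovering the gap in production.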

Step 4: Evaluation

Run blind A/B tests with the brand's own copywriters as judges. The metric that matters most is the human edit rate: the percentage of AI outputs requiring revision. Target under 25% for production readiness. Voice recognition rate (can judges identify the brand in a blind test?) should hit 85% or higher.
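
Both numbers fall out of simple counts over the judges' verdicts. A minimal sketch, assuming each AI output receives one judgment recording whether the copywriter identified the brand and whether they had to edit the text; the record shape is hypothetical, while the two targets come from this step.

```python
from dataclasses import dataclass

@dataclass
class Judgment:
    identified_brand: bool  # did the copywriter pick the brand correctly in the blind test?
    needed_revision: bool   # did the copywriter have to edit the output?

EDIT_RATE_TARGET = 0.25    # production-ready: under 25% of outputs revised
RECOGNITION_TARGET = 0.85  # voice recognition rate of 85% or higher

def evaluate(judgments: list[Judgment]) -> dict:
    """Compute human edit rate and voice recognition rate, and check both against targets."""
    n = len(judgments)
    if n == 0:
        raise ValueError("no judgments to evaluate")
    edit_rate = sum(j.needed_revision for j in judgments) / n
    recognition = sum(j.identified_brand for j in judgments) / n
    return {
        "human_edit_rate": edit_rate,
        "voice_recognition_rate": recognition,
        "production_ready": edit_rate < EDIT_RATE_TARGET and recognition >= RECOGNITION_TARGET,
    }

# Illustrative run: 20 judged outputs, 18 recognized, 4 revised.
print(evaluate([Judgment(i < 18, i < 4) for i in range(20)]))
```

With small panels, a single verdict moves these rates by several points, so it is worth collecting enough judgments before declaring a model ready.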

Step 5: Live behavior

The truest test of brand voice AI isn't what the assistant says when it has a perfect answer. It's how the assistant declines a question in voice. A luxury concierge doesn't say "I don't have that information." It says something closer to "I'd love to connect you with our atelier team who can speak to that in the detail it deserves." That graceful refusal, in the brand's own register, is the final proof that the voice training worked.
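
Mechanically, a graceful decline is a fallback: when retrieval finds nothing strong enough to ground an answer, the assistant hands off in voice instead of guessing. A minimal sketch, assuming `retrieved` is a list of (document ID, similarity score) pairs from whatever retriever is in use; the 0.3 cutoff and the handoff wording are hypothetical.

```python
# Hypothetical in-voice handoff; the wording would come from the brand's own copywriters.
GRACEFUL_HANDOFF = (
    "I'd love to connect you with our atelier team, "
    "who can speak to that in the detail it deserves."
)
MIN_GROUNDING_SCORE = 0.3  # assumption: below this, the archive doesn't support an answer

def answer_or_decline(retrieved: list[tuple[str, float]], draft_answer: str) -> str:
    """Return the grounded draft only when retrieval found strong support; otherwise decline in voice."""
    best_score = max((score for _, score in retrieved), default=0.0)
    if best_score < MIN_GROUNDING_SCORE:
        return GRACEFUL_HANDOFF
    return draft_answer

print(answer_or_decline([("care_guide_2019", 0.12)], "It was woven in 1962."))
```

Keeping the handoff as copywriter-owned text, rather than model-generated, is what guarantees the refusal stays in register.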

For brands running luxury e-commerce, we've mapped out six white-glove AI concierge workflows that show what this looks like in practice.

How Alhena AI Encodes Brand Heritage

Alhena AI was built for exactly this problem: giving e-commerce brands an AI assistant that speaks their language, not a generic chatbot language.

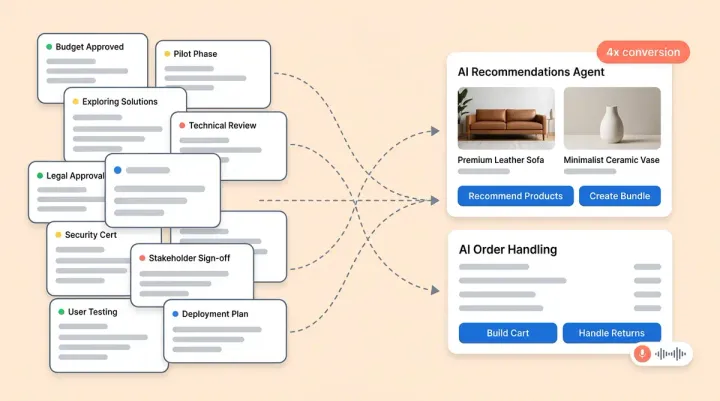

The platform's Identity and Tone configuration lets brands define their AI's persona at a foundational level: not just a system prompt, but a structured voice architecture that shapes every response. You set the agent's role, its tone of voice, and situational guidelines for edge cases (how to discuss sustainability claims, how to redirect competitor comparisons, how to decline questions gracefully).

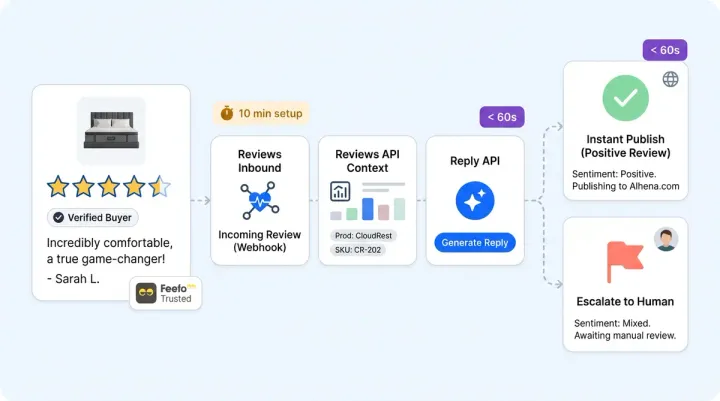

On the knowledge side, Alhena ingests 10 categories of data sources including PDFs, web pages, product feeds, Google Drive documents, video transcripts, helpdesk records, and direct e-commerce platform feeds from Shopify, WooCommerce, Magento, and Salesforce Commerce Cloud. For heritage brands, this means your founder letters (as PDFs), your product catalogs (from your e-commerce platform), and your craftsmanship documentation (as web pages or uploaded files) all feed into a single, grounded knowledge base.

The result is hallucination-free responses that customers can trust, rooted in your actual brand data. When a customer asks about the provenance of a particular fabric, Alhena pulls from your documented sourcing records, not from internet guesswork.

Brands using this approach have seen measurable results. Tatcha achieved a 3x conversion rate and 38% AOV uplift with AI that speaks their skincare philosophy authentically. Victoria Beckham Beauty saw a 20% AOV increase with brand-aligned AI recommendations. These aren't support deflection numbers. They're revenue numbers driven by AI that sounds like the brand, not like a helpdesk.

Alhena also operates across every channel where luxury shoppers expect to engage: web chat, email, Instagram DMs, WhatsApp, and voice. The brand voice stays consistent whether a customer is browsing your site at midnight or responding to an Instagram story. And because the platform deploys in under 48 hours with no dev resources needed, heritage brands don't need a six-month implementation timeline to start seeing returns.

The Taste Editor: Why AI Heritage Needs a Human Curator

The most overlooked role in any brand voice AI project is the human curator, what we call the taste editor. This person doesn't write code. They make judgment calls about what the brand sounds like, which archival material is worth preserving, and where the AI's output drifts from authentic to approximate.

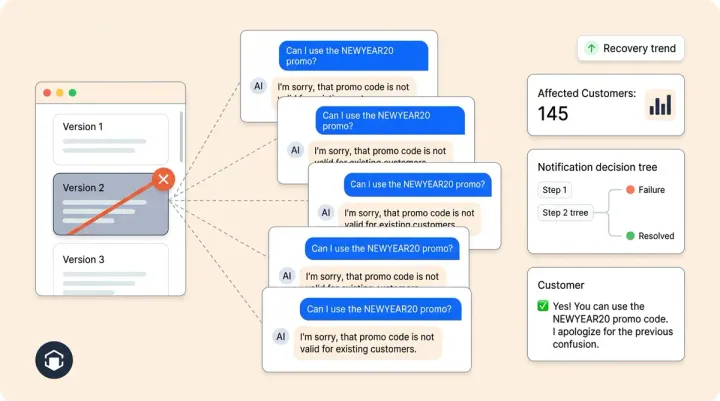

The numbers reveal a governance gap. While 95% of organizations have brand guidelines, only 25 to 30% actively enforce them. Sixty percent of marketing materials fail to conform to established brand standards. Without a dedicated taste editor (or senior marketer) feeding corrections back into the system, AI content creation drifts off-brand faster than human-written content ever could, simply because it produces more volume.

The reinforcement loop matters. When a taste editor corrects AI output (rewording a product description, flagging a tone mismatch, rewriting a response that's technically accurate but stylistically flat), that correction becomes a new training signal. Over time, the model learns not just what the brand says but what the brand would never say. That negative space often defines a luxury voice more than any positive attribute.
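
One way to capture that negative space is to store each correction as a preference pair: the editor's version as "chosen", the original AI output as "rejected". Pairs in this shape are what preference-tuning methods such as DPO train on. The sketch below is illustrative; the `Correction` record and field names follow a common convention but are assumptions, not a specific tool's schema.

```python
from dataclasses import dataclass

@dataclass
class Correction:
    prompt: str
    ai_output: str
    editor_output: str
    reason: str  # e.g. "tone mismatch", "stylistically flat"

def to_preference_pairs(corrections: list[Correction]) -> list[dict]:
    """Turn taste-editor corrections into (chosen, rejected) pairs for preference tuning.

    Reviews where the editor changed nothing carry no preference signal and are skipped.
    """
    pairs = []
    for c in corrections:
        if c.editor_output.strip() == c.ai_output.strip():
            continue
        pairs.append({
            "prompt": c.prompt,
            "chosen": c.editor_output,  # what the brand would say
            "rejected": c.ai_output,    # what the brand would never say
            "reason": c.reason,
        })
    return pairs

print(to_preference_pairs([Correction(
    "Describe the saddle bag.",
    "This amazing bag is perfect for everyday use!",
    "Cut from a single hide, it gains character with every season.",
    "tone mismatch",
)]))
```

Keeping the editor's reason alongside each pair also makes drift visible: a spike in "tone mismatch" tells you where the next round of curation should focus.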

Hermès understood this instinctively. Their 80 master trainers don't just teach techniques. They pass on judgment, the intuitive sense of what belongs and what doesn't. The digital version of that role is the taste editor: someone with deep brand knowledge who reviews AI conversations, flags drift, and continuously refines the training corpus.

Key governance metrics to track:

- Voice recognition rate: 85%+ correct identification in blind tests

- Human edit rate: Under 25% of AI outputs requiring voice-related revisions

- Cross-platform consistency: 90%+ alignment across web, email, social, and voice channels

- Guideline violation rate: Under 20% of outputs flagged for brand standard issues
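
These four targets are easy to turn into a standing check the taste editor sees each week. A minimal sketch, assuming the metric values are already computed elsewhere as fractions between 0 and 1; the metric keys are hypothetical names, while the thresholds mirror the list above.

```python
# Targets from the governance metrics: "min" means at least the threshold, "max" means under it.
TARGETS = {
    "voice_recognition_rate": (0.85, "min"),
    "human_edit_rate": (0.25, "max"),
    "cross_platform_consistency": (0.90, "min"),
    "guideline_violation_rate": (0.20, "max"),
}

def governance_alerts(observed: dict[str, float]) -> list[str]:
    """Return one readable alert per metric that is off target or was never measured."""
    alerts = []
    for name, (threshold, direction) in TARGETS.items():
        value = observed.get(name)
        if value is None:
            alerts.append(f"{name}: not measured")
        elif direction == "min" and value < threshold:
            alerts.append(f"{name}: {value:.0%} is below the {threshold:.0%} target")
        elif direction == "max" and value >= threshold:
            alerts.append(f"{name}: {value:.0%} is at or above the {threshold:.0%} ceiling")
    return alerts

print(governance_alerts({
    "voice_recognition_rate": 0.88,
    "human_edit_rate": 0.31,
    "cross_platform_consistency": 0.93,
    "guideline_violation_rate": 0.12,
}))
```

Treating an unmeasured metric as an alert, rather than a pass, keeps a quiet channel from looking healthy by default.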

Alhena AI's Agent Assist product supports this workflow by letting human agents review, correct, and learn from AI responses in real time, creating the feedback loop that keeps brand voice sharp as the business scales.

Key Takeaways

- Generic AI erodes luxury brands. Consistent brand voice drives 23 to 33% more revenue. Inconsistent experiences push 32% of customers away after one interaction.

- Use both fine-tuning and RAG. Fine-tune for voice and style. Use RAG for factual knowledge from your archive. The hybrid approach delivers three to five times better ROI than either alone.

- Not all heritage material is training data. Build a source taxonomy. Voice-defining documents, knowledge documents, and sensory material each serve different purposes in the pipeline.

- The taste editor is your most important hire. The model inherits the judgment of its curator. Invest in someone who understands the brand's voice at an intuitive level.

- Measure voice quality, not just deflection. Track voice recognition rate, human edit rate, and cross-platform consistency alongside traditional support metrics.

- Start with what you have. Alhena AI deploys in under 48 hours, ingesting your existing product data, PDFs, and web content to build a brand-grounded AI agent without a six-month digitization project.

Ready to give your brand's heritage a voice that scales? Book a demo with Alhena AI or start your free trial with 25 conversations.

What is brand voice AI and why does it matter for luxury ecommerce?

Brand voice AI refers to artificial intelligence trained to communicate in a brand’s specific tone, vocabulary, and style rather than defaulting to generic responses. For luxury ecommerce, it matters because consistent brand presentation drives 23 to 33% more revenue, while 32% of customers leave after a single off-brand experience. Heritage brands risk diluting decades of carefully built identity when their AI sounds interchangeable with every other chatbot on the market.

How do you train AI on a brand's heritage and archival material?

The process involves five stages: discovery (scoping archives and separating voice-defining material from product knowledge), digitization (converting physical documents using AI-powered OCR and captioning), voice distillation (building a curated fine-tuning dataset of 500 to 1,000 examples from tens of thousands of documents), evaluation (blind A/B testing with brand copywriters as judges), and live deployment with ongoing human oversight. Each stage requires collaboration between brand historians, creative leads, and technical teams.

What is the difference between fine-tuning and RAG for brand voice?

Fine-tuning modifies a model's weights to internalize how a brand speaks, including cadence, vocabulary, and style. RAG (retrieval-augmented generation) pulls factual information from a knowledge base at query time to ground responses in real product data. Fine-tuning handles the voice; RAG handles the facts. Used together, the hybrid approach delivers three to five times better ROI than either method alone, according to Matillion, and one enterprise deployment reached an 86.2% approval rate in brand voice audits.

How does Alhena AI maintain brand voice across channels?

Alhena AI uses a structured Identity and Tone configuration that shapes every response across web chat, email, Instagram DMs, WhatsApp, and voice. Brands define their AI persona, communication style, and situational guidelines in a single configuration that stays consistent regardless of channel. The platform ingests 10 categories of data sources to build a grounded knowledge base, ensuring the AI pulls from verified brand material rather than generating generic responses.

Can smaller heritage brands afford to build brand voice AI?

Yes. Modern parameter-efficient fine-tuning (PEFT) creates voice adapters at less than 1% of the base model's size, and production-grade results are possible with as few as 200 curated training examples. For brands that want to skip the fine-tuning process entirely, platforms like Alhena AI deploy in under 48 hours by ingesting existing product data, PDFs, and web content to build a brand-grounded assistant with no dev resources required.

What role does a human curator play in AI brand voice?

The human curator, or taste editor, makes judgment calls about what the brand sounds like and catches AI drift before it reaches customers. They review outputs, flag tone mismatches, and feed corrections back into the training loop. Key metrics they track include voice recognition rate (target 85%+), human edit rate (under 25%), and cross-platform consistency (90%+). The need is real: 60% of marketing materials already fail to conform to established brand standards, and AI multiplies the volume of content that needs checking.

How long does it take to deploy brand voice AI for ecommerce?

A full heritage digitization and fine-tuning project can take several months depending on archive size. But brands don't need to complete the entire process before seeing results. Alhena AI deploys in under 48 hours using your existing product catalog and brand documentation as the knowledge base. Many brands start with RAG-grounded responses and layer in voice refinements over time as they build their curated training corpus.

What results have luxury brands seen with AI shopping assistants?

Tatcha, a luxury skincare brand, achieved a 3x conversion rate and 38% higher average order value using Alhena AI. Victoria Beckham Beauty saw a 20% AOV increase with brand-aligned AI recommendations. At LVMH, generative AI systems serve more than 40,000 active users monthly with over 1.5 million queries across its 75 maisons, giving every client advisor the knowledge depth of the brand's most experienced employee.