TLDR: Most AI customer support tools treat every conversation like a first date. Your customer tells the chat widget she's shopping for maternity clothes, asks about sizing through your product FAQ, and then gets a "How can I help you today?" when she emails two days later. We built a unified memory layer across every Alhena touchpoint so the AI actually remembers who it's talking to, no matter where the conversation happens.

The Problem Nobody Talks About

Here's a scenario that plays out thousands of times a day across ecommerce. A customer named Jennifer lands on your skincare site. She browses a product page and asks your product FAQ widget whether The Rice Wash is gentle enough for dry, sensitive skin. The widget gives her a solid answer. She's happy.

Later that afternoon, she opens the chat widget to ask about eye creams. She mentions she lives in Chicago, that winter is brutal on her skin, and that she's in her early 30s. The agent recommends something great.

Two days later, Jennifer sends an email asking about her order. "Hi there, I have dry, sensitive skin..." She's repeating herself. Again.

She shouldn't have to.

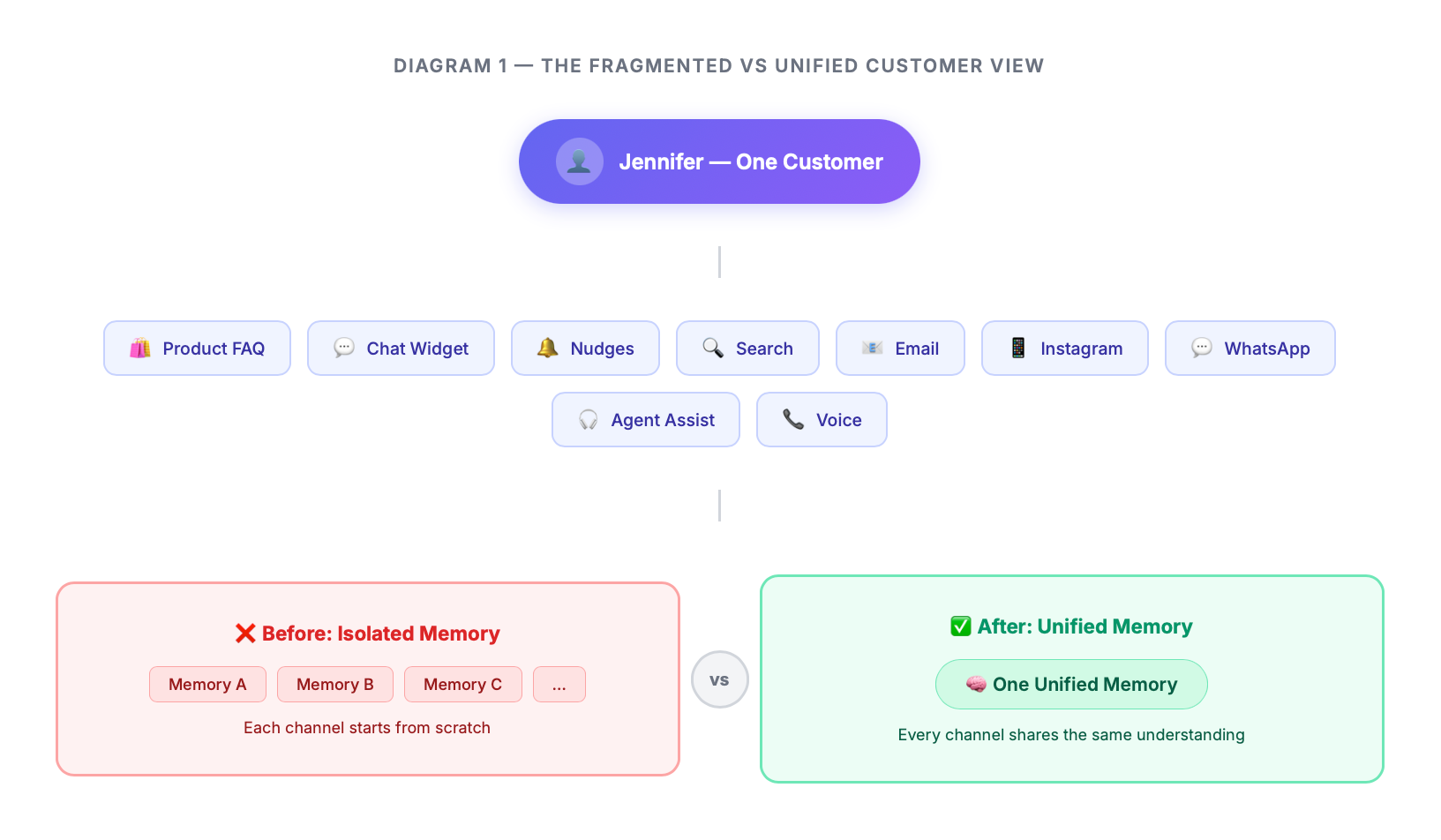

The product FAQ, the chat widget, and the email channel are all powered by the same AI platform. They share the same knowledge base, the same product catalog, the same brand voice. But they don't share the one thing that matters most: what they know about Jennifer.

This is the problem we set out to solve.

What Alhena Actually Looks Like Under the Hood

Before diving into memory, it helps to understand the surface area we're working with. Alhena isn't just a chatbot. It's an entire customer engagement infrastructure. When a brand deploys Alhena, they get:

- Product FAQs that live directly on product pages, answering buyer questions with real product knowledge.

- Chat widget for open-ended conversations, support requests, and guided shopping.

- Nudges that proactively engage visitors based on browsing behavior.

- Conversational search that replaces the clunky keyword search bar.

- Email automation that handles incoming support tickets.

- Social channel support across Instagram DMs, Facebook Messenger, WhatsApp, and more.

- Agent assist that helps human agents by suggesting replies and summarizing conversations.

- Voice agents that handle phone calls.

- Review responses on Trustpilot and Feefo.

That's at least nine distinct touchpoints where the same customer might interact with the same brand.

And until now, each of those touchpoints was essentially stateless about the user. They all knew the products. None of them knew the person.

The Idea: One Memory, Everywhere

The core insight is simple, even if the execution is not. Every customer should have one unified memory that follows them across every channel. When Jennifer tells the product FAQ widget about her skin type, the chat widget should already know. When she mentions her mom's birthday in a chat conversation, the email agent should remember.

We call this the Unified Memory Layer.

The vision was that every single interaction, whether it's a quick question on a product page, a back-and-forth in the chat widget, a phone call, or an email thread, contributes to a single, evolving understanding of who this person is.

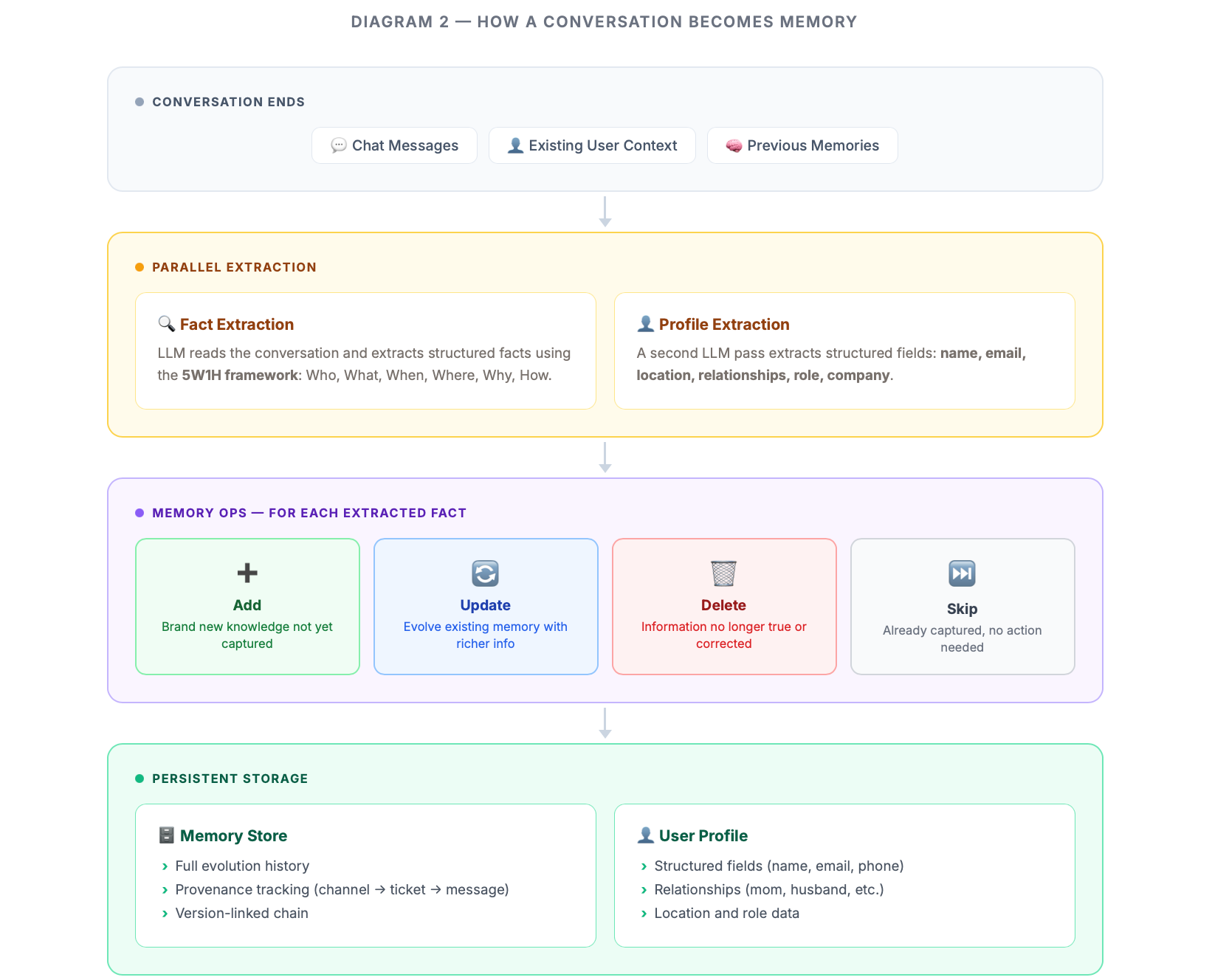

How a Conversation Becomes Memory

Let's walk through what actually happens when Jennifer chats with the AI.

She sends a message: "Hi, I'm Jennifer! I have dry, sensitive skin that gets really flaky in winter. Would The Rice Wash be gentle enough?"

The AI responds with a helpful answer. In the background, once the conversation wraps up, a memory extraction process kicks in. This is not simple keyword matching or slot filling. An LLM reads the conversation and extracts structured facts using what we call the 5W1H framework: Who is involved, What happened, When, Where, Why, and How.

From that single exchange, the system extracts facts like: Jennifer is the user's name. She has dry, sensitive skin that gets flaky in winter. She's interested in whether The Rice Wash is suitable for her skin type.
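To make the shape of an extracted fact concrete, here is a minimal sketch of how the facts above could be represented. The `ExtractedFact` class and its field names are illustrative assumptions, not Alhena's actual schema; the LLM call that fills them in is left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExtractedFact:
    """One fact pulled from a conversation, tagged along the 5W1H dimensions.

    Hypothetical structure: any dimension the conversation did not mention stays None.
    """
    text: str
    who: Optional[str] = None
    what: Optional[str] = None
    when: Optional[str] = None
    where: Optional[str] = None
    why: Optional[str] = None
    how: Optional[str] = None

    def dimensions(self) -> dict[str, str]:
        """Return only the 5W1H dimensions that were actually filled in."""
        keys = ("who", "what", "when", "where", "why", "how")
        return {k: getattr(self, k) for k in keys if getattr(self, k) is not None}


# The three facts from Jennifer's message, written by hand for illustration.
facts = [
    ExtractedFact(text="The user's name is Jennifer", who="Jennifer"),
    ExtractedFact(
        text="Jennifer has dry, sensitive skin that gets flaky in winter",
        who="Jennifer",
        what="dry, sensitive skin",
        when="winter",
    ),
    ExtractedFact(
        text="Jennifer wants to know whether The Rice Wash suits her skin type",
        who="Jennifer",
        what="interest in The Rice Wash",
        why="checking suitability for her skin type",
    ),
]

for fact in facts:
    print(fact.text, fact.dimensions())
```

Keeping the free-text `text` alongside the tagged dimensions means the fact stays readable on its own while still being filterable by dimension.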

But extracting facts is only the beginning. The real challenge is what happens when those facts need to live alongside everything the system already knows.

Memory Ops Cycle: Add, Update, Delete, or Skip

This is where things get interesting. When new facts arrive, they don't just get appended to a list. Each fact goes through what we call the Memory Ops cycle, in which the system decides whether to:

- Add it as new knowledge

- Update an existing memory with richer information

- Delete something that's no longer true

- Skip it because it's already captured

Take this example. In conversation one, Jennifer says she's looking at Adidas Ultraboost shoes in size 10 for her husband Mike's birthday. The system stores that. In conversation two, she mentions that Mike tried the shoes and size 10 was too small because Adidas runs small, so he needs size 11. The system doesn't just add a new fact about size 11. It recognizes that the size 10 fact needs to evolve. The memory updates to reflect that Mike needs size 11, preserving the context about why (Adidas runs small) and that size 10 was the original plan.

This is not trivial. The system needs to understand temporal relationships (a birthday that was "next week" becomes "this Saturday"), corrections ("actually he needs size 11, not 10"), and preference shifts ("I'm no longer interested in Adidas, let's focus on Nike"). Each of these triggers a different Memory Ops decision.
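The four Memory Ops outcomes can be sketched as a small state transition over a memory store. This is a simplified illustration: the `MemoryOp` enum, the `apply_op` function, and the dict-of-strings store are assumptions made for the example, and the decision about which op applies (made by the system described above) is taken as given.

```python
from enum import Enum


class MemoryOp(Enum):
    ADD = "add"
    UPDATE = "update"
    DELETE = "delete"
    SKIP = "skip"


def apply_op(memories: dict[str, str], op: MemoryOp, key: str, text: str = "") -> dict[str, str]:
    """Return a new memory store with one op applied; the input is not mutated.

    `key` is a hypothetical stable identifier for a memory.
    """
    updated = dict(memories)
    if op is MemoryOp.ADD:
        if key in updated:
            raise ValueError(f"memory {key!r} already exists; expected UPDATE or SKIP")
        updated[key] = text
    elif op is MemoryOp.UPDATE:
        if key not in updated:
            raise ValueError(f"memory {key!r} does not exist; expected ADD")
        updated[key] = text
    elif op is MemoryOp.DELETE:
        updated.pop(key, None)
    # MemoryOp.SKIP: the fact is already captured, so nothing changes.
    return updated


# Replaying the shoe-size example from the text.
store: dict[str, str] = {}
store = apply_op(store, MemoryOp.ADD, "mike_shoes",
                 "Adidas Ultraboost in size 10 for Mike's birthday")
store = apply_op(store, MemoryOp.UPDATE, "mike_shoes",
                 "Mike needs size 11 (Adidas runs small); size 10 was the original plan")
store = apply_op(store, MemoryOp.SKIP, "mike_shoes", "Mike needs size 11")
print(store["mike_shoes"])
```

Raising on a mismatched ADD or UPDATE is a design choice for the sketch: it surfaces a wrong upstream decision instead of silently overwriting a memory.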

We also built special handling for shopping behavior. When someone is browsing and exploring, their interests are additive. Jennifer looking at Nike shoes and then also looking at Adidas means she's interested in both. But when she explicitly says "let's focus on Nike only," that's a preference shift that should replace the previous interest, not add to it.
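The additive-versus-replacing rule for shopping interests can be illustrated with plain sets. This is a sketch under assumptions: `update_interests` is a hypothetical helper, and the `explicit_focus` flag stands in for whatever signal the system uses to detect a statement like "let's focus on Nike only".

```python
def update_interests(current: set[str], mentioned: set[str], explicit_focus: bool) -> set[str]:
    """Merge newly mentioned brands into the customer's interests.

    Browsing is additive; an explicit focus statement replaces what came before.
    """
    if explicit_focus:
        return set(mentioned)
    return current | mentioned


interests: set[str] = set()
interests = update_interests(interests, {"Nike"}, explicit_focus=False)
interests = update_interests(interests, {"Adidas"}, explicit_focus=False)
browsing = set(interests)  # interested in both brands while exploring

interests = update_interests(interests, {"Nike"}, explicit_focus=True)
print(sorted(browsing), sorted(interests))
```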

Memory Evolution: Nothing Gets Lost

Every time a memory updates, the previous version doesn't disappear. We maintain what we call evolution chains. Think of it like version control for human knowledge.

Here's a real chain from our testing:

Version 1: "Mike will receive black/white Adidas Ultraboost shoes in size 10 as a birthday gift"

Version 2: "...for his birthday on the upcoming Saturday"

Version 3: "...but he actually needs size 11 because Adidas runs smaller"

Version 4: "...size 11 was purchased; size 10 was originally planned"

Version 5: "Mike wears shoe size 11; the size 11 fit him well"

Each version links to the previous one. The active version is always the latest, but the full history is preserved. This matters for debugging, for compliance, and for understanding how the system learns over time.
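One way to picture an evolution chain is as a linked list in which each version points back to the one it replaced. The sketch below assumes that shape; `MemoryVersion`, `evolve`, and `history` are illustrative names, and the version texts are copied from the chain above, ellipses included.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MemoryVersion:
    """An immutable snapshot of one memory, linked to the version it replaced."""
    version: int
    text: str
    previous: Optional["MemoryVersion"] = None


def evolve(current: Optional[MemoryVersion], text: str) -> MemoryVersion:
    """Create the next version; the old one stays reachable through `previous`."""
    number = 1 if current is None else current.version + 1
    return MemoryVersion(version=number, text=text, previous=current)


def history(active: MemoryVersion) -> list[str]:
    """Walk the chain back from the active version and return texts oldest first."""
    texts = []
    node: Optional[MemoryVersion] = active
    while node is not None:
        texts.append(node.text)
        node = node.previous
    return list(reversed(texts))


active: Optional[MemoryVersion] = None
for text in [
    "Mike will receive black/white Adidas Ultraboost shoes in size 10 as a birthday gift",
    "...for his birthday on the upcoming Saturday",
    "...but he actually needs size 11 because Adidas runs smaller",
    "...size 11 was purchased; size 10 was originally planned",
    "Mike wears shoe size 11; the size 11 fit him well",
]:
    active = evolve(active, text)

print(active.version, active.text)
print(len(history(active)))
```

Because versions are frozen and only ever appended, reading the active memory never requires touching the history, while audits can still walk the full chain.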

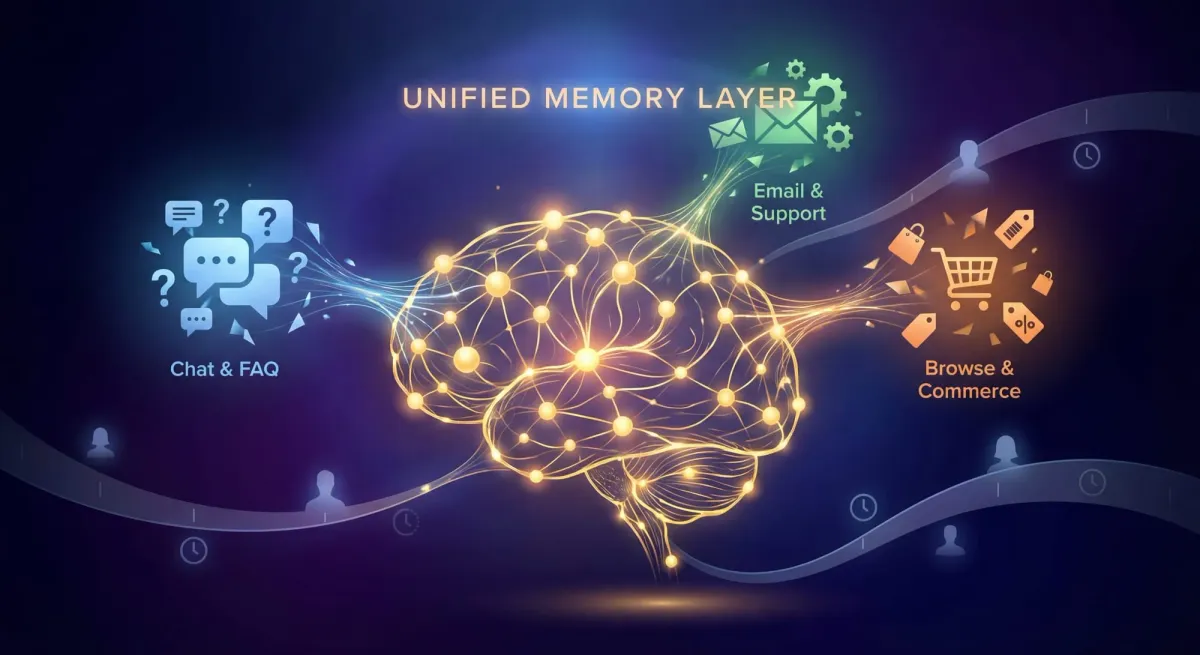

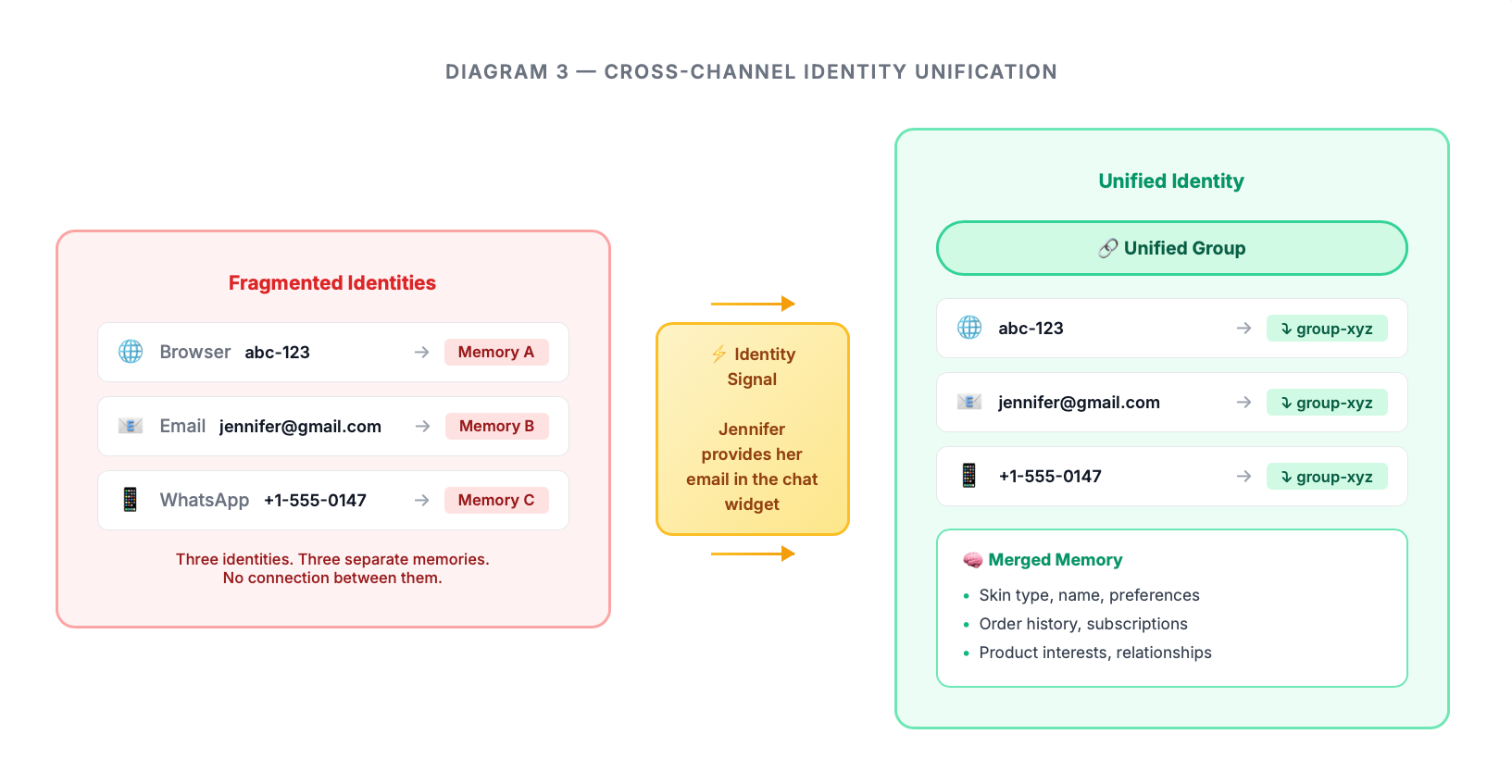

The Cross-Channel Magic

Here's where unified memory earns its name. The same customer interacts across different channels, often without even realizing they're talking to the same system. On the website, they're identified by an Alhena user identifier. On other channels they arrive under a different identity, such as an email address or a WhatsApp phone number. We built a unification layer that detects when these different identities belong to the same person.

The practical impact is that Jennifer can start a conversation on the chat widget, continue it over email, and pick it up on WhatsApp, and the AI maintains a continuous understanding of who she is and what she needs.
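Merging identities that turn out to belong to one person is a natural fit for a union-find (disjoint-set) structure. The sketch below uses that technique as an illustration and is not a description of Alhena's implementation; the `IdentityGraph` class, the `channel:value` identity format, and the sample identifiers are all assumptions. How a link between two identities is detected is out of scope here.

```python
class IdentityGraph:
    """Union-find over identity strings such as 'web:u_1' or 'email:j@example.com'."""

    def __init__(self) -> None:
        self._parent: dict[str, str] = {}

    def find(self, identity: str) -> str:
        """Return the canonical identifier for the person this identity belongs to."""
        self._parent.setdefault(identity, identity)
        root = identity
        while self._parent[root] != root:
            root = self._parent[root]
        # Path compression: point every node on the walked path straight at the root.
        while self._parent[identity] != root:
            next_identity = self._parent[identity]
            self._parent[identity] = root
            identity = next_identity
        return root

    def link(self, a: str, b: str) -> str:
        """Record that two identities are the same person; return the merged root."""
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self._parent[root_b] = root_a
        return root_a

    def same_person(self, a: str, b: str) -> bool:
        return self.find(a) == self.find(b)


graph = IdentityGraph()
graph.link("web:u_1", "email:jennifer@example.com")       # e.g. she gives her email in chat
graph.link("email:jennifer@example.com", "whatsapp:+15550100")

print(graph.same_person("web:u_1", "whatsapp:+15550100"))
print(graph.same_person("web:u_1", "web:u_2"))
```

Links are transitive in this model: the website and WhatsApp identities were never linked directly, yet they resolve to the same person through the shared email address.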

What Memory-Aware Agents Actually Do Differently

Memory doesn't just make conversations warmer with a "Welcome back, Jennifer!" It fundamentally changes how the AI operates.

- Skipping redundant questions. Our product recommendation agents use guided quizzes to help customers find the right products. "What's your skin type? What are your main concerns? What's your budget?" With memory, the agent checks what it already knows before asking each question. If memory says Jennifer has dry, sensitive skin, that question gets skipped entirely. The quiz becomes shorter and more respectful of the customer's time.

- Avoiding bad recommendations. When the agent knows Jennifer already purchased The Rice Wash, it won't recommend it again. When it knows she tried The Silk Serum and loved it, it can recommend complementary products. Memory turns generic recommendations into personal ones.

- Maintaining context across channels. When Jennifer emails about her order, the email agent already knows she has dry, sensitive skin, that she's building a routine for seasonal dryness and early fine lines, and that she's also shopping for a gift for her mom. It doesn't ask her to repeat any of this.

- Personalizing proactive outreach. Nudges become smarter when they know who they're talking to. Instead of a generic "Need help finding something?" a memory-aware nudge for Jennifer might reference the moisturizer she mentioned wanting during her last chat session.

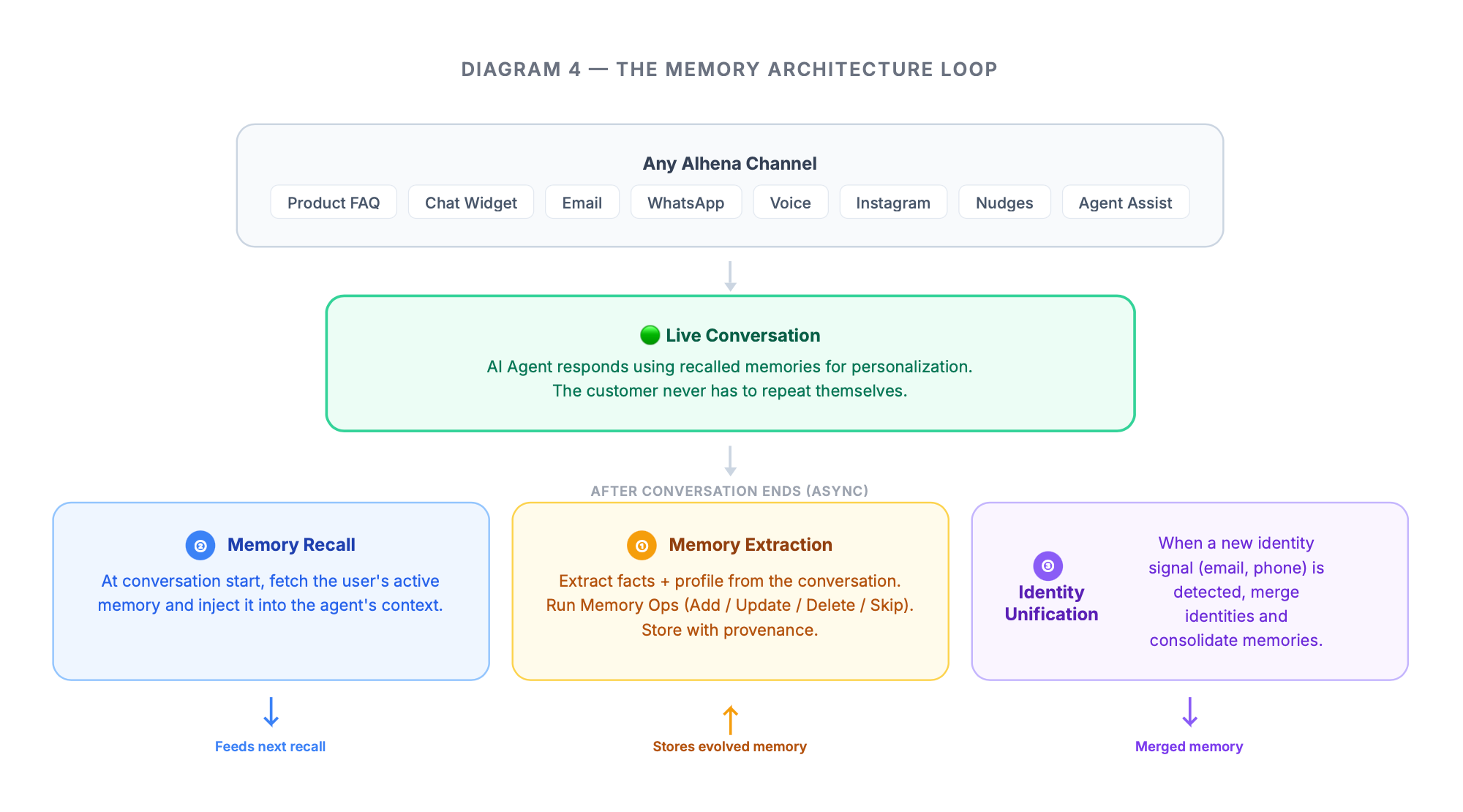
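The redundant-question check from the first point above can be sketched in a few lines. This assumes each quiz question is tied to a named slot and that memory can be queried as a slot-to-value mapping; the `QUIZ` list, slot names, and `remaining_questions` helper are hypothetical.

```python
# Each quiz question is paired with the memory slot that would answer it.
QUIZ = [
    ("skin_type", "What's your skin type?"),
    ("concerns", "What are your main concerns?"),
    ("budget", "What's your budget?"),
]


def remaining_questions(quiz: list[tuple[str, str]], known: dict[str, str]) -> list[str]:
    """Return only the questions whose answers are not already in memory."""
    return [question for slot, question in quiz if slot not in known]


jennifer_memory = {"skin_type": "dry, sensitive"}
to_ask = remaining_questions(QUIZ, jennifer_memory)
print(to_ask)
```

For Jennifer, the skin-type question drops out and the quiz starts at her concerns.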

The Architecture at a Glance

For the technically curious (without getting too deep into the weeds), the system has a few key layers:

- Extraction happens asynchronously after conversations end. An LLM reads the conversation and produces structured facts plus a profile update. This runs on a background queue so it never slows down the actual conversation.

- Storage uses a dual model. Structured profile data goes to a relational database and a vector database for fast, accurate lookups. The memory itself (the bullet-point list of everything the system knows about a person) is stored as a single evolving record with full provenance tracking, recording which channel, which conversation, and which specific messages contributed each piece of knowledge.

- Retrieval happens at the start of every new conversation. The system fetches the user's active memory and injects it into the agent's context, so the AI already knows who it's talking to before the first message is even processed.

- Unification runs whenever a new identity signal is detected. It finds all existing identities for the same person, merges them under a single identifier, and consolidates their memories.
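As a rough illustration of the storage and retrieval layers, the sketch below keeps provenance on each memory item and renders the active items into the agent's context. All names here (`MemoryItem`, `build_context`, the prompt wording, and the sample data) are assumptions for the example; the real prompt format and storage schema are not described in this post.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    """One remembered fact plus where it came from (provenance)."""
    text: str
    channel: str
    conversation_id: str
    message_ids: list[str]
    active: bool = True  # superseded versions are kept but marked inactive


def build_context(base_prompt: str, memory: list[MemoryItem]) -> str:
    """Append the customer's active memories to the agent's base prompt."""
    bullets = [f"- {item.text}" for item in memory if item.active]
    if not bullets:
        return base_prompt
    return base_prompt + "\n\nKnown about this customer:\n" + "\n".join(bullets)


# Hypothetical sample data for illustration only.
memory = [
    MemoryItem("Has dry, sensitive skin", "product_faq", "conv-1", ["msg-1"]),
    MemoryItem("Lives in Chicago", "chat", "conv-2", ["msg-3"]),
    MemoryItem("Is considering The Rice Wash", "product_faq", "conv-1", ["msg-1"], active=False),
]

prompt = build_context("You are a helpful skincare assistant.", memory)
print(prompt)
```

Filtering on `active` at retrieval time keeps superseded facts out of the agent's context while leaving them in storage for debugging and audits.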

A Full Journey

To make this concrete, here's what we observed in our end-to-end testing with a skincare brand.

Jennifer starts on a product page, browsing through the FAQ widget. Across four product questions, the system learns her name, skin type, age, location, profession, skincare goals, budget willingness, and that she's shopping for her mom. That's 11 distinct memories from a handful of product-page interactions.

She then opens the chat widget to build a full morning/evening routine. The agent greets her by name, already knows her skin concerns, and skips the usual intake questions. During this conversation, she mentions an interest in auto-delivery subscriptions and a preference for moisturizers with specific ingredients. Four more memories are added, evolving the existing ones where they overlap.

Finally, she sends an email about a recent order, wanting to add gift wrapping for her mom. The email agent knows about the mom's birthday (from the product FAQ conversation), knows her order history, and handles the request without Jennifer repeating a single piece of context.

16 memories. Three channels. One continuous understanding.

Why This Matters

The shift from stateless to memory-aware AI isn't just a feature upgrade. It changes the fundamental relationship between a brand and its customers.

When every interaction starts from zero, customers learn that the AI is a tool, not a relationship. They adjust their expectations downward. They repeat themselves. They keep their questions simple because they know the context won't carry over.

For a deeper look at how behavioral triggers, timing rules, and conflict resolution connect these touchpoints into one flow, see our guide to AI customer journey orchestration.

When the AI actually remembers, the dynamic shifts. Customers start treating it more like a trusted advisor. They share more. They come back more often. They expect (and receive) the kind of personal attention that used to require a dedicated human associate.

For brands, this means higher conversion on product recommendations, faster resolution on support tickets, and something harder to measure but equally valuable: customers who feel genuinely known.

That's what unified memory unlocks. Not smarter AI. Smarter relationships.