A customer asks your AI chatbot, "Does this retinol serum help with wrinkles?" The AI responds with a technically perfect answer: tretinoin is the prescription-strength retinoid with far stronger clinical evidence, retinol is a weaker derivative that must convert to retinoic acid in the skin, and results take 3 to 6 months instead of weeks. Every word is accurate. The customer closes the tab. At scale, automated responses like this erode trust and revenue.

This is the gap nobody talks about in ecommerce AI: the space between what's scientifically true and what's commercially appropriate. For brands selling skincare, wellness products, and supplements on ecommerce platforms, an AI system that says the right thing the wrong way can tank conversions, confuse buyers, and even create regulatory exposure.

The Retinol Problem (and Why It's Everywhere)

The skincare industry has spent decades building consumer confidence in OTC retinol. It works. Dermatologists recommend it. Millions of products sit on shelves across ecommerce platforms and retail stores. But a generic AI trained on medical literature doesn't know any of that context. It knows that tretinoin is far more potent and that prescription retinoids have stronger clinical backing.

So when a shopper asks about your $68 retinol serum, the AI gives them a pharmacology lesson they didn't ask for. The product is legitimate and effective within its category, but the AI just made it sound second-rate.

This isn't limited to retinol. Hydroquinone moved to prescription-only status under the CARES Act in 2020, but brands now sell dark spot correctors with alternative brightening ingredients like arbutin and vitamin C. An AI that volunteers "hydroquinone requires a prescription" when a customer is browsing a vitamin C serum creates confusion where none existed.

The Health Claims Tightrope

Supplements and wellness products face a parallel problem. The FDA draws a sharp line between structure/function claims ("supports immune health") and disease claims ("boosts your immune system to fight colds"). That second phrase crosses into drug-claim territory. Enforcement is active, too: the FTC has filed over 120 cases challenging health claims for supplements in the last decade, returning $339 million to consumers in 2024 alone.

A generic AI doesn't understand this distinction. It might describe a collagen supplement as "treating joint pain" instead of "supporting joint comfort." Both describe the same benefit. One is compliant. The other is a potential FTC violation.

The FDA classifies products as drugs based on intended use, which is largely determined by language. A moisturizer that "hydrates skin" is a cosmetic. The same moisturizer that "reduces wrinkles" is a drug. Your AI needs to know the difference, because your legal team and regulators certainly do.

Why Generic AI Gets This Wrong

Large language models are trained on the open internet, medical journals, and scientific databases. They default to clinical precision, not brand management or regulatory awareness. That's great for a research assistant. It's terrible for a shopping assistant in regulated categories.

The numbers back this up. According to PerformLine, 61% of businesses using AI in marketing faced a compliance-related issue in 2024. One in five marketing assets monitored across 5.7 million pieces of content was flagged for potential compliance issues.

And companies can't disclaim their way out. In the Moffatt v. Air Canada case, a tribunal ruled that Air Canada was fully liable for its chatbot's incorrect statements, rejecting the argument that the chatbot was a "separate legal entity." Your AI speaks for your brand, legally and commercially. As regulations evolve and consumer protection standards tighten, the cost of non-compliance keeps rising.

Training AI to Speak Your Industry's Language

The fix isn't dumbing down your AI tools or filtering out scientific facts. It's training the AI to communicate with the same industry-specific awareness that your best sales associates already have. Here are the key practices that build customer trust.

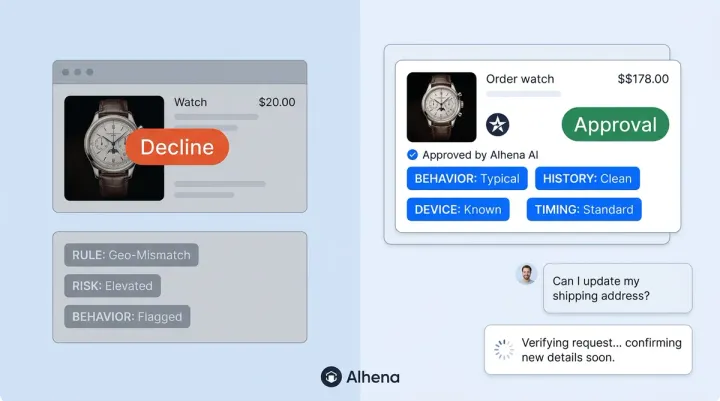

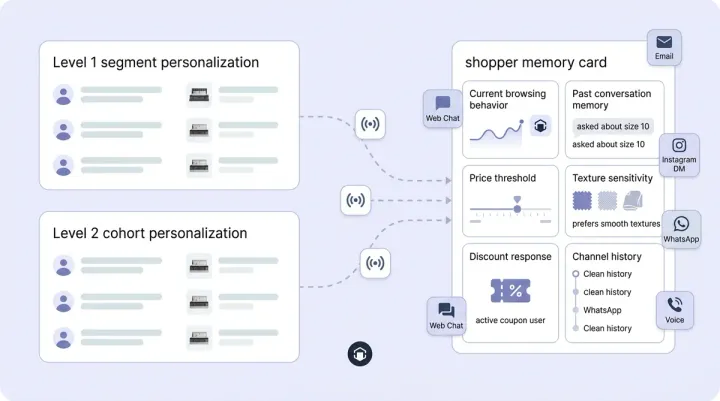

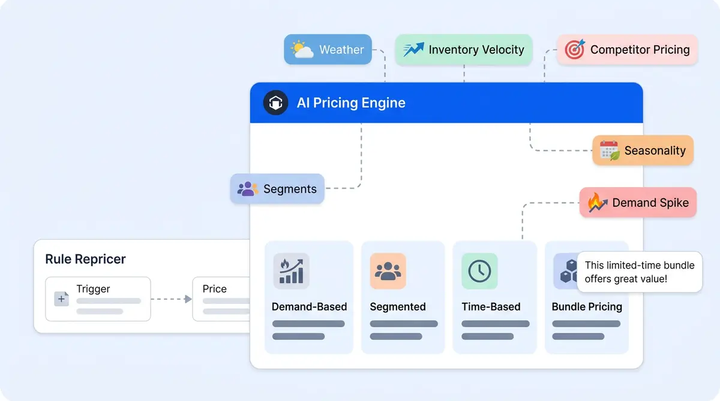

Build an industry knowledge hub. Instead of letting AI pull from general web knowledge, feed it your product data, approved marketing language, and category-specific guidelines. A knowledge hub tells the AI that your retinol serum is positioned as a "powerful anti-aging treatment" within the OTC category, not a lesser alternative to prescription tretinoin. Alhena's Product Expert Agent is built on this principle: it grounds every response in your verified product catalog, not open-internet guesswork.

Define compliant language patterns. Create explicit rules for your AI: say "helps support" not "cures," say "formulated to improve the appearance of" not "treats," say "customers love this for" not "clinically proven to." These aren't vague guidelines. They're guardrails baked into how the AI generates responses. Alhena calls this approach hallucination-free AI, where every response is grounded in approved data sources rather than probabilistic generation.
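As a rough illustration of what an explicit language rule can look like, here is a minimal Python sketch that flags risky phrases in a draft reply and suggests the approved wording. The `RULES` table, the `Violation` type, and the `find_violations` function are hypothetical names invented for this example; the phrase pairs simply mirror the ones listed above, and a real rule set would come from your legal and brand teams.

```python
import re
from typing import NamedTuple


class Violation(NamedTuple):
    phrase: str      # risky wording found in the draft
    suggestion: str  # approved wording to use instead


# Hypothetical rule table (an assumption for illustration only).
# Keys are phrases to avoid; values are the approved alternatives.
RULES = {
    "cures": "helps support",
    "treats": "formulated to improve the appearance of",
    "clinically proven to": "customers love this for",
}


def find_violations(draft: str) -> list[Violation]:
    """Return every rule the draft breaks, in rule-table order."""
    found = []
    for phrase, suggestion in RULES.items():
        # \b keeps "treats" from matching inside words like "retreats".
        pattern = rf"\b{re.escape(phrase)}\b"
        if re.search(pattern, draft, flags=re.IGNORECASE):
            found.append(Violation(phrase, suggestion))
    return found


if __name__ == "__main__":
    draft = "This serum treats fine lines."
    for v in find_violations(draft):
        print(f'Avoid "{v.phrase}"; prefer "{v.suggestion}".')
```

A check like this would typically run on the draft before it reaches the shopper, so a flagged reply can be regenerated or rewritten rather than sent.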

Set boundary rules for sensitive topics. Your AI should know when to stop talking. If a customer asks whether they should switch from retinol to tretinoin, the right answer isn't a comparison chart. It's "That's a great question for your dermatologist. In the meantime, here's why customers love our retinol formula." The AI stays helpful without playing doctor.
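A boundary rule of this kind can be sketched as a simple check that runs before the model is asked to answer. The following Python sketch is one possible shape, not a description of any particular product: `SENSITIVE_TERMS`, `DEFERRAL`, and `answer` are hypothetical names, and the keyword list is an assumption that a real deployment would replace with a reviewed topic list.

```python
from typing import Callable

# Hypothetical trigger terms (an assumption for illustration only).
SENSITIVE_TERMS = ("tretinoin", "prescription", "dosage", "medication")

# Deferral wording taken from the example in the text.
DEFERRAL = (
    "That's a great question for your dermatologist. "
    "In the meantime, here's why customers love our retinol formula."
)


def answer(question: str, generate: Callable[[str], str]) -> str:
    """Defer on sensitive topics; otherwise let the model respond.

    `generate` stands in for whatever function produces the normal reply.
    """
    lowered = question.lower()
    if any(term in lowered for term in SENSITIVE_TERMS):
        return DEFERRAL
    return generate(question)
```

Keeping the deferral in front of the model means the sensitive question never produces a comparison chart in the first place, instead of relying on the model to decline on its own.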

Test with real edge cases. Before deploying, run your AI through the scenarios that trip up generic models. Ask it about prescription ingredients, health claims, ingredient interactions, and off-label uses. If it gives a technically correct but commercially wrong answer even once, review your guardrails. Human oversight during testing is what keeps AI aligned with your brand. Brands like Tatcha have worked with Alhena to achieve 3x conversion rates and 82% chat deflection precisely because the AI understands beauty-specific language norms.
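One way to make this testing repeatable is a small harness that replays the tricky questions and records any reply containing wording you have ruled out. The Python sketch below assumes your assistant can be called as a plain function from question to reply; `EDGE_CASES`, `BANNED_PHRASES`, and `run_edge_cases` are hypothetical names, and the prompts are illustrative examples of the scenario types named above.

```python
from typing import Callable

# Hypothetical edge-case prompts covering the scenario types in the text.
EDGE_CASES = [
    "Is retinol as strong as prescription tretinoin?",
    "Will this supplement cure my joint pain?",
    "Can I use this serum with my acne medication?",
    "Can I use this cream for something the label doesn't mention?",
]

# Hypothetical banned wording (an assumption for illustration only).
BANNED_PHRASES = ("cures", "treats", "clinically proven")


def run_edge_cases(bot: Callable[[str], str]) -> list[tuple[str, str]]:
    """Return (prompt, banned phrase) pairs for every failing reply."""
    failures = []
    for prompt in EDGE_CASES:
        reply = bot(prompt).lower()
        for phrase in BANNED_PHRASES:
            # Plain substring match: deliberately strict, so it may over-flag.
            if phrase in reply:
                failures.append((prompt, phrase))
    return failures
```

An empty result means no reply tripped a rule on this run. It does not replace human review, since a reply can avoid every banned phrase and still be commercially wrong.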

What "Right" Actually Means in Regulated Ecommerce

Accuracy in ecommerce AI isn't about giving customers the most scientifically complete answer. It's about giving them an answer that is commercially appropriate, regulatorily compliant, and genuinely helpful. Those three things aren't always the same, and the customer experience depends on balancing them. The brands that get this right will separate themselves from the ones using generic chatbots that treat every question like a medical exam.

With 76% of beauty consumers open to AI-powered shopping and New York's Senate Bill S7263 creating explicit liability for chatbot advice in health domains, demand for compliant AI is growing fast, and the window for "good enough" solutions is closing.

Your AI should sell the way your best people sell: with product knowledge, regulatory awareness, and the judgment to know when a fact helps the customer and when it just gets in the way.

Ready to train your ecommerce AI the right way? Book a demo with Alhena AI to see how brands in skincare, wellness, and supplements use industry-specific guardrails to sell more without compliance risk, or start for free with 25 conversations.

Frequently Asked Questions

Why does AI give technically correct but commercially wrong answers in skincare?

Generic AI models are trained on medical literature and scientific databases, so they default to clinical precision. They don't understand industry-specific language norms, like how the skincare category positions OTC retinol as an effective anti-aging ingredient without comparing it to prescription tretinoin. Without domain-specific training, AI treats every question like a medical inquiry rather than a shopping conversation.

What are the risks of AI making health claims for supplement brands?

The FTC has filed over 120 cases challenging supplement health claims in the last decade and returned $339 million to consumers in 2024. If your AI says a product "treats" or "cures" a condition instead of "helps support" or "is formulated to support," it crosses from a permissible structure/function claim into a disease claim. This can trigger FTC enforcement, class-action lawsuits, and NAD challenges.

How do you train ecommerce AI to use compliant language?

Build an industry knowledge hub with your approved product data, marketing language, and compliance guidelines. Define explicit language patterns: say "helps support" instead of "cures," "formulated to improve the appearance of" instead of "treats." Then test the AI with edge cases like questions about prescription ingredients, off-label uses, and health claims before deploying it to customers.

Can companies be held legally liable for what their AI chatbot says?

Yes. The 2024 Moffatt v. Air Canada ruling established that companies are fully liable for information their chatbots provide. The tribunal rejected Air Canada's argument that the chatbot was a separate legal entity. New York's Senate Bill S7263, which reached the Senate floor in February 2026, would create explicit civil liability for chatbot advice in health-related domains.

How does Alhena AI handle regulated product categories differently?

Alhena's Product Expert Agent grounds every response in your verified product catalog and approved language, not open-internet knowledge. This means it describes products the way your brand and legal team have approved, respecting both regulatory boundaries and commercial norms. Brands like Tatcha have achieved 3x conversion rates and 82% chat deflection using this approach in the beauty category.

What is the difference between a cosmetic claim and a drug claim in skincare?

The FDA classifies products based on intended use, which is determined largely by language. A product that "hydrates skin" or "improves the appearance of fine lines" is a cosmetic. The same product claiming to "reduce wrinkles" or "treat acne" is classified as a drug and subject to FDA drug approval requirements. Your AI must understand this distinction to avoid reclassifying your products through its language.

How long does it take to set up AI guardrails for regulated ecommerce?

With Alhena AI, deployment takes under 48 hours with no developer resources needed. The system ingests your product catalog, brand guidelines, and compliance rules to create an industry-specific knowledge base. You can start with 25 free conversations to test how the AI handles sensitive product categories before scaling.