The Metric Most Brands Are Getting Wrong

Sixty-one percent of consumers are now using AI platforms for online shopping. That number keeps climbing. Brands tracking their AI visibility have started asking the right question: "Are we showing up?" But most stop too soon. They celebrate a citation, a URL appearing somewhere in an AI-generated answer, and call it a win.

It's not. Being mentioned is not the same as being rendered. And that gap is where revenue disappears.

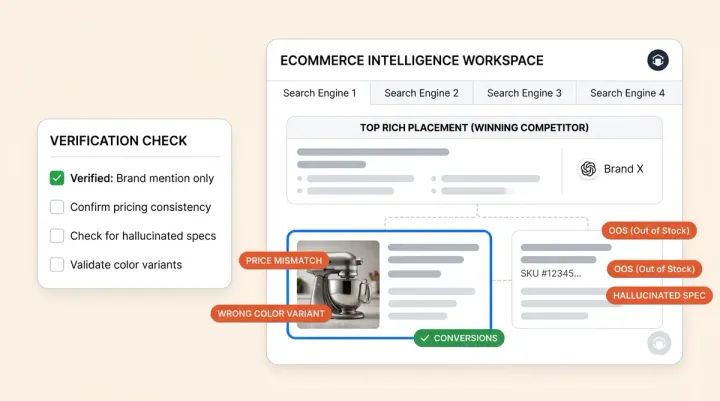

AI product rendering analysis reveals what actually happens after your product gets cited. Did the AI surface a full product card with your price, rating, image, and key attributes? Or did it bury your URL in a wall of text while a competing product got the rich card treatment? This post breaks down why product rendering quality now matters more than citation count, and how to fix it.

Citation vs. Rendering: The AI Visibility Gap

A citation means your product URL appeared in an AI-generated response. A rendering means the AI built a visual product card around your item, complete with image, pricing, star rating, and product attributes. These are two entirely different outcomes.

When a shopper asks an AI assistant for "the best vitamin C serum for sensitive skin," the response typically includes three to five product recommendations. Some appear as rich product cards with thumbnails, price points, ingredient highlights, and review scores. Others appear as plain-text mentions with a hyperlink. The products with full cards get the clicks. The text mentions get scrolled past.

Rich results earn 58 clicks per 100 queries compared to 41 for non-rich results. Links displaying star ratings get 20 to 30 percent more clicks than plain text links. On AI shopping surfaces, the gap is likely even wider, because these interfaces work as visual storefronts built around product cards rather than as pages of search results.

Brands celebrating AI mentions without checking how their products actually render are measuring the wrong metric entirely.

What Rendering Analysis Actually Measures

AI product rendering analysis goes deeper than presence detection. It answers specific questions about how each AI engine displays your products to shoppers:

- Was a product card rendered or just a text mention? A rich card with image, price, and rating converts at a fundamentally different rate than a buried link.

- Were pricing and ratings surfaced? If the AI showed your product without a price, shoppers have no reason to click through.

- Was the product positioned as premium, affordable, or best value? AI engines frame recommendations contextually. Your $85 serum might be labeled "the budget pick" or "the splurge-worthy option" depending on how the AI reads and interprets your data.

- Was the specific SKU referenced or just the brand? A brand mention attached to a recommendation for a different product delivers zero value for the SKU you want to sell. This is why SKU-level AI visibility matters more than brand-level tracking.

- Were key attributes like shade, size, ingredients, or compatibility displayed? Missing attributes mean missing conversions.

Alhena AI's rendering analysis tracks each of these dimensions across ChatGPT, Google AI Overviews, and Perplexity, giving brands SKU-level visibility into exactly how their products appear on every AI shopping surface.

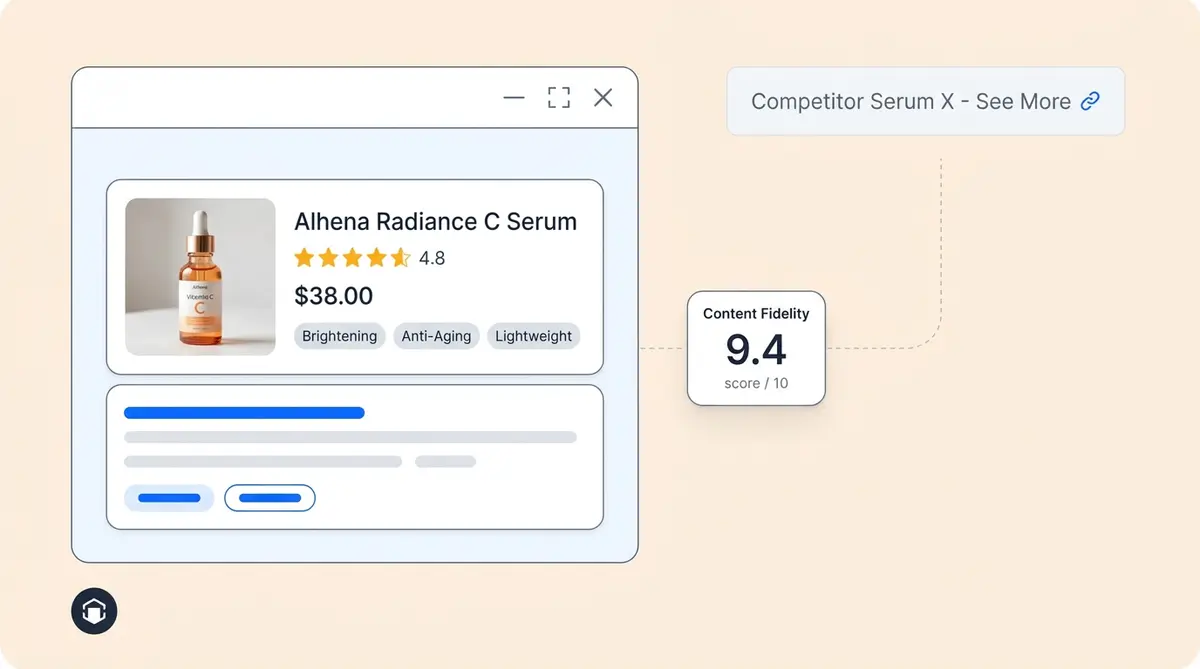

Two Products Cited, One Product Wins: Beauty Example

Picture two vitamin C serums competing for the same AI shopping query. Both brands have strong reviews. Both get cited in the AI response. But only one gets rendered well.

Serum A appears as a full product card: a clean product image, a $48 price tag, a 4.7-star rating from 2,300 reviews, and a short attribute list showing "15% L-ascorbic acid, fragrance-free, suitable for sensitive skin." The shopper sees everything needed to make a decision without leaving the AI interface.

Serum B shows up as a text mention: "Brand B also offers a popular vitamin C serum." No image. No price. No rating. No ingredient breakdown. Just a hyperlinked brand name buried in a paragraph.

Both products were "mentioned" in the AI answer. Only one was rendered in a way that drives clicks. The difference comes down to structured data completeness, product feed richness, and review signal depth. Serum A's brand invested in those signals. Serum B's brand didn't, and they're invisible in practice despite being technically cited. For a deeper look at how ChatGPT constructs these product displays, see our guide to ChatGPT rendering analysis.

The Same Gap in Electronics

The pattern repeats across categories. A shopper asks an AI assistant for "the best laptop for video editing under $1,500." Two models get recommended.

Laptop A renders as a product card showing a 16-inch Retina display, M-series chip, 32GB RAM, 512GB SSD, 4.8-star rating, and $1,399 price. The card surfaces exactly the specs the shopper's query asked about, so it reads like a product page embedded in the AI interface.

Laptop B gets a single sentence: "You might also consider [Brand B's] latest model." No specs. No price. No image. The shopper would need to click away, find the product page, and compare manually. Most won't bother.

As we covered in our analysis of Google AI Overviews and ecommerce ranking, pages with structured data get cited 3.1x more often in AI responses. But citation alone doesn't guarantee a rich card. The quality and completeness of your product data determines whether the AI renderer can build a compelling visual presentation or falls back to a generic text mention.

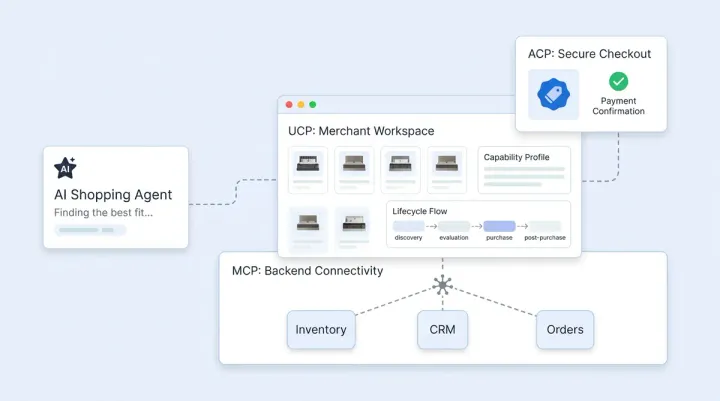

How Each AI Platform Renders Products Differently

Not all AI shopping surfaces treat product rendering the same way. Each platform's renderer pulls from different data sources, applies different display logic, and presents products in its own visualization format. Understanding these differences is the first step to fixing how your products show up.

ChatGPT Shopping

ChatGPT runs two parallel processes for every shopping query. One generates the written narrative answer. The other pulls product data from Google Shopping feeds and renders visual carousel cards with images, prices, and direct purchase links. The top recommendations match Google Shopping's top three results about 75 percent of the time. If your product feed isn't in Google Merchant Center with complete attributes, you won't appear in ChatGPT's visual cards even if the text response mentions your brand.

Open-ended prompts like "I need a moisturizer for dry skin" trigger shopping cards 12.1 percent of the time, while brand-specific prompts trigger them only 3.1 percent of the time. That roughly fourfold gap suggests ChatGPT's product rendering favors discovery queries, where attribute-rich product data can shine.

Google AI Overviews

Google's AI Overviews pull from the Shopping Graph, a dataset of more than 50 billion product listings. Product panels are constructed dynamically and can be reordered during follow-up questions. Google is also notably less brand-forward than other platforms: it includes brand mentions in only 6.2 percent of ecommerce responses, compared to 99.3 percent for ChatGPT.

Your product needs strong structured data and a high Google data quality score (90 to 100 percent) to earn a rendered card instead of a text mention. Products scoring below 50 percent on data quality get minimal AI recommendations regardless of brand strength or organic ranking position.

Perplexity

Perplexity displays product cards with detailed pros-and-cons breakdowns extracted from reviews and expert guides. Cards are unsponsored and include reasoning for each recommendation. The platform cites brands in 85.7 percent of responses and draws from over 8,000 unique domains, giving it the broadest source diversity of any AI shopping surface.

Products with rich review data and complete attribute markup tend to render better on Perplexity than on other platforms. A product visible on Perplexity may be invisible on ChatGPT, or rendered as a full card on one and a bare link on another. Alhena AI monitors rendering across all three platforms simultaneously so you can spot these cross-platform gaps.

The Revenue Cost of Poor Rendering

The financial impact of rendering quality is measurable. ChatGPT referral traffic converts at 1.81 percent compared to 1.39 percent for organic search, roughly a 30 percent relative lift. Revenue per session from AI referrals runs 10.3 percent higher than non-branded organic. But these numbers only apply to products that earned the click through strong rendering.
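The lift is easy to recompute from the two quoted conversion rates. This is plain arithmetic on the figures in the paragraph above; the 10,000-session volume is a hypothetical round number chosen only to make the difference tangible.

```python
def relative_lift(treatment_rate: float, baseline_rate: float) -> float:
    """Relative lift of one conversion rate over another, as a fraction."""
    return (treatment_rate - baseline_rate) / baseline_rate


chatgpt_cr = 0.0181   # ChatGPT referral conversion rate (from the text)
organic_cr = 0.0139   # organic search conversion rate (from the text)

lift = relative_lift(chatgpt_cr, organic_cr)
print(f"relative lift: {lift:.1%}")   # about 30 percent

sessions = 10_000     # hypothetical traffic volume for illustration
chatgpt_orders = round(sessions * chatgpt_cr)
organic_orders = round(sessions * organic_cr)
print(f"orders per {sessions:,} sessions: {chatgpt_orders} vs {organic_orders}")
```

At that volume the gap is 181 versus 139 orders, which only materializes for products rendered well enough to be clicked in the first place.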

Shopping queries on AI platforms grew 4,700 percent between 2024 and 2025. The AI-powered ecommerce software market reached $9 billion in 2025 and is projected to hit $64 billion by 2034. Brands that don't invest in rendering quality now are leaving compounding revenue on the table as these platforms scale.

Stores with 99.9 percent attribute completion see three to four times higher visibility in AI recommendations compared to stores with sparse data. That's not a marginal improvement. That's the difference between being a featured product and being invisible. The Alhena AI ROI calculator can help you estimate what better AI rendering quality means for your specific revenue numbers.

Why Traditional SEO Doesn't Predict AI Rendering

Over 30 percent of AI Overview citations come from pages ranking beyond position 100 in traditional search. Domain authority correlation with AI citations has dropped to near zero. A top-three organic position gives you only an 8 percent chance of being cited in an AI response.

This means your Google rankings tell you almost nothing about your AI rendering quality. A page that ranks first for "best vitamin C serum" might not appear in any AI shopping response for that same query. The signals that matter for AI product rendering, including complete structured data, rich product feeds, review depth, and attribute granularity, are different from the signals that drive traditional search rankings.

Fewer than 10 percent of the sources cited across major AI platforms rank in the top 10 organic search results for the same query. Brands need a separate strategy for AI product rendering quality, and that strategy starts with understanding how their products currently appear to shoppers on AI surfaces. AI visibility tools built for ecommerce can surface the gaps that traditional SEO dashboards miss entirely.

Rendering Quality Is Now a Conversion Factor

AI shopping surfaces are becoming visual storefronts. ChatGPT displays AI-powered product carousels with images and buy links. Google AI Overviews pull product panels from the Shopping Graph. Perplexity shows product cards with pros-and-cons breakdowns extracted from reviews.

Shoppers using AI for product discovery behave like they're browsing a store, not reading search results. They scan cards, compare prices, check ratings, take screenshots for comparison, and click the product that looks most complete, trustworthy, and visually appealing. Visitors referred from AI-based search platforms convert at 9.84 percent, roughly three times the typical ecommerce rate. But that conversion advantage only applies to products rendered well enough to earn the click.

The AI shopping assistant landscape rewards data richness, not just data presence.

How Product Imagery and 3D Rendering Feed AI Product Cards

AI shopping surfaces don't just pull text data to build product cards. They also draw on the visual assets tied to your products. Brands investing in high-quality 3D rendering and photorealistic product images can gain an advantage in how AI engines render their products.

When an AI engine builds a product card, it selects the best available image from your product feed. If your feed includes only a single flat-lay photo, the card looks sparse. If it includes photorealistic 3D renders showing the product from multiple angles, with lifestyle scenes, context, and detail shots with proper lighting, the AI has more to work with. The result is a richer, more engaging product card that captures attention in a crowded AI shopping carousel.

3D models are becoming especially important for categories like furniture, electronics, and beauty. Brands that use 3D rendering and CAD-based product models to create clean, high-resolution product visuals give ChatGPT, Google, and Perplexity stronger images to build cards from. AI product card generation tends to favor images that are clear, properly sized, and contextually rich.

This is why product visualization matters at the AI level, not just on your own product pages. Your product imagery, including 3D renders, feeds directly into how AI tools display your products to shoppers. A crisp, photorealistic hero image on a clean background will typically outperform a blurry lifestyle photo in an AI product card. Brands that treat their product feed images as an early investment in AI visibility stand to see compounding returns as AI shopping surfaces grow.

High-end products benefit most from this approach. A luxury skincare brand with professional 3D renders showing texture, packaging detail, and ingredients is better positioned to render as a premium pick in AI answers. A competitor relying on low-resolution screenshots or sketch-quality images risks being relegated to a text mention. Creative control over your product imagery isn't just a branding exercise anymore. It's a rendering quality factor that AI tools weigh when deciding how to display your product in a browser-based shopping experience.

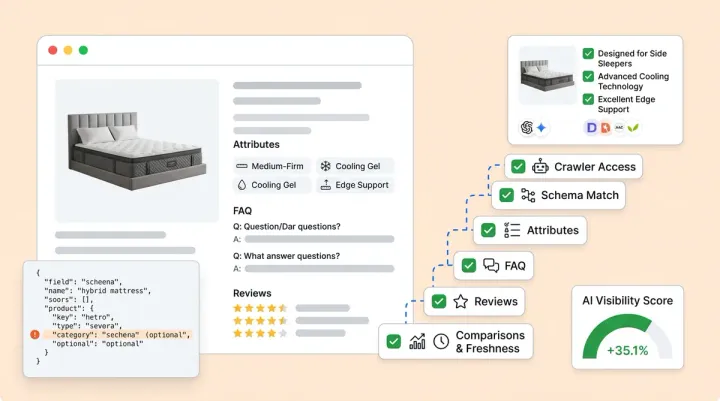

How to Improve Your Rendering Quality

Rendering quality isn't random. It's driven by specific, fixable data signals. Here's where to focus:

Structured Data Completeness

Build your data architecture around full Product schema markup including name, description, image, brand, SKU, GTIN, price, currency, availability, and AggregateRating. Pages with thorough schema are 36 percent more likely to appear in AI-generated summaries. Don't stop at the minimum fields. Every optional attribute you add gives the AI more material to build a rich card.
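Here is a minimal sketch of the markup side, assuming you generate JSON-LD server-side in Python and embed the output in a `<script type="application/ld+json">` tag on the product page. The property names (`offers`, `priceCurrency`, `availability`, `aggregateRating`, `additionalProperty`, `gtin13`) are standard schema.org vocabulary; the `product_jsonld` helper, the Serum A values, the all-zero placeholder GTIN, and the example.com URLs are illustrative only.

```python
import json


def product_jsonld(name, description, image_urls, brand, sku, gtin13,
                   price, currency, in_stock, rating, review_count,
                   attributes=None):
    """Build a schema.org Product object and return it as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "image": list(image_urls),  # several angles, not a single photo
        "brand": {"@type": "Brand", "name": brand},
        "sku": sku,
        "gtin13": gtin13,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": ("https://schema.org/InStock" if in_stock
                             else "https://schema.org/OutOfStock"),
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    # Optional attributes (ingredients, skin type, etc.) as PropertyValue pairs.
    if attributes:
        data["additionalProperty"] = [
            {"@type": "PropertyValue", "name": k, "value": v}
            for k, v in attributes.items()
        ]
    return json.dumps(data, indent=2)


# Values for "Serum A" from the beauty example; GTIN and URLs are placeholders.
markup = product_jsonld(
    name="Serum A Vitamin C Serum",
    description="15% L-ascorbic acid serum for sensitive skin.",
    image_urls=["https://example.com/serum-a-front.jpg",
                "https://example.com/serum-a-side.jpg"],
    brand="Serum A",
    sku="SERUM-A-30ML",
    gtin13="0000000000000",
    price=48,
    currency="USD",
    in_stock=True,
    rating=4.7,
    review_count=2300,
    attributes={"L-ascorbic acid": "15%", "Fragrance": "Fragrance-free",
                "Skin type": "Sensitive"},
)
print(markup)
```

The `additionalProperty` list is where the "optional attributes" go: each extra name/value pair is one more fact an AI renderer could surface on the card.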

Product Feed Richness

Your product feed is your new storefront. Include detailed titles with key attributes (size, color, material); professional, high-resolution product images from multiple angles; complete product descriptions; video content; and answers to common shopper questions. Image quality directly affects whether AI engines render your product as a card or skip it. AI engines pull directly from feeds to construct cards, so sparse feeds produce sparse cards. Our breakdown of product data fields for AI shopping covers which attributes matter most.
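A simple pre-submission check can catch sparse feed rows before they reach Merchant Center. This sketch assumes feed items are Python dicts keyed by Google Merchant Center attribute names (`image_link`, `additional_image_link`, `gtin`, and so on); the choice of required fields, the two-extra-images threshold, and the `audit_feed_item` helper are assumptions for illustration, not Google's own validation rules.

```python
# Fields treated as required here; names follow Merchant Center product attributes.
CORE_FIELDS = ["title", "description", "image_link", "price",
               "availability", "brand", "gtin"]
# Attributes that, when present, should also appear in the title.
TITLE_ATTRIBUTES = ["color", "size", "material"]


def audit_feed_item(item: dict, min_extra_images: int = 2) -> list[str]:
    """Return human-readable issues that would make a feed row sparse."""
    issues = []
    for name in CORE_FIELDS:
        if not item.get(name):
            issues.append(f"missing {name}")

    extra_images = item.get("additional_image_link", [])
    if len(extra_images) < min_extra_images:
        issues.append(f"only {len(extra_images)} additional image(s); "
                      f"want {min_extra_images}+")

    title = item.get("title", "").lower()
    for attr in TITLE_ATTRIBUTES:
        value = item.get(attr)
        if value and value.lower() not in title:
            issues.append(f"title does not mention {attr} '{value}'")
    return issues


item = {
    "id": "SERUM-A-30ML",
    "title": "Serum A Vitamin C Serum",
    "description": "15% L-ascorbic acid, fragrance-free, for sensitive skin.",
    "image_link": "https://example.com/serum-a-front.jpg",
    "additional_image_link": ["https://example.com/serum-a-side.jpg"],
    "price": "48.00 USD",
    "availability": "in_stock",
    "brand": "Serum A",
    "size": "30 ml",
}
for issue in audit_feed_item(item):
    print(issue)
```

For this row the check flags the missing GTIN, the single extra image, and a title that omits the size, each of which is a field an AI engine could otherwise use to build a fuller card.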

Review Signal Depth

Star ratings alone aren't enough. AI engines extract specific claims from review text to populate product cards. "Quiet enough for apartment living" or "perfect for sensitive skin" become the attribute snippets that appear on cards. Encourage detailed reviews, and make sure your review markup is crawlable.

Attribute-Level Optimization

Map your product attributes to the specific queries shoppers ask. For beauty products, that means shade range, ingredient percentages, skin type compatibility, and fragrance status. For electronics, it means processor specs, RAM, storage, display resolution, and battery life. The more granular your attribute data, the more complete your AI product cards become. Brands investing in GEO for product brands are already seeing the payoff in richer AI product cards.
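One way to operationalize this mapping is to keep a per-category list of query-relevant attributes and score each product against it. In this sketch the `CATEGORY_ATTRIBUTES` table simply mirrors the beauty and electronics examples above; the attribute keys and the `attribute_coverage` helper are hypothetical, and a real catalog would need its own mapping. The coverage fraction also works as a rough proxy for the attribute-completion rate discussed earlier.

```python
# Query-relevant attributes per category, mirroring the examples in the text.
CATEGORY_ATTRIBUTES = {
    "beauty": ["shade_range", "ingredient_percentages",
               "skin_type", "fragrance_free"],
    "electronics": ["processor", "ram", "storage",
                    "display_resolution", "battery_life"],
}


def attribute_coverage(product: dict, category: str) -> tuple[float, list[str]]:
    """Return (share of expected attributes filled, list of missing ones)."""
    expected = CATEGORY_ATTRIBUTES[category]
    # None, empty strings, and empty lists count as missing; False does not,
    # so "fragrance_free": False still counts as a filled attribute.
    missing = [a for a in expected if product.get(a) in (None, "", [])]
    return 1 - len(missing) / len(expected), missing


# Laptop A from the electronics example, with display resolution and
# battery life not yet filled in.
laptop_a = {"processor": "M-series", "ram": "32GB", "storage": "512GB SSD"}
coverage, missing = attribute_coverage(laptop_a, "electronics")
print(f"{coverage:.0%} covered, missing: {missing}")
```

Sorting products by this score is a simple way to decide which attribute gaps to fill first.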

Alhena AI tracks rendering quality across all major AI shopping surfaces, showing you exactly which products render as rich cards and which fall flat. The platform flags missing attributes, pricing gaps, and positioning issues so you can iterate on them before they cost you clicks. With SKU-level rendering analysis, you see how each product appears on ChatGPT, Google, and Perplexity, not just whether it was mentioned.

Stop Measuring Mentions. Start Measuring Product Rendering.

The brands winning on AI shopping surfaces aren't just the ones getting cited. They're the ones whose products render with full cards: image, price, rating, specs, and attributes all visible inside the AI interface. That's what drives clicks. That's what drives conversions.

If your AI visibility strategy stops at "were we mentioned," you're missing the metric that actually matters. Alhena AI's rendering analysis shows you exactly how your products appear, not just where they appear, giving you the data to turn AI citations into AI conversions.

Ready to see how your products actually render across AI shopping surfaces? Book a demo with Alhena AI or start free with 25 conversations.

Frequently Asked Questions

What is AI product rendering analysis and how does it differ from citation tracking?

AI product rendering analysis measures how your products visually appear inside AI shopping answers, not just whether a URL was included. Alhena AI tracks whether each SKU renders as a full product card with image, price, and ratings or appears as a plain text mention. This distinction matters because shoppers engage with rich cards, not buried links, making rendering quality a direct driver of clicks and revenue from AI surfaces.

How do AI visibility product cards affect ecommerce conversion rates?

Products rendered as rich AI visibility product cards with pricing, ratings, and attributes earn significantly more clicks than text-only mentions. AI-referred visitors already convert at nearly 3x the typical ecommerce rate, but only when the product card gives shoppers enough information to act. Alhena AI helps brands identify which SKUs render well and which need product data improvements to capture that conversion lift.

Which product data fields matter most for rendering quality on ChatGPT and Google AI?

The highest-impact fields include product images, price and currency, aggregate ratings with review count, GTIN or SKU identifiers, and detailed attributes like size, color, material, and compatibility. Alhena AI flags specific missing fields per product and per AI engine so brands can prioritize the data gaps that directly reduce their rendering quality on AI shopping surfaces.

Can a product be cited in an AI answer but still lose the sale to a better-rendered competitor?

Yes, and this happens constantly. Two products can appear in the same AI response, but the one with a complete product card (image, price, specs, rating) wins the click while the text-only mention gets ignored. Alhena AI shows you exactly when this happens at the SKU level, so you can take control of the data gaps before they cost you revenue on AI shopping platforms.

How does Alhena AI help brands improve product card optimization across AI shopping surfaces?

Alhena AI monitors rendering quality across ChatGPT, Google AI Overviews, and Perplexity for every tracked SKU. It identifies whether each product renders as a rich card or text mention, checks if pricing and ratings are displayed, flags missing attributes, and tracks competitive positioning. Brands use these insights to prioritize structured data fixes and product feed improvements that directly increase their AI visibility and conversion rates.