Consumer trust research reveals a tension that ecommerce brands of every size have to reconcile. On one side, shoppers who prefer knowing they're talking to AI say it sets honest, ethical expectations and feels more transparent. On the other side, consumer data shows that shoppers told upfront they're chatting with a bot sometimes convert at lower rates, perceiving the communication as impersonal or less capable. Meanwhile, the regulatory environment is moving decisively toward mandatory notification. The EU AI Act is law. US states are following. The challenge is no longer whether to disclose but how to disclose in a way that builds trust rather than undermining it.

The Case for Full AI Transparency in Ecommerce

Three arguments make a strong case for telling shoppers exactly what they're interacting with.

Regulatory Compliance Is Non-Negotiable

The EU AI Act requires that users be informed when they are interacting with an AI system. Enforcement timelines are already active for some provisions and expanding through 2026, with penalties reaching up to 35 million euros or 7% of global annual turnover. Several US states have proposed or passed similar transparency requirements, including California's Bot Disclosure Act (AB410), which is already in effect, and Colorado's AI Act, which takes effect in mid-2026. Businesses operating internationally should treat AI disclosure as a baseline compliance posture, one that aligns with consumer protection standards and protects both the business and its customers, not as an optional philosophy.

Trust Through Honesty

Research shows that 74% of consumers surveyed say they appreciate brands being upfront about AI use. Disclosure sets accurate expectations and prevents the backlash that occurs when consumers feel deceived after realizing they were talking to a bot pretending to be human. A 2025 study published in Organizational Behavior and Human Decision Processes found that across 13 experiments, voluntary AI disclosure reduced trust slightly, but third-party exposure of undisclosed AI use caused far greater trust damage. Getting caught hiding AI is worse than being transparent about it.

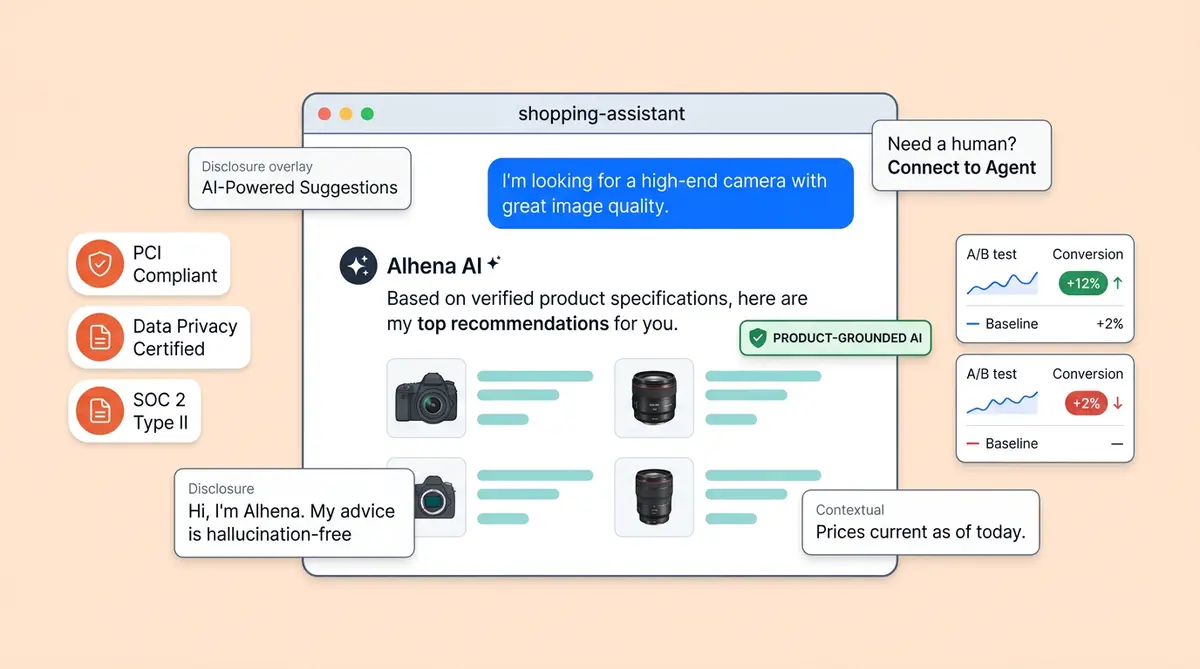

Quality Signal, Not Warning Label

When your AI is genuinely accurate, helpful, and capable, disclosure becomes a strength. Telling a shopper "I'm an AI assistant trained on our full product catalog" signals competence, 24/7 availability, and clear benefits rather than limitation. Brands using Alhena AI's Shopping Assistant have seen 3x conversion rates and 38% AOV uplift with full transparency, because the AI consistently delivers correct, product-grounded answers that customers and clients trust. (See the Tatcha case study.)

The Case Against Aggressive AI Disclosure

The counterarguments and challenges deserve honest consideration. Dismissing them leads to poorly designed disclosure that hurts the shopper experience.

Priming Bias

Studies show that telling shoppers "you're talking to a bot" before the conversation starts can prime them to evaluate responses more critically and forgive errors less readily than they would for a human agent. The label creates a mental framework that works against the AI even when responses are objectively good. A landmark study in Marketing Science (2024 analysis) found that pre-conversation chatbot notification reduced purchase rates by 79.7% in outbound sales contexts, though the effect was significantly smaller in inbound customer service interactions where the shopper initiated the conversation.

Conversion Friction

Some A/B tests show 5 to 15% lower engagement rates when disclosure appears as the first message versus appearing subtly in the interface. Yet the phrasing and timing of identification matter as much as the fact of disclosure itself. A blunt "You are chatting with a robot" creates a different reaction than "I'm your AI shopping assistant, and I know every product in our catalog." Same information, very different framing.

Irrelevant for High-Quality AI

If the AI delivers accurate, personalized, brand-aligned responses, some believe that identification is beside the point. The shopper cares about getting the right answer through ethical, responsible AI, not whether a human or AI provided it. When Alhena AI's Support Concierge resolves inquiries with 90% CSAT and 63% automated resolution, the distinction between human and AI fades because the outcome is the same: the shopper got exactly what they needed. (See the Puffy results.)

Why Regulation Settles the Debate

Regardless of where you land on the conversion argument, global regulation is moving toward mandatory AI disclosure. The EU AI Act is law. California's bot disclosure requirements are already enforceable. Colorado, Illinois, and other US states are proposing and advancing similar disclosure requirements. The FTC treats concealment of AI as a potentially deceptive trade practice under its existing Section 5 authority.

Brands must build disclosure into their AI experience now to avoid the scramble of retrofitting compliance later. For marketers and ecommerce leaders, the strategic question shifts from "should we disclose?" to "how do we disclose in a way that builds trust and protects conversion?" That shift is where the real competitive advantage lives.

Four AI Transparency Approaches With Copy Templates

Not every notification approach carries the same conversion impact. Here are four options, each with ready-to-use copy you can adapt to your brand voice.

Approach 1: Subtle Interface Label

A small label on the chat widget reads "Powered by AI" or "AI Shopping Assistant", with no separate notification message inside the conversation. This satisfies basic transparency without interrupting the experience. It works well for brands whose shoppers are already comfortable with AI interactions.

Template: Widget label: "AI Shopping Assistant" | No first-message disclosure

Approach 2: Confident Introduction

The AI's first message includes an identity statement framed as capability, not caveat.

Template: "Hi, I'm [Brand Name]'s AI shopping assistant. I know our full catalog and can help you find exactly what you need. What are you looking for today?"

This frames AI as a feature. The shopper reads "I know our full catalog" and thinks expertise, not limitation.

Approach 3: Hybrid Disclosure

The AI introduces itself as AI and immediately follows with a value statement and a human option.

Template: "I'm an AI assistant trained on our products. I can help with recommendations, sizing, and orders. If you'd prefer a person, just say 'connect me to an agent.'"

This satisfies regulations and requirements while giving the shopper control. It works especially well for companies in regulated verticals or those selling to EU customers where the AI Act applies.

Approach 4: Contextual Notification

The AI does not lead with disclosure but identifies itself honestly when directly asked "am I talking to a real person?"

Template (when asked): "I'm an AI assistant, but I have access to our full catalog and real-time order data. Most shoppers find I can help faster than waiting for an agent. Would you like to continue, or shall I connect you with a person?"

Note: This approach may not satisfy the EU AI Act or California's Bot Disclosure Act, both of which require proactive notification. Use it only in jurisdictions without active chatbot disclosure mandates, and plan to upgrade before August 2026.
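The four approaches above can be captured as configuration so that the chat front end and the test harness read from one place. What follows is a minimal sketch, not any vendor's API: the `DisclosureApproach` class, its field names, and the `allowed_approaches` helper are all hypothetical. Treating a widget label or a first-message statement as "proactive" is an assumption of this sketch, not legal guidance.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class DisclosureApproach:
    """One disclosure option (hypothetical structure for illustration)."""
    name: str
    widget_label: Optional[str] = None        # label shown on the chat widget
    first_message: Optional[str] = None       # opening message that discloses AI
    on_request_message: Optional[str] = None  # reply to "am I talking to a real person?"

    @property
    def is_proactive(self) -> bool:
        # Assumption: the shopper sees the disclosure without having to ask.
        return self.widget_label is not None or self.first_message is not None


APPROACHES = [
    DisclosureApproach("subtle_label", widget_label="AI Shopping Assistant"),
    DisclosureApproach(
        "confident_intro",
        first_message=(
            "Hi, I'm {brand}'s AI shopping assistant. I know our full catalog "
            "and can help you find exactly what you need. "
            "What are you looking for today?"
        ),
    ),
    DisclosureApproach(
        "hybrid",
        first_message=(
            "I'm an AI assistant trained on our products. I can help with "
            "recommendations, sizing, and orders. If you'd prefer a person, "
            "just say 'connect me to an agent.'"
        ),
    ),
    DisclosureApproach(
        "contextual",
        on_request_message=(
            "I'm an AI assistant, but I have access to our full catalog and "
            "real-time order data. Would you like to continue, or shall I "
            "connect you with a person?"
        ),
    ),
]


def opening_message(approach: DisclosureApproach, brand: str) -> Optional[str]:
    """Fill in the brand name, or return None if the approach has no opener."""
    if approach.first_message is None:
        return None
    return approach.first_message.format(brand=brand)


def allowed_approaches(requires_proactive: bool) -> List[DisclosureApproach]:
    """Drop non-proactive approaches where proactive notification is required."""
    return [a for a in APPROACHES if a.is_proactive or not requires_proactive]
```

Calling `allowed_approaches(True)` for a storefront that serves EU shoppers leaves the first three approaches and excludes the contextual one, which mirrors the note above.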

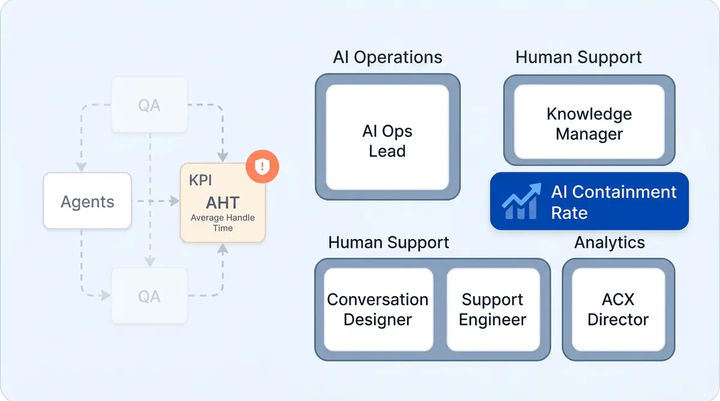

A/B Testing Framework for Notification Impact on Conversions

Don't guess which approach works for your audience. Test it. Here's a framework that gives you clean, actionable data for analysis.

Test structure: Run each of the four approaches against a no-notification control for 4 weeks with at least 1,000 conversations per variant. Four weeks accounts for weekday and weekend traffic patterns, promotional cycles, and seasonal variation.
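One way to split traffic for this test structure is deterministic bucketing, so a returning visitor always sees the same variant for the whole four weeks. The sketch below assumes you have a stable visitor identifier. The variant names, the `assign_variant` function, and the experiment key are hypothetical.

```python
import hashlib

# Control plus the four disclosure approaches (names are illustrative).
VARIANTS = ["control", "subtle_label", "confident_intro", "hybrid", "contextual"]


def assign_variant(visitor_id: str, experiment: str = "ai-disclosure-v1") -> str:
    """Map a visitor to a variant; the same inputs always give the same variant."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Interpret the hash as an integer and spread it evenly across variants.
    return VARIANTS[int(digest, 16) % len(VARIANTS)]
```

Hashing the experiment name together with the visitor ID means a later experiment with a different key reshuffles visitors instead of reusing the same buckets.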

Metrics to measure:

- Engagement rate: Percentage of visitors who start a conversation after seeing (or not seeing) the disclosure

- Conversation depth: Messages per session, which indicates whether shoppers stay engaged after the AI identifies itself

- Conversion rate: Add-to-cart and checkout events from AI-assisted sessions, tracked with source-level attribution

- CSAT score: Post-conversation satisfaction ratings

- Escalation rate: Percentage of conversations where shoppers request a human agent
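The five metrics above can be computed from a simple per-visitor log. This is a sketch under stated assumptions: the `Session` record and its fields are hypothetical, conversion rate is taken as the share of conversations that ended in a purchase event, and CSAT is a 1 to 5 rating averaged over shoppers who answered the survey.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Sequence


@dataclass
class Session:
    """One visitor exposed to a variant (hypothetical log record)."""
    variant: str
    started_chat: bool
    messages: int = 0
    converted: bool = False
    csat: Optional[int] = None  # 1-5 rating, None if no survey response
    escalated: bool = False


def summarize(sessions: Sequence[Session]) -> Dict[str, Optional[float]]:
    """Compute the five metrics for one variant's sessions."""
    chats = [s for s in sessions if s.started_chat]
    ratings = [s.csat for s in chats if s.csat is not None]
    n_visitors, n_chats = len(sessions), len(chats)
    return {
        "engagement_rate": n_chats / n_visitors if n_visitors else 0.0,
        "conversation_depth": sum(s.messages for s in chats) / n_chats if n_chats else 0.0,
        "conversion_rate": sum(s.converted for s in chats) / n_chats if n_chats else 0.0,
        "csat": sum(ratings) / len(ratings) if ratings else None,
        "escalation_rate": sum(s.escalated for s in chats) / n_chats if n_chats else 0.0,
    }


def summarize_by_variant(sessions: Sequence[Session]) -> Dict[str, Dict[str, Optional[float]]]:
    """Group sessions by variant, then summarize each group."""
    groups: Dict[str, List[Session]] = {}
    for s in sessions:
        groups.setdefault(s.variant, []).append(s)
    return {variant: summarize(group) for variant, group in groups.items()}
```

Engagement rate uses all exposed visitors as the denominator, while the other four metrics use only visitors who started a conversation, so a variant that scares shoppers away cannot hide behind a strong conversion rate among the few who stayed.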

The winning approach is the one that satisfies regulatory requirements while delivering the best combination of engagement and conversion. Most marketers find that Approach 2 (Confident Introduction) or Approach 3 (Hybrid Disclosure) outperforms both no disclosure and aggressive notification because each frames AI as a feature rather than a limitation.
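Before declaring a winner, it helps to check that a difference in conversion rate is larger than random noise. The framework here does not name a statistical test, so the two-proportion z-test below is one common choice brought in as an assumption. The function name and the example counts are illustrative.

```python
import math
from typing import Tuple


def two_proportion_z_test(
    conv_control: int, n_control: int, conv_variant: int, n_variant: int
) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for variant vs. control conversion rates."""
    p_control = conv_control / n_control
    p_variant = conv_variant / n_variant
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_control + conv_variant) / (n_control + n_variant)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_variant))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_variant - p_control) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Example with made-up counts: 40 vs. 70 conversions out of 1,000 conversations each.
z, p = two_proportion_z_test(40, 1000, 70, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A positive `z` means the variant converted better than the control. With four variants tested against one control, a stricter threshold than the usual 0.05 (for example, dividing it by four) reduces the chance of crowning a winner by luck.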

Alhena AI's built-in CSAT collection and revenue attribution analytics let you run these tests and measure conversion impact with source-level attribution, so transparency decisions are driven by data, not assumption or uncertainty.

Why Alhena AI Makes Transparency a Competitive Advantage

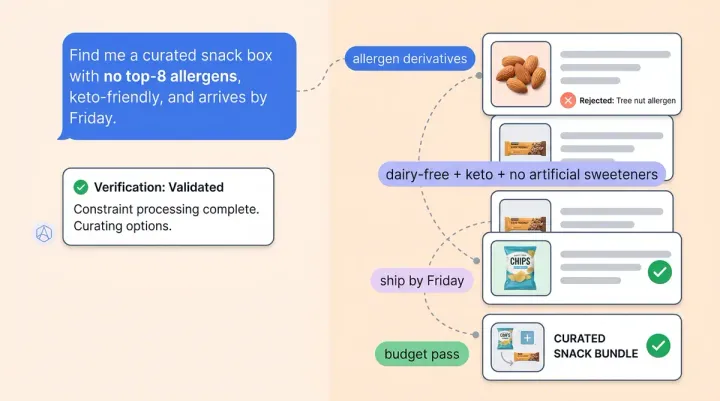

Disclosure becomes a risk when the AI behind it is unreliable. If your chatbot hallucinates product specs, invents return policies, or gives generic responses, telling shoppers "this is AI" invites scrutiny your AI can't survive. That's why the choice of technology platform matters more than the choice of notification wording.

Alhena AI is built to make honesty a strength. Its hallucination-free generative AI architecture, an innovation in ecommerce technology, means every response the AI gives is traceable to approved knowledge sources: your product catalog, your policies, and your brand guidelines. Disclosure doesn't carry the risk of exposing shoppers to fabricated or inaccurate AI-generated content, because there is no fabricated information to expose.

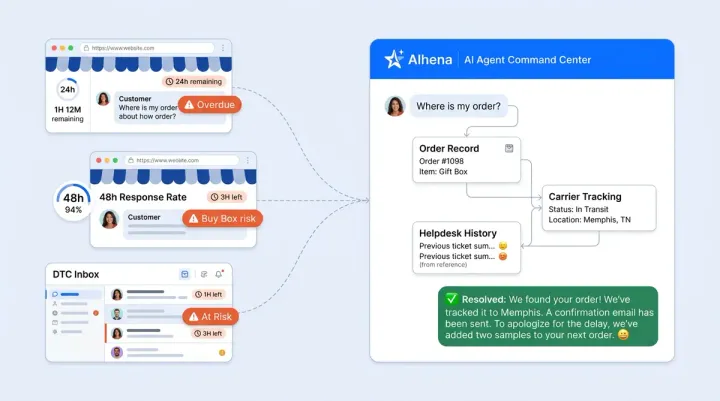

Alhena's Guideline Studio lets you test different disclosure messages against sample conversations before going live, so you can see exactly how each approach reads within the context of real shopper questions. The Agent Assist module ensures that when shoppers do escalate, human agents maintain oversight with full conversation context, making the handoff feel like a continuation rather than a restart.

The results speak for themselves. Tatcha achieved 3x conversion rates and 11.4% of total site revenue from AI with transparent, product-grounded responses. Manawa cut response times from 40 minutes to 1 minute with 80% inquiry automation. Crocus reached an 86% deflection rate with 84% CSAT. (See all customer stories.)

These results hold up under full disclosure because the AI earns the trust that transparency demands. When you tell a shopper "I'm an AI assistant trained on 5,000 products in our catalog," and then the AI actually demonstrates that knowledge, notification becomes a promise fulfilled, not a warning issued.

The Honesty Advantage: Act Now or Catch Up Later

The transparency debate is already settled by regulation. Disclosure is coming for every brand whether they choose it or not. The brands that frame it as a trust signal and ethical standard today will hold a competitive advantage over those forced into compliance later, because their shoppers will already associate AI notification with accurate, helpful, expert-level service rather than the robotic FAQ bots that gave chatbots a bad reputation.

Your shoppers don't fear AI. They fear bad AI. Give them great AI, tell them exactly what it is, and watch disclosure become the reason they trust your brand more, not less.

Ready to turn AI honesty into a conversion advantage? Book a demo with Alhena AI to see hallucination-free, product-grounded responses in action. Or start free with 25 conversations and test your notification approach with real shoppers.

Frequently Asked Questions

How does hallucination-free AI change the disclosure decision for ecommerce brands?

When your AI is grounded in verified product data and never fabricates information, disclosure becomes a confidence signal rather than a risk. Alhena AI's hallucination-free architecture traces every response to approved knowledge sources, so telling shoppers "this is AI" invites scrutiny your AI can actually survive. Brands using Alhena have achieved 3x conversion rates with full transparency because accurate answers earn the trust that openness demands.

Can I test different AI disclosure messages before going live on my ecommerce store?

Yes. Alhena AI's Guideline Studio lets you configure and preview different disclosure messages against sample shopper conversations before deploying them. You can test how each approach reads in context, from subtle interface labels to confident introductions, and then use Alhena's built-in revenue attribution analytics to A/B test the variants with real shoppers, review the winner, and measure conversion impact with source-level data.

What AI disclosure approach works best for EU AI Act compliance in ecommerce?

The EU AI Act requires proactive notification, meaning shoppers must be informed they are interacting with AI before the conversation begins. Approach 2 (Confident Introduction) and Approach 3 (Hybrid Disclosure) both satisfy this requirement while framing AI as a capability. Alhena AI supports brand voice configuration across all disclosure approaches, so your compliance messaging sounds like your brand, not a legal disclaimer.

How do I measure whether AI disclosure hurts or helps conversion on my ecommerce store?

Run each disclosure approach against a no-disclosure control for four weeks with at least 1,000 conversations per variant. Track engagement rate, conversation depth, conversion rate, CSAT score, and escalation rate. Alhena AI's built-in analytics provide source-level attribution for AI-assisted conversions, letting you isolate the impact of disclosure wording on revenue without relying on third-party tracking tools.

Does AI disclosure affect brand voice consistency across chat, email, and social channels?

It can if your disclosure is bolted on as an afterthought. Alhena AI's brand voice configuration applies consistently across ecommerce web chat, email, Instagram DMs, WhatsApp, and voice channels, so your notification carries the same tone whether a shopper reaches you on your website or through social commerce platforms. This omnichannel consistency prevents the jarring experience of encountering different AI identities on different touchpoints.