The Maintenance Trap: Why Most Ecommerce AI Chatbots Get Worse Over Time

Most ecommerce AI chatbots share a dirty secret: they start degrading the moment you deploy them. Every new product added to your catalog, every policy update, every seasonal promotion creates a gap between what the AI knows and what shoppers actually need. The customer journey breaks down when the AI can’t keep pace with your store. The only way to close that gap is manual retraining, and that retraining cycle gets heavier as your catalog grows. Ecommerce optimization suffers when the people who should be improving the shopping experience are stuck fixing the chatbot instead.

A brand with 500 SKUs might retrain monthly and keep up. A brand with 5,000 SKUs across multiple categories, each with seasonal rotations, bundling rules, and evolving return policies, faces a different reality. Someone has to review every failed conversation, write new training data, update intent mappings, and push changes through QA. Most ecommerce chatbots put this burden on your team, and shoppers hit dead ends in the customer journey while the backlog grows. That's not a side task. That's a full-time role, sometimes two. In a fast-growing ecommerce operation, that person could be driving merchandising strategy instead.

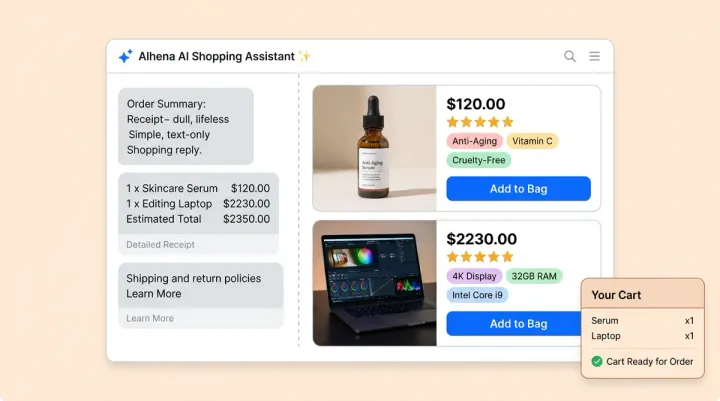

This is the maintenance trap. The more successful your ecommerce business becomes, the harder your AI is to keep accurate. Product discovery, AI search, and personalized recommendations all suffer when the underlying intelligence falls behind. Alhena AI was built to break this cycle. Unlike traditional ecommerce chatbots that need manual updates, Alhena improves customer service in real time. The AI processes natural language queries, recognizes buyer intent, and routes shoppers to the right product discovery path automatically. Instead of requiring manual retraining every time something changes, Alhena's continuous learning architecture self-improves from every customer interaction, every piece of team feedback, and every flagged response. Here's how it works, and why it changes the ecommerce optimization equation entirely.

Five Mechanisms That Power Alhena AI's Self-Improving Loop

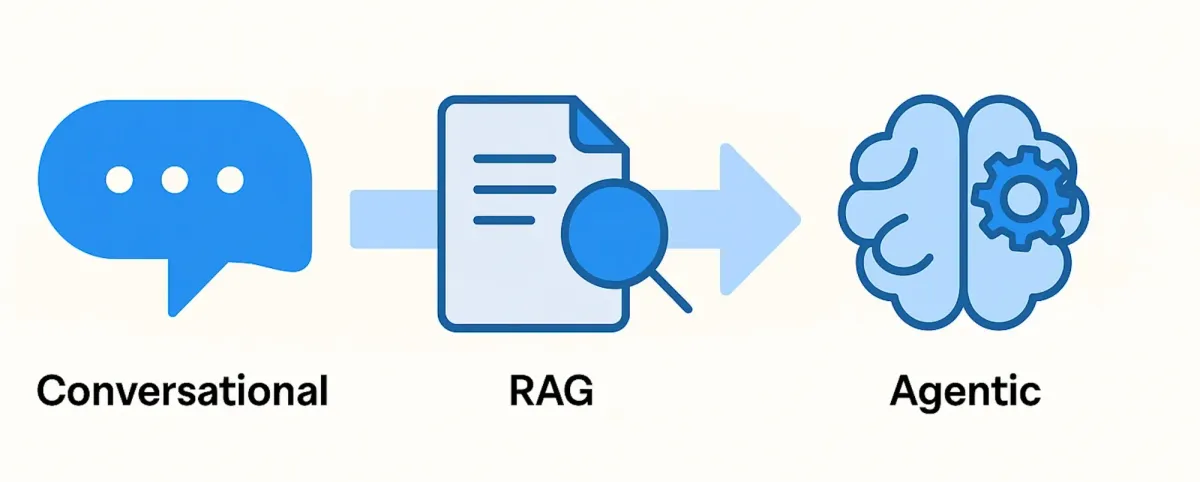

Self-improving AI in ecommerce isn't one feature. It's a system of interconnected mechanisms that feed into each other, designed for continuous optimization. Alhena AI uses five core mechanisms, each a set of AI tools that work together, to create a continuous learning loop that gets smarter with every conversation. Each mechanism improves a different part of the shopping journey, from natural language AI search and product discovery through checkout and post-purchase support.

1. Auto-Generated FAQs from Real Conversations

When customers ask questions your knowledge base doesn't fully cover, most AI platforms leave a gap until someone manually writes a new FAQ. Alhena AI does something different. It captures real shopper conversations and automatically generates FAQ pairs that reflect actual buyer intent, not generic copy. Because these FAQs match how real shoppers phrase their questions, they improve product discovery, search accuracy, and recommendations. For any ecommerce brand, that means better answers in every conversation without manual effort.

These auto-generated FAQs appear in the AI settings dashboard alongside manually added entries, where your team can review, edit, or approve them. But here's the part that prevents knowledge base chaos: Alhena's automatic conflict detection flags any new FAQ that contradicts existing knowledge. When the system detects a conflict, it shows you the conflicting FAQs side by side, explains why it thinks they conflict, and lets you resolve the contradiction before it reaches customers. You keep the ones you want, update the ones that need fixing, and delete the ones that are outdated.

The result: your AI support system builds its own knowledge base from real interactions, and conflict detection keeps that knowledge base clean as it grows.
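To make the idea of conflict detection concrete, here is a minimal sketch in Python. It is not Alhena's implementation: the `Faq` class, the word-overlap similarity, and the 0.6 threshold are all assumptions chosen for illustration, and a production system would more likely compare meaning than raw words. The sketch flags an existing FAQ when a new FAQ asks nearly the same question but gives a different answer.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Faq:
    question: str
    answer: str
    source: str  # hypothetical labels: "manual" or "auto"


def words(text: str) -> frozenset:
    """Lowercase the text and return its set of alphanumeric words."""
    return frozenset(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: frozenset, b: frozenset) -> float:
    """Word-overlap similarity between 0.0 and 1.0."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def find_conflicts(existing, candidate, threshold=0.6):
    """Return existing FAQs with a near-identical question but a different answer."""
    cand_q = words(candidate.question)
    cand_a = words(candidate.answer)
    return [
        faq for faq in existing
        if jaccard(words(faq.question), cand_q) >= threshold
        and words(faq.answer) != cand_a
    ]


knowledge_base = [
    Faq("What is your return window?", "Returns are accepted within 30 days.", "manual"),
    Faq("Do you ship internationally?", "Yes, to most countries.", "manual"),
]
new_faq = Faq("What is the return window?", "Returns are accepted within 14 days.", "auto")

# Each conflict would be shown side by side with the candidate for a human to resolve.
conflicts = find_conflicts(knowledge_base, new_faq)
```

The design choice worth noting is that the check only surfaces candidates. Deciding which FAQ to keep, update, or delete stays with the reviewer.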

2. Human Feedback Loops That Correct at the Response Level

Sometimes the AI gets an answer wrong. Maybe it cited an outdated return window, recommended a discontinued product, or missed a nuance in your shipping policy. With most AI platforms, fixing this means rewriting guidelines, updating training data, and hoping the change propagates correctly.

Alhena AI takes a more precise approach. Team members can provide feedback directly from the conversations page by clicking "Provide Answer Feedback" on any response. They can submit hints, corrections, or an entirely rewritten answer. The system gives high priority to human feedback, and the learning happens in near real time. This means personalized recommendations and product-specific answers improve with every correction, not just at the next retraining cycle.

Even better, Alhena automatically generates a new FAQ from every piece of feedback, so the correction applies not just to that one question but to every similar question going forward. This creates a precise correction mechanism that improves accuracy at the individual response level without requiring broad guideline changes.
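One way to picture "high priority to human feedback" is a knowledge store in which a correction becomes a new entry that outranks older ones. The Python sketch below is a toy under stated assumptions: the `KnowledgeBase` class, the `PRIORITY` ranks, and exact-match topic lookup are hypothetical and are not Alhena's API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical ranks: a higher number wins when several entries cover a topic.
PRIORITY = {"human_feedback": 3, "manual": 2, "auto": 1}


@dataclass
class Entry:
    topic: str
    answer: str
    origin: str  # one of the PRIORITY keys


class KnowledgeBase:
    def __init__(self) -> None:
        self.entries = []

    def add(self, entry: Entry) -> None:
        self.entries.append(entry)

    def record_feedback(self, topic: str, corrected_answer: str) -> Entry:
        """Store a reviewer's correction as a new top-priority entry."""
        entry = Entry(topic, corrected_answer, "human_feedback")
        self.add(entry)
        return entry

    def lookup(self, topic: str) -> Optional[str]:
        """Return the highest-priority answer for a topic; the newest entry wins ties."""
        ranked = [
            (PRIORITY[e.origin], position, e)
            for position, e in enumerate(self.entries)
            if e.topic == topic
        ]
        if not ranked:
            return None
        return max(ranked, key=lambda r: (r[0], r[1]))[2].answer


kb = KnowledgeBase()
kb.add(Entry("return window", "Returns are accepted within 30 days.", "auto"))
before = kb.lookup("return window")

# A team member corrects the answer; the fix applies to the next lookup.
kb.record_feedback("return window", "Returns are accepted within 14 days.")
after = kb.lookup("return window")
```

Because the correction is stored as an entry and not patched into a single reply, every later question on the same topic picks it up.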

3. Guideline Studio: Test Before You Ship

Changing AI behavior rules in production is risky. A guideline that fixes one problem can create three new ones. Alhena AI's Guideline Studio solves this by letting admins write or modify AI behavior guidelines and simulate how those changes will affect responses before publishing.

Click "Test Changes" and the system runs your updated guidelines against a set of sample questions, showing side-by-side comparisons of old responses versus new ones. You see exactly how the AI will behave differently before a single customer encounters the change. If the results look good, click "Publish Settings" to push live. If something unexpected shows up, adjust and retest.

The Guideline Studio also includes a Guideline Assistant that checks every proposed guideline against all existing guidelines. If it detects a conflict or contradiction, it flags the issue and helps resolve it before deployment. This prevents the cascading inconsistencies that plague AI systems where rules are edited directly in production, keeping customer service workflows seamless even as your team makes frequent updates.
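The test-before-publish workflow can be sketched as a small comparison harness. Everything here is an assumption for illustration: `toy_responder` stands in for a real model call, the guideline string is made up, and `compare_guidelines` is a hypothetical helper. The point is the shape of the check: run the same sample questions under the old and new guidelines and report what changed.

```python
from typing import Callable, Dict, List

Responder = Callable[[str, List[str]], str]


def toy_responder(question: str, guidelines: List[str]) -> str:
    """Stand-in for a real model call; applies one keyword rule if present."""
    if "return" not in question.lower():
        return "Let me check that for you."
    answer = "Returns are accepted within 30 days."
    if "mention free return shipping" in guidelines:
        answer += " Return shipping is free."
    return answer


def compare_guidelines(
    questions: List[str],
    old: List[str],
    new: List[str],
    respond: Responder = toy_responder,
) -> List[Dict[str, object]]:
    """Answer each sample question under both guideline sets, side by side."""
    rows = []
    for question in questions:
        before = respond(question, old)
        after = respond(question, new)
        rows.append(
            {"question": question, "before": before, "after": after, "changed": before != after}
        )
    return rows


sample_questions = ["Can I return these shoes?", "Where is my order?"]
report = compare_guidelines(
    sample_questions,
    old=[],
    new=["mention free return shipping"],
)
changed_questions = [row["question"] for row in report if row["changed"]]
```

A reviewer would read the `changed` rows before publishing; unchanged rows confirm the new guideline did not leak into unrelated answers.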

4. Conversation Debugger: Full Execution Traces in Seconds

When an AI gives a bad answer, the hardest part isn't fixing it. It's figuring out why it happened. Was it a knowledge base gap? A conflicting guideline? A routing error that sent the query to the wrong agent?

Alhena AI's Conversation Debugger pulls full execution traces for any conversation. Enter a ticket ID and the system shows you which AI agents handled the query, which knowledge sources were referenced (with titles, URLs, and content previews), which guidelines were active at the time, and what tool calls were made. It then explains the reasoning in plain language.

Before this tool existed, debugging a bad AI response took 15 to 20 minutes of digging through logs, checking indexed documents, and guessing which guideline caused the issue. Now it takes seconds. For example, the debugger might reveal that an outdated return policy document from three months ago is still being referenced, giving your team a clear, immediate fix instead of a vague complaint to investigate.
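As a rough illustration of what an execution trace might contain and how the stale-document case could be spotted, here is a Python sketch. The `Trace` and `SourceRef` structures, the example URLs, and the 60-day age limit are all hypothetical; the actual trace format is not described in this article.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List


@dataclass
class SourceRef:
    title: str
    url: str
    last_updated: date


@dataclass
class Trace:
    ticket_id: str
    agents: List[str]
    sources: List[SourceRef]
    guidelines: List[str]
    tool_calls: List[str] = field(default_factory=list)


def stale_sources(trace: Trace, today: date, max_age_days: int = 60) -> List[SourceRef]:
    """Return referenced sources last updated more than max_age_days ago."""
    cutoff = today - timedelta(days=max_age_days)
    return [source for source in trace.sources if source.last_updated < cutoff]


trace = Trace(
    ticket_id="T-1001",  # made-up ticket ID
    agents=["support"],
    sources=[
        SourceRef("Return policy", "https://example.com/returns", date(2024, 1, 5)),
        SourceRef("Shipping FAQ", "https://example.com/shipping", date(2024, 4, 1)),
    ],
    guidelines=["Always cite the current return window"],
    tool_calls=["order_lookup"],
)

# Sources older than the cutoff are the first place to look for an outdated answer.
stale = stale_sources(trace, today=date(2024, 4, 10))
```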

5. Smart Flagging and Quality Assurance

You can't review every conversation. You don't need to. Alhena AI's watchdog systems automatically flag responses that need human attention: low-confidence answers, knowledge gaps where the AI couldn't find a verified source, and any attempt to generate information outside your approved knowledge base.
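The three flag conditions above can be expressed as simple rules. This is a sketch under assumptions, not Alhena's watchdog logic: the `Response` fields, the 0.5 confidence threshold, and the reason labels are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Set

LOW_CONFIDENCE = 0.5  # hypothetical threshold


@dataclass
class Response:
    text: str
    confidence: float       # hypothetical score in [0, 1]
    source_ids: List[str]   # knowledge entries the answer was traced to


def flag_reasons(response: Response, approved_ids: Set[str]) -> List[str]:
    """Return the reasons a response needs human review; empty means no flag."""
    reasons = []
    if response.confidence < LOW_CONFIDENCE:
        reasons.append("low_confidence")
    if not response.source_ids:
        # No verified source was found for the answer.
        reasons.append("knowledge_gap")
    elif any(sid not in approved_ids for sid in response.source_ids):
        # The answer leans on something outside the approved knowledge base.
        reasons.append("outside_knowledge")
    return reasons


approved = {"faq-12", "policy-returns"}

grounded = Response("Returns are accepted within 30 days.", 0.92, ["policy-returns"])
unsure = Response("It might ship next week.", 0.31, [])
off_book = Response("This jacket is waterproof to 50 m.", 0.80, ["blog-unknown"])

grounded_flags = flag_reasons(grounded, approved)
unsure_flags = flag_reasons(unsure, approved)
off_book_flags = flag_reasons(off_book, approved)
```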

Less than 1% of conversations get flagged, but those flags catch the responses that matter most. Every escalation and every negative CSAT score triggers a review. On top of that, weekly sampling of 2 to 5% of conversations against brand and accuracy standards creates a continuous quality baseline that tightens over time.
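The review policy described here combines mandatory reviews with a random sample. A minimal Python sketch follows; the dictionary fields, the 3% default rate, and the rule that a CSAT of 2 or lower on a 1 to 5 scale counts as negative are assumptions made for the example.

```python
import random
from typing import Dict, List, Optional


def needs_review(conversation: Dict) -> bool:
    """Escalations and negative CSAT scores always go to review."""
    csat = conversation["csat"]
    return conversation["escalated"] or (csat is not None and csat <= 2)


def build_review_queue(
    conversations: List[Dict],
    sample_rate: float = 0.03,
    seed: Optional[int] = None,
) -> List[Dict]:
    """Mandatory reviews first, then a random sample of the remaining conversations."""
    rng = random.Random(seed)
    mandatory = [c for c in conversations if needs_review(c)]
    remaining = [c for c in conversations if not needs_review(c)]
    sample_size = round(len(remaining) * sample_rate)
    return mandatory + rng.sample(remaining, sample_size)


# 1,000 made-up conversations: one escalated, one with a low CSAT score.
week = [{"id": i, "escalated": False, "csat": 5} for i in range(1000)]
week[0]["escalated"] = True
week[1]["csat"] = 1

queue = build_review_queue(week, sample_rate=0.03, seed=7)
```

With these inputs the queue holds the 2 mandatory conversations plus 30 sampled ones (3% of the other 998, rounded), so reviewers see a small, bounded workload each week.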

The outside-knowledge detection is particularly important for ecommerce. If the AI can't trace an answer back to your product data, FAQs, help desk tickets, or approved documents, it defers or escalates instead of guessing. No hallucinated specifications, no invented policies, no made-up availability dates. Shoppers browsing your catalog or searching for recommendations get answers grounded in real product data at every step of the customer journey.

The Compounding Effect: Every Conversation Makes the AI Smarter

These five mechanisms don't work in isolation. They create a flywheel.

A customer asks a question the AI handles imperfectly. Smart Flagging catches the low-confidence response and surfaces it for review. A team member provides feedback through the conversations page, which generates a new FAQ and corrects the answer in near real time. The Conversation Debugger confirms the root cause was a missing knowledge source. An admin adds a new guideline to handle similar cases, tests it in the Guideline Studio against sample questions, and publishes when the side-by-side comparison confirms improvement.

That single interaction just improved the AI in four different ways. Multiply that across thousands of conversations per week, and the effect compounds. Accuracy and relevance grow with usage instead of degrading. Knowledge gaps close themselves. Response quality tightens automatically. The result is more revenue from every shopper who interacts with your AI, whether they’re browsing products or asking about an existing order.

This is the fundamental difference between a continuously learning AI chatbot and a static system that requires scheduled retraining. One gets better from use. The other gets worse without constant intervention.

Low-Maintenance by Design, Not by Compromise

AI platforms that require constant manual scripting, periodic full retraining cycles, or dedicated AI trainers to maintain quality are transferring their engineering debt to your team. Every hour your team spends retraining the AI is an hour not spent on strategy, merchandising, inventory forecasting, or customer experience, and that is lost ecommerce revenue. For online retailers running lean teams, that tradeoff is unsustainable. Customer service, merchandising, inventory planning, and chatbot management shouldn't compete for the same headcount. Automated workflows for forecasting demand, managing inventory, and updating merchandising priorities should run alongside automated AI improvement.

Alhena AI's architecture is built for incremental training. When you update content, whether that's a new product page, a revised return policy, or a fresh help center article, the AI adapts without full retraining. You don't need to rebuild the model or re-index everything. The new content flows into the retrieval system and becomes available to agents immediately.
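A common way to avoid re-indexing everything is to fingerprint each page and touch only what changed. The sketch below illustrates that general technique in Python; the `IncrementalIndex` class is hypothetical, it is not a description of Alhena's retrieval system, and it deliberately ignores deleted pages to stay short.

```python
import hashlib
from typing import Dict, List


class IncrementalIndex:
    """Toy index that re-processes a page only when its content hash changes."""

    def __init__(self) -> None:
        self._hashes: Dict[str, str] = {}
        self.docs: Dict[str, str] = {}

    def sync(self, pages: Dict[str, str]) -> List[str]:
        """Index new or changed pages and return the URLs that were updated."""
        updated = []
        for url, content in pages.items():
            digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
            if self._hashes.get(url) != digest:
                self._hashes[url] = digest
                self.docs[url] = content
                updated.append(url)
        return updated


index = IncrementalIndex()
first_pass = index.sync({
    "/returns": "Returns are accepted within 30 days.",
    "/shipping": "Orders ship in 2 business days.",
})

# Only the revised return policy is re-indexed on the second pass.
second_pass = index.sync({
    "/returns": "Returns are accepted within 14 days.",
    "/shipping": "Orders ship in 2 business days.",
})
```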

For paid customers, automatic weekly training cycles keep the entire knowledge base current without manual intervention. You can also choose monthly cycles if that fits your update cadence. Either way, the AI stays aligned with your catalog and policies without anyone scheduling a retraining session.

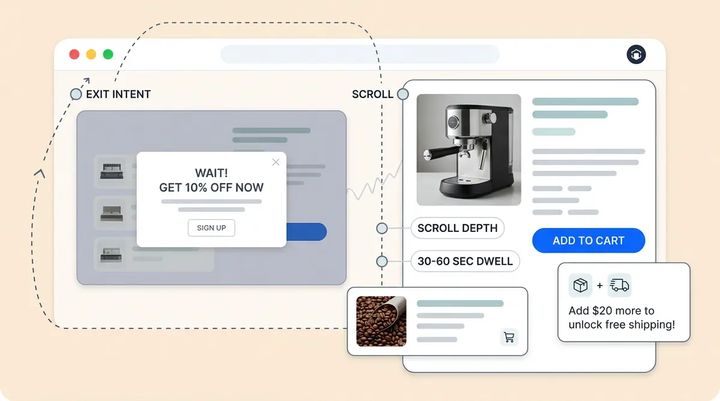

This matters most during high-velocity periods: holiday promotions, new collection launches, flash sales, and email-driven purchase campaigns. Shoppers who interact with your AI during these periods expect seamless, accurate answers whether they're browsing new arrivals, using search to find products, or completing a purchase via your online store, email campaigns, or social channels. Static models can't deliver that consistency because they lack the self-learning capability to adapt in real time across search, email, and shopping flows. When comparing AI platforms, this is the difference that determines whether your conversion rates go up or down. While other AI platforms fall behind because the retraining queue is backed up, Alhena AI is already serving accurate answers about the new products your team uploaded that morning.

Real Results from Self-Improving AI in Ecommerce

The compounding effect of continuous learning shows up in the numbers. Brands running on Alhena AI's self-improving architecture consistently outperform static AI deployments.

Crocus achieved 86% ticket deflection across their ecommerce operation with 84% CSAT, a combination that's nearly impossible with static AI because high deflection usually comes at the cost of customer satisfaction. When the AI gets smarter from every interaction, it deflects more tickets and keeps quality high simultaneously.

Puffy reached 90% CSAT with 63% automated inquiry resolution. Tatcha saw a 3x conversion rate and 38% average order value uplift, with 11.4% of total site revenue coming from AI-assisted conversations. Manawa cut response times from 40 minutes to 1 minute while automating 80% of inquiries, reducing workload by 43%.

These results don't come from a one-time deployment. They come from an ecommerce AI that continuously learns to improve personalized product recommendations and real-time experiences, driving more revenue from every channel and touchpoint. The gains compound because the AI gets measurably better every week without proportional maintenance effort. The brands aren't dedicating full-time resources to AI training. They're letting the continuous learning loop do the work.

The Only Ecommerce AI Platform Where Every Interaction Feeds Back

Alhena AI is the only ecommerce AI platform where every customer conversation, every piece of team feedback, every guideline update, and every flagged response feeds back into a continuous improvement loop. The built-in tools (Conversation Debugger, Guideline Studio, Smart Flagging, auto-generated FAQs) give teams full visibility and control over how the AI learns, without requiring technical resources or manual retraining cycles.

Your Product Expert Agent and Support Concierge work from the same continuously improving knowledge base. Order management, product recommendations, return handling, and pre-purchase guidance all benefit from a unified, deep intelligence layer that combines multiple capability sets and powers search engines, shopping experiences, and support across every channel. This gives your team confidence that AI behavior stays consistent regardless of where a shopper enters the conversation.

AI that requires constant manual maintenance is not AI. It's an expensive script. The brands winning with AI commerce are the ones that adopt models where every interaction feeds improvement. They combine multiple AI capability layers into one self-improving store experience that handles promotions, product questions, and post-purchase support. Instead of comparing static chatbot vendors and scheduling monthly retraining sessions, these brands let self-improving AI do the work.

Ready to stop maintaining your AI and start letting it maintain itself? Book a demo with Alhena AI or start for free with 25 conversations.

Frequently Asked Questions

How does Alhena AI eliminate manual retraining for ecommerce chatbots?

Alhena AI uses incremental training so your AI adapts when you update product pages, policies, or help articles without full model retraining. Paid customers get automatic weekly training cycles that keep the knowledge base current. Auto-generated FAQs from real conversations and near-real-time human feedback loops close knowledge gaps continuously, so you never need to schedule manual retraining sessions.

What is the Conversation Debugger in Alhena AI and how does it speed up issue resolution?

The Conversation Debugger pulls full execution traces for any conversation, showing which AI agents handled the query, which knowledge sources were referenced, which guidelines were active, and what tool calls were made. It explains the reasoning in plain language. Debugging a bad AI response went from 15 to 20 minutes of log digging to seconds, giving teams a clear fix instead of a vague complaint to investigate.

How does Alhena AI's Guideline Studio prevent unexpected AI behavior in production?

Guideline Studio lets admins test AI behavior changes in a sandbox before publishing. Click "Test Changes" to run updated guidelines against sample questions and see side-by-side comparisons of old versus new responses. A built-in Guideline Assistant checks every proposed guideline against existing ones for conflicts, flagging contradictions before they reach customers.

Can Alhena AI automatically detect and flag low-quality responses without manual review?

Yes. Alhena AI's Smart Flagging automatically identifies low-confidence responses, knowledge gaps, and any attempt to generate answers outside your approved knowledge base. Less than 1% of conversations get flagged, but every escalation and negative CSAT triggers review. Weekly sampling of 2 to 5% of conversations against brand standards creates a continuous quality baseline that improves over time.

What measurable revenue impact does Alhena AI's self-improving architecture deliver for ecommerce brands?

Brands on Alhena AI's continuous learning architecture see compounding results: Tatcha achieved a 3x conversion rate and 38% AOV uplift with 11.4% of site revenue from AI conversations. Crocus hit 86% ticket deflection with 84% CSAT. Puffy reached 90% CSAT with 63% automated resolution. These results grow over time because the AI gets smarter from every interaction without proportional maintenance effort.