For AI-savvy customer leaders, the next 18 months will feel like upgrading from a manual car to a self-driving one.

Retrieval-Augmented Generation (RAG) bots, today’s default for knowledge-based chat, are great at answering questions, but they stop there. Agentic AI layers planning, tool use, and memory on top, so an AI support solution can resolve complex multi-part tickets and smartly offer upsells before triggering replacements or refunds, all without a human ever touching the case.

Below is a deep-dive that (1) demystifies the tech, (2) shows where RAG tops out, (3) explains how agents are qualitatively better, and (4) raises some questions to think through if you are planning a move from RAG to agentic AI.

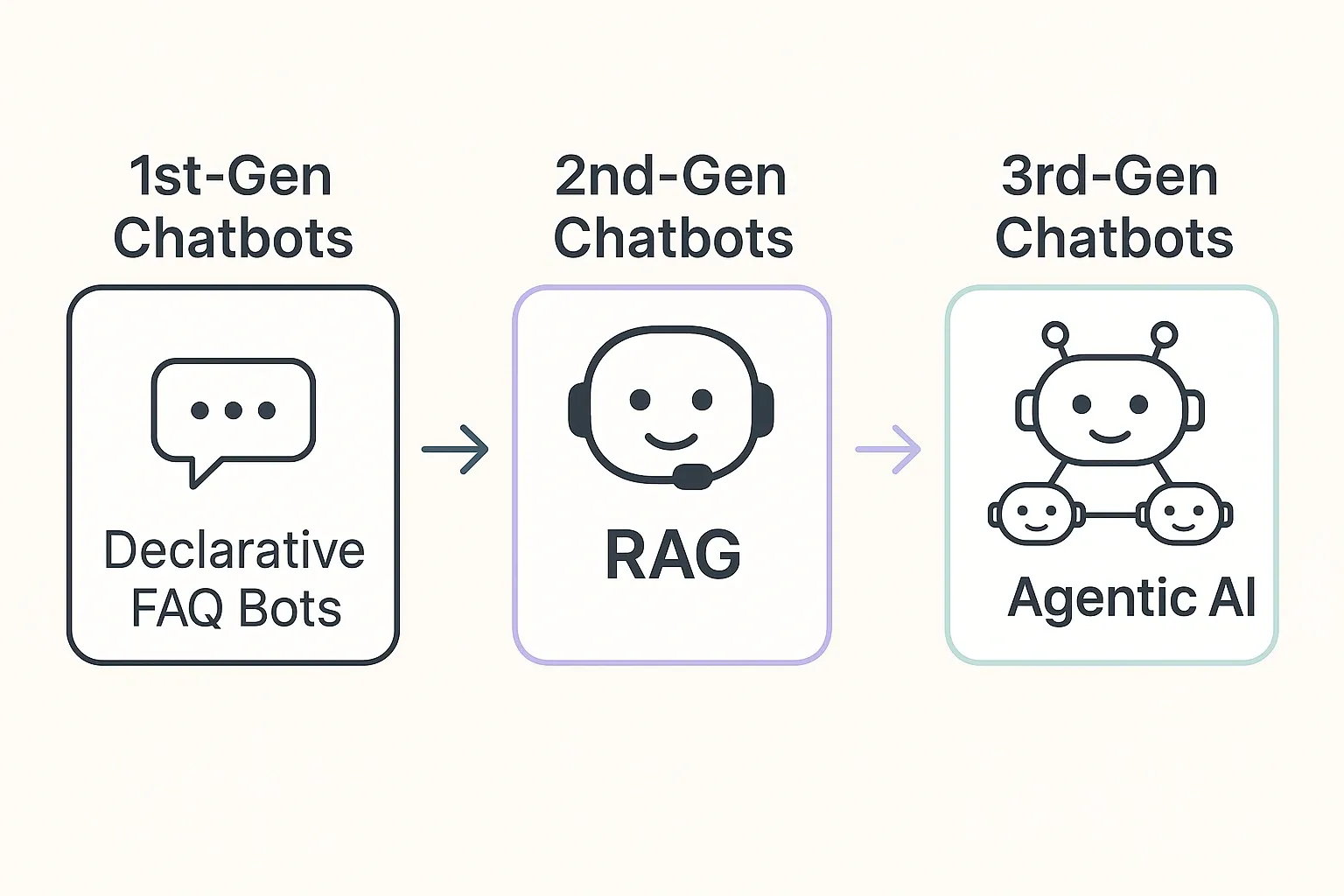

From 1st-Gen Chatbots to 3rd-Gen Agentic AI

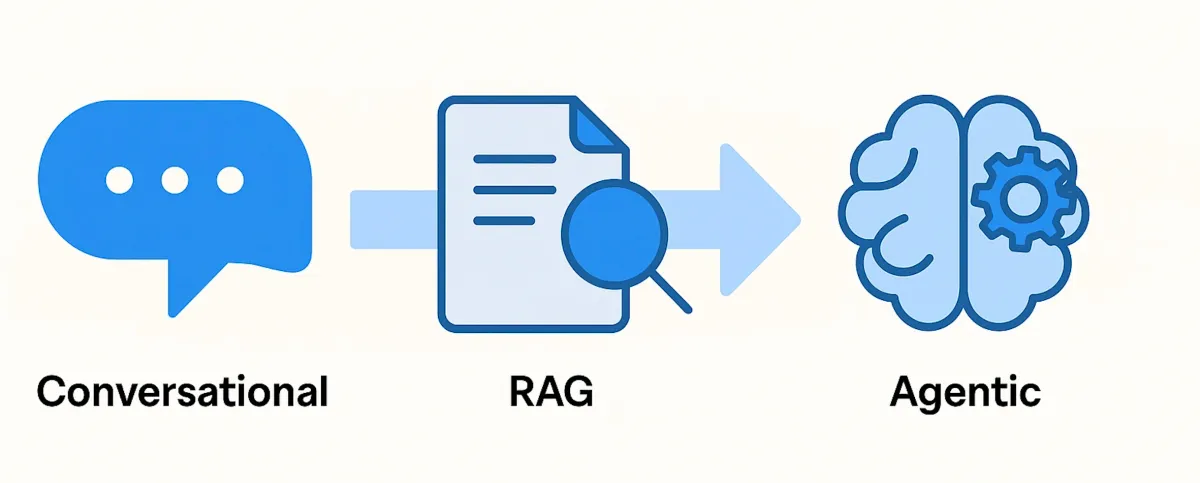

Chatbots that followed rigid flows were “Gen 1 - declarative chatbots.” RAG bots are “Gen 2” - they ground an LLM’s answers in your help-center snippets to cut hallucination. We’re now entering “Gen 3,” where an agent loop (observe → plan → act → reflect) lets the system decide the next best action until the customer’s goal, not just their question, is met.
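
To make the observe → plan → act → reflect loop concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the tool names (`look_up_order`, `issue_replacement`), the hard-coded order status, and the rule-based `plan` function, which stands in for the LLM planner a real agent would use.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AgentState:
    goal: str
    facts: Dict[str, str] = field(default_factory=dict)  # memory of observations
    log: List[str] = field(default_factory=list)         # actions taken so far
    done: bool = False


# Hypothetical tools; real ones would call order and fulfilment systems.
def look_up_order(state: AgentState) -> None:
    state.facts["order_status"] = "delivered_damaged"


def issue_replacement(state: AgentState) -> None:
    state.facts["replacement"] = "created"


TOOLS = {"look_up_order": look_up_order, "issue_replacement": issue_replacement}


def plan(state: AgentState) -> Optional[str]:
    """Rule-based stand-in for an LLM planner: pick the next tool, or None."""
    if "order_status" not in state.facts:
        return "look_up_order"
    if state.facts["order_status"] == "delivered_damaged" and "replacement" not in state.facts:
        return "issue_replacement"
    return None  # nothing left to do: the goal is met


def run_agent(goal: str, max_steps: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):        # hard cap so the loop always terminates
        action = plan(state)          # observe current facts and plan the next action
        if action is None:            # reflect: the goal is met, so stop
            state.done = True
            break
        TOOLS[action](state)          # act
        state.log.append(action)      # keep a trace for later review
    return state


if __name__ == "__main__":
    result = run_agent("Replace my damaged order")
    print(result.log, result.done)
```

The step cap is a deliberate design choice: an agent that decides its own next action needs an explicit bound so a planning mistake cannot loop forever.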

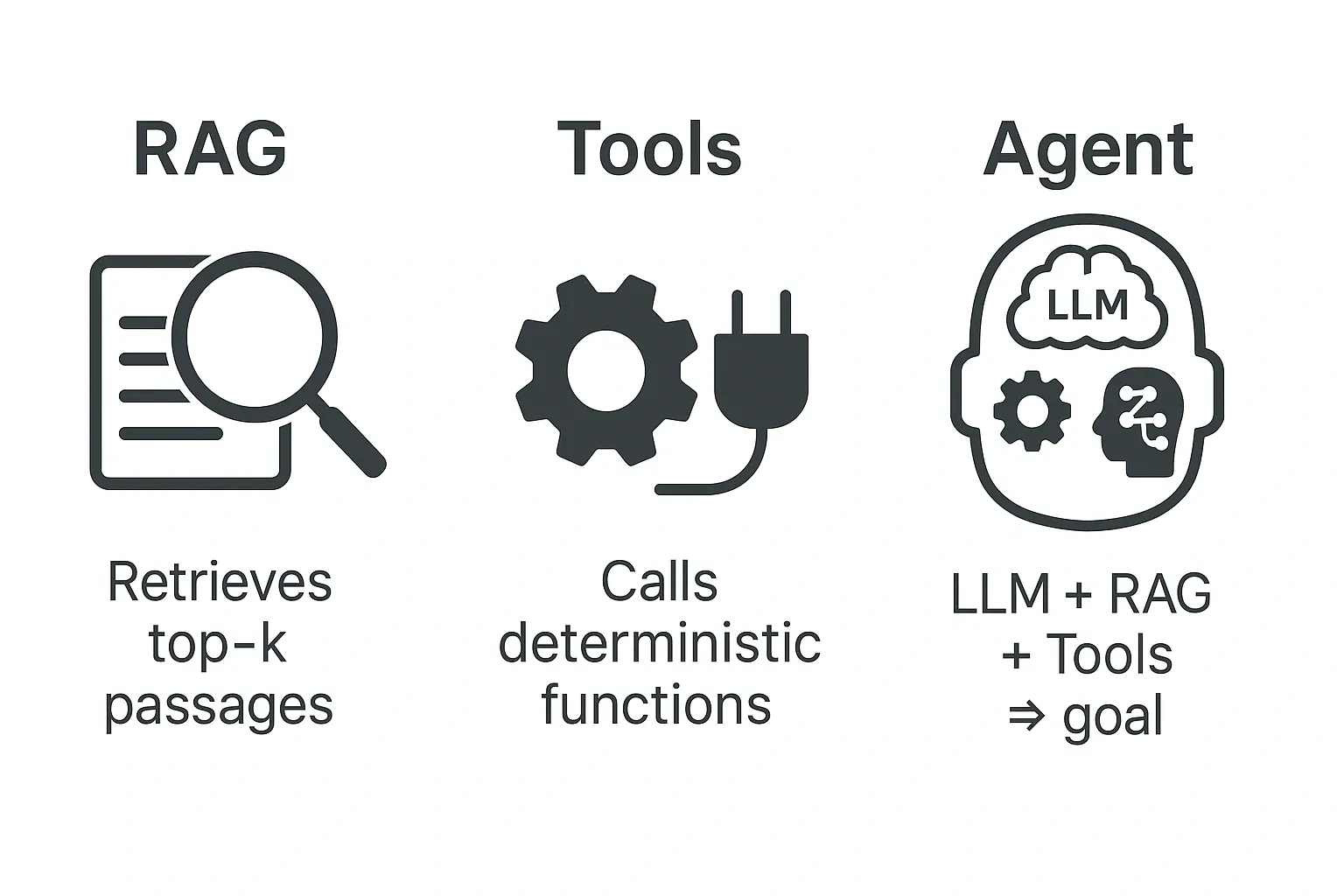

Quick Primer: RAG, Tools, Agents

There are three important concepts to be familiar with:

| Concept | What it is | Why buyers care |
|---|---|---|
| RAG | LLM + vector search that retrieves the top‑k passages before answering (Medium) | Fast to launch; cuts wrong answers vs. raw LLMs. |
| Tool | Any deterministic function the model can call via JSON, e.g., `getOrderStatus(id)` (OpenAI Platform) | Unlocks live data & actions (refunds, ticket updates). |
| Agent | A reasoning loop that can chain RAG + tools + memory until a goal is done (Medium, NVIDIA Docs) | Automates whole workflows, not just Q&A. |
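
As a rough illustration of the "Tool" row, the sketch below shows a deterministic function exposed to a model through a JSON description and invoked from a JSON tool call. The schema layout follows the general JSON-Schema style used for function calling, but the exact field names, the sample orders, and the `dispatch` helper are assumptions for this example, not any vendor's API.

```python
import json


def get_order_status(order_id: str) -> dict:
    """Deterministic lookup; hard-coded data stands in for a real order system."""
    orders = {"A100": "shipped", "A200": "delivered"}
    return {"order_id": order_id, "status": orders.get(order_id, "not_found")}


# Description the model would be shown so it knows the tool exists (assumed layout).
TOOL_SPECS = [
    {
        "name": "getOrderStatus",
        "description": "Look up the current status of an order.",
        "parameters": {
            "type": "object",
            "properties": {"id": {"type": "string"}},
            "required": ["id"],
        },
    }
]

# Maps the tool name the model emits to the Python function that runs it.
REGISTRY = {"getOrderStatus": lambda args: get_order_status(args["id"])}


def dispatch(tool_call_json: str) -> str:
    """Run a tool call emitted by the model as JSON and return a JSON result."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in REGISTRY:
        return json.dumps({"error": f"unknown tool: {name}"})
    return json.dumps(REGISTRY[name](call["arguments"]))


if __name__ == "__main__":
    print(dispatch('{"name": "getOrderStatus", "arguments": {"id": "A100"}}'))
```

Because the tool itself is ordinary deterministic code, the model only decides which tool to call and with what arguments; the live data and the action stay under your control.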

Why Is RAG Popular Now?

Speed to Deployment:

A simple RAG bot is relatively easy to build and deploy. For example, Intercom shipped Fin in eight weeks by scraping its help center and tuning prompts. At Alhena, we built our first RAG bot in 2 days (Dec '22).

Lower hallucination risk:

At Alhena, we have been able to completely stop hallucination. Other vendors, while not as far along, have also made progress. For example, Zendesk reports a double-digit drop in wrong answers when grounding responses in docs.

Stateless scaling:

Most 2025 support bots still run pure RAG because each reply needs only a single LLM call, which keeps latency low and lets the service scale without tracking session state.
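
The single-call shape of RAG can be sketched as follows. This is a toy, under stated assumptions: the help-center snippets are made up, and a bag-of-words cosine similarity stands in for a real embedding model and vector store. The point is only the flow: retrieve the top-k passages, build one prompt, make one LLM call, and keep nothing between requests.

```python
import math
import re
from collections import Counter
from typing import List

# Made-up help-center snippets used only for this example.
DOCS = [
    "Refunds are issued to the original payment method within 5 business days.",
    "Standard shipping takes 3 to 7 business days.",
    "You can reset your password from the account settings page.",
]


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 2) -> List[str]:
    """Return the k passages most similar to the query."""
    q = embed(query)
    return sorted(DOCS, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, k: int = 2) -> str:
    """Assemble the single prompt that would be sent to the LLM."""
    context = "\n".join(f"- {p}" for p in retrieve(query, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("How long do refunds take?"))
```

Nothing in `build_prompt` depends on earlier turns, so any server can handle any request, which is what keeps this pattern easy to scale.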

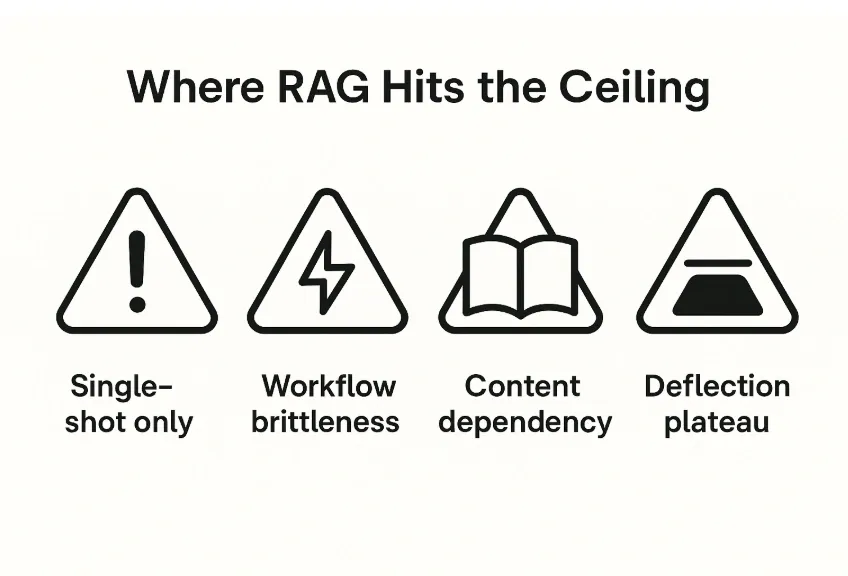

Where RAG Hits the Ceiling

- Single-shot only: In RAG, each query is treated in isolation; no plan spans multiple steps.

- Workflow brittleness: Stitching multiple tools together through prompt engineering quickly becomes unmaintainable.

- Content dependency: If a doc is missing or stale, the bot stalls. Updating the knowledge base weekly is tedious and nobody’s idea of fun.

- Deflection plateau: Answering informational queries can only deflect so much. Industry benchmarks show containment flattening at ~30-40% with answer-only bots. Some high-quality AI solutions that can take actions push this as high as 70%, but that is the ceiling.

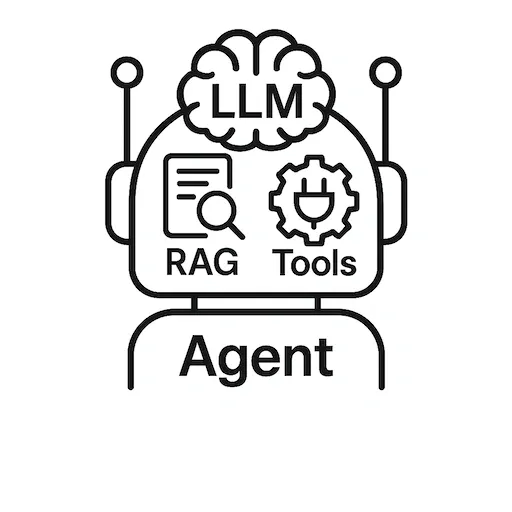

Agentic AI ≠ RAG + Tools

Many solution providers confuse customers by claiming to have agentic AI. Most vendors shouting “Agentic AI” are really shipping classic Retrieval‑Augmented Generation with a few API calls bolted on.

That’s not an agent - it’s still a single‑shot LLM response dressed up with tool access.

A True Agentic AI:

- Plans: decomposes the user’s goal into multi‑step actions.

- Acts: selects and sequences tools autonomously.

- Checks: evaluates intermediate results and self‑corrects.

- Learns: updates its strategy from feedback and new data.

RAG, no matter how many plugins you attach, does none of the above. It retrieves context, drafts an answer, and stops.

In the next section we’ll unpack Agentic AI’s architecture and show exactly how it diverges from RAG (and RAG + Tools) in both design and outcomes.

Enter True Agentic AI - And What It Lets You Do

Resolve multi-step tickets:

Agents can handle far more complex, multi-turn queries that require reasoning. For example, in some telecom pilots, agent loops cut RMA handling time by over 50% because the bot could file return labels, generate RMAs, and send status updates autonomously.

Ask clarifying questions, then act:

A RAG bot tends to simply answer. Agentic AI solutions can ask follow-up questions before answering. For example, in e-commerce shopping, the AI asks questions to understand the shopper's requirements before recommending a product.
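
One simple way to picture "ask, then act" is a check for missing information before any recommendation is made. The sketch below is an assumption-laden toy: the required details (`budget`, `use_case`) and the question wording are invented, and a real agent would let the model decide what is missing rather than use a fixed list.

```python
from typing import Dict

# Hypothetical details the agent wants before recommending anything.
REQUIRED_SLOTS = {
    "budget": "What is your budget?",
    "use_case": "What will you mainly use it for?",
}


def next_step(known: Dict[str, str]) -> Dict[str, str]:
    """Ask for the first missing detail; recommend only once all are known."""
    for slot, question in REQUIRED_SLOTS.items():
        if slot not in known:
            return {"type": "ask", "text": question}
    return {
        "type": "recommend",
        "text": f"Recommending products for {known['use_case']} under {known['budget']}.",
    }


if __name__ == "__main__":
    print(next_step({}))
    print(next_step({"budget": "$100", "use_case": "trail running"}))
```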

Human-like UX tweaks in minutes:

Changing an agent's behavior should be as easy as tweaking a guideline, rather than hunting through the hard-to-find, hard-to-understand settings of traditional software.

Future-proof for agentic commerce:

As we move to agentic commerce or social commerce, changing agents or adding new ones to enable new functionality is a lot easier. RAG can use tools to accomplish a workflow, but those tools are stitched together manually, in the traditional way. This means that RAG is not built to handle new workflows.

With agentic solutions, you can truly solve novel workflows without having thought about them at the outset. These agents will orchestrate and collaborate with each other to surprise and delight you and your users.

Guardrails & Governance (Read This Twice)

| Risk | Counter‑measure |
|---|---|
| Unbounded actions | Whitelist tool scopes & spending limits with frameworks like NVIDIA NeMo Guardrails. |
| Evaluation complexity | Use conversation replay plus ALMITA dataset benchmarks to track full‑thread success (arXiv). |
| Over‑claiming autonomy | Ask vendors for an autonomy score before deployment so you can compare apples to apples (arXiv). |
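
The "unbounded actions" counter-measure can be illustrated with a small policy check that runs before every tool call. This is plain Python written for this article, not the NeMo Guardrails API; the class name, tool names, and the per-ticket limit are all assumptions.

```python
from dataclasses import dataclass
from typing import FrozenSet


class GuardrailViolation(Exception):
    """Raised when an agent tries something outside its policy."""


@dataclass
class ToolPolicy:
    allowed_tools: FrozenSet[str]   # tools this agent may call at all
    max_spend_per_ticket: float     # hypothetical cap on refunds and credits
    spent: float = 0.0              # running total for the current ticket

    def authorize(self, tool: str, amount: float = 0.0) -> None:
        """Check a tool call against the policy; record spend if it passes."""
        if tool not in self.allowed_tools:
            raise GuardrailViolation(f"tool not allowed: {tool}")
        if self.spent + amount > self.max_spend_per_ticket:
            raise GuardrailViolation("spending limit exceeded")
        self.spent += amount


if __name__ == "__main__":
    policy = ToolPolicy(frozenset({"refund", "get_order_status"}), max_spend_per_ticket=50.0)
    policy.authorize("refund", 30.0)      # within the limit
    try:
        policy.authorize("refund", 30.0)  # would bring the total to 60.0
    except GuardrailViolation as err:
        print("blocked:", err)
```

Keeping the check outside the model means a planning error cannot exceed the limit, whatever the prompt says.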

Our Opinion: Where This Is Headed

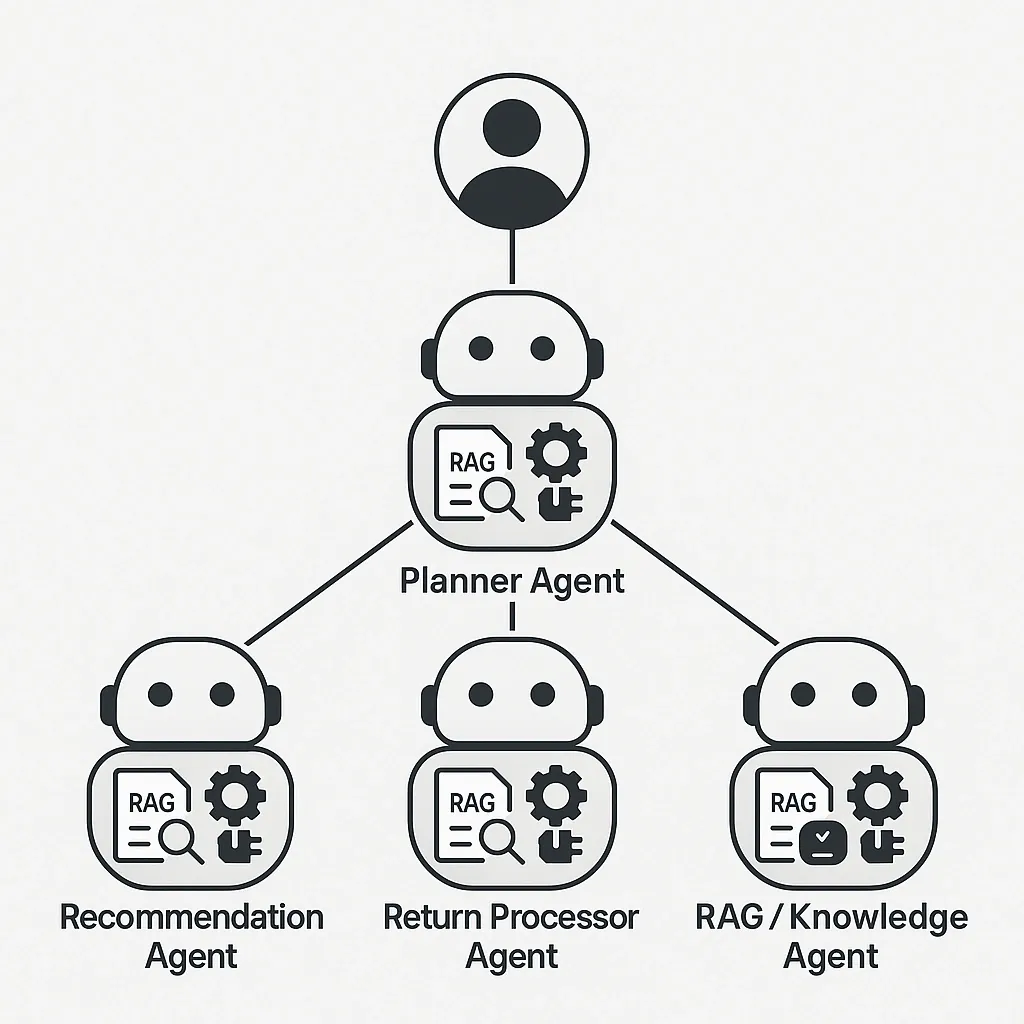

Agentic solutions won’t replace RAG; rather, they’ll encompass it. A well-designed agent calls:

- A RAG tool when it needs trusted knowledge.

- Domain tools to act (refund, reship, escalate).

- Fellow agents for planning and collaborating on workflows.

Customer teams and e-commerce brands that invest in this stack early will own the next decade of customer experience, while pure-Q&A chatbots will look as dated as IVR trees.

Technical Deep-dive into Multi-Agent Architecture

For technical folks, we have also published a detailed architecture of multi-agent AI systems. It shows how a planner-led multi-agent LLM system (Planner, Orchestrator, shared ChatState, and specialist agents) outperforms single-agent chatbots for e-commerce CX, eliminating prompt bloat, tool confusion, and goal clashes to deliver instant support, smart recommendations, and automated order care. You can also read about how we moved beyond RAG to build a plan-execute-verify system that dramatically improved accuracy.
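
For readers who want a feel for how those pieces could fit together, here is a minimal sketch. It borrows only the component names from the paragraph above (Planner, Orchestrator, ChatState, specialist agents); the keyword-based planner, the two specialists, and their canned replies are assumptions for illustration and do not represent Alhena's actual implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ChatState:
    """Shared state that every agent reads from and writes to."""
    message: str
    notes: Dict[str, str] = field(default_factory=dict)
    trace: List[str] = field(default_factory=list)


# Hypothetical specialists; each handles one narrow job with its own tools.
def support_agent(state: ChatState) -> None:
    state.notes["support"] = "Order A100 is in transit."


def recommendation_agent(state: ChatState) -> None:
    state.notes["recommendation"] = "Suggested a matching phone case."


SPECIALISTS: Dict[str, Callable[[ChatState], None]] = {
    "support": support_agent,
    "recommendation": recommendation_agent,
}


def planner(state: ChatState) -> List[str]:
    """Keyword stand-in for an LLM planner: decide which specialists to run."""
    text = state.message.lower()
    steps = []
    if "order" in text:
        steps.append("support")
    if "recommend" in text:
        steps.append("recommendation")
    return steps


def orchestrator(message: str) -> ChatState:
    """Run the planned specialists in order against the shared state."""
    state = ChatState(message)
    for name in planner(state):
        SPECIALISTS[name](state)
        state.trace.append(name)
    return state


if __name__ == "__main__":
    result = orchestrator("Where is my order, and can you recommend a case?")
    print(result.trace, result.notes)
```

Splitting work this way is one route to avoiding prompt bloat: each specialist sees only the instructions and tools for its own job, while the shared state carries context between them.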

Ready to See Agentic AI Live?

Book a 15-minute demo, and we’ll put an Alhena agent on one of your real tickets. Watch as it magically retrieves, plans, and resolves in front of you.

At Alhena, we are leading the industry in transforming customer support using an Agentic AI architecture. If you enjoyed reading this, you will also enjoy reading this detailed and practical primer on Agentic Chunking.

- Learn More: Alhena AI For ECommerce

- Create and Test Your Own AI Agent for Free: Sign Up

- Read Customer Success Stories: Customer Success stories